Hierarchical clustering

Overview

Teaching: 70 min

Exercises: 20 minQuestions

What is hierarchical clustering and how does it differ from other clustering methods?

How do we carry out hierarchical clustering in R?

What distance matrix and linkage methods should we use?

How can we validate identified clusters?

Objectives

Understand when to use hierarchical clustering on high-dimensional data.

Perform hierarchical clustering on high-dimensional data and evaluate dendrograms.

Understand and explore different distance matrix and linkage methods.

Use the Dunn index to validate clustering methods.

Why use hierarchical clustering on high-dimensional data?

When analysing high-dimensional data in the life sciences, it is often useful to identify groups of similar data points to understand more about the relationships within the dataset. General clustering algorithms group similar data points (or observations) into groups (or clusters). This results in a set of clusters, where each cluster is distinct, and the data points within each cluster have similar characteristics. The clustering algorithm works by iteratively grouping data points so that different clusters may exist at different stages of the algorithm’s progression.

Here, we describe hierarchical clustering. Unlike K-means clustering, hierarchical clustering does not require the number of clusters $k$ to be specified by the user before the analysis is carried out.

Hierarchical clustering also provides an attractive dendrogram, a tree-like diagram showing the degree of similarity between clusters. The dendrogram is a key feature of hierarchical clustering. This tree-shaped graph allows the similarity between data points in a dataset to be visualised and the arrangement of clusters produced by the analysis to be illustrated. Dendrograms are created using a distance (or dissimilarity) that quantify how different are pairs of observations, and a clustering algorithm to fuse groups of similar data points together.

In this episode we will explore hierarchical clustering for identifying clusters in high-dimensional data. We will use agglomerative hierarchical clustering (see box) in this episode.

Agglomerative and Divisive hierarchical clustering

There are two main methods of carrying out hierarchical clustering: agglomerative clustering and divisive clustering. The former is a ‘bottom-up’ approach to clustering whereby the clustering approach begins with each data point (or observation) being regarded as being in its own separate cluster. Pairs of data points are merged as we move up the tree. Divisive clustering is a ‘top-down’ approach in which all data points start in a single cluster and an algorithm is used to split groups of data points from this main group.

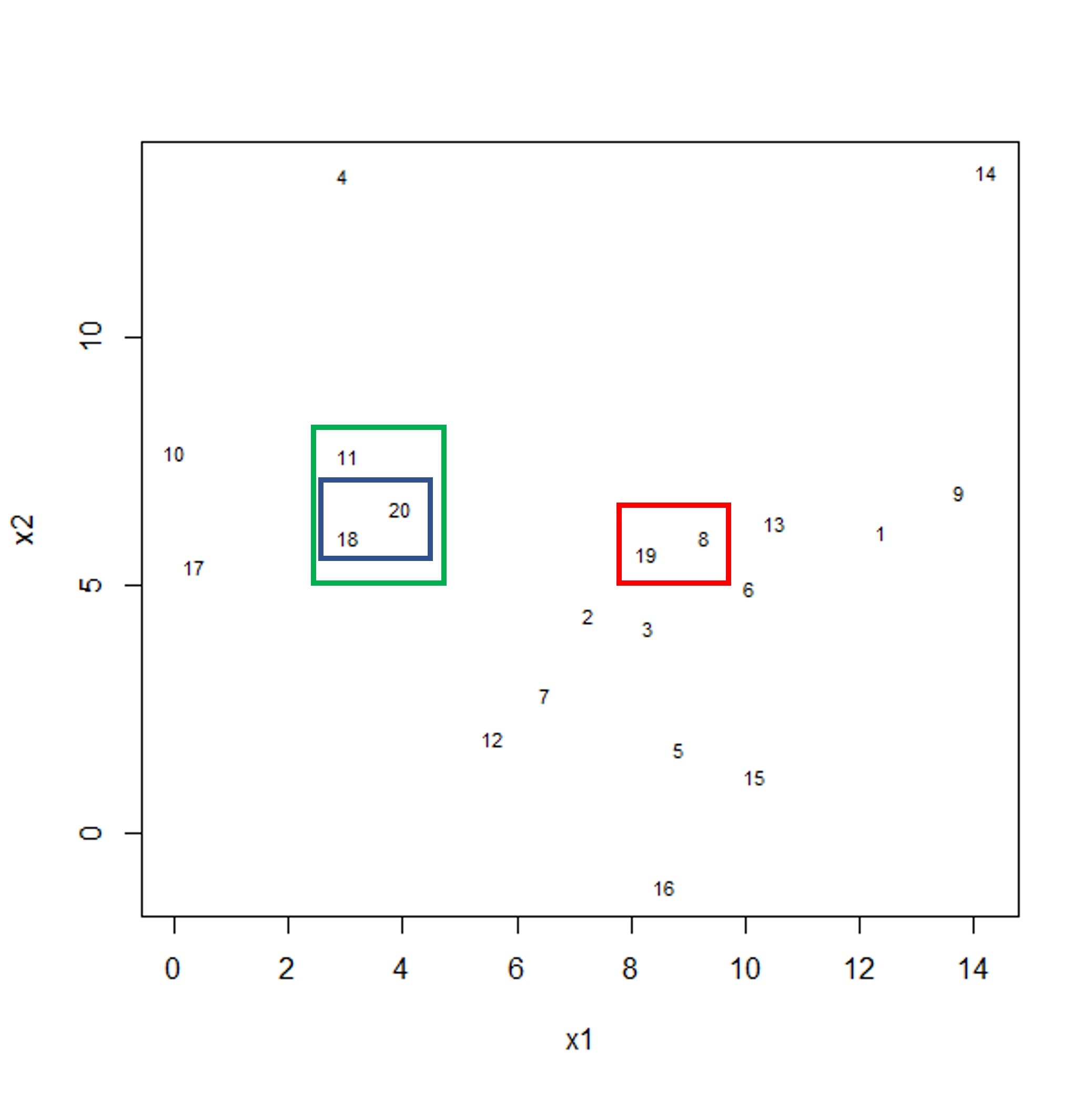

The agglomerative hierarchical clustering algorithm

To start with, we measure distance (or dissimilarity) between pairs of observations. Initially, and at the bottom of the dendrogram, each observation is considered to be in its own individual cluster. We start the clustering procedure by fusing the two observations that are most similar according to a distance matrix. Next, the next-most similar observations are fused so that the total number of clusters is number of observations minus 2 (see panel below). Groups of observations may then be merged into a larger cluster (see next panel below, green box). This process continues until all the observations are included in a single cluster.

Example data showing two clusters of observation pairs.

Example data showing fusing of one observation into larger cluster.

A motivating example

To motivate this lesson, let’s first look at an example where hierarchical

clustering is really useful, and then we can understand how to apply it in more

detail. To do this, we’ll return to the large methylation dataset we worked

with in the regression lessons. Let’s load and visually inspect the data:

library("minfi")

library("here")

library("ComplexHeatmap")

methyl <- readRDS(here("data/methylation.rds"))

# transpose this Bioconductor dataset to show features in columns

methyl_mat <- t(assay(methyl))

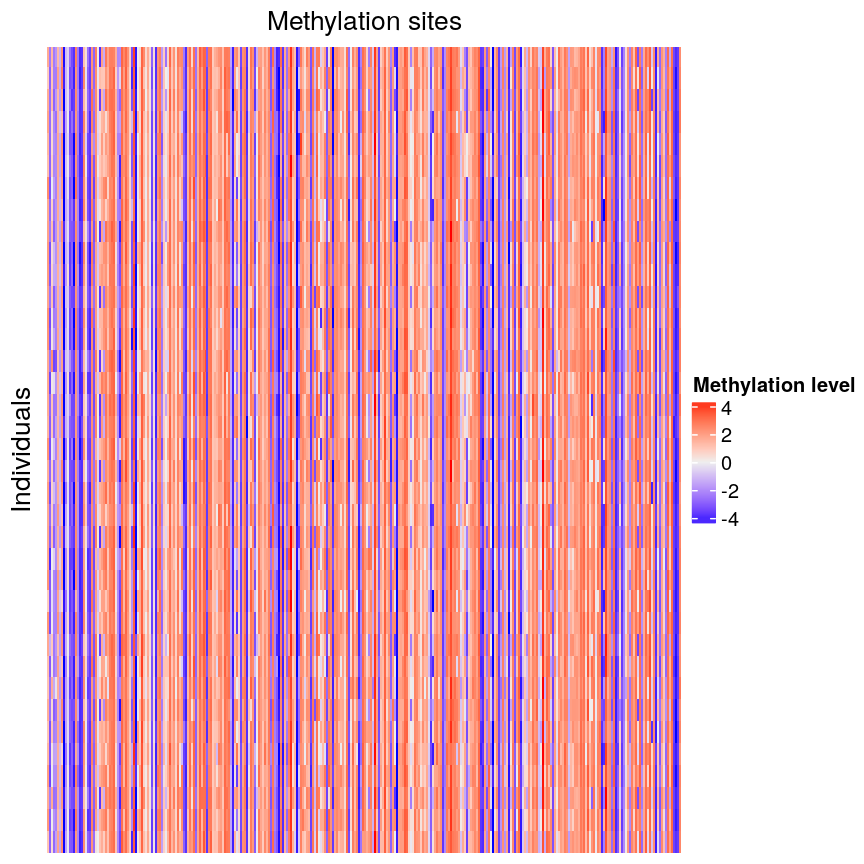

Looking at a heatmap of these data, we may spot some patterns – many columns appear to have a similar methylation levels across all rows. However, they are all quite jumbled at the moment, so it’s hard to tell how many line up exactly.

Heatmap of methylation data.

Looking at a heatmap of these data, we may spot some patterns – looking at the vertical stripes, many columns appear to have a similar methylation levels across all rows. However, they are all quite jumbled at the moment, so it’s hard to tell how many line up exactly. In addition, it is challenging to tell how many groups containing similar methylation levels we may have or what the similarities and differences are between groups.

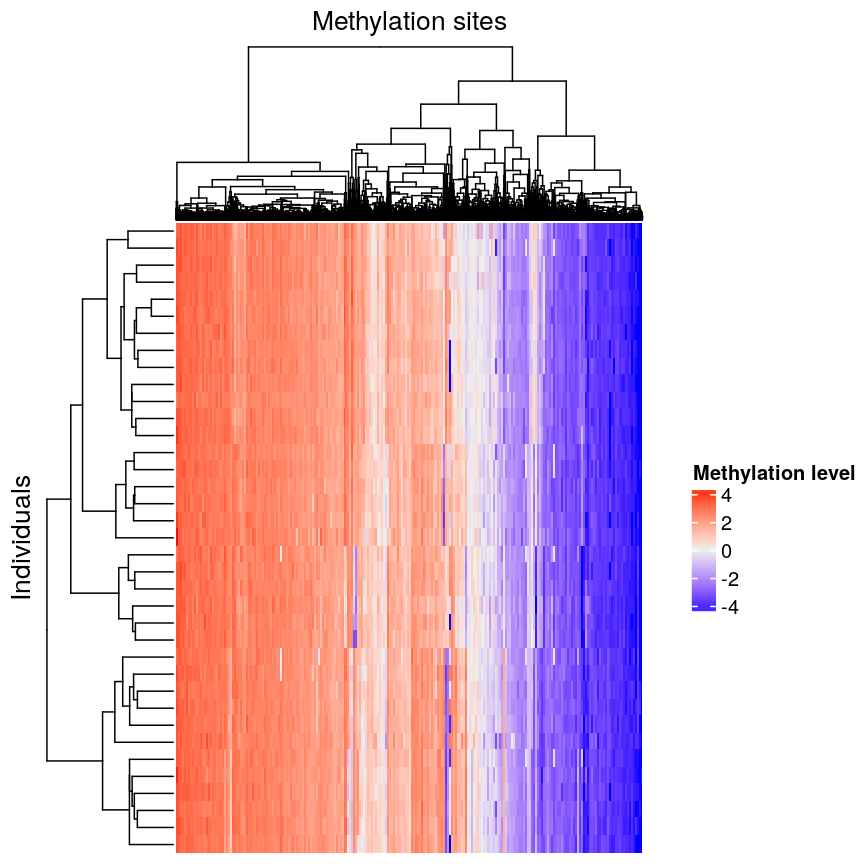

We can order these data to make the patterns more clear using hierarchical

clustering. To do this, we can change the arguments we pass to

Heatmap() from the ComplexHeatmap package. Heatmap()

groups features based on dissimilarity (here, Euclidean distance) and orders

rows and columns to show clustering of features and observations.

Heatmap(methyl_mat,

name = "Methylation level",

cluster_rows = TRUE, cluster_columns = TRUE,

row_dend_width = unit(0.2, "npc"),

column_dend_height = unit(0.2, "npc"),

show_row_names = FALSE, show_column_names = FALSE,

row_title="Individuals", column_title = "Methylation sites"

)

Heatmap of methylation data clustered by methylation sites and individuals.

We can see that clustering the features (CpG sites) results in an overall gradient of high to low methylation levels from left to right. Maybe more interesting is the fact that the rows (corresponding to individuals) are now grouped according to methylation patterns. For example, 12 samples seem to have lower methylation levels for a small subset of CpG sites in the middle, relative to all the other samples. It’s not clear without investigating further what the cause of this is – it could be a batch effect, or a known grouping (e.g., old vs young samples). However, clustering like this can be a useful part of exploratory analysis of data to build hypotheses.

Hierarchical clustering

Now, let’s cover the inner workings of hierarchical clustering in more detail. Hierarchical clustering is a type of clustering that also allows us to estimate the number of clusters. There are two things to consider before carrying out clustering:

- how to define dissimilarity between observations using a distance matrix, and

- how to define dissimilarity between clusters and when to fuse separate clusters.

Defining the dissimilarity between observations: creating the distance matrix

Agglomerative hierarchical clustering is performed in two steps: calculating the distance matrix (containing distances between pairs of observations) and iteratively grouping observations into clusters using this matrix.

There are different ways to specify a distance matrix for clustering:

- Specify distance as a pre-defined option using the

methodargument indist(). Methods includeeuclidean(default),maximumandmanhattan. - Create a self-defined function which calculates distance from a matrix or from two vectors. The function should only contain one argument.

Of pre-defined methods of calculating the distance matrix, Euclidean is one of the most commonly used. This method calculates the shortest straight-line distances between pairs of observations.

Another option is to use a correlation matrix as the input matrix to the clustering algorithm. The type of distance matrix used in hierarchical clustering can have a big effect on the resulting tree. The decision of which distance matrix to use before carrying out hierarchical clustering depends on the type of data and question to be addressed.

Defining the dissimilarity between clusters: Linkage methods

The second step in performing hierarchical clustering after defining the distance matrix (or another function defining similarity between data points) is determining how to fuse different clusters.

Linkage is used to define dissimilarity between groups of observations (or clusters) and is used to create the hierarchical structure in the dendrogram. Different linkage methods of creating a dendrogram are discussed below.

hclust() supports various linkage methods (e.g complete,

single, ward D, ward D2, average, median) and these are also supported

within the Heatmap() function. The method used to perform hierarchical

clustering in Heatmap() can be specified by the arguments

clustering_method_rows and clustering_method_columns. Each linkage method

uses a slightly different algorithm to calculate how clusters are fused together

and therefore different clustering decisions are made depending on the linkage

method used.

Complete linkage (the default in hclust()) works by computing all pairwise

dissimilarities between data points in different clusters. For each pair of two clusters,

it sets their dissimilarity to the maximum dissimilarity value observed

between any of these clusters’ constituent points. The two clusters

with smallest dissimilarity value are then fused.

Computing a dendrogram

Dendrograms are useful tools that plot the grouping of points and clusters into bigger clusters.

We can create and plot dendrograms in R using hclust() which takes

a distance matrix as input and creates a tree using hierarchical

clustering. Here we create some example data to carry out hierarchical

clustering.

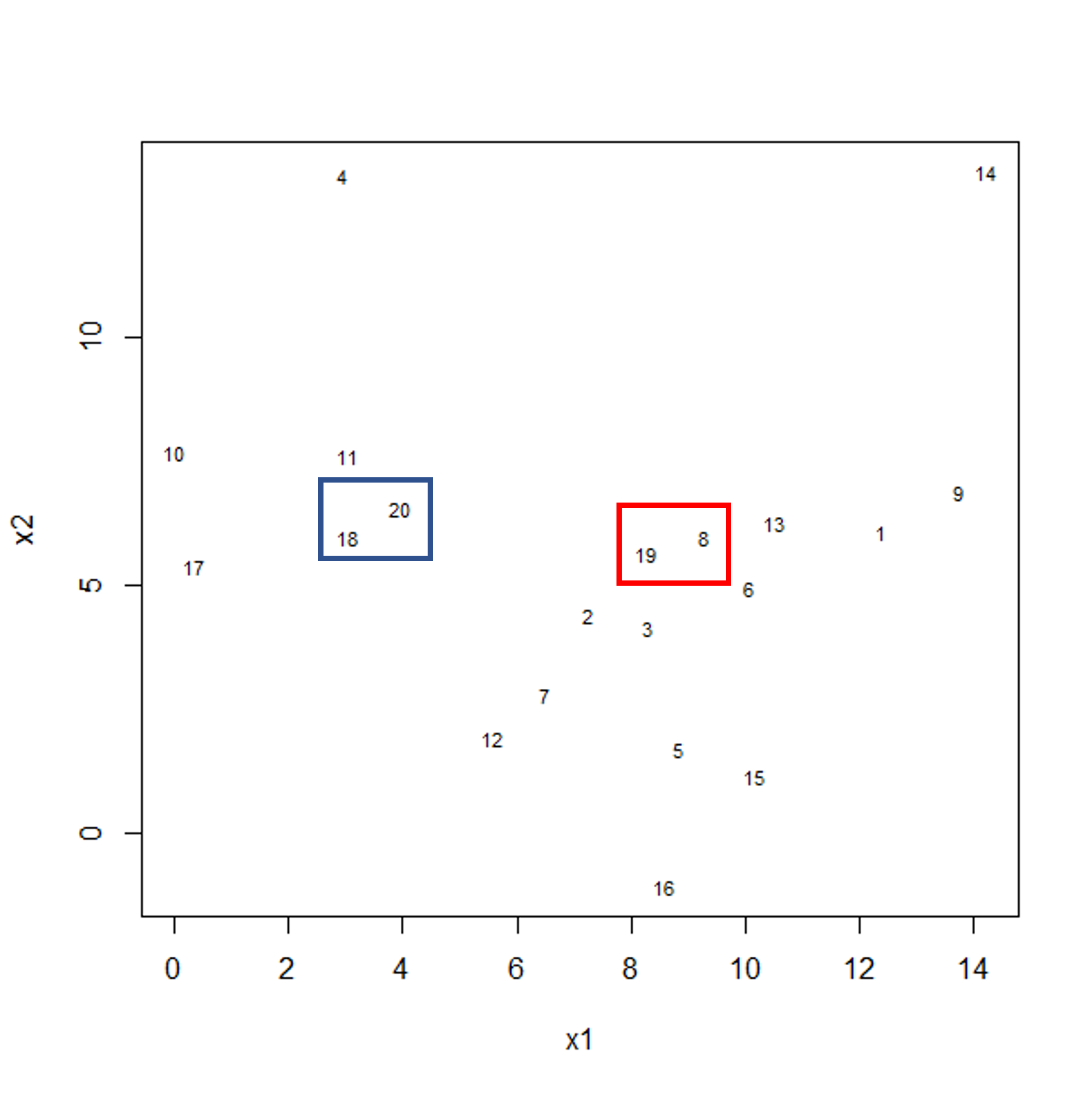

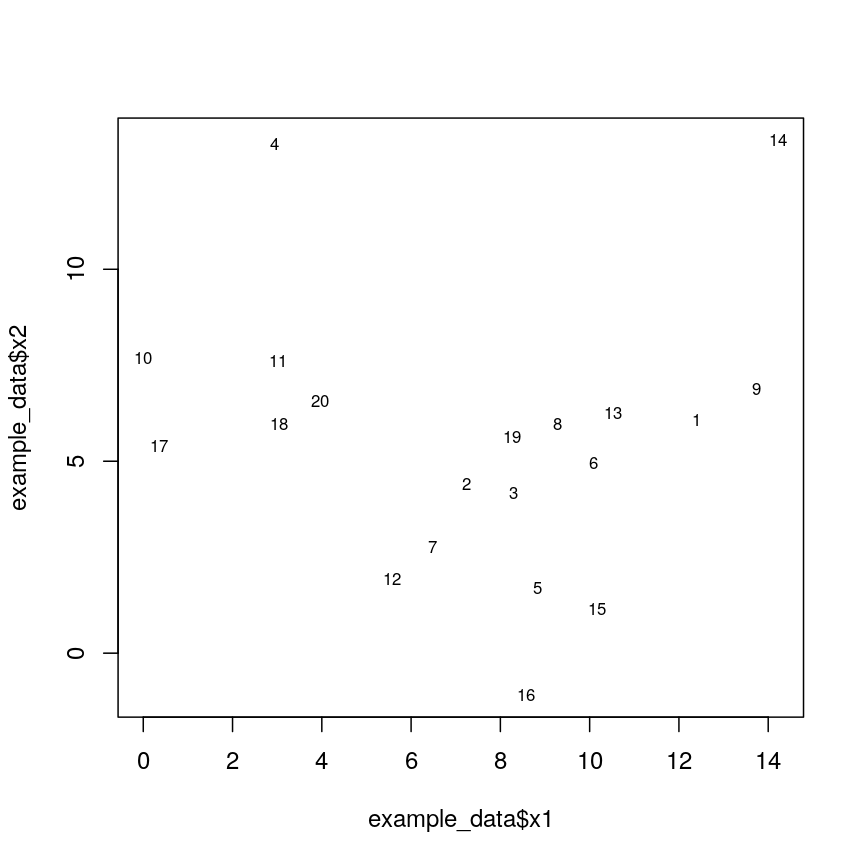

Let’s generate 20 data points in 2D space. Each point is generated to belong to one of three classes/groups. Suppose we did not know which class data points belonged to and we want to identify these via cluster analysis. Let’s first generate and plot our data:

#First, create some example data with two variables x1 and x2

set.seed(450)

example_data <- data.frame(

x1 = rnorm(20, 8, 4.5),

x2 = rnorm(20, 6, 3.4)

)

#plot the data and name data points by row numbers

plot(example_data$x1, example_data$x2, type = "n")

text(

example_data$x1,

example_data$x2,

labels = rownames(example_data),

cex = 0.7

)

Scatter plot of randomly-generated data x2 versus x1.

## calculate distance matrix using euclidean distance

dist_m <- dist(example_data, method = "euclidean")

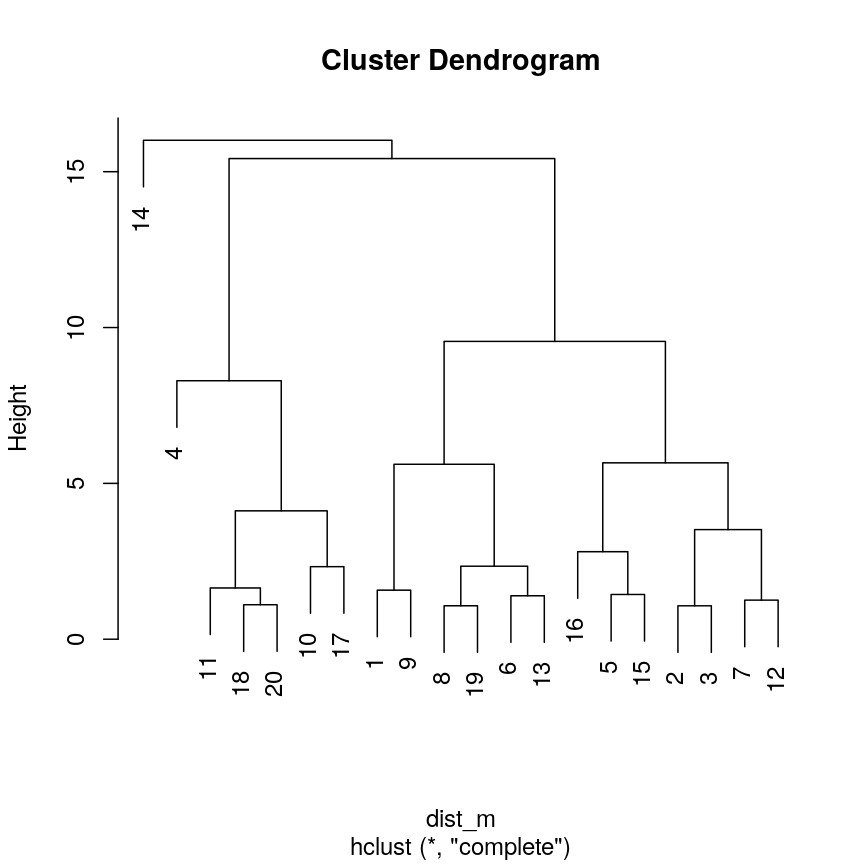

Hierarchical clustering carried out on the data can be used to produce a dendrogram showing how the data is partitioned into clusters. But how do we interpret this dendrogram? Let’s explore this using our example data in a Challenge.

Challenge 1

Use

hclust()to implement hierarchical clustering using the distance matrixdist_mand thecompletelinkage method and plot the results as a dendrogram usingplot(). Why is hierarchical clustering and the resulting dendrogram useful for performing clustering this case?Solution:

clust <- hclust(dist_m, method = "complete") plot(clust)

A dendrogram of the randomly-generated data.

Hierarchical clustering is particularly useful (compared to K-means) when we do not know the number of clusters before we perform clustering. It is useful in this case since we have assumed we do not already know what a suitable number of clusters may be.

A dendrogram, such as the one generated in Challenge 1, shows similarities/differences in distances between data points. Each vertical line at the bottom of the dendrogram (‘leaf’) represents one of the 20 data points. These leaves fuse into fewer vertical lines (‘branches’) as the height increases. Observations that are similar fuse into the same branches. The height at which any two data points fuse indicates how different these two points are. Points that fuse at the top of the tree are very different from each other compared with two points that fuse at the bottom of the tree, which are quite similar. You can see this by comparing the position of similar/dissimilar points according to the scatterplot with their position on the tree.

Identifying the number of clusters

To identify the number of clusters, we can make a horizontal cut through the dendrogram at a user-defined height. The sets of observations beneath this cut and single points representing clusters above can be thought of as distinct clusters. Equivalently, we can count the vertical lines we encounter crossing the horizontal cut and the number of single points above the cut. For example, a cut at height 10 produces 3 clusters for the dendrogram in Challenge 1, while a cut at height 4 produces 8 clusters.

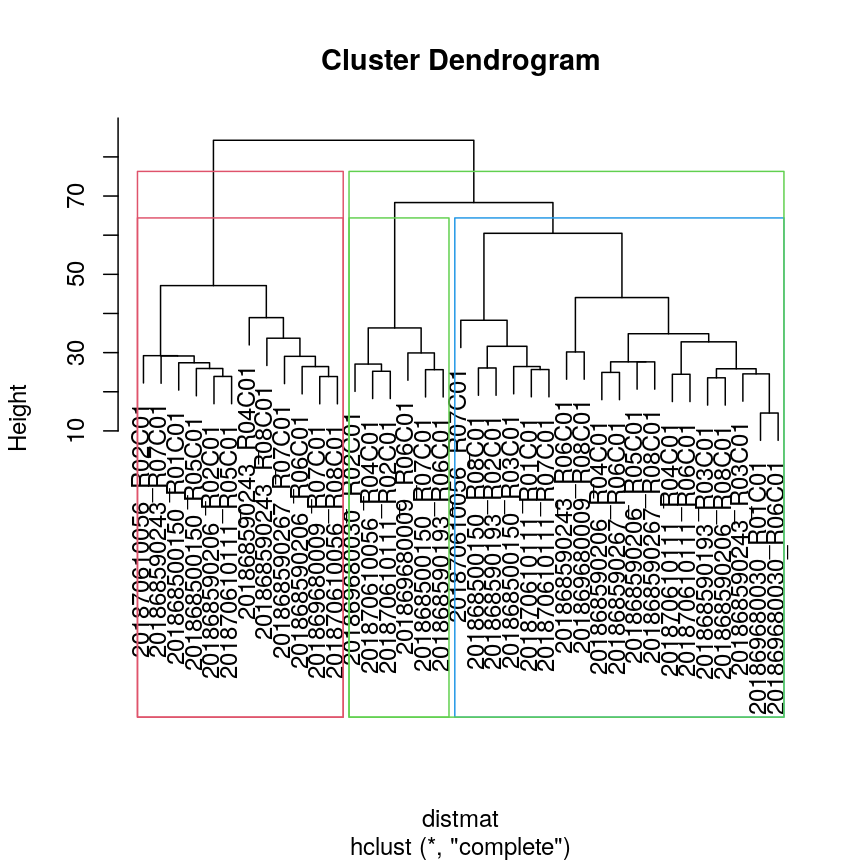

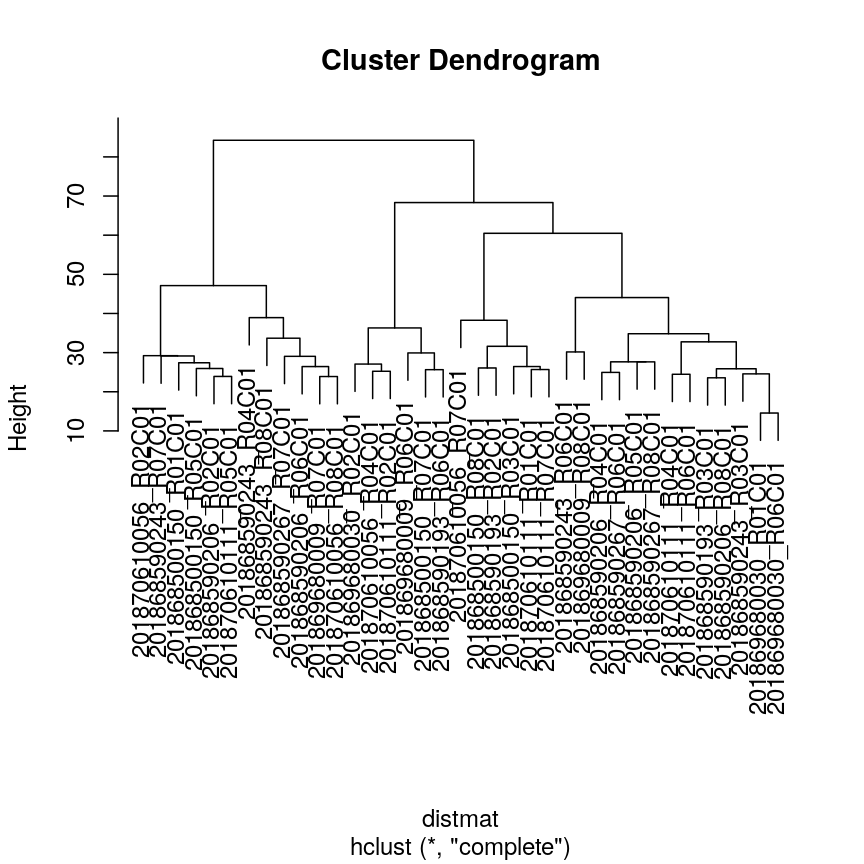

Dendrogram visualisation

We can first visualise cluster membership by highlight branches in dendrograms.

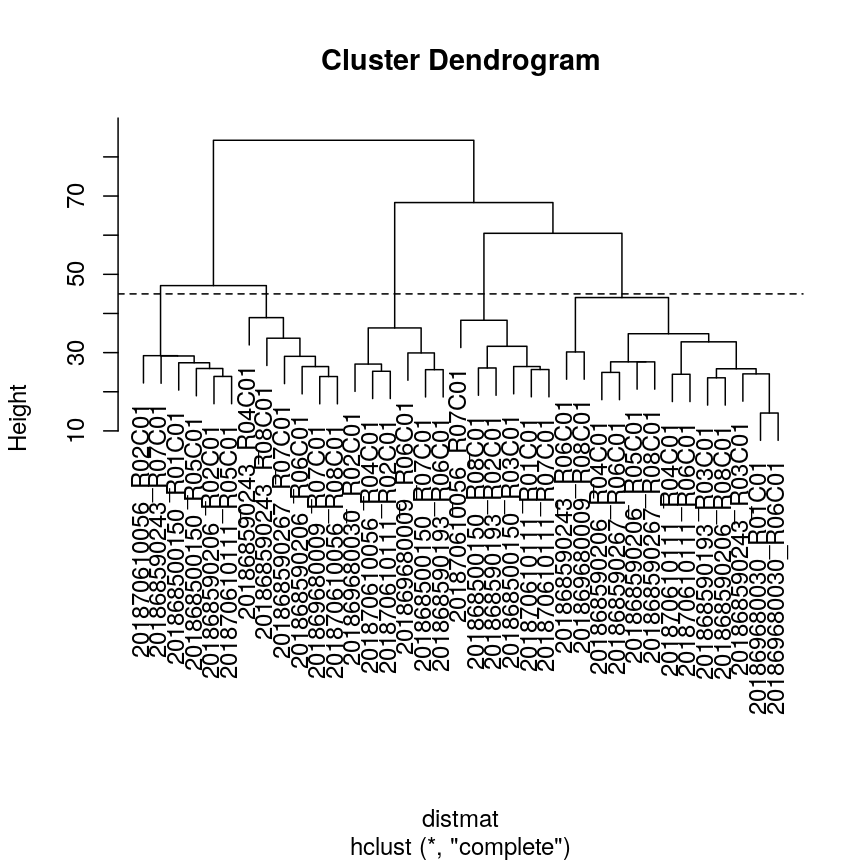

In this example, we calculate a distance matrix between

samples in the methyl_mat dataset. We then draw boxes round clusters obtained with cutree().

## create a distance matrix using euclidean distance

distmat <- dist(methyl_mat)

## hierarchical clustering using complete method

clust <- hclust(distmat)

## plot resulting dendrogram

plot(clust)

## draw border around three clusters

rect.hclust(clust, k = 3, border = 2:6) #border argument specifies the colours

## draw border around two clusters

rect.hclust(clust, k = 2, border = 2:6)

Dendrogram with boxes around clusters.

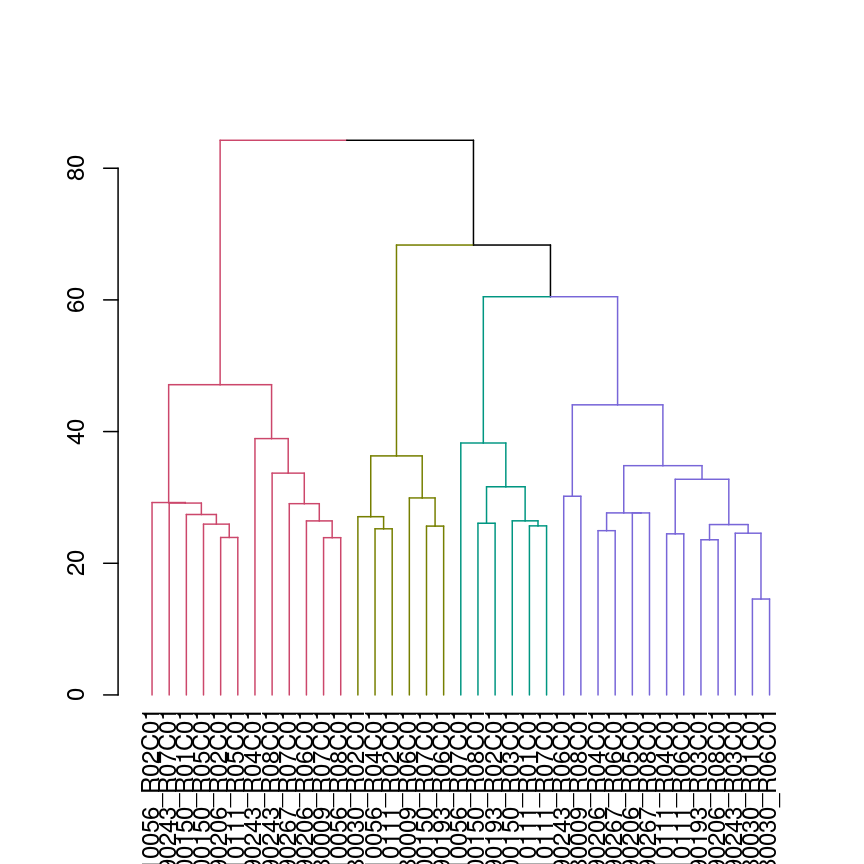

We can also colour clusters downstream of a specified cut using color_branches()

from the dendextend package.

## cut tree at height = 4

cut <- cutree(clust, h = 50)

library("dendextend")

avg_dend_obj <- as.dendrogram(clust)

## colour branches of dendrogram depending on clusters

plot(color_branches(avg_dend_obj, h = 50))

Dendrogram with coloured branches delineating different clusters.

Numerical visualisation

In addition to visualising clusters directly on the dendrogram, we can cut

the dendrogram to determine number of clusters at different heights

using cutree(). This function cuts a dendrogram into several

groups (or clusters) where the number of desired groups is controlled by the

user, by defining either k (number of groups) or h (height at which tree is

cut). The function outputs the cluster labels of each data point in order.

## k is a user defined parameter determining

## the desired number of clusters at which to cut the treee

as.numeric(cutree(clust, k = 9))

[1] 1 2 1 3 2 2 4 3 1 4 4 5 4 4 6 7 1 5 4 5 4 3 5 7 4 8 4 1 8 9 5 2 8 4 1 4 2

## h is a user defined parameter determining

## the numeric height at which to cut the tree

as.numeric(cutree(clust, h = 36))

[1] 1 2 1 3 2 2 4 3 1 4 4 5 4 4 6 7 1 5 4 5 4 3 5 7 4 8 4 1 8 9 5 2 8 4 1 4 2

## both give same results

four_cut <- cutree(clust, h = 50)

## we can produce the cluster each observation belongs to

## using the mutate and count functions

library(dplyr)

example_cl <- mutate(data.frame(methyl_mat), cluster = four_cut)

count(example_cl, cluster)

cluster n

1 1 12

2 2 6

3 3 6

4 4 13

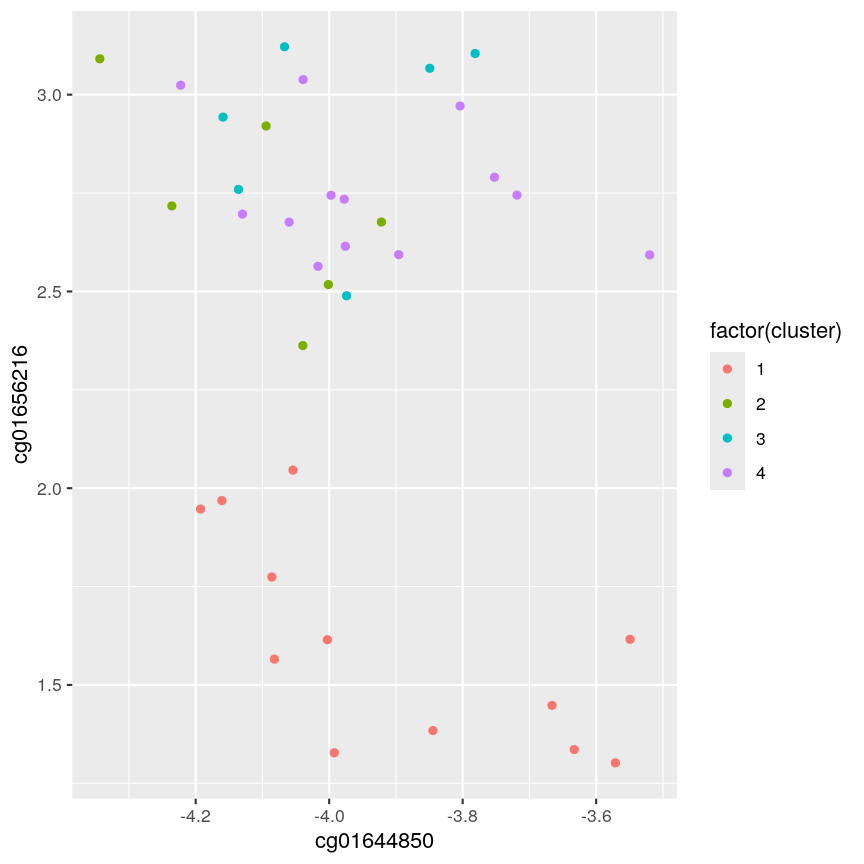

#plot cluster each point belongs to on original scatterplot

library(ggplot2)

ggplot(example_cl, aes(x = cg01644850, y = cg01656216, color = factor(cluster))) + geom_point()

Scatter plot of data x2 versus x1, coloured by cluster.

Note that this cut produces 4 clusters (zero before the cut and another four downstream of the cut).

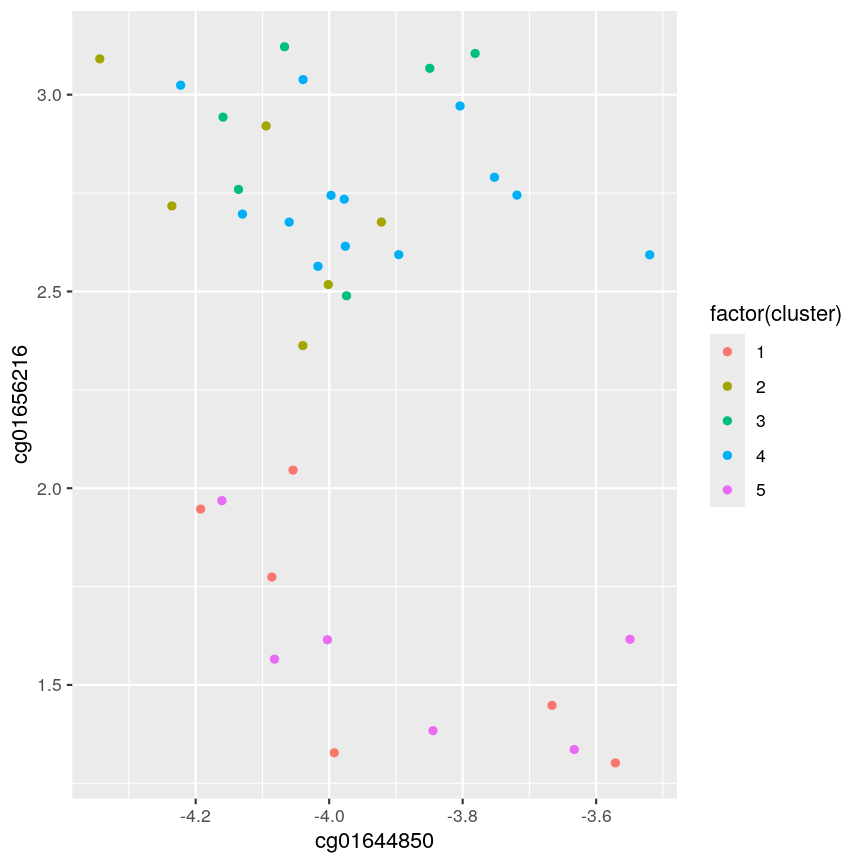

Challenge 2:

Identify the value of

kincutree()that gives the same output ash = 36Solution:

plot(clust) ## create horizontal line at height = 45 abline(h = 45, lty = 2)

A dendrogram of the randomly-generated data.

cutree(clust, h = 45)201868500150_R01C01 201868500150_R03C01 201868500150_R05C01 201868500150_R07C01 1 2 1 3 201868500150_R08C01 201868590193_R02C01 201868590193_R03C01 201868590193_R06C01 2 2 4 3 201868590206_R02C01 201868590206_R04C01 201868590206_R05C01 201868590206_R06C01 1 4 4 5 201868590206_R08C01 201868590243_R03C01 201868590243_R04C01 201868590243_R06C01 4 4 5 4 201868590243_R07C01 201868590243_R08C01 201868590267_R06C01 201868590267_R07C01 1 5 4 5 201868590267_R08C01 201869680009_R06C01 201869680009_R07C01 201869680009_R08C01 4 3 5 4 201869680030_R01C01 201869680030_R02C01 201869680030_R06C01 201870610056_R02C01 4 3 4 1 201870610056_R04C01 201870610056_R07C01 201870610056_R08C01 201870610111_R01C01 3 2 5 2 201870610111_R02C01 201870610111_R04C01 201870610111_R05C01 201870610111_R06C01 3 4 1 4 201870610111_R07C01 2cutree(clust, k = 5)201868500150_R01C01 201868500150_R03C01 201868500150_R05C01 201868500150_R07C01 1 2 1 3 201868500150_R08C01 201868590193_R02C01 201868590193_R03C01 201868590193_R06C01 2 2 4 3 201868590206_R02C01 201868590206_R04C01 201868590206_R05C01 201868590206_R06C01 1 4 4 5 201868590206_R08C01 201868590243_R03C01 201868590243_R04C01 201868590243_R06C01 4 4 5 4 201868590243_R07C01 201868590243_R08C01 201868590267_R06C01 201868590267_R07C01 1 5 4 5 201868590267_R08C01 201869680009_R06C01 201869680009_R07C01 201869680009_R08C01 4 3 5 4 201869680030_R01C01 201869680030_R02C01 201869680030_R06C01 201870610056_R02C01 4 3 4 1 201870610056_R04C01 201870610056_R07C01 201870610056_R08C01 201870610111_R01C01 3 2 5 2 201870610111_R02C01 201870610111_R04C01 201870610111_R05C01 201870610111_R06C01 3 4 1 4 201870610111_R07C01 2five_cut <- cutree(clust, h = 45) library(dplyr) example_cl <- mutate(data.frame(methyl_mat), cluster = five_cut) count(example_cl, cluster)cluster n 1 1 6 2 2 6 3 3 6 4 4 13 5 5 6library(ggplot2) ggplot(example_cl, aes(x=cg01644850, y = cg01656216, color = factor(cluster))) + geom_point()

A scatter plot of the x and y variables of the example data, with points coloured by cluster.

Five clusters (

k = 5) gives similar results toh = 45. You can plot a horizontal line on the dendrogram ath = 45to help identify corresponding value ofk.

The effect of different linkage methods

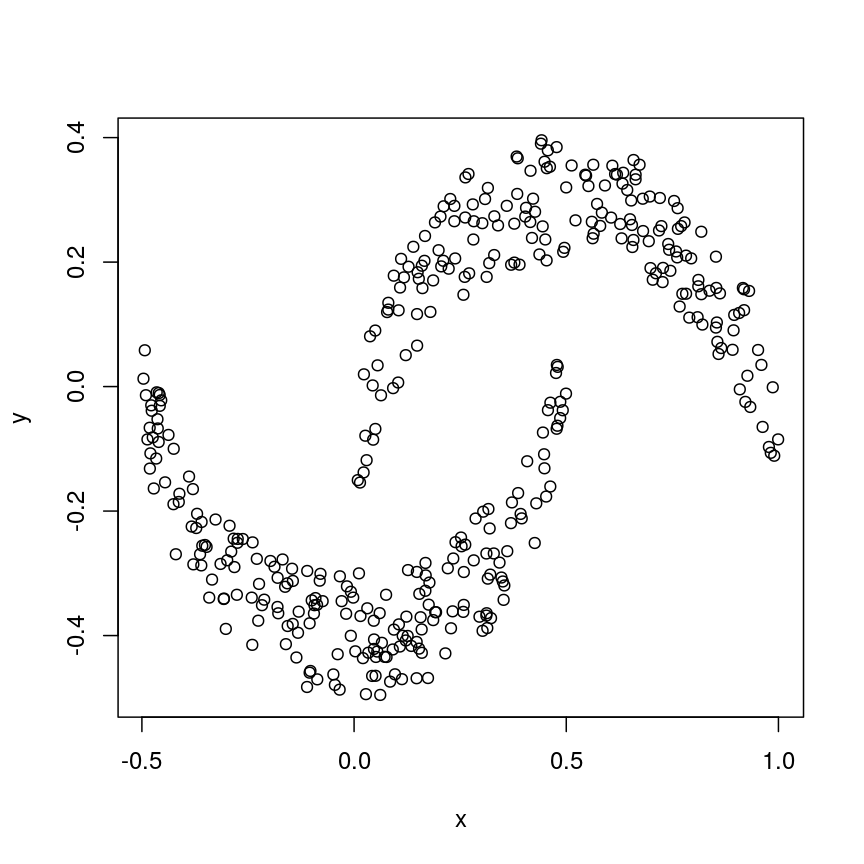

Now let us look into changing the default behaviour of hclust(). First, we’ll load a synthetic dataset, comprised of two variables representing two crescent-shaped point clouds:

cres <- readRDS(here::here("data/cres.rds"))

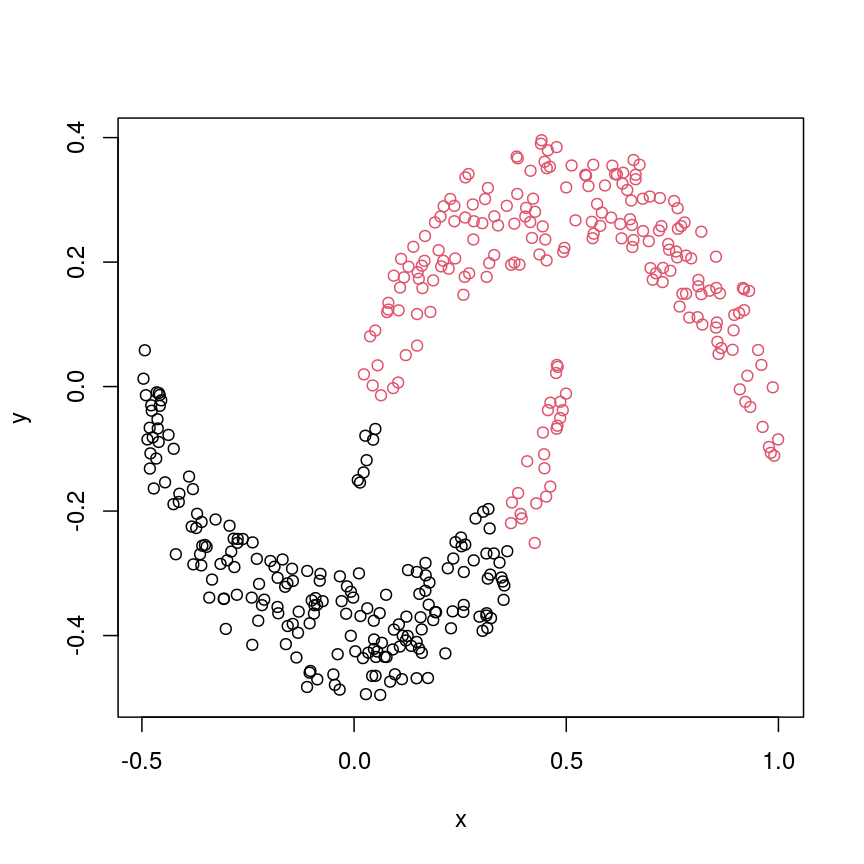

plot(cres)

Scatter plot of data simulated according to two crescent-shaped point clouds.

We might expect that the crescents are resolved into separate clusters. But if we run hierarchical clustering with the default arguments, we get this:

cresClass <- cutree(hclust(dist(cres)), k=2) # save partition for colouring

plot(cres, col=cresClass) # colour scatterplot by partition

Scatter plot of crescent-shaped simulated data, coloured according to clusters calculated using Euclidean distance.

Challenge 3

Carry out hierarchical clustering on the

cresdata that we generated above. Try out different linkage methods and usecutree()to split each resulting dendrogram into two clusters. Plot the results colouring the dots according to their inferred cluster identity.Which method(s) give you the expected clustering outcome?

Hint: Check

?hclustto see the possible values of the argumentmethod(the linkage method used).Solution:

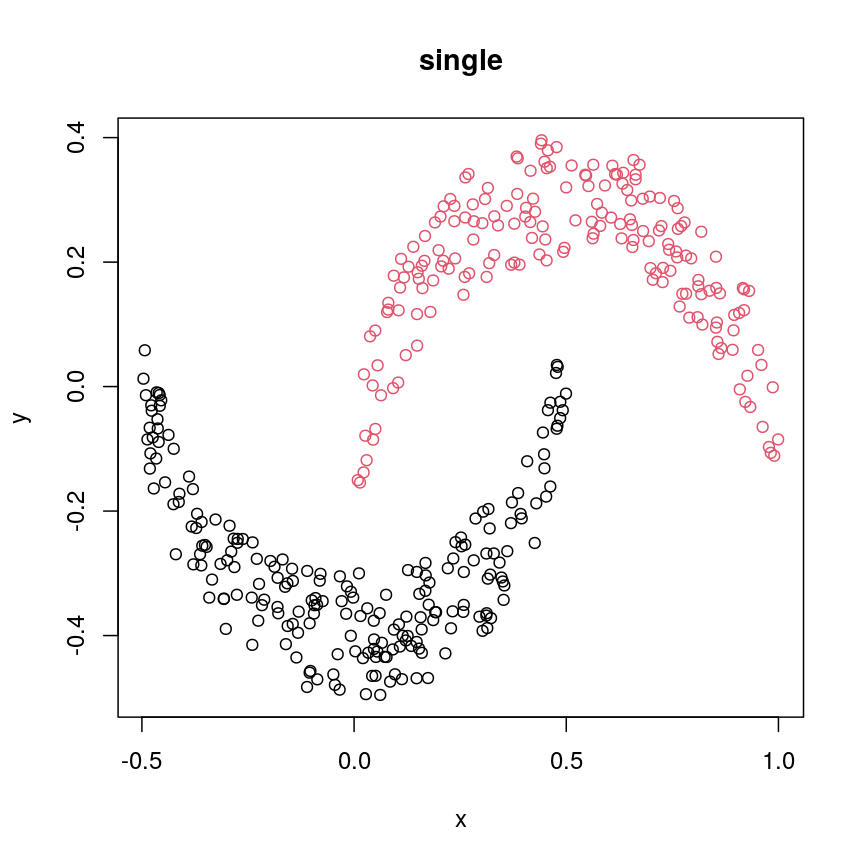

#?hclust # "complete", "single", "ward.D", "ward.D2", "average", "mcquitty", "median" or "centroid" cresClassSingle <- cutree(hclust(dist(cres),method = "single"), k=2) plot(cres, col=cresClassSingle, main="single")

A scatter plot of synthetic data coloured by cluster.

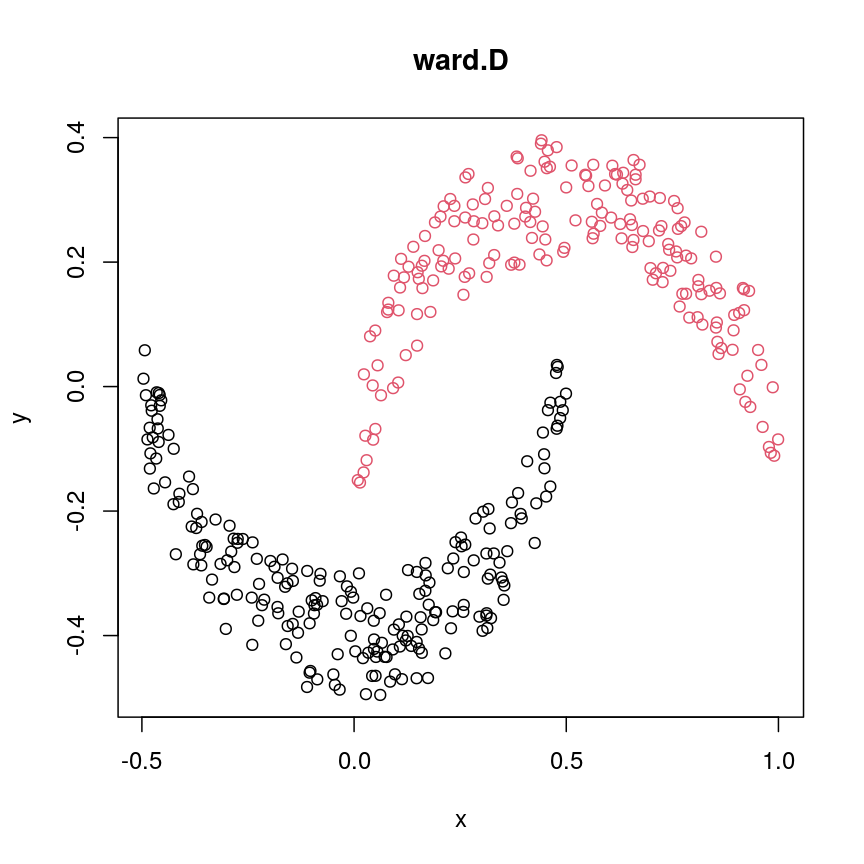

cresClassWard.D <- cutree(hclust(dist(cres), method="ward.D"), k=2) plot(cres, col=cresClassWard.D, main="ward.D")

A scatter plot of synthetic data coloured by cluster.

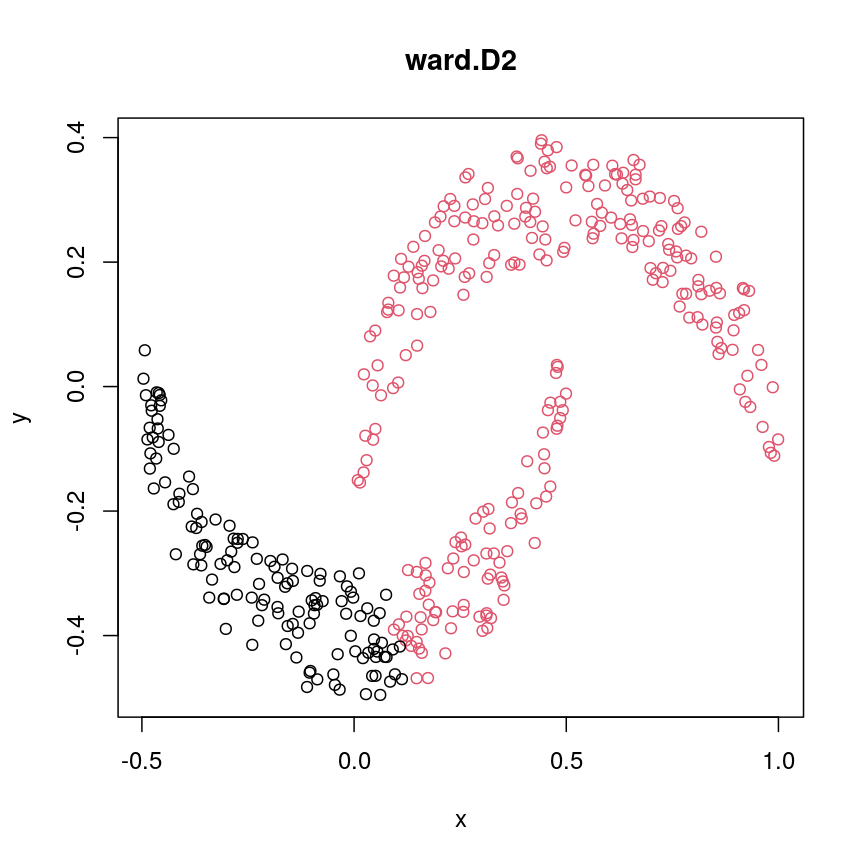

cresClassWard.D2 <- cutree(hclust(dist(cres), method="ward.D2"), k=2) plot(cres, col=cresClassWard.D2, main="ward.D2")

A scatter plot of synthetic data coloured by cluster.

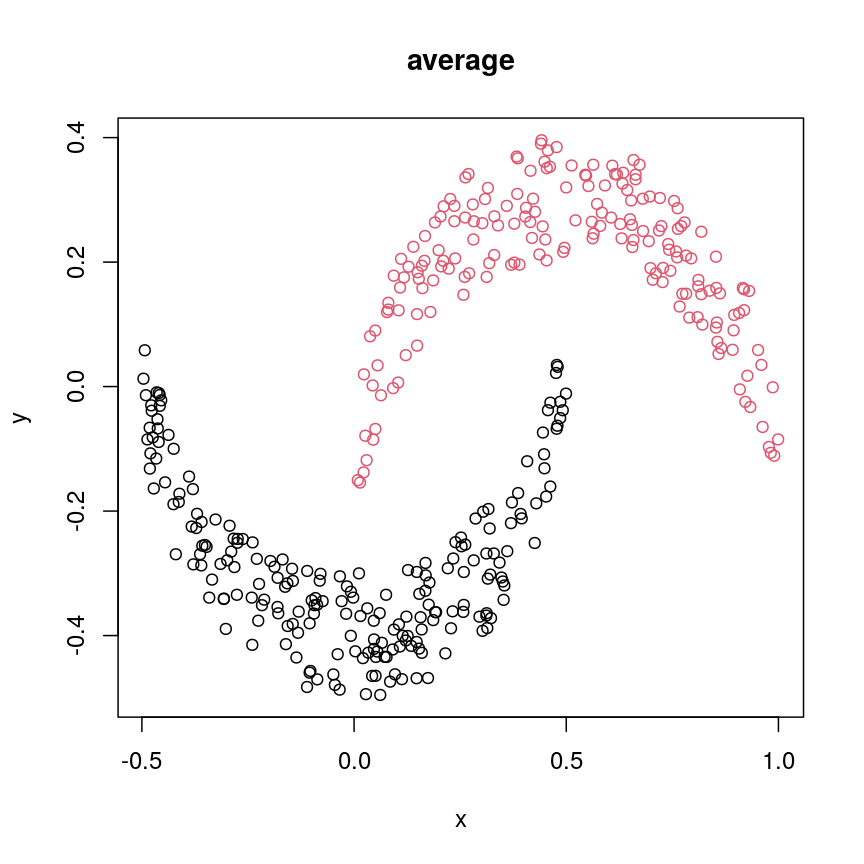

cresClassAverage <- cutree(hclust(dist(cres), method="average"), k=2) plot(cres, col=cresClassAverage, main="average")

A scatter plot of synthetic data coloured by cluster.

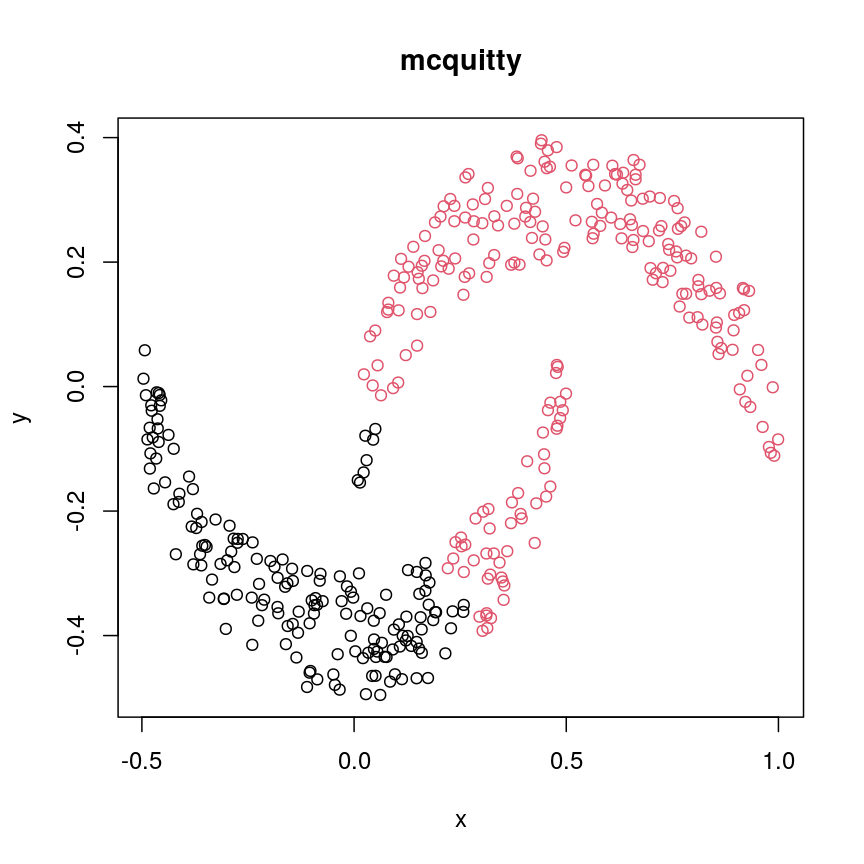

cresClassMcquitty <- cutree(hclust(dist(cres), method="mcquitty"), k=2) plot(cres, col=cresClassMcquitty, main="mcquitty")

A scatter plot of synthetic data coloured by cluster.

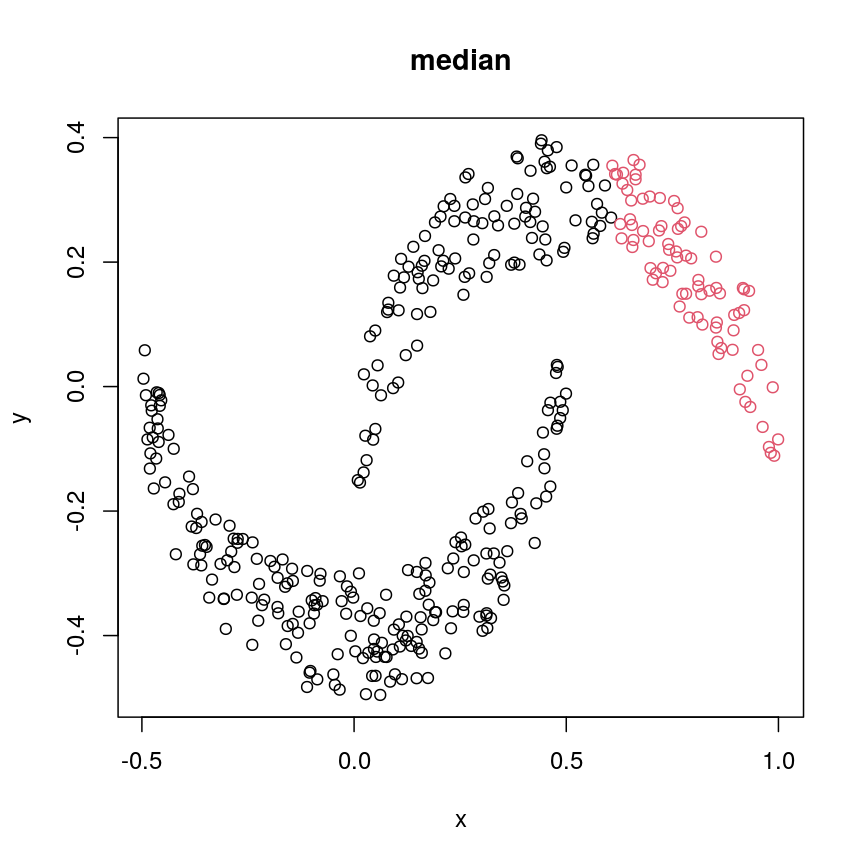

cresClassMedian<- cutree(hclust(dist(cres), method="median"), k=2) plot(cres, col=cresClassMedian, main="median")

A scatter plot of synthetic data coloured by cluster.

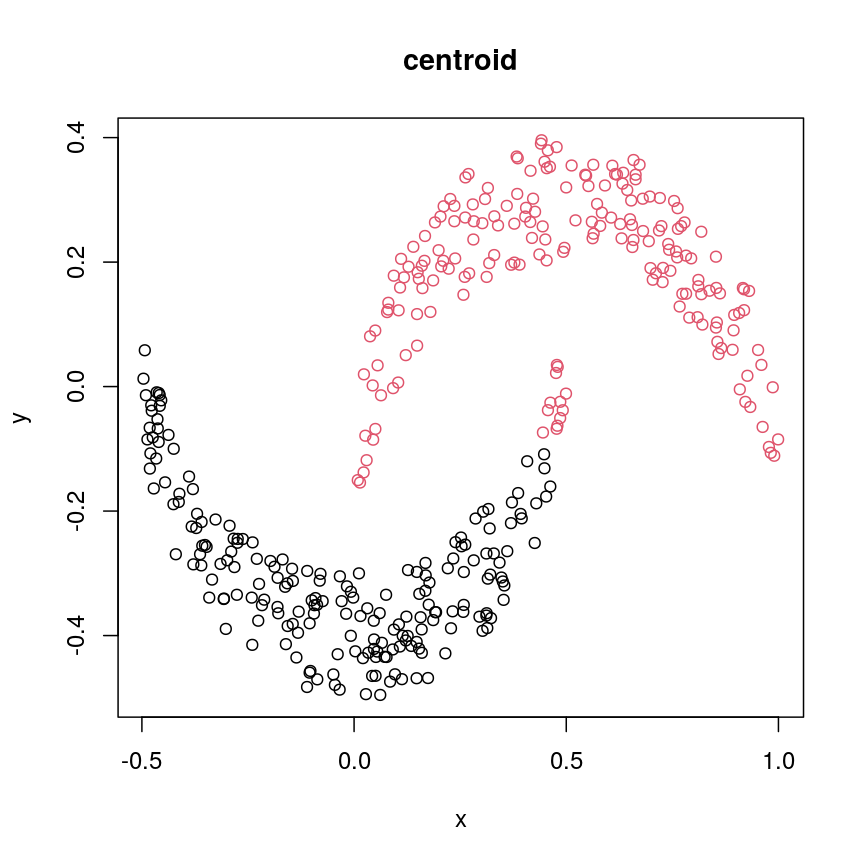

cresClassCentroid<- cutree(hclust(dist(cres), method="centroid"), k=2) plot(cres, col=cresClassCentroid, main="centroid")

A scatter plot of synthetic data coloured by cluster.

The linkage methods

single,ward.D, andaverageresolve each crescent as a separate cluster.

The help page of hclust() gives some intuition on linkage methods. It describes complete

(the default) and single as opposite ends of a spectrum with all other methods in between.

When using complete linkage, the distance between two clusters is assumed to be the distance

between both clusters’ most distant points. This opposite it true for single linkage, where

the minimum distance between any two points, one from each of two clusters is used. Single

linkage is described as friends-of-friends appporach - and really, it groups all close-together

points into the same cluster (thus resolving one cluster per crescent). Complete linkage on the

other hand recognises that some points a the tip of a crescent are much closer to points in the

other crescent and so it splits both crescents.

Using different distance methods

So far, we’ve been using Euclidean distance to define the dissimilarity or distance between observations. However, this isn’t always the best metric for how dissimilar the observations are. Let’s make an example to demonstrate. Here, we’re creating two samples each with ten observations of random noise:

set.seed(20)

cor_example <- data.frame(

sample_a = rnorm(10),

sample_b = rnorm(10)

)

rownames(cor_example) <- paste(

"Feature", 1:nrow(cor_example)

)

Now, let’s create a new sample that has exactly the same pattern across all

our features as sample_a, just offset by 5:

cor_example$sample_c <- cor_example$sample_a + 5

You can see that this is a lot like the assay() of our methylation object

from earlier, where columns are observations or samples, and rows are features:

head(cor_example)

sample_a sample_b sample_c

Feature 1 1.1626853 -0.02013537 6.162685

Feature 2 -0.5859245 -0.15038222 4.414076

Feature 3 1.7854650 -0.62812676 6.785465

Feature 4 -1.3325937 1.32322085 3.667406

Feature 5 -0.4465668 -1.52135057 4.553433

Feature 6 0.5696061 -0.43742787 5.569606

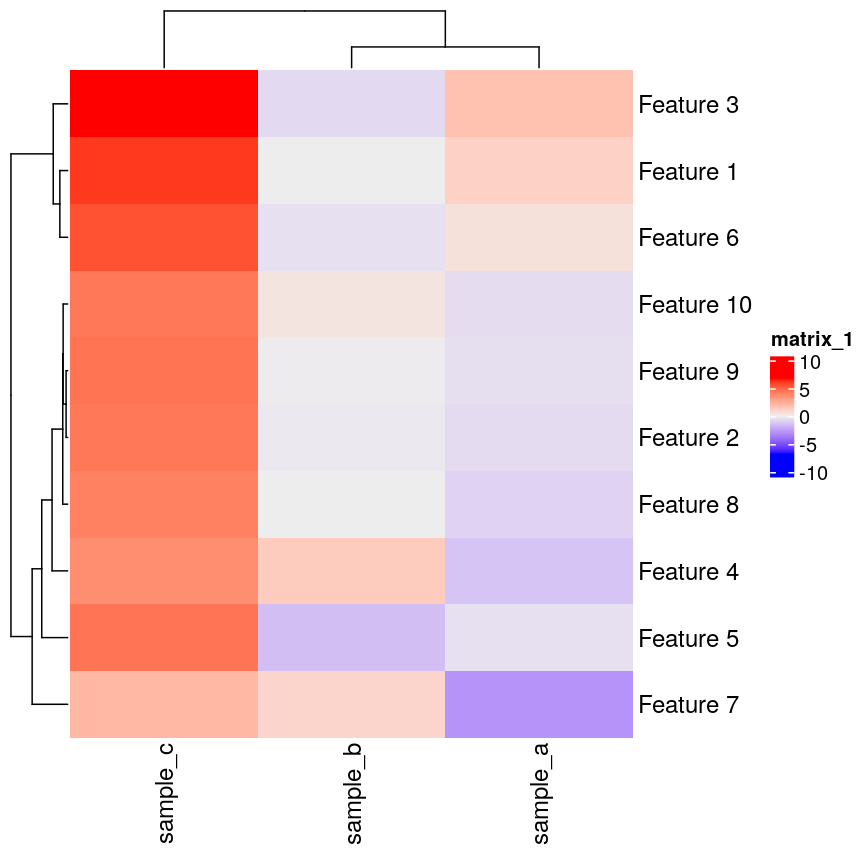

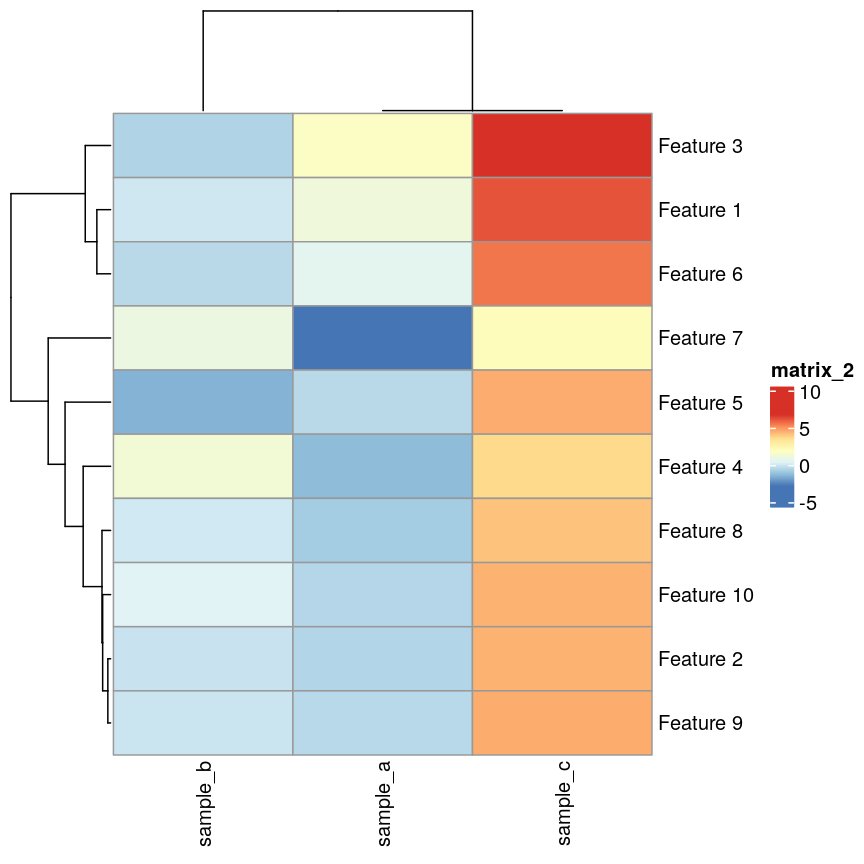

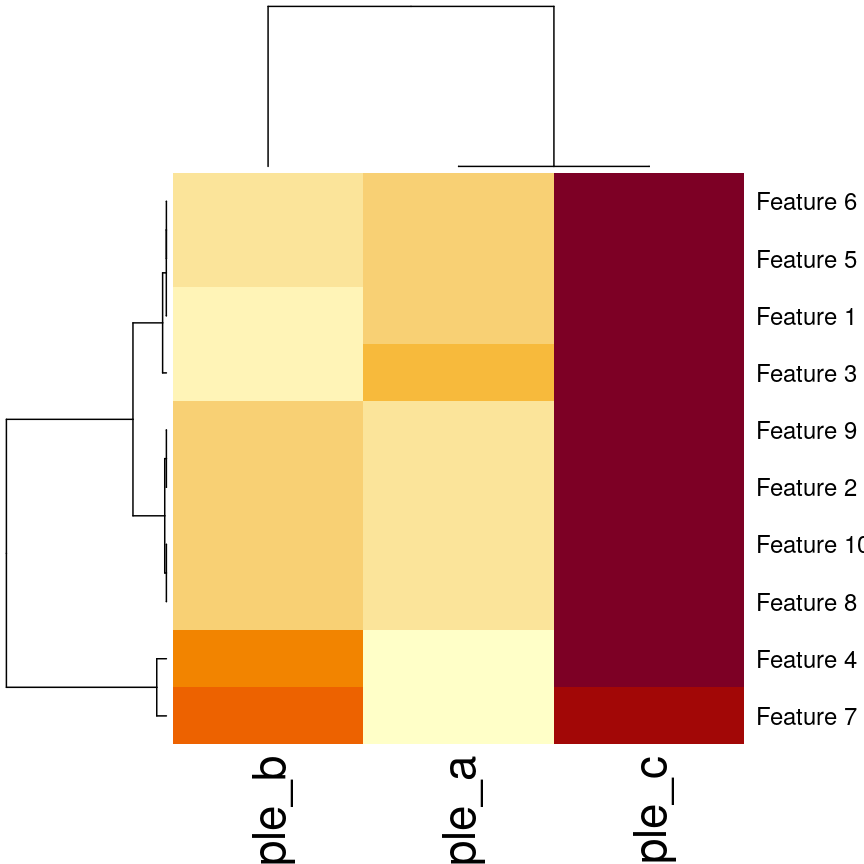

If we plot a heatmap of this, we can see that sample_a and sample_b are

grouped together because they have a small distance from each other, despite

being quite different in their pattern across the different features.

In contrast, sample_a and sample_c are very distant, despite having

exactly the same pattern across the different features.

Heatmap(as.matrix(cor_example))

Heatmap of simulated data.

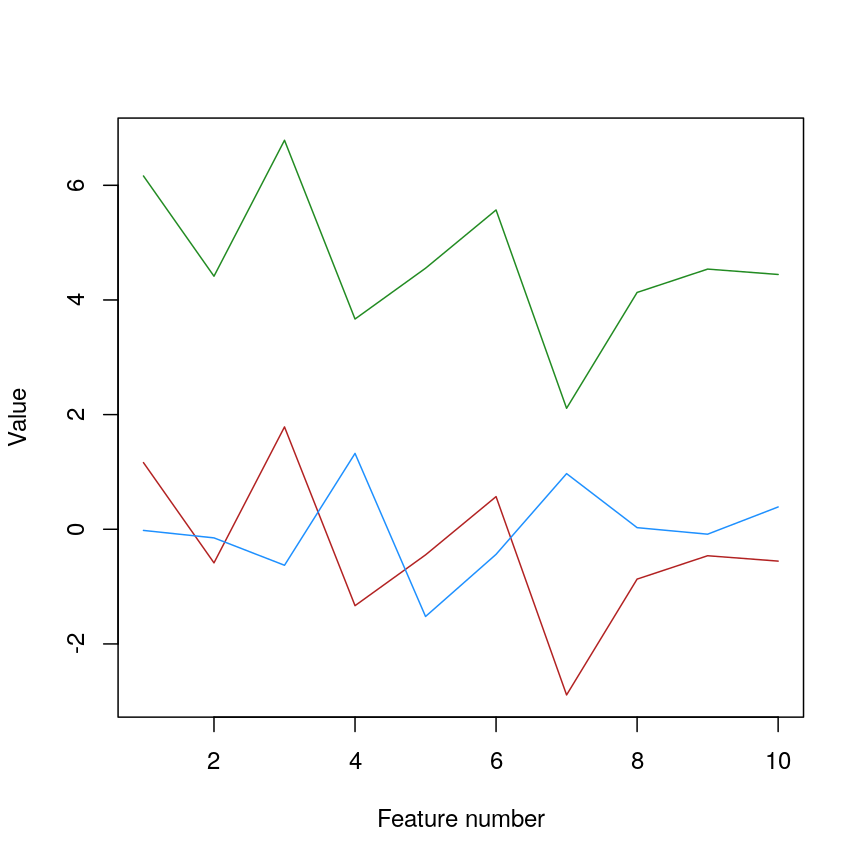

We can see that more clearly if we do a line plot:

## create a blank plot (type = "n" means don't draw anything)

## with an x range to hold the number of features we have.

## the range of y needs to be enough to show all the values for every feature

plot(

1:nrow(cor_example),

rep(range(cor_example), 5),

type = "n",

xlab = "Feature number",

ylab = "Value"

)

## draw a red line for sample_a

lines(cor_example$sample_a, col = "firebrick")

## draw a blue line for sample_b

lines(cor_example$sample_b, col = "dodgerblue")

## draw a green line for sample_c

lines(cor_example$sample_c, col = "forestgreen")

Line plot of simulated value versus observation number, coloured by sample.

We can see that sample_a and sample_c have exactly the same pattern across

all of the different features. However, due to the overall difference between

the values, they have a high distance to each other.

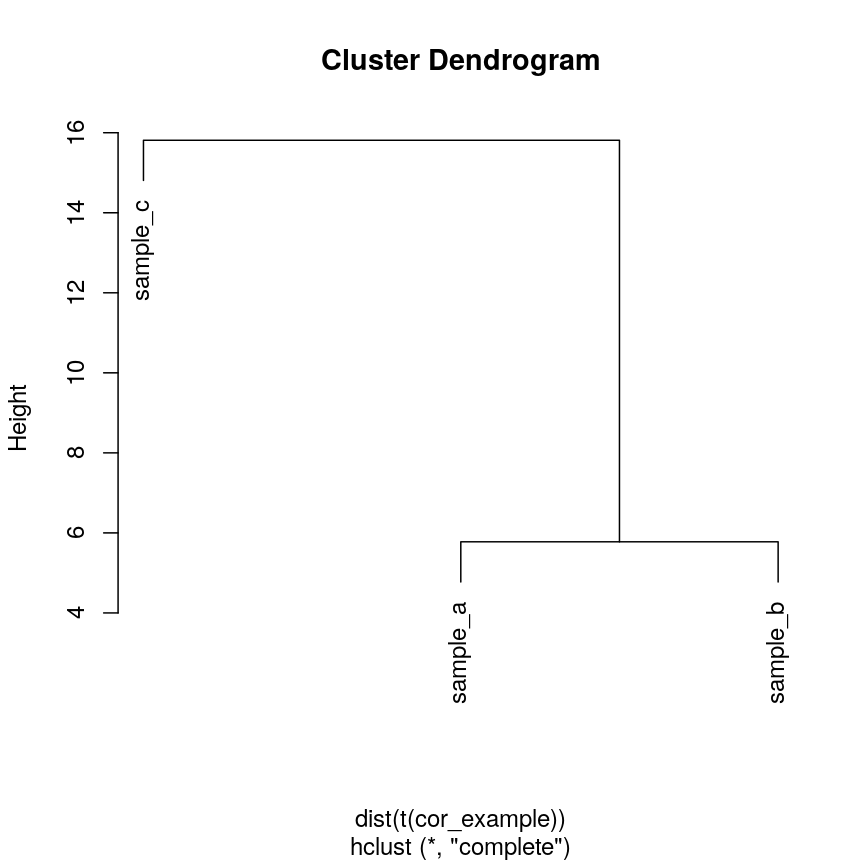

We can see that if we cluster and plot the data ourselves using Euclidean

distance:

clust_dist <- hclust(dist(t(cor_example)))

plot(clust_dist)

Dendrogram of the example simulated data clustered according to Euclidean distance.

In some cases, we might want to ensure that samples that have similar patterns,

whether that be of gene expression, or DNA methylation, have small distances

to each other. Correlation is a measure of this kind of similarity in pattern.

However, high correlations indicate similarity, while for a distance measure

we know that high distances indicate dissimilarity. Therefore, if we wanted

to cluster observations based on the correlation, or the similarity of patterns,

we can use 1 - cor(x) as the distance metric.

The input to hclust() must be a dist object, so we also need to call

as.dist() on it before passing it in.

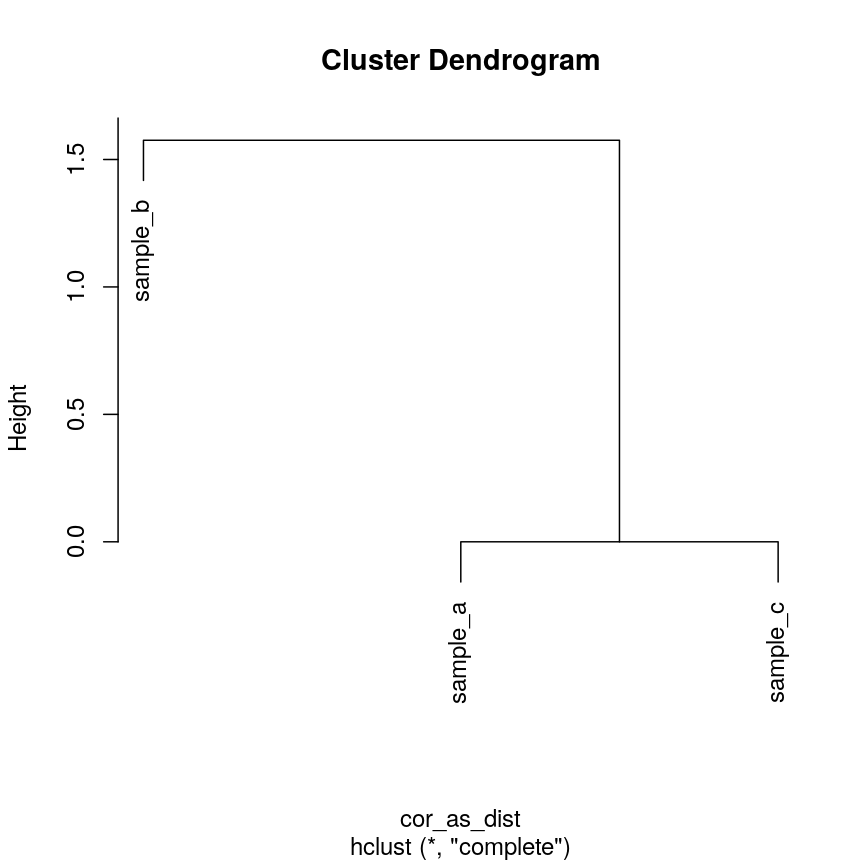

cor_as_dist <- as.dist(1 - cor(cor_example))

clust_cor <- hclust(cor_as_dist)

plot(clust_cor)

Dendrogram of the example simulated data clustered according to correlation.

Now, sample_a and sample_c that have identical patterns across the features

are grouped together, while sample_b is seen as distant because it has a

different pattern, even though its values are closer to sample_a.

Using your own distance function is often useful, especially if you have missing

or unusual data. It’s often possible to use correlation and other custom

dissimilarity measures in functions that perform hierarchical clustering, such as

pheatmap() and stats::heatmap():

## pheatmap allows you to select correlation directly

pheatmap(as.matrix(cor_example), clustering_distance_cols = "correlation")

Heatmaps of features versus samples clustered in the samples according to correlation.

## Using the built-in stats::heatmap

heatmap(

as.matrix(cor_example),

distfun = function(x) as.dist(1 - cor(t(x)))

)

Heatmaps of features versus samples clustered in the samples according to correlation.

Validating clusters

Now that we know how to carry out hierarchical clustering, how do we know how many clusters are optimal for the dataset?

Hierarchical clustering carried out on any dataset will produce clusters, even when there are no ‘real’ clusters in the data! We need to be able to determine whether identified clusters represent true groups in the data, or whether clusters have been identified just due to chance. In the last episode, we have introduced silhouette scores as a measure of cluster compactness and bootstrapping to assess cluster robustness. Such tests can be used to compare different clustering algorithms, for example, those fitted using different linkage methods.

Here, we introduce the Dunn index, which is a measure of cluster compactness. The Dunn index is the ratio of the smallest distance between any two clusters and to the largest intra-cluster distance found within any cluster. This can be seen as a family of indices which differ depending on the method used to compute distances. The Dunn index is a metric that penalises clusters that have larger intra-cluster variance and smaller inter-cluster variance. The higher the Dunn index, the better defined the clusters.

Let’s calculate the Dunn index for clustering carried out on the

methyl_mat dataset using the clValid package.

## calculate dunn index

## (ratio of the smallest distance between obs not in the same cluster

## to the largest intra-cluster distance)

library("clValid")

## calculate euclidean distance between points

distmat <- dist(methyl_mat)

clust <- hclust(distmat, method = "complete")

plot(clust)

Dendrogram for clustering of methylation data.

cut <- cutree(clust, h = 50)

## retrieve Dunn's index for given matrix and clusters

dunn(distance = distmat, cut)

[1] 0.8823501

The value of the Dunn index has no meaning in itself, but is used to compare between sets of clusters with larger values being preferred.

Challenge 4

Examine how changing the

horkarguments incutree()affects the value of the Dunn index.Solution:

library("clValid") distmat <- dist(methyl_mat) clust <- hclust(distmat, method = "complete") #Varying h ## Obtaining the clusters cut_h_20 <- cutree(clust, h = 20) cut_h_30 <- cutree(clust, h = 30) ## How many clusters? length(table(cut_h_20))[1] 36length(table(cut_h_30))[1] 14dunn(distance = distmat, cut_h_20)[1] 1.61789dunn(distance = distmat, cut_h_30)[1] 0.8181846#Varying k ## Obtaining the clusters cut_k_10 <- cutree(clust, k = 10) cut_k_5 <- cutree(clust, k = 5) ## How many clusters? length(table(cut_k_5))[1] 5length(table(cut_k_10))[1] 10dunn(distance = distmat, cut_k_5)[1] 0.8441528dunn(distance = distmat, cut_k_10)[1] 0.7967132

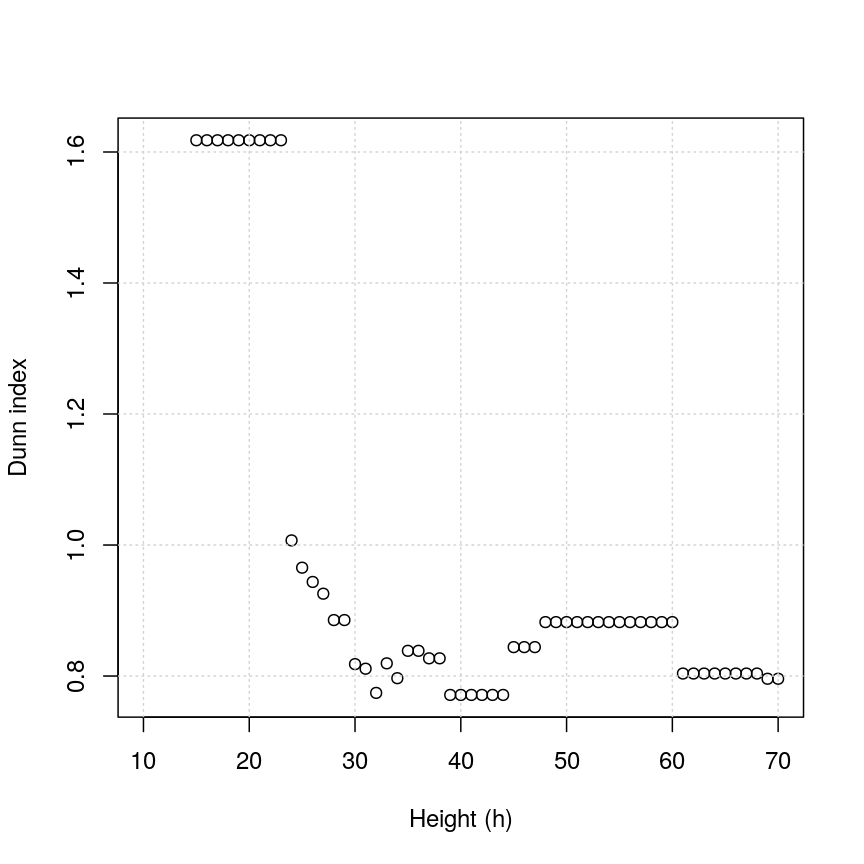

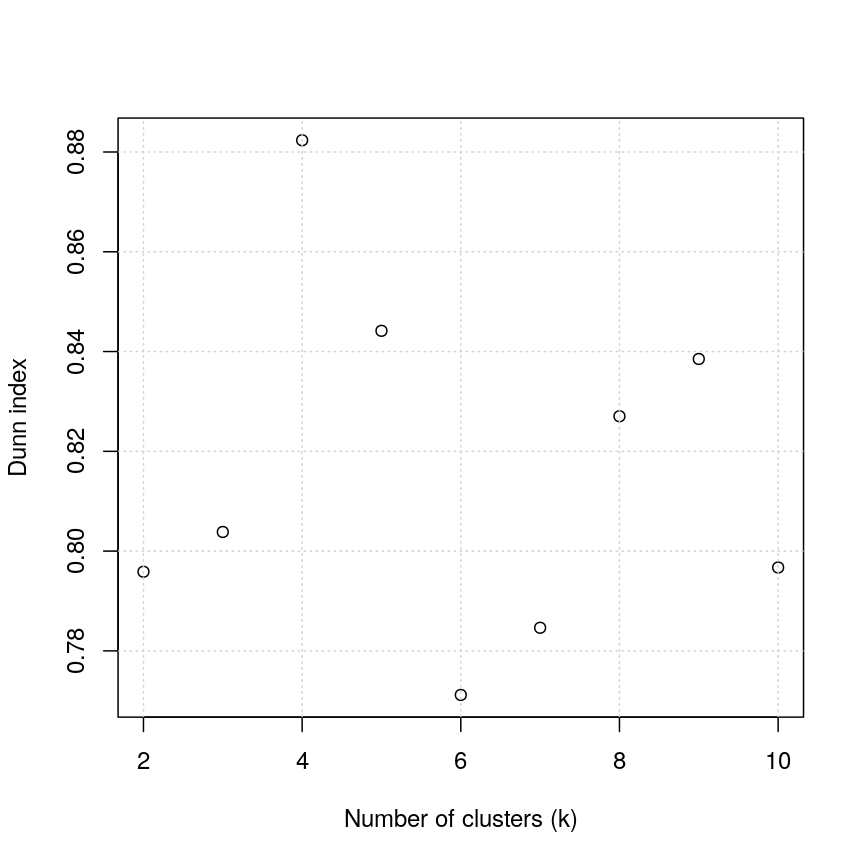

The figures below show in a more systematic way how changing the values of k and

h using cutree() affect the Dunn index.

h_seq <- 70:10

h_dunn <- sapply(h_seq, function(x) dunn(distance = distmat, cutree(clust, h = x)))

k_seq <- seq(2, 10)

k_dunn <- sapply(k_seq, function(x) dunn(distance = distmat, cutree(clust, k = x)))

plot(h_seq, h_dunn, xlab = "Height (h)", ylab = "Dunn index")

grid()

Dunn index versus cut height for methylation data.

You can see that at low values of h, the Dunn index can be high. But this

is not very useful - cutting the given tree at a low h value like 15 leads to allmost all observations

ending up each in its own cluster. More relevant is the second maximum in the plot, around h=55.

Looking at the dendrogram, this corresponds to k=4.

plot(k_seq, k_dunn, xlab = "Number of clusters (k)", ylab = "Dunn index")

grid()

Scatter plot of Dunn index versus the number of clusters for the methylation data.

For the given range of k values explored, we obtain the highest Dunn index with k=4.

This is in agreement with the previous plot.

There have been criticisms of the use of the Dunn index in validating clustering results, due to its high sensitivity to noise in the dataset. An alternative is to use silhouette scores (see the k-means clustering episode).

As we said before (see previous episode), clustering is a non-trivial task. It is important to think about the nature of your data and your expectations rather than blindly using a some algorithm for clustering or cluster validation.

Further reading

- Dunn, J. C. (1974) Well-separated clusters and optimal fuzzy partitions. Journal of Cybernetics 4(1):95–104.

- Halkidi, M., Batistakis, Y. & Vazirgiannis, M. (2001) On clustering validation techniques. Journal of Intelligent Information Systems 17(2/3):107-145.

- James, G., Witten, D., Hastie, T. & Tibshirani, R. (2013) An Introduction to Statistical Learning with Applications in R. Section 10.3.2 (Hierarchical Clustering).

- Understanding the concept of Hierarchical clustering Technique. towards data science blog.

Key Points

Hierarchical clustering uses an algorithm to group similar data points into clusters. A dendrogram is used to plot relationships between clusters (using the

hclust()function in R).Hierarchical clustering differs from k-means clustering as it does not require the user to specify expected number of clusters

The distance (dissimilarity) matrix can be calculated in various ways, and different clustering algorithms (linkage methods) can affect the resulting dendrogram.

The Dunn index can be used to validate clusters using the original dataset.