All in One View

Content from Setting the Scene

Last updated on 2026-02-24 | Edit this page

Estimated time: 15 minutes

Overview

Questions

- What are we teaching in this course?

- What motivated the selection of topics covered in the course?

Objectives

- Setting the scene and expectations

- Making sure everyone has all the necessary software installed

Introduction

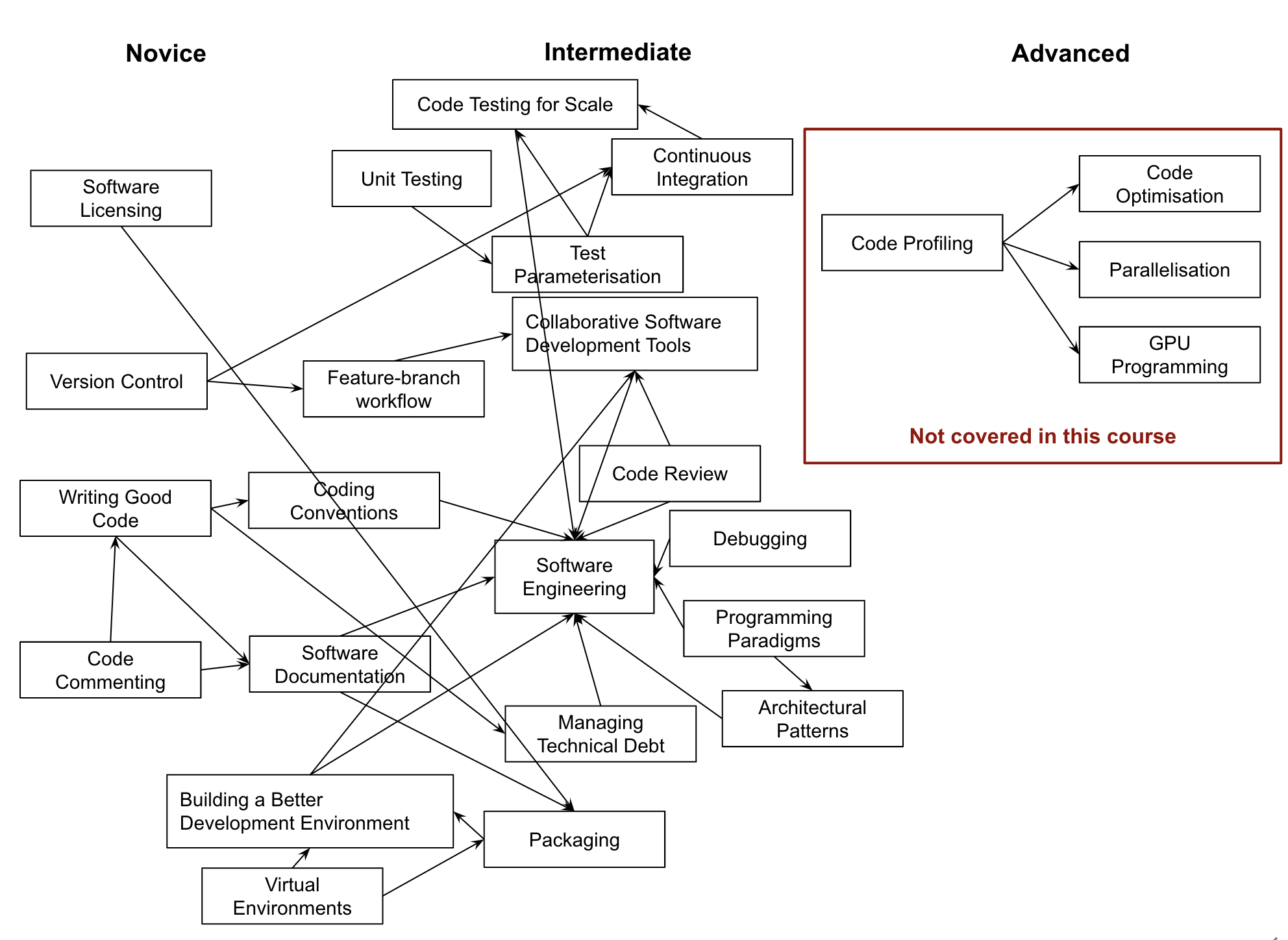

So, you have gained basic software development skills either by self-learning or attending, e.g., a novice Software Carpentry course. You have been applying those skills for a while by writing code to help with your work and you feel comfortable developing code and troubleshooting problems. However, your software has now reached a point where there is too much code to be kept in one script. Perhaps it is involving more researchers (developers) and users, and more collaborative development effort is needed to add new functionality while ensuring previous development efforts remain functional and maintainable.

This course provides the next step in software development - it teaches some intermediate software engineering skills and best practices to help you restructure existing code and design more robust, reusable and maintainable code, automate the process of testing and verifying software correctness and support collaborations with others in a way that mimics a typical software development process within a team.

The course uses a number of different software development tools and techniques interchangeably as you would in a real life. We had to make some choices about topics and tools to teach here, based on established best practices, ease of tool installation for the audience, length of the course and other considerations. Tools used here are not mandated though: alternatives exist and we point some of them out along the way. Over time, you will develop a preference for certain tools and programming languages based on your personal taste or based on what is commonly used by your group, collaborators or community. However, the topics covered should give you a solid foundation for working on software development in a team and producing high quality software that is easier to develop and sustain in the future by yourself and others. Skills and tools taught here, while Python-specific, are transferable to other similar tools and programming languages.

The course is organised into the following sections.

flowchart LR

accTitle: Overview of sections in the lesson

accDescr {Course overview diagram. Arrows connect the following boxed

text in order: 1) Setting up software environment 2) Verifying software

correctness 3) Software development as a process 4) Collaborative

development for reuse 5) Managing software over its lifetime.}

A(1. Setting up software environment) --> B(2. Verifying software correctness)

B --> C(3. Software development as a process)

C --> D(4. Collaborative development for reuse)

D --> E(5. Managing software over its lifetime)Section 1: Setting up Software Environment

In the first section we are going to set up our working environment and familiarise ourselves with various tools and techniques for software development in a typical collaborative code development cycle:

- Virtual environments for isolating a project from other projects developed on the same machine

- Command line for running code and interacting with the command line tool Git for

- Integrated Development Environment for code development, testing and debugging, Version control and using code branches to develop new features in parallel,

- GitHub (central and remote source code management platform supporting version control with Git) for code backup, sharing and collaborative development, and

- Python code style guidelines to make sure our code is documented, readable and consistently formatted.

Section 2: Verifying Software Correctness at Scale

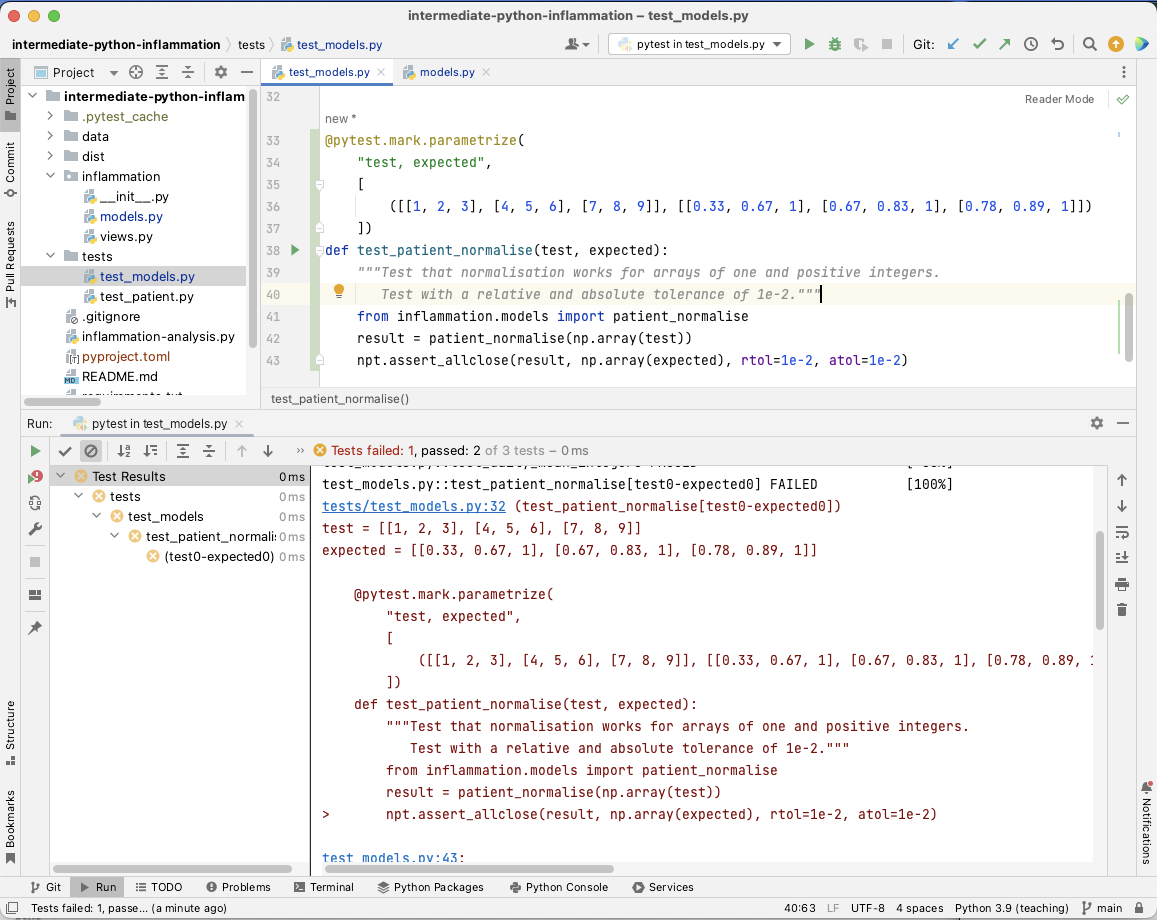

Once we know our way around different code development tools, techniques and conventions, in this section we learn:

- how to set up a test framework and write tests to verify the behaviour of our code is correct, and

- how to automate and scale testing with Continuous Integration (CI) using GitHub Actions (a CI service available on GitHub).

Section 3: Software Development as a Process

In this section, we step away from writing code for a bit to look at software from a higher level as a process of development and its components:

- different types of software requirements and designing and architecting software to meet them, how these fit within the larger software development process and what we should consider when testing against particular types of requirements.

- different programming and software design paradigms, each representing a slightly different way of thinking about, structuring and implementing the code.

Section 4: Collaborative Software Development for Reuse

Advancing from developing code as an individual, in this section you will start working with your fellow learners on a group project (as you would do when collaborating on a software project in a team), and learn:

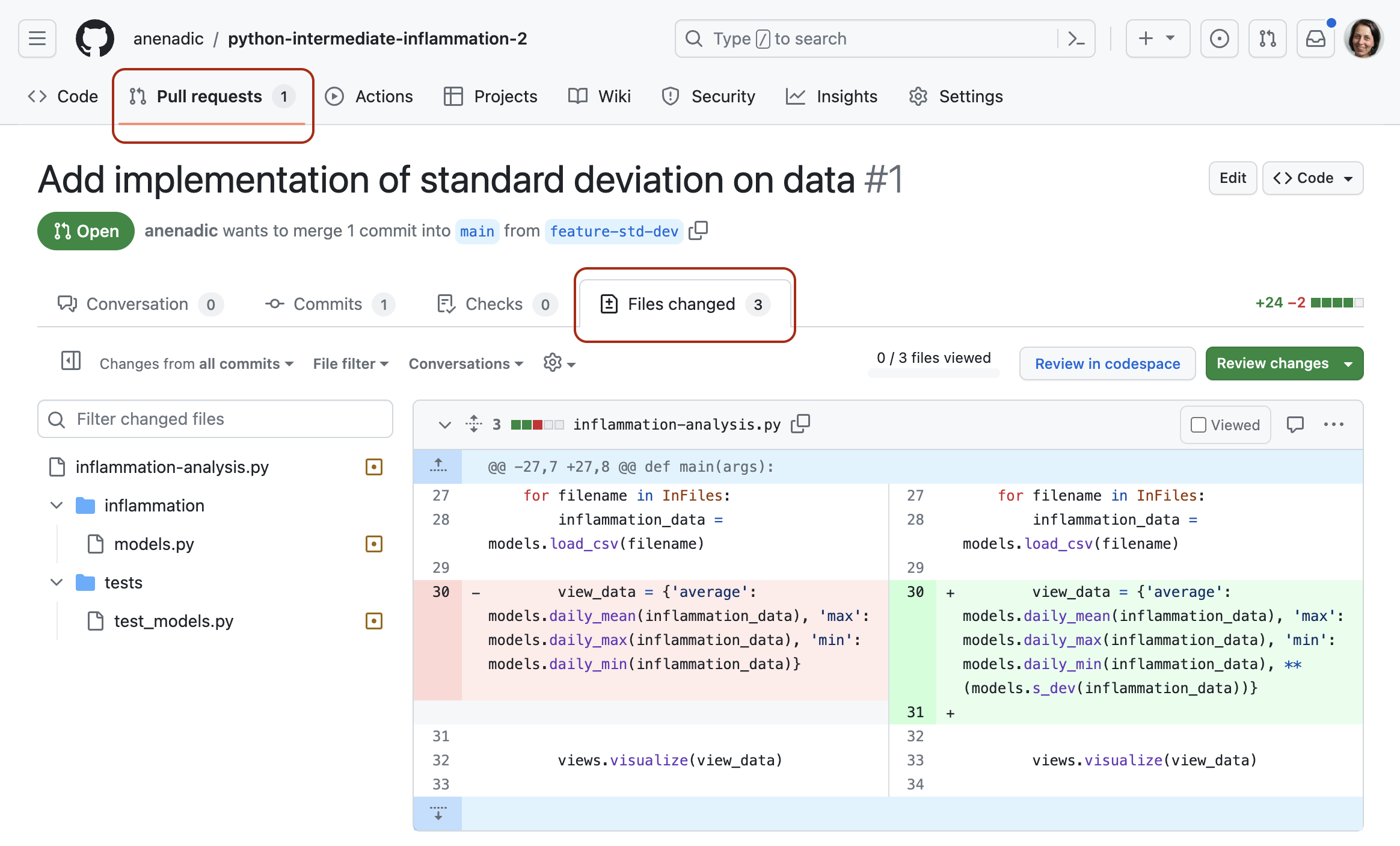

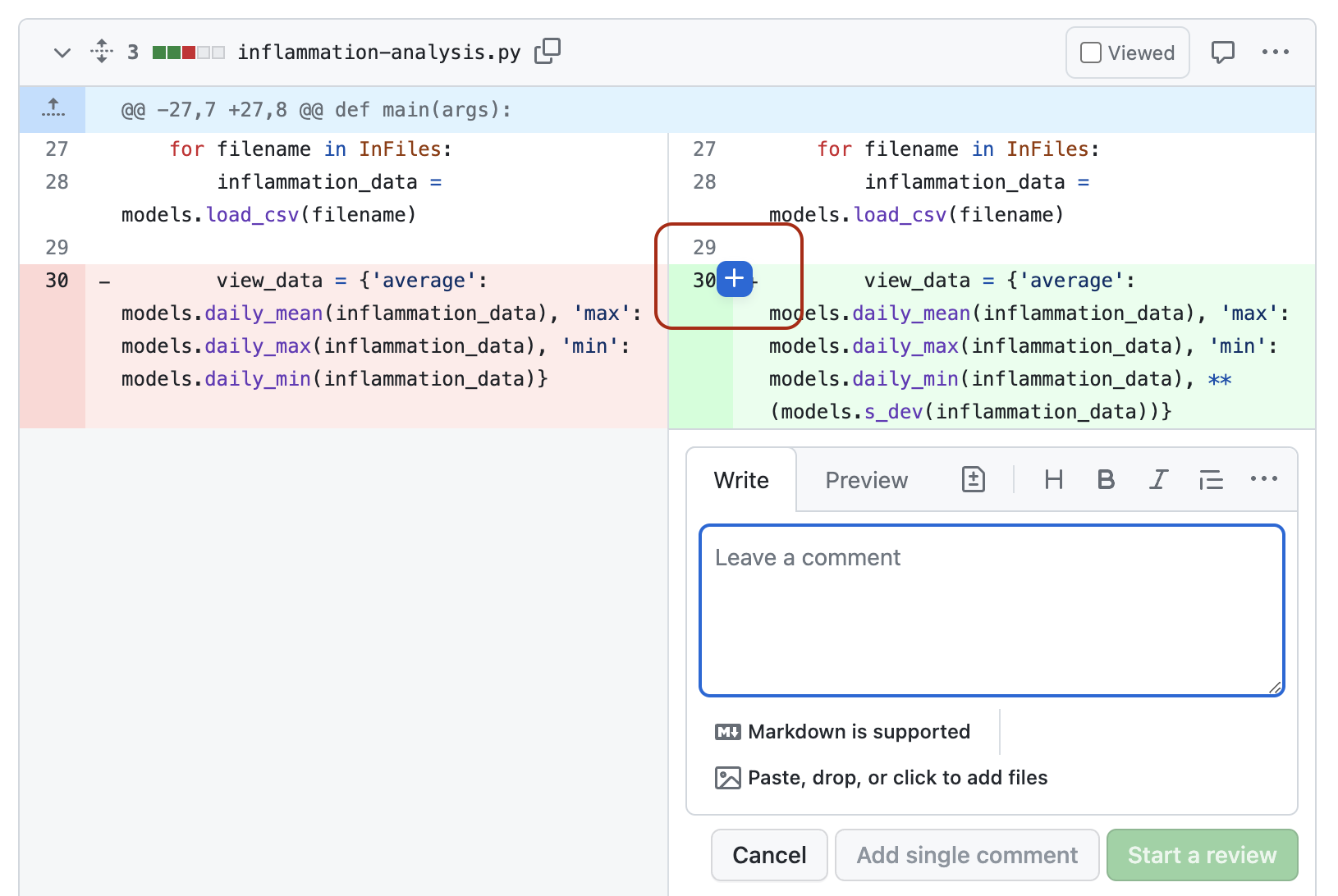

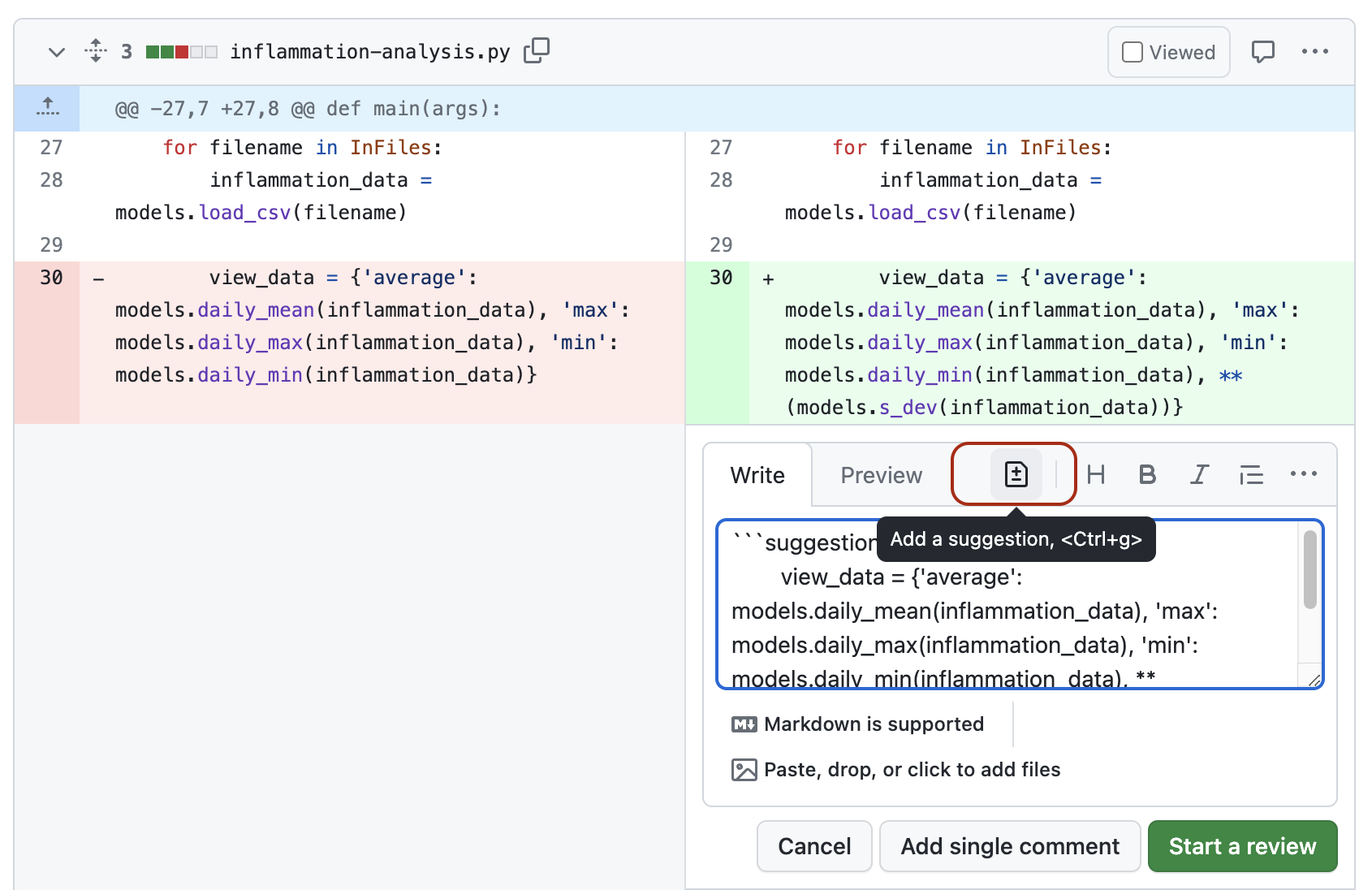

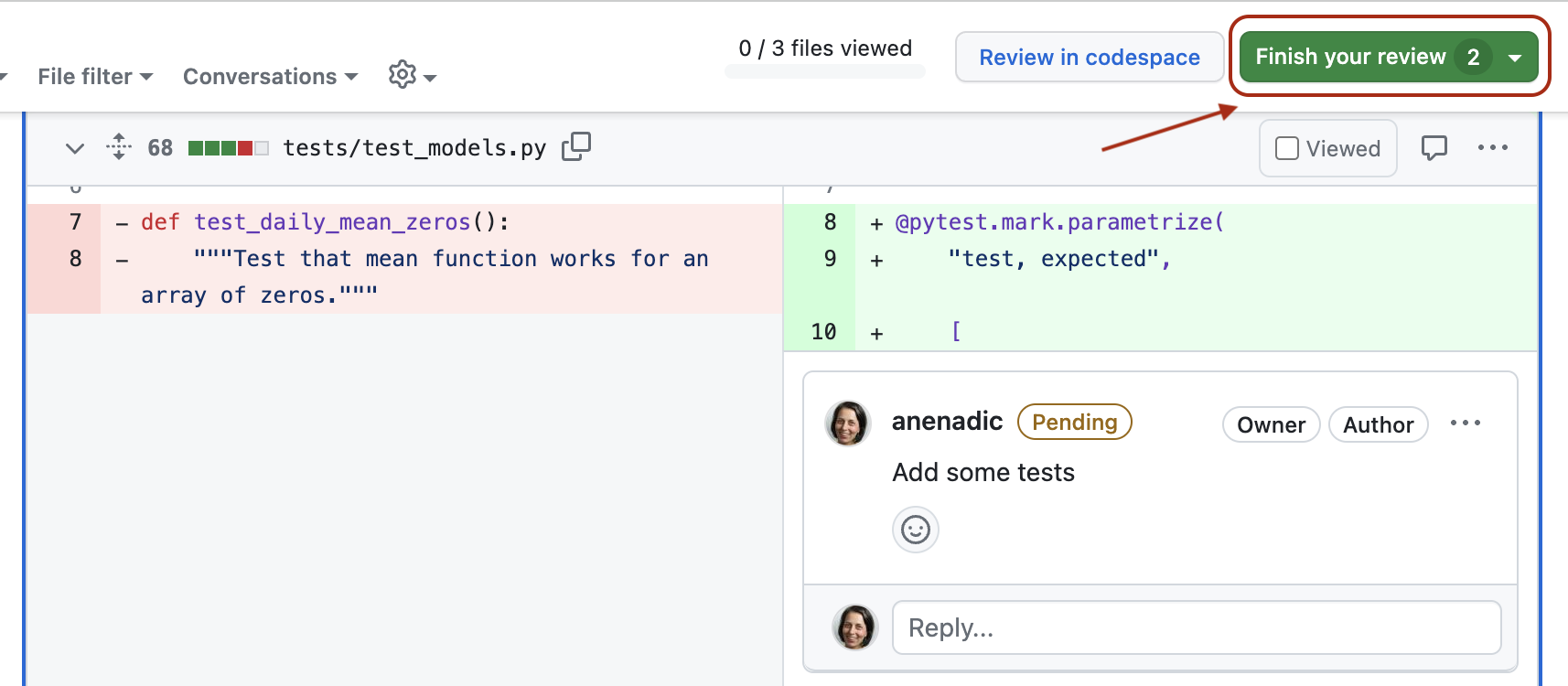

- how code review can help improve team software contributions, identify wider codebase issues, and increase codebase knowledge across a team.

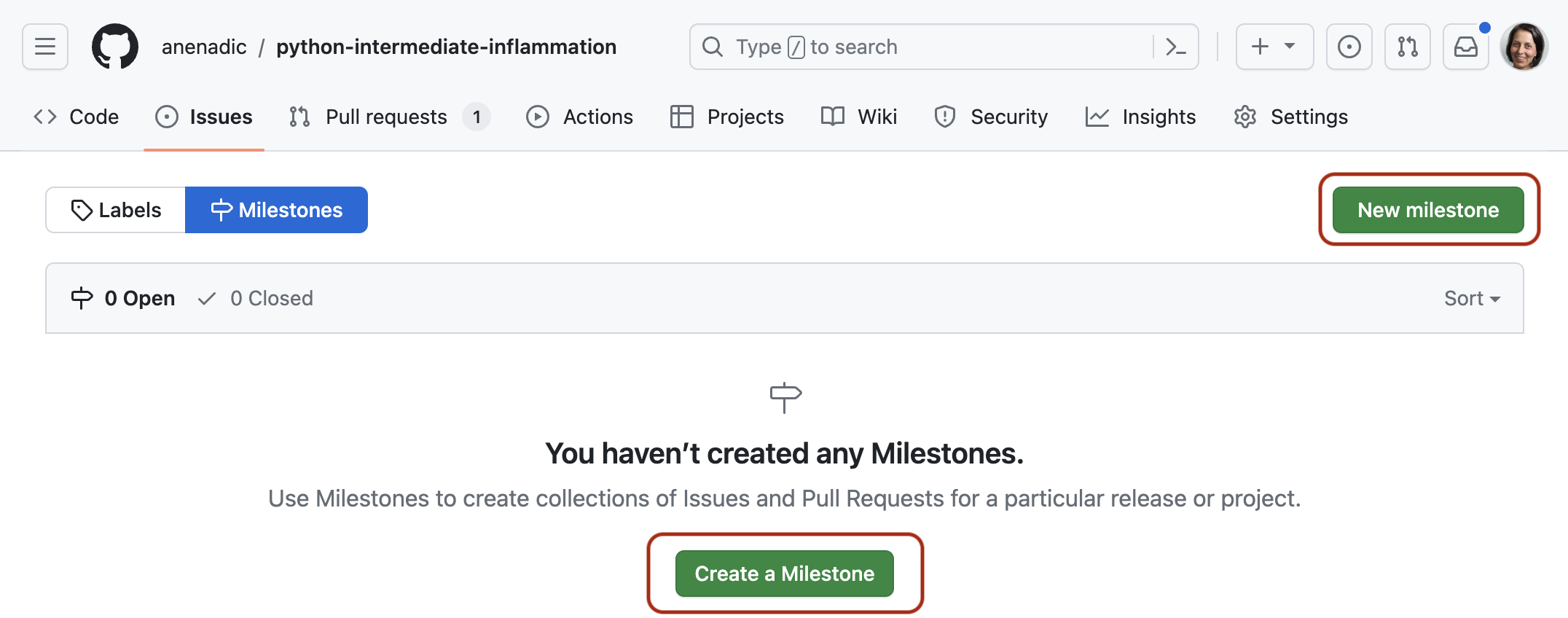

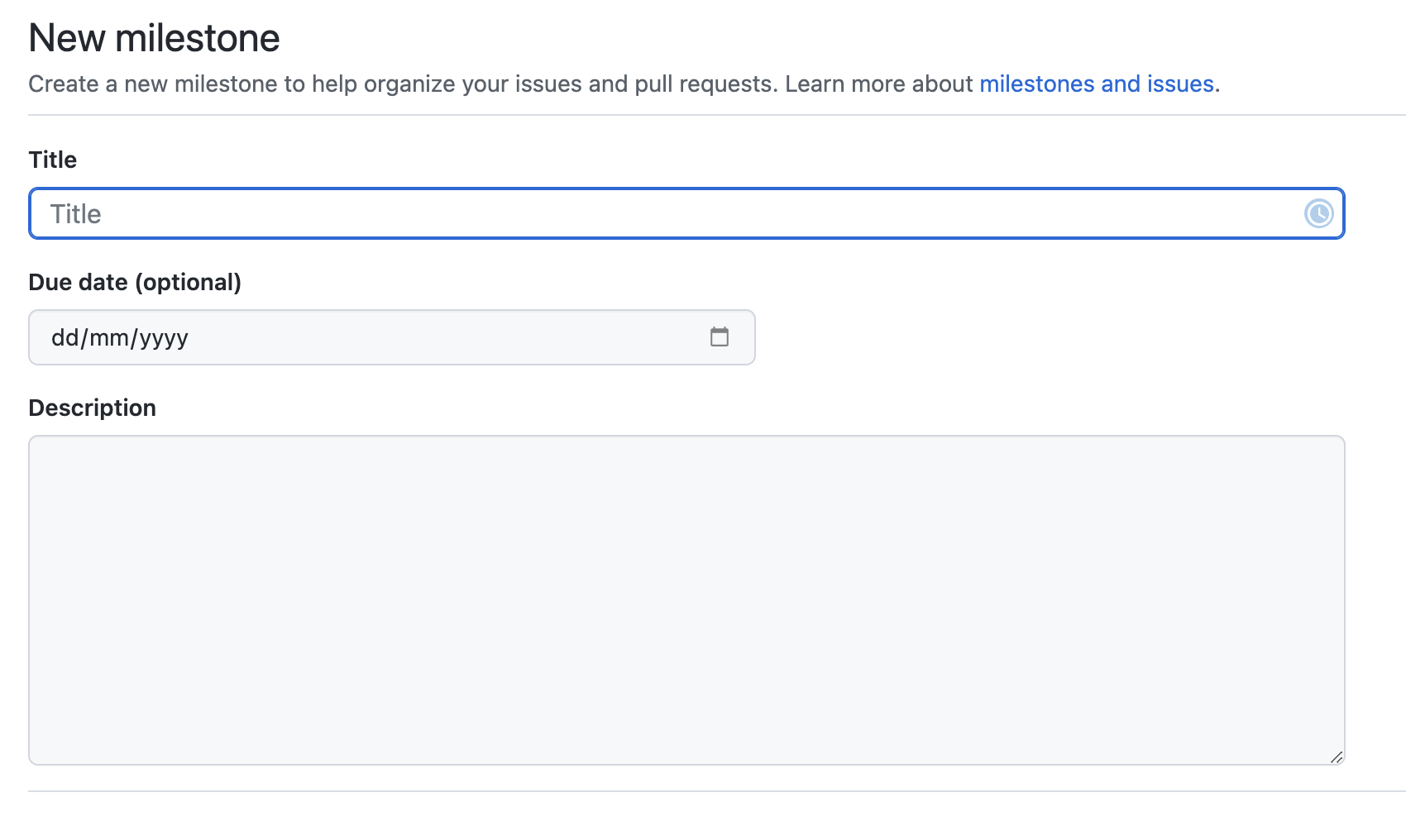

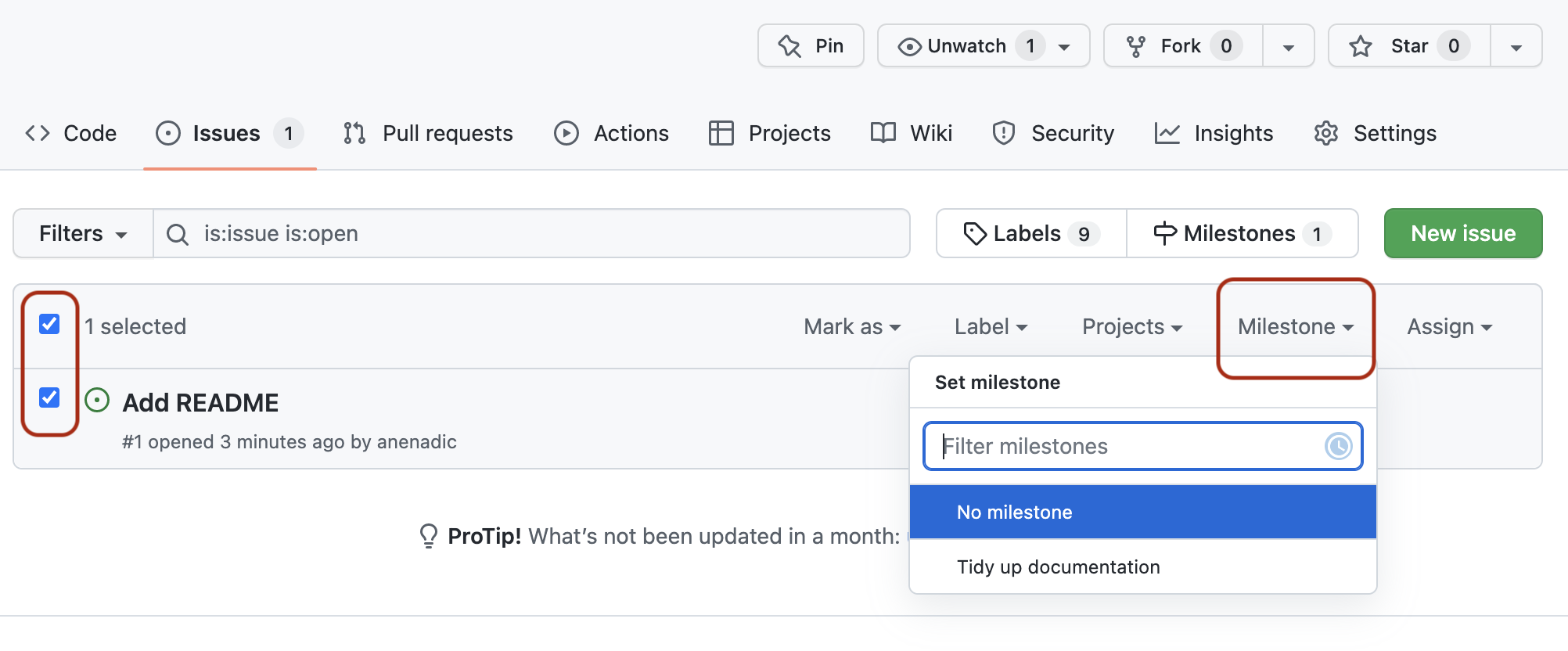

- what we can do to prepare our software for further development and reuse, by adopting best practices in documenting, licencing, tracking issues, supporting your software, and packaging software for release to others.

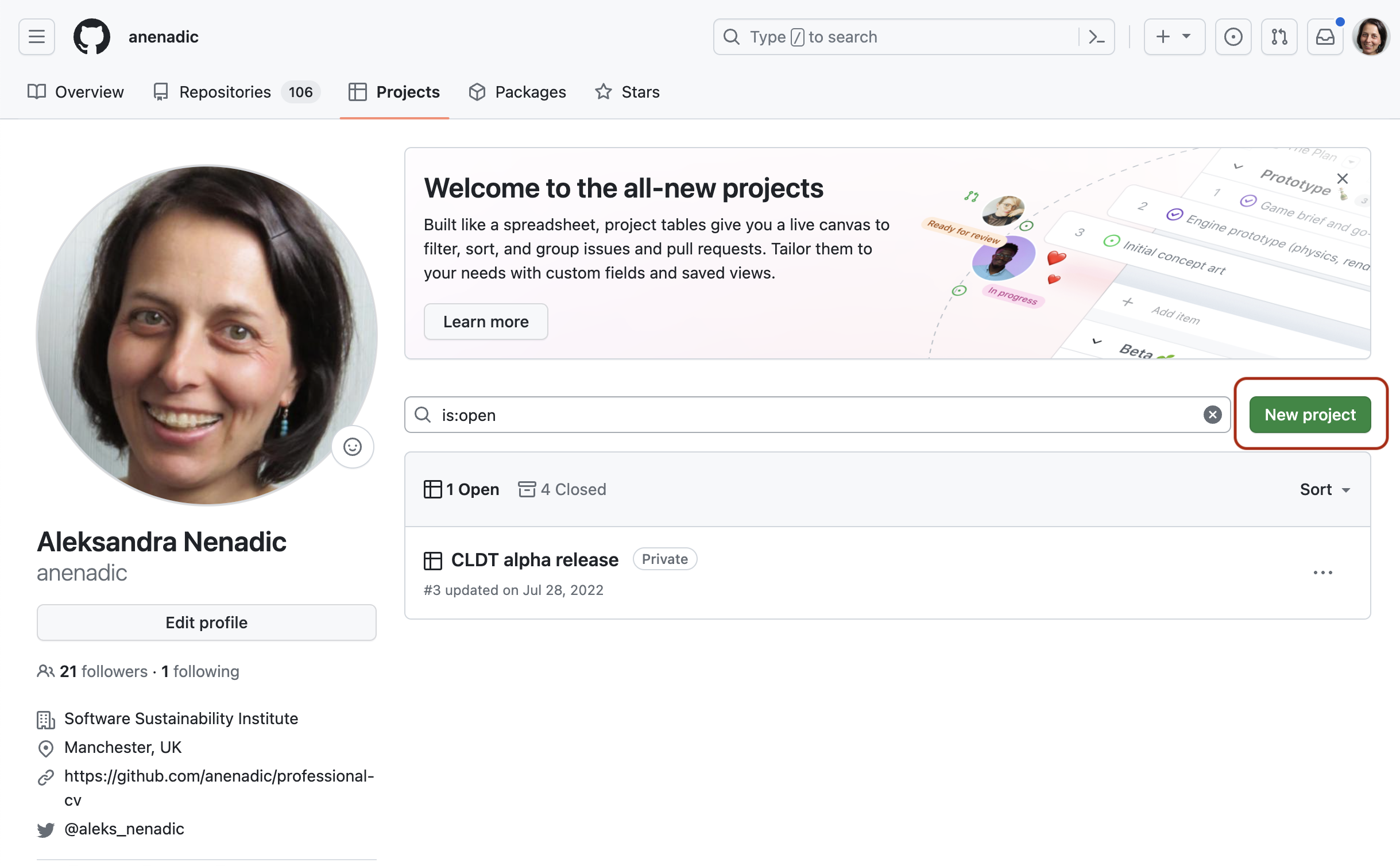

Section 5: Managing and Improving Software Over Its Lifetime

Finally, we move beyond just software development to managing a collaborative software project and will look into:

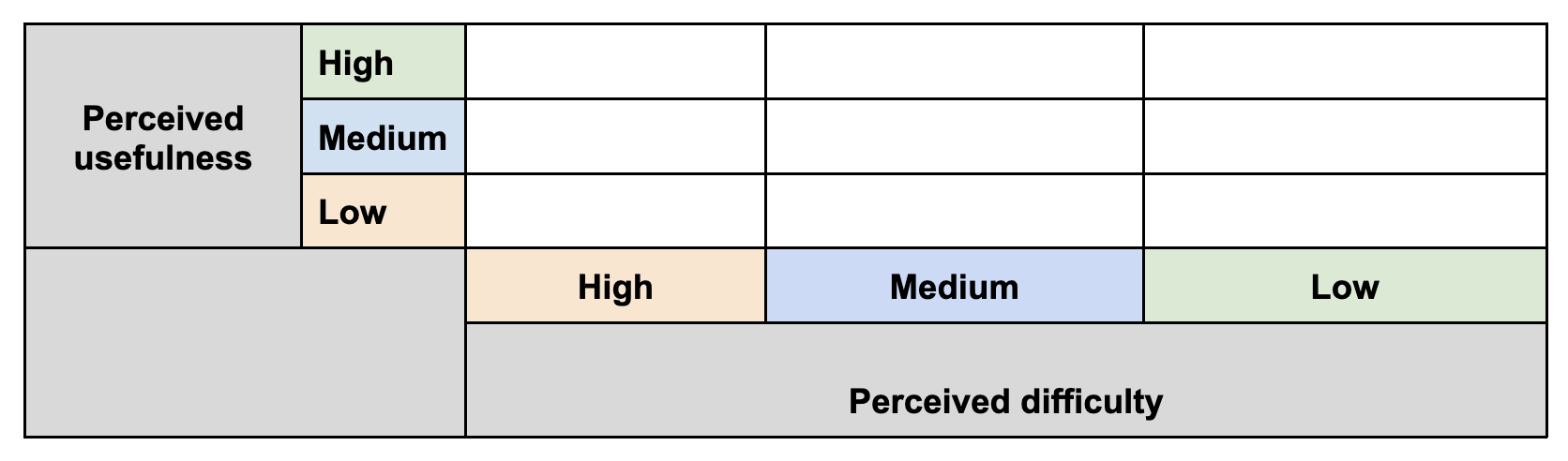

- internal planning and prioritising tasks for future development using agile techniques and effort estimation, management of internal and external communication, and software improvement through feedback.

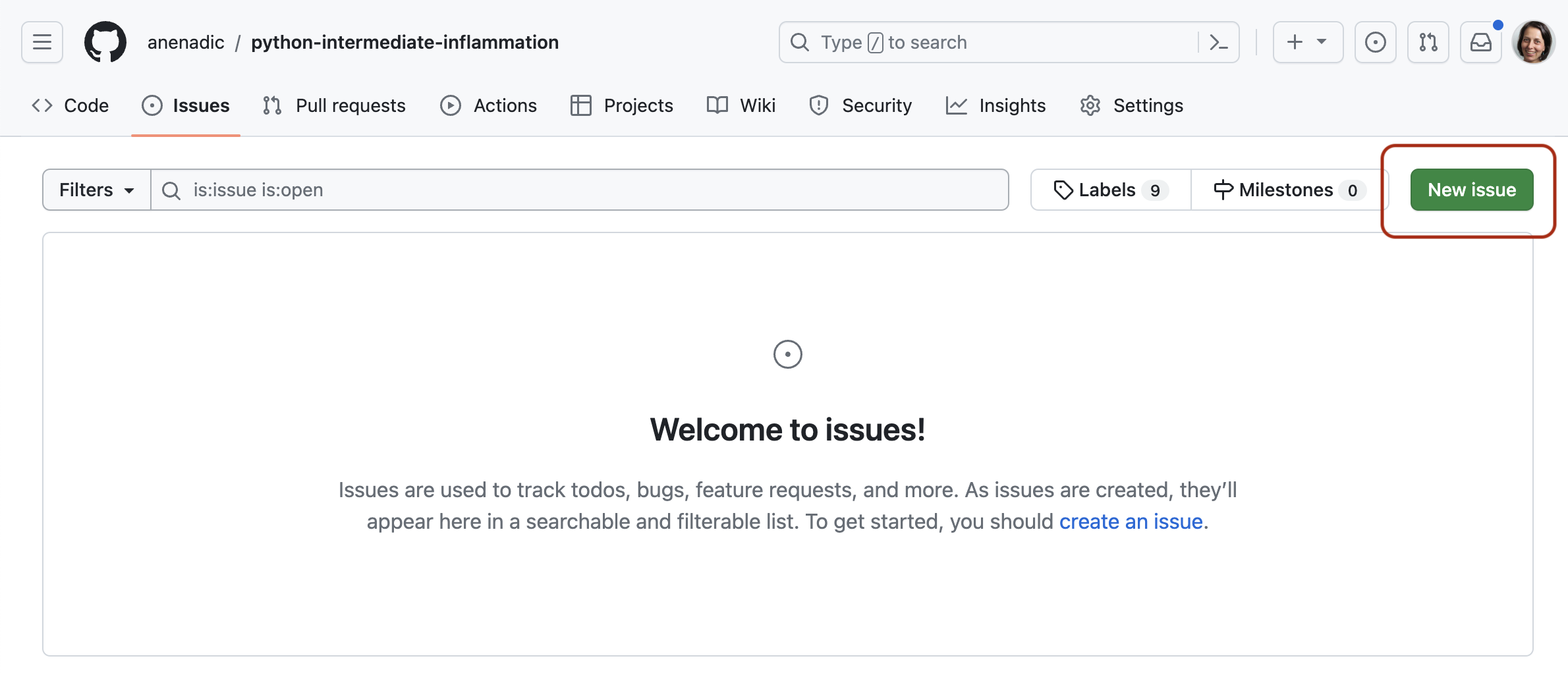

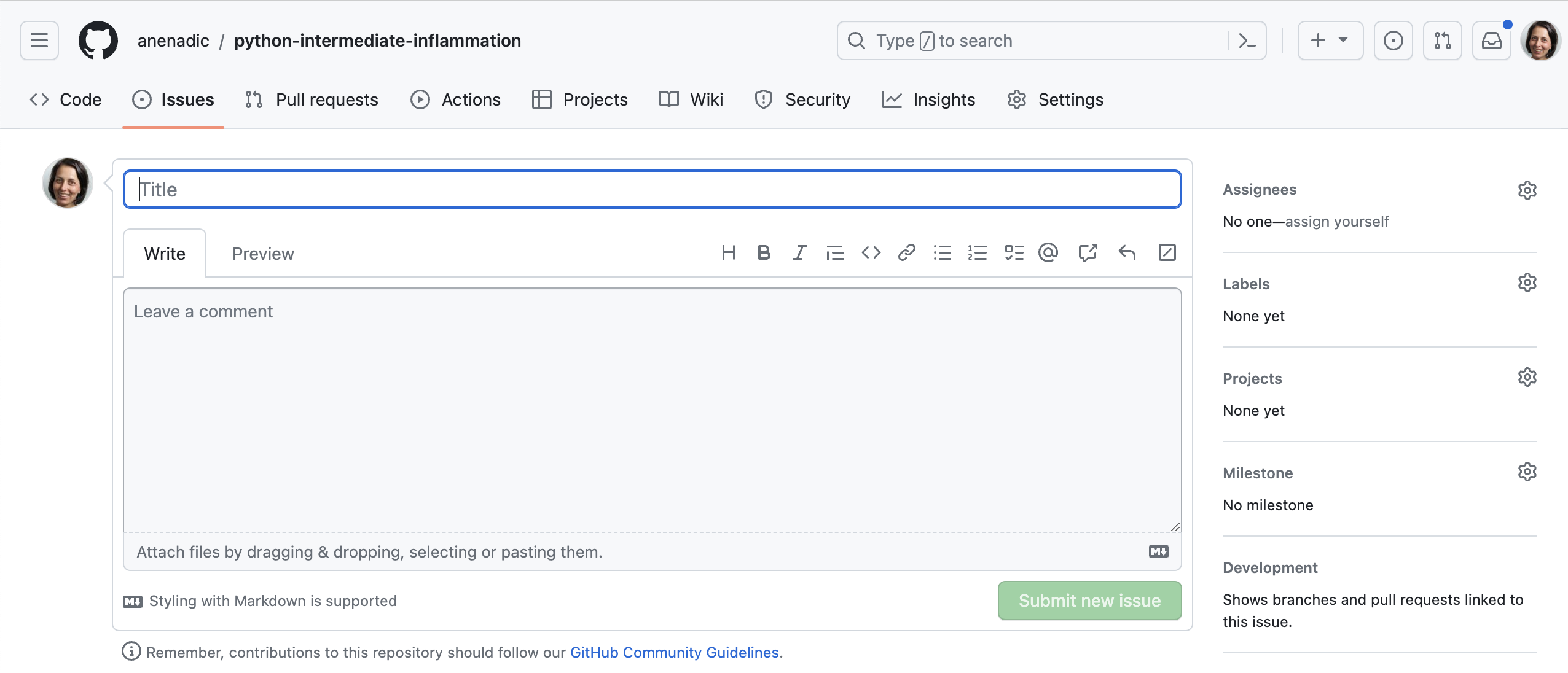

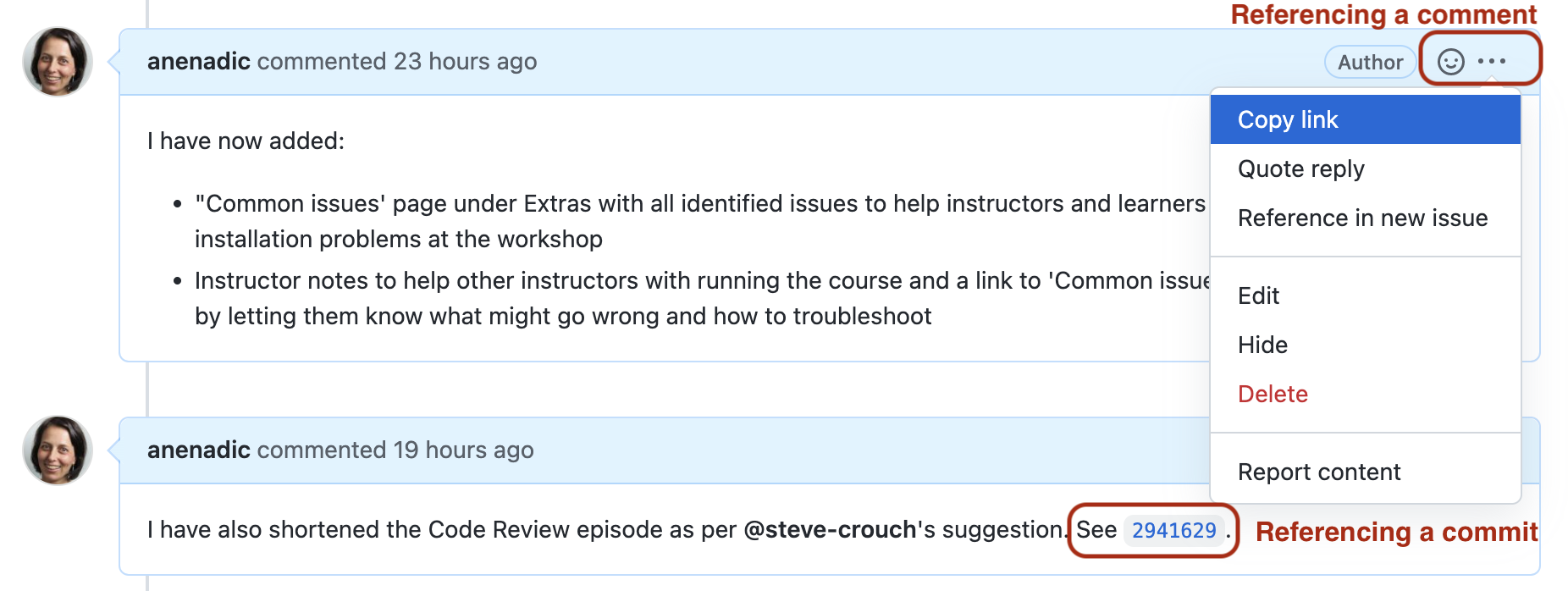

- how to adopt a critical mindset not just towards our own software project but also to assess other people’s software to ensure it is suitable for us to reuse, identify areas for improvement, and how to use GitHub to register good quality issues with a particular code repository.

Before We Start

A few notes before we start.

Prerequisite Knowledge

This is an intermediate-level software development course intended for people who have already been developing code in Python (or other languages) and applying it to their own problems after gaining basic software development skills. So, it is expected for you to have some prerequisite knowledge on the topics covered, as outlined at the beginning of the lesson. Check out this quiz to help you test your prior knowledge and determine if this course is for you.

Setup, Common Issues & Fixes

Have you setup and installed all the tools and accounts required for this course? Check the list of common issues, fixes & tips if you experience any problems running any of the tools you installed - your issue may be solved there.

Compulsory and Optional Exercises

Exercises are a crucial part of this course and the narrative. They are used to reinforce the points taught and give you an opportunity to practice things on your own. Please do not be tempted to skip exercises as that will get your local software project out of sync with the course and break the narrative. Exercises that are clearly marked as “optional” can be skipped without breaking things but we advise you to go through them too, if time allows. All exercises contain solutions but, wherever possible, try and work out a solution on your own.

Outdated Screenshots

Throughout this lesson we will make use and show content from Graphical User Interface (GUI) tools such as Integrated Development Environments (IDEs) and GitHub. These are evolving tools and platforms, always adding new features and new visual elements. Screenshots in the lesson may then become out-of-sync, refer to or show content that no longer exists or is different to what you see on your machine. If during the lesson you find screenshots that no longer match what you see or have a big discrepancy with what you see, please open an issue describing what you see and how it differs from the lesson content. Feel free to add as many screenshots as necessary to clarify the issue.

- This lesson focuses on core, intermediate skills covering the whole software development life-cycle that will be of most use to anyone working collaboratively on code.

- For code development in teams - you need more than just the right tools and languages. You need a strategy (best practices) for how you’ll use these tools as a team.

- The lesson follows on from the novice Software Carpentry lesson, but this is not a prerequisite for attending as long as you have some basic Python, command line and Git skills and you have been using them for a while to write code to help with your work.

Content from Section 1: Setting Up Environment For Collaborative Code Development

Last updated on 2026-02-24 | Edit this page

Estimated time: 10 minutes

Overview

Questions

- What tools are needed to collaborate on code development effectively?

Objectives

- Provide an overview of all the different tools that will be used in this course.

The first section of the course is dedicated to setting up your environment for collaborative software development and introducing the project that we will be working on throughout the course. In order to build working (research) software efficiently and to do it in collaboration with others rather than in isolation, you will have to get comfortable with using a number of different tools interchangeably as they will make your life a lot easier. There are many options when it comes to deciding which software development tools to use for your daily tasks - we will use a few of them in this course that we believe make a difference. There are sometimes multiple tools for the job - we select one to use but mention alternatives too. As you get more comfortable with different tools and their alternatives, you will select the one that is right for you based on your personal preferences or based on what your collaborators are using.

flowchart LR

accDescr {Topics on effective collaborating on code development covered in

the current section: virtual development environments, version control &

sharing code, writing readable code & code standards}

A("`1. Setting up software environment

- Isolating & running code: command line, virtual environment & IDE

- Version control and sharing code: Git & GitHub

- Well-written & readable code: PEP8`")

A --> B(2. Verifying software correctness)

B --> C(3. Software development as a process)

C --> D(4. Collaborative development for reuse)

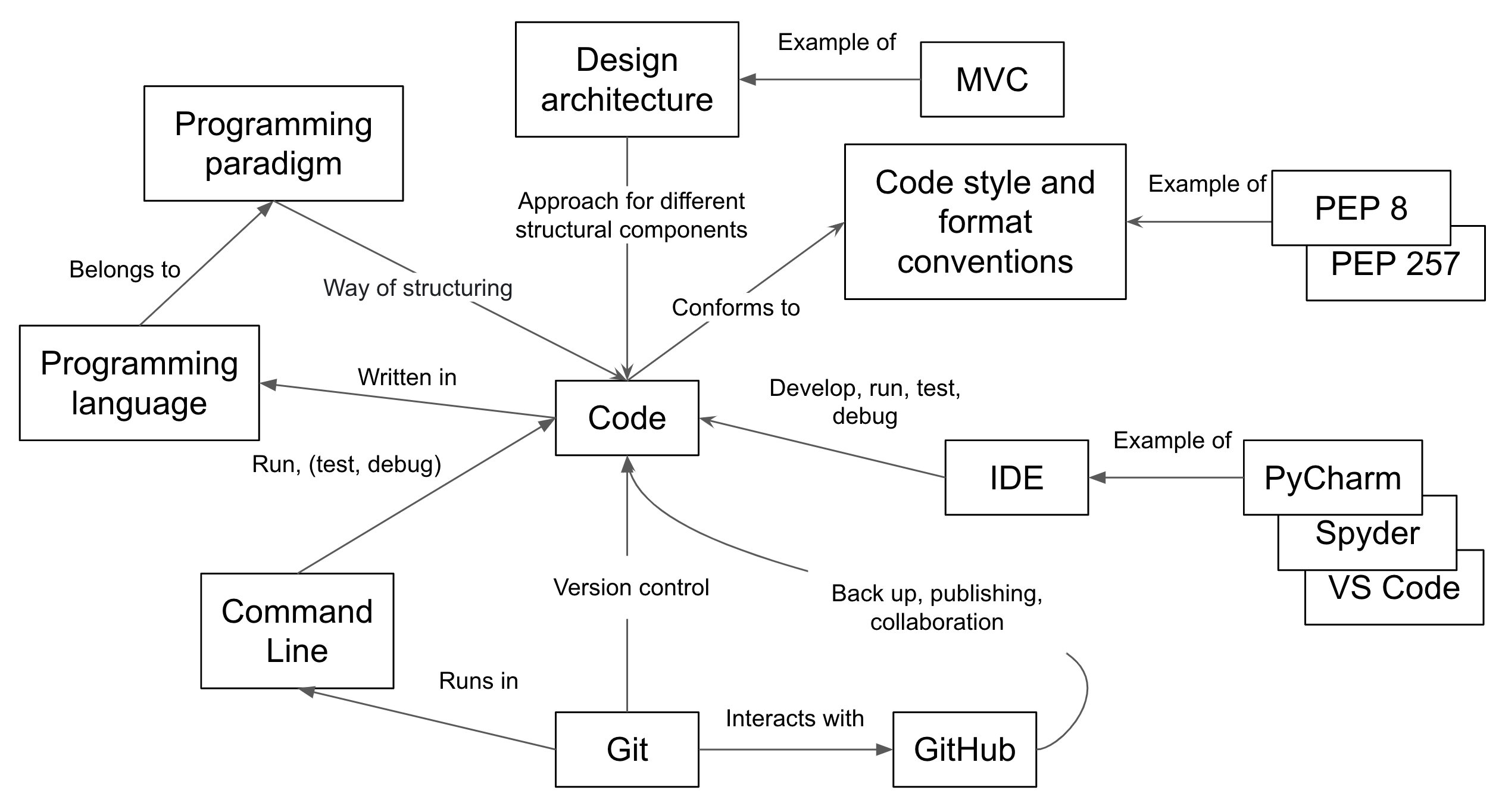

D --> E(5. Managing software over its lifetime)Here is an overview of the tools we will be using.

Setup, Common Issues & Fixes

Have you setup and installed all the tools and accounts required for this course? Check the list of common issues, fixes & tips if you experience any problems running any of the tools you installed - your issue may be solved there.

Command Line & Python Virtual Development Environment

We will use the command line

(also known as the command line shell/prompt/console) to run our Python

code and interact with the version control tool Git and software sharing

platform GitHub. We will also use command line tools venv

and pip to set

up a Python virtual development environment and isolate our software

project from other Python projects we may work on.

Note: some Windows users experience the issue

where Python hangs from Git Bash (i.e. typing python causes

it to just hang with no error message or output) - see the solution to

this issue.

Integrated Development Environment (IDE)

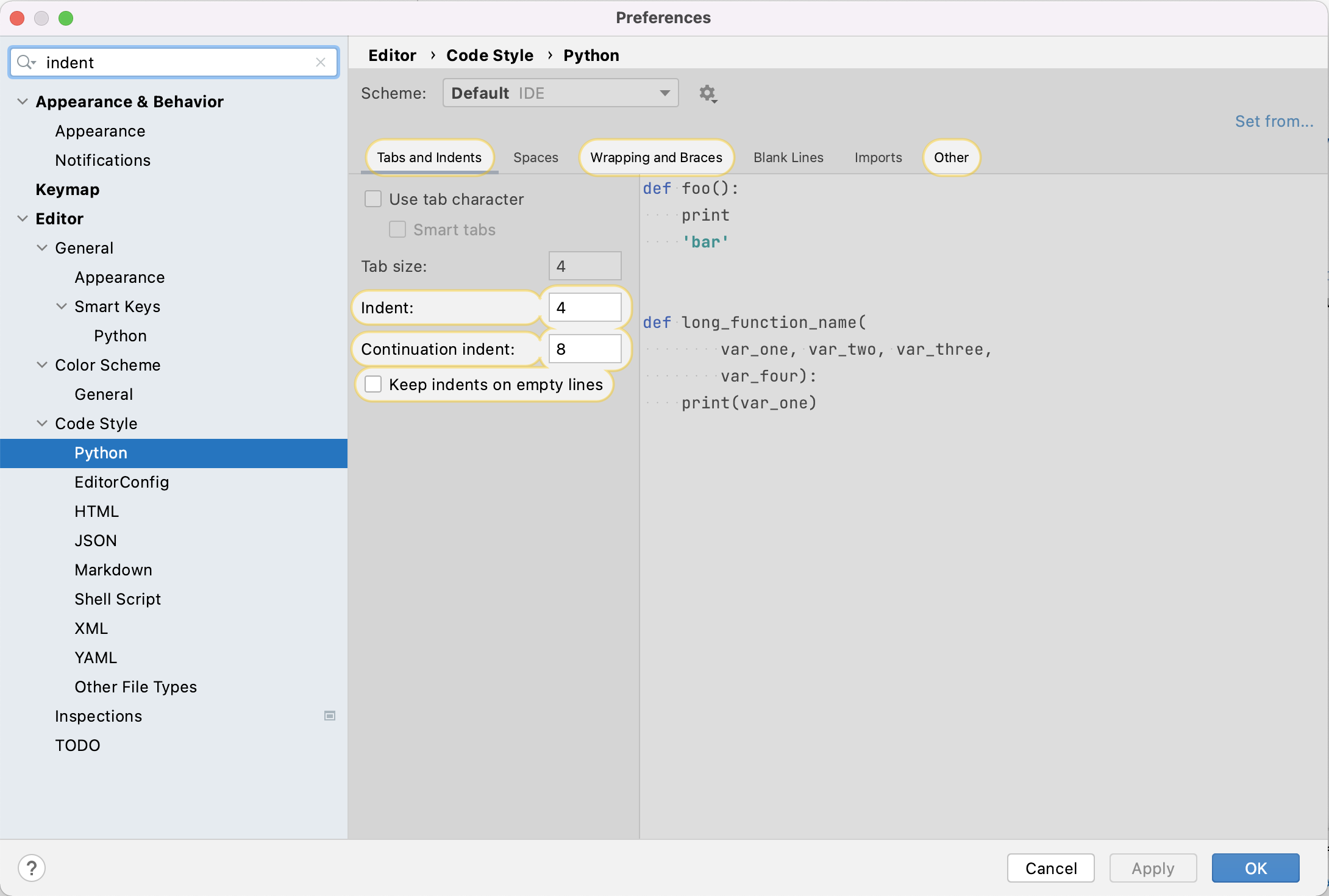

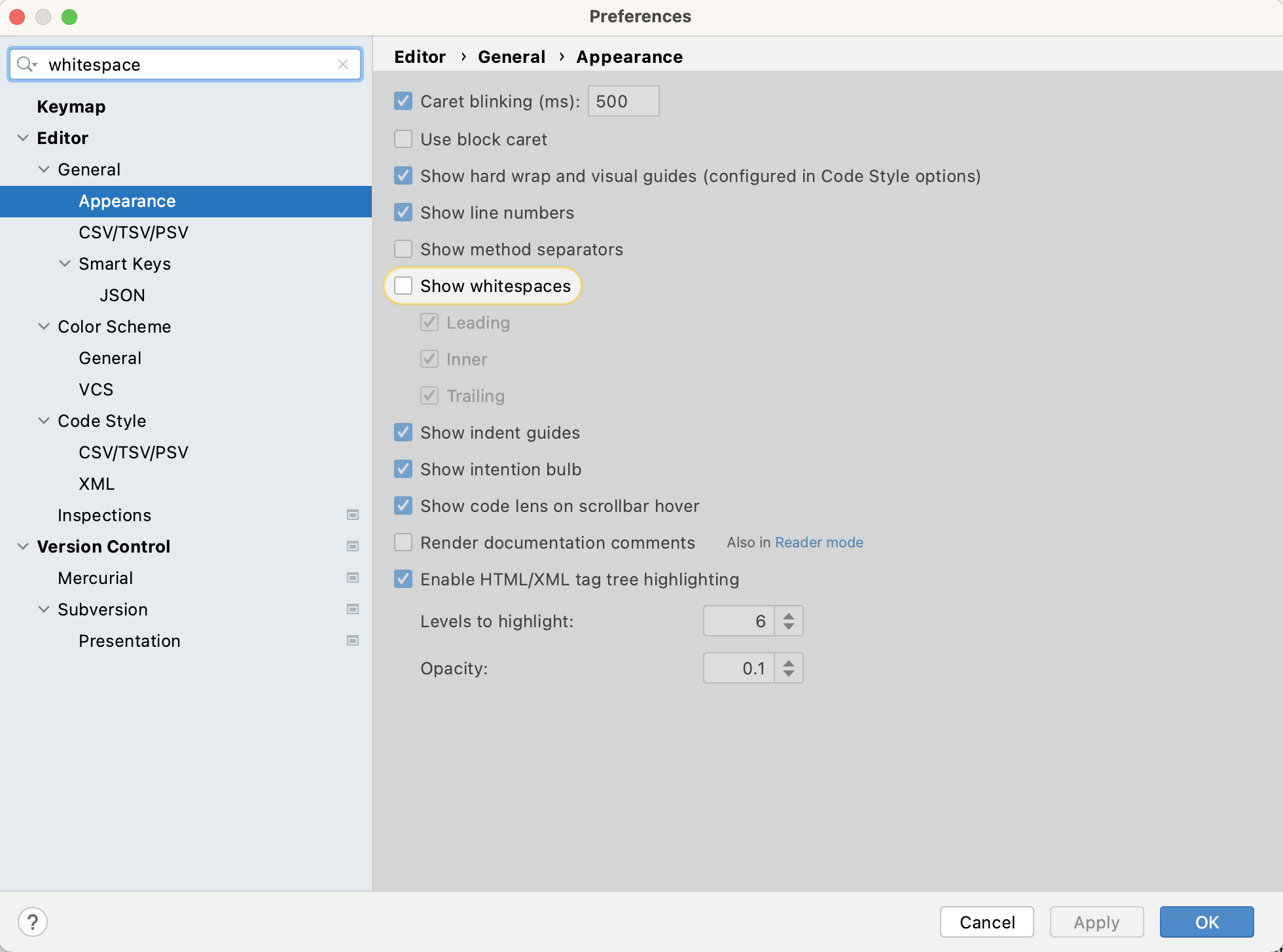

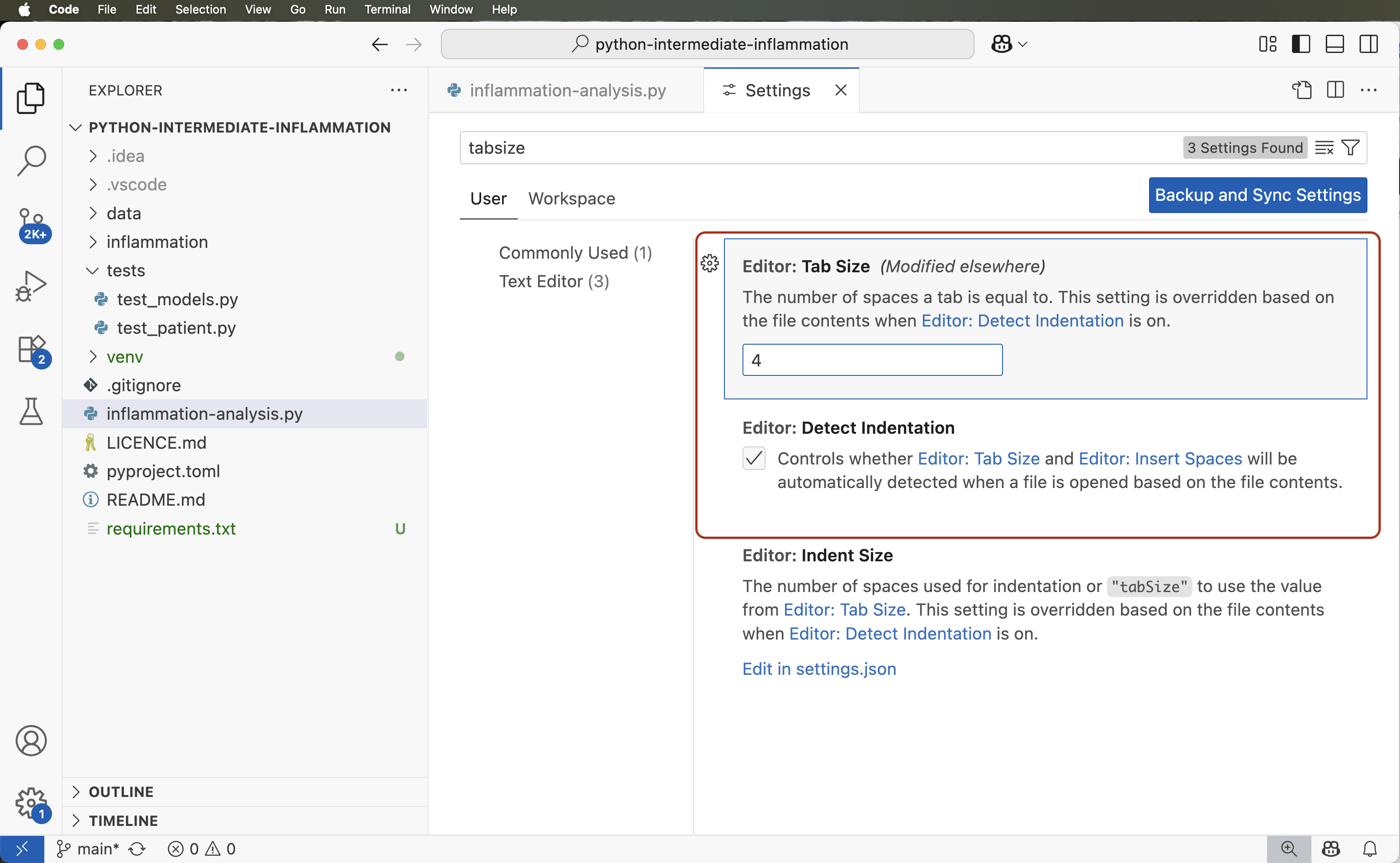

An IDE integrates a number of tools that we need to develop a software project that goes beyond a single script - including a smart code editor, a code compiler/interpreter, a debugger, etc. It will help you write well-formatted and readable code that conforms to code style guides (such as PEP8 for Python) more efficiently by giving relevant and intelligent suggestions for code completion and refactoring. IDEs often integrate command line console and version control tools - we teach them separately in this course as this knowledge can be ported to other programming languages and command line tools you may use in the future (but is applicable to the integrated versions too).

You have a choice of using PyCharm or Visual Studio Code (VS Code) in this course.

Git & GitHub

Git is a free and open source distributed version control system designed to save every change made to a (software) project, allowing others to collaborate and contribute. In this course, we use Git to version control our code in conjunction with GitHub for code backup and sharing. GitHub is one of the leading integrated products and social platforms for modern software development, monitoring and management - it will help us with version control, issue management, code review, code testing/Continuous Integration, and collaborative development. An important concept in collaborative development is version control workflows (i.e. how to effectively use version control on a project with others).

Python Coding Style

Most programming languages will have associated standards and conventions for how the source code should be formatted and styled. Although this sounds pedantic, it is important for maintaining the consistency and readability of code across a project. Therefore, one should be aware of these guidelines and adhere to whatever the project you are working on has specified. In Python, we will be looking at a convention called PEP8.

Let us get started with setting up our software development environment!

- In order to develop (write, test, debug, backup) code efficiently, you need to use a number of different tools.

- When there is a choice of tools for a task you will have to decide which tool is right for you, which may be a matter of personal preference or what the team or community you belong to is using.

Content from 1.1 Introduction to Our Software Project

Last updated on 2026-05-05 | Edit this page

Estimated time: 30 minutes

Overview

Questions

- What is the design architecture of our example software project?

- Why is splitting code into smaller functional units (modules) good when designing software?

Objectives

- Use Git to obtain a working copy of our software project from GitHub.

- Inspect the structure and architecture of our software project.

- Understand Model-View-Controller (MVC) architecture in software design and its use in our project.

Patient Inflammation Study Project

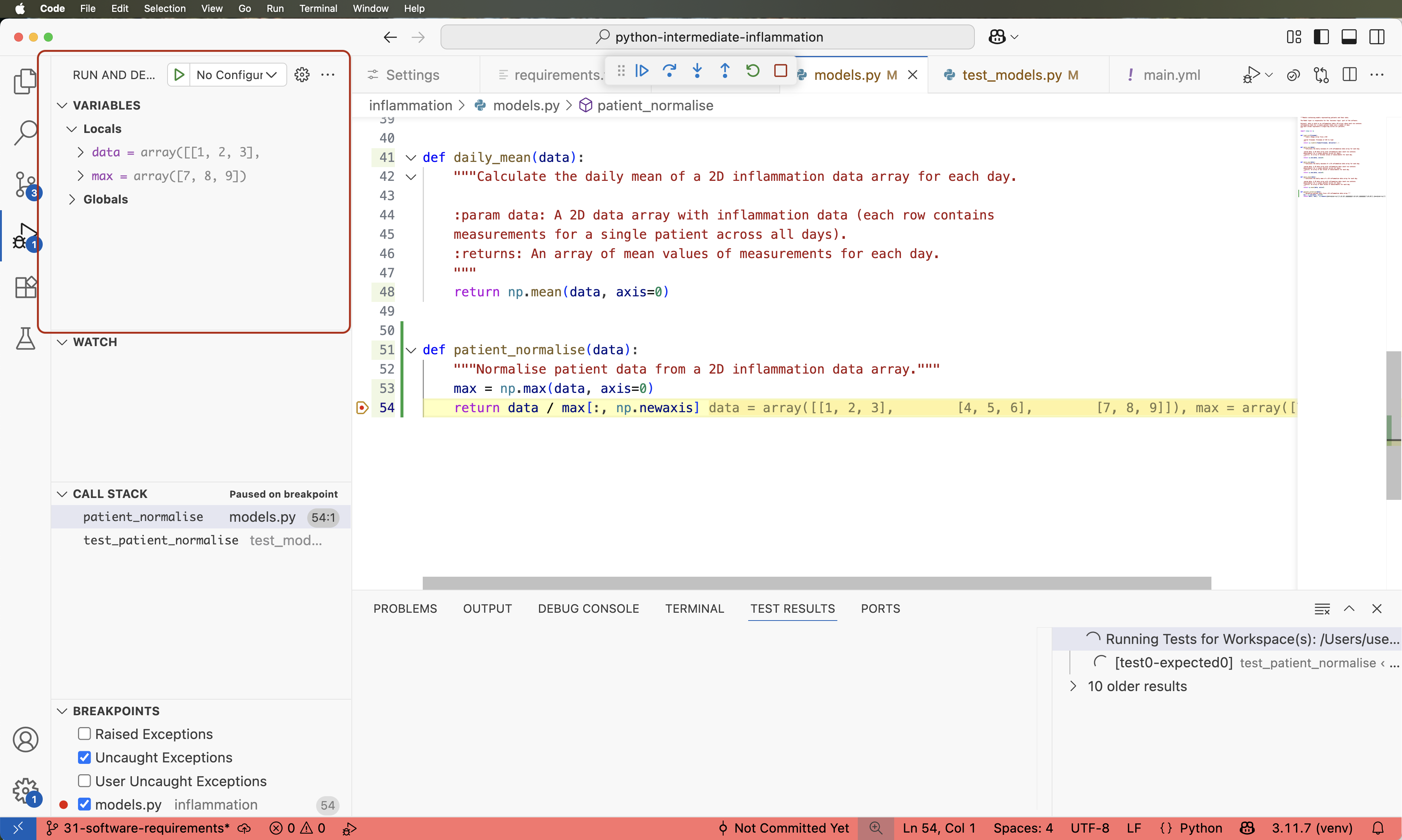

You have joined a software development team that has been working on the patient inflammation study project developed in Python and stored on GitHub. The project analyses the data to study the effect of a new treatment for arthritis by analysing the inflammation levels in patients who have been given this treatment. It reuses the inflammation datasets from the Software Carpentry Python novice lesson.

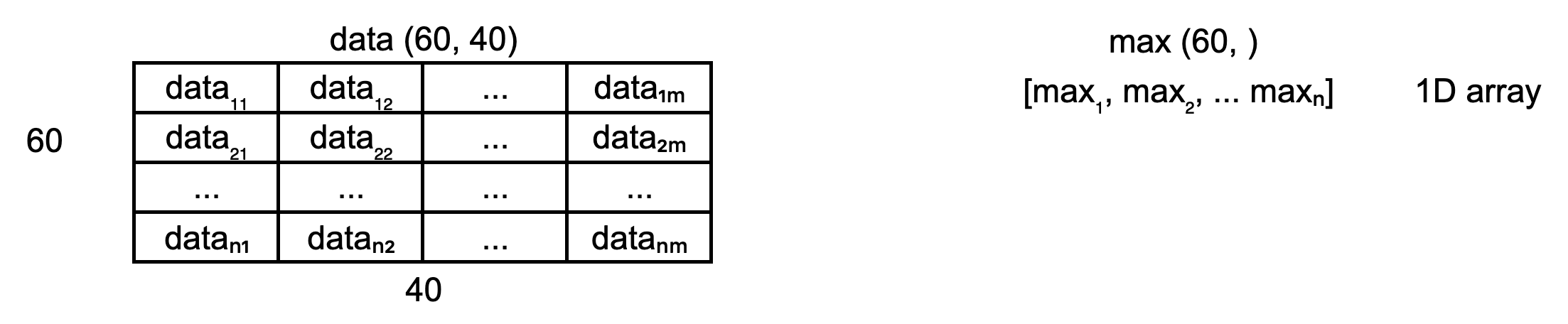

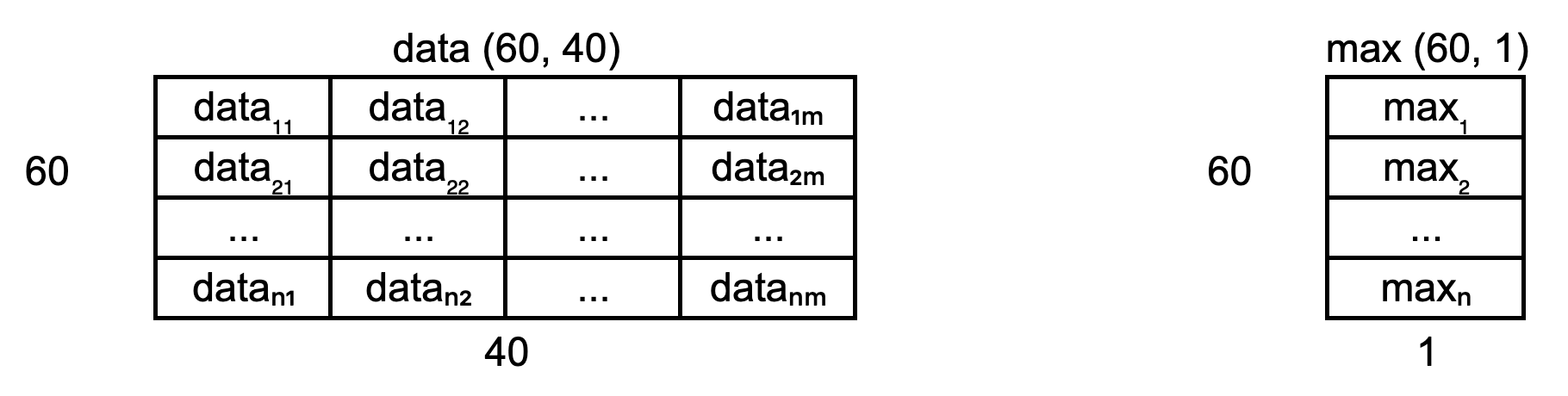

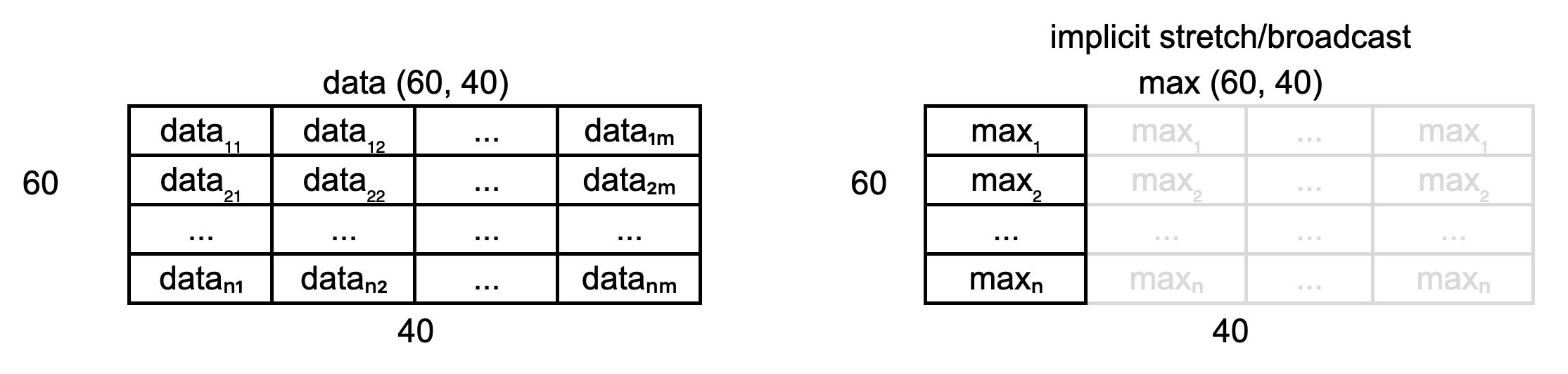

Inflammation study pipeline from the Software Carpentry Python novice lesson

What Does Patient Inflammation Data Contain?

Each dataset records inflammation measurements from a separate clinical trial of the drug, and each dataset contains information for 60 patients, who had their inflammation levels recorded (in some arbitrary units of inflammation measurement) for 40 days whilst participating in the trial. A snapshot of one of the data files is shown in the diagram above.

Each of the data files uses the popular comma-separated (CSV) format to represent the data, where:

- each row holds inflammation measurements for a single patient

- each column represents a successive day in the trial

- each cell represents an inflammation reading on a given day for a patient

The project is not finished and contains some errors. You will be working on your own and in collaboration with others to fix and build on top of the existing code during the course.

Downloading Our Software Project

To start working on the project, you will first create a fork of the software project repository from GitHub within your own GitHub account and then obtain a local copy of that project (from your GitHub) on your machine.

- Make sure you have a GitHub account and that you have set up SSH key pair for authentication with GitHub.

Note: while it is possible to use HTTPS with a personal access token for authentication with GitHub, the recommended and supported authentication method to use for this course is SSH with key pairs.

Log into your GitHub account.

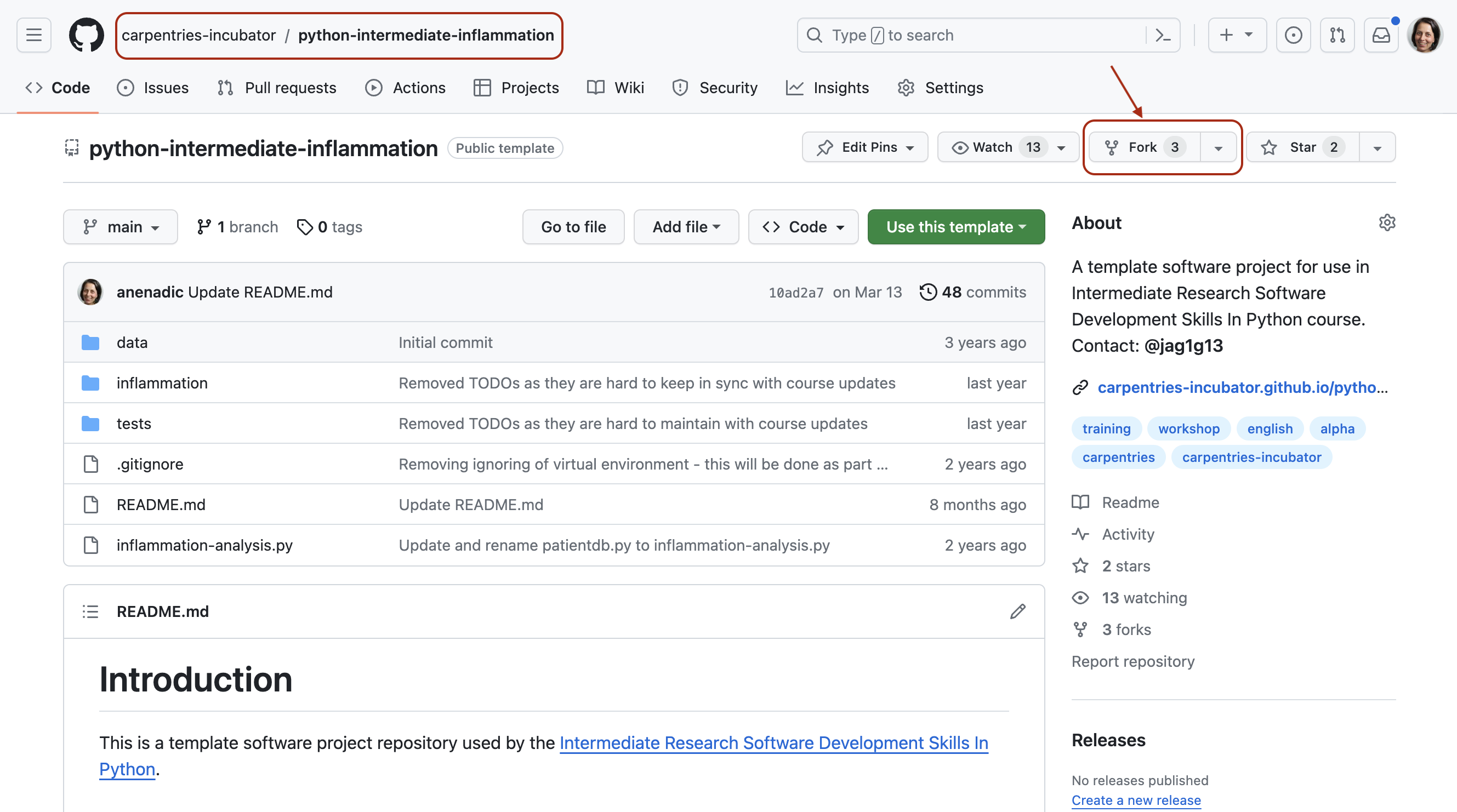

Go to the software project repository in GitHub.

- Click the

Forkbutton towards the top right of the repository’s GitHub page to create a fork of the repository under your GitHub account. Remember, you will need to be signed into GitHub for theForkbutton to work.

Note: each participant is creating their own fork of the project to work on.

Note 2: we are creating a fork of the software project repository (instead of copying it from its template) because we want to preserve the history of all commits (with template copying you only get a snapshot of a repository at a given point in time).

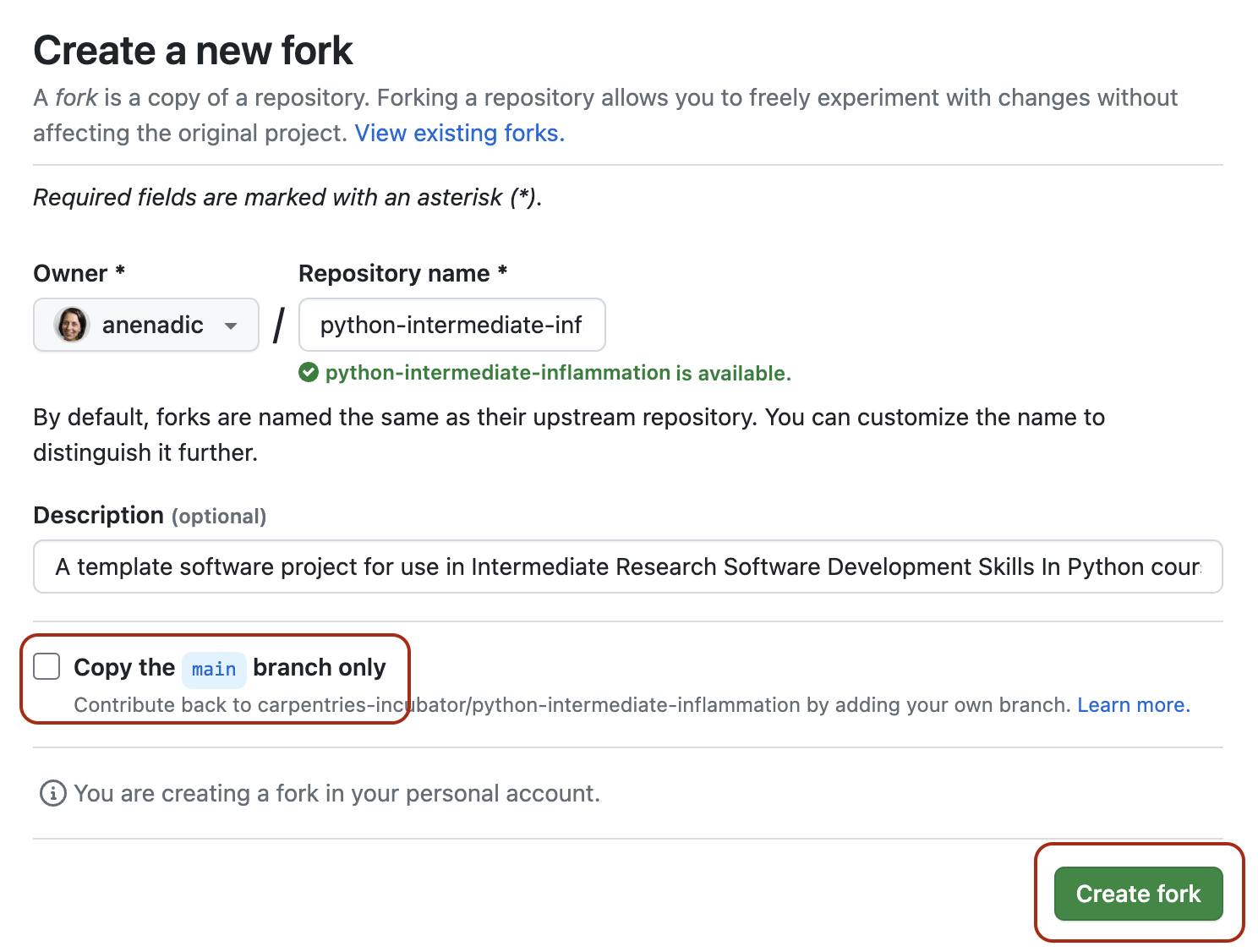

- Make sure to select your personal account and set the name of the

project to

python-intermediate-inflammation(you can call it anything you like, but it may be easier for future group exercises if everyone uses the same name). Ensure that you uncheck theCopy the main branch onlyoption. This guarantees you get all the branches from this repository needed for later exercises.

Click the

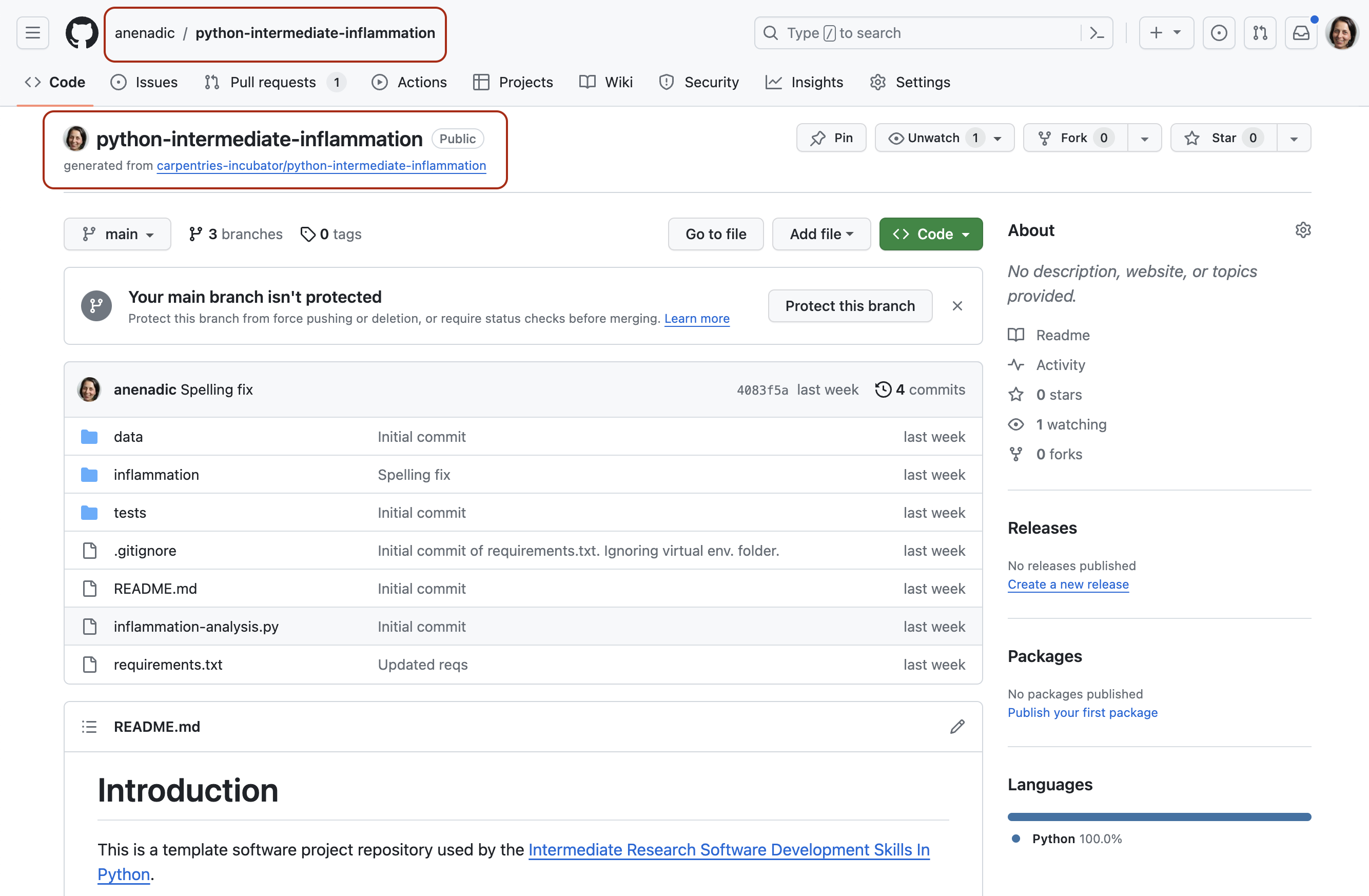

Create forkbutton and wait for GitHub to create the forked copy of the repository under your account.Locate the forked repository under your own GitHub account. GitHub should redirect you there automatically after creating the fork. If this does not happen, click your user icon in the top right corner and select

Your Repositoriesfrom the drop-down menu, then locate your newly created fork.

Exercise: Obtain the Software Project Locally

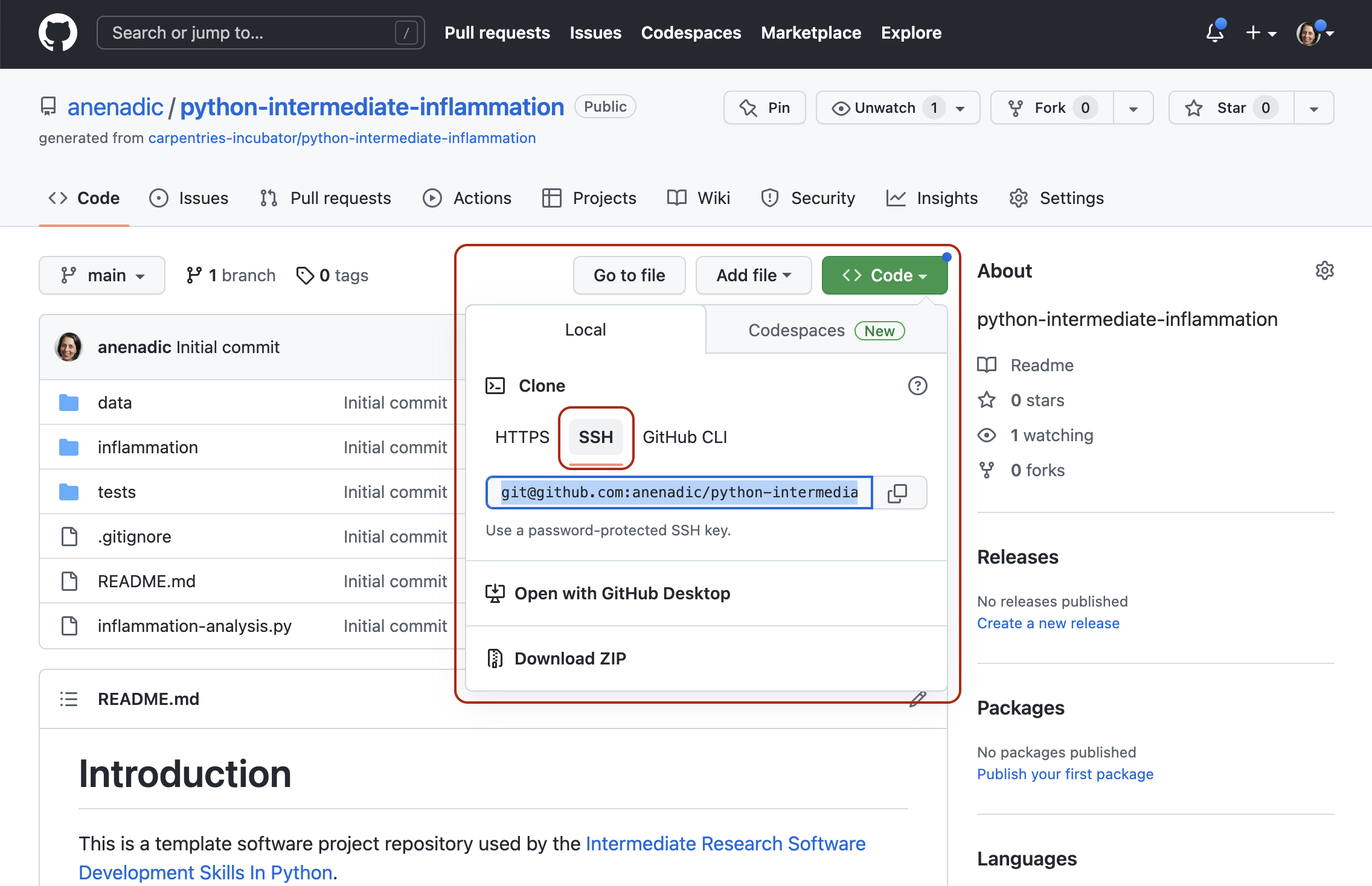

Using the command line, clone the forked repository from your GitHub account into the home directory on your computer using SSH. Which command(s) would you use to get a detailed list of contents of the directory you have just cloned?

- Find the SSH URL of the software project repository to clone from your GitHub account. Make sure you do not clone the original repository but rather your own fork, as you should be able to push commits to it later on. Also make sure you select the SSH tab and not the HTTPS one. For this course, SSH is the preferred way of authenticating when sending your changes back to GitHub. If you have only authenticated through HTTPS in the past, please follow the guidance to add an SSH key to your GitHub account.

- Make sure you are located in your home directory in the command line with:

- From your home directory in the command line, do:

Make sure you are cloning your fork of the software project and not the original repository.

- Navigate into the cloned repository folder in your command line with:

Note: If you have accidentally copied the HTTPS URL of your repository instead of the SSH one, you can easily fix that from your project folder in the command line with:

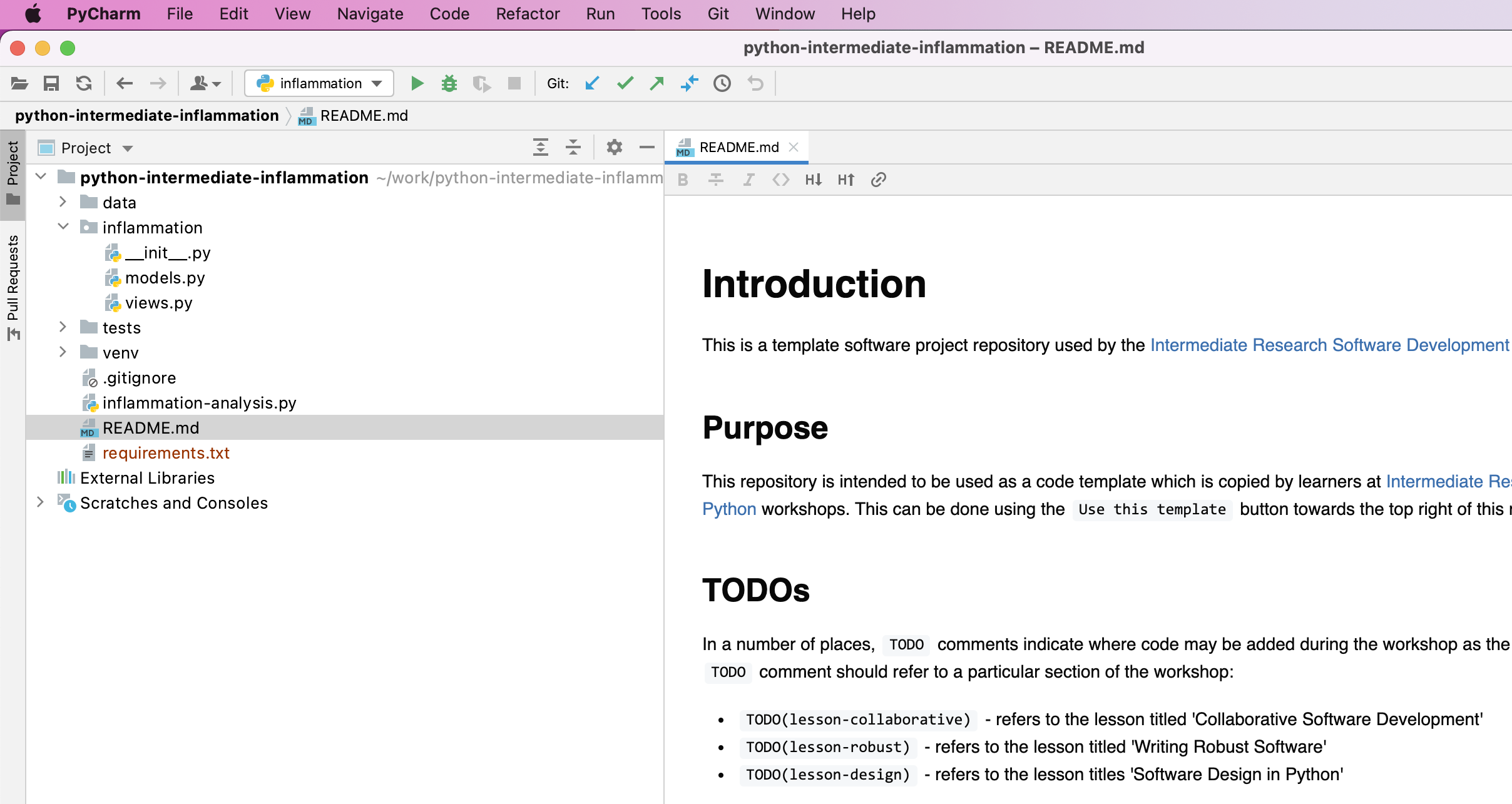

Our Software Project’s Structure

Let’s inspect the content of the software project from the command

line. From the root directory of the project, you can use the command

ls -l to get a more detailed list of the contents. You

should see something similar to the following.

BASH

$ cd ~/python-intermediate-inflammation

$ ls -l

total 24

-rw-r--r-- 1 carpentry users 1055 20 Apr 15:41 README.md

drwxr-xr-x 18 carpentry users 576 20 Apr 15:41 data

drwxr-xr-x 5 carpentry users 160 20 Apr 15:41 inflammation

-rw-r--r-- 1 carpentry users 1122 20 Apr 15:41 inflammation-analysis.py

drwxr-xr-x 4 carpentry users 128 20 Apr 15:41 testsAs can be seen from the above, our software project contains the

README file (that typically describes the project, its

usage, installation, authors and how to contribute), Python script

inflammation-analysis.py, and three directories -

inflammation, data and tests.

The Python script inflammation-analysis.py provides the

main entry point in the application, and on closer inspection, we can

see that the inflammation directory contains two more

Python scripts - views.py and models.py. We

will have a more detailed look into these shortly.

BASH

$ ls -l inflammation

total 24

-rw-r--r-- 1 alex staff 71 29 Jun 09:59 __init__.py

-rw-r--r-- 1 alex staff 838 29 Jun 09:59 models.py

-rw-r--r-- 1 alex staff 649 25 Jun 13:13 views.pyDirectory data contains several files with patients’

daily inflammation information (along with some other files):

BASH

$ ls -l data

total 264

-rw-r--r-- 1 alex staff 5365 25 Jun 13:13 inflammation-01.csv

-rw-r--r-- 1 alex staff 5314 25 Jun 13:13 inflammation-02.csv

-rw-r--r-- 1 alex staff 5127 25 Jun 13:13 inflammation-03.csv

-rw-r--r-- 1 alex staff 5367 25 Jun 13:13 inflammation-04.csv

-rw-r--r-- 1 alex staff 5345 25 Jun 13:13 inflammation-05.csv

-rw-r--r-- 1 alex staff 5330 25 Jun 13:13 inflammation-06.csv

-rw-r--r-- 1 alex staff 5342 25 Jun 13:13 inflammation-07.csv

-rw-r--r-- 1 alex staff 5127 25 Jun 13:13 inflammation-08.csv

-rw-r--r-- 1 alex staff 5327 25 Jun 13:13 inflammation-09.csv

-rw-r--r-- 1 alex staff 5342 25 Jun 13:13 inflammation-10.csv

-rw-r--r-- 1 alex staff 5127 25 Jun 13:13 inflammation-11.csv

-rw-r--r-- 1 alex staff 5340 25 Jun 13:13 inflammation-12.csv

-rw-r--r-- 1 alex staff 22554 25 Jun 13:13 python-novice-inflammation-data.zip

-rw-r--r-- 1 alex staff 12 25 Jun 13:13 small-01.csv

-rw-r--r-- 1 alex staff 15 25 Jun 13:13 small-02.csv

-rw-r--r-- 1 alex staff 12 25 Jun 13:13 small-03.csvAs previously mentioned, each of the inflammation data files contains separate trial data for 60 patients over 40 days.

Exercise: Have a Peek at the Data

Which command(s) would you use to list the contents or a first few

lines of data/inflammation-01.csv file?

- To list the entire content of a file from the project root do:

cat data/inflammation-01.csv. - To list the first 5 lines of a file from the project root do:

OUTPUT

0,0,1,3,1,2,4,7,8,3,3,3,10,5,7,4,7,7,12,18,6,13,11,11,7,7,4,6,8,8,4,4,5,7,3,4,2,3,0,0

0,1,2,1,2,1,3,2,2,6,10,11,5,9,4,4,7,16,8,6,18,4,12,5,12,7,11,5,11,3,3,5,4,4,5,5,1,1,0,1

0,1,1,3,3,2,6,2,5,9,5,7,4,5,4,15,5,11,9,10,19,14,12,17,7,12,11,7,4,2,10,5,4,2,2,3,2,2,1,1

0,0,2,0,4,2,2,1,6,7,10,7,9,13,8,8,15,10,10,7,17,4,4,7,6,15,6,4,9,11,3,5,6,3,3,4,2,3,2,1

0,1,1,3,3,1,3,5,2,4,4,7,6,5,3,10,8,10,6,17,9,14,9,7,13,9,12,6,7,7,9,6,3,2,2,4,2,0,1,1Directory tests contains several tests that have been

implemented already. We will be adding more tests during the course as

our code grows.

BASH

$ ls -l tests

total 16

-rw-r--r-- 1 alex staff 941 18 Dec 11:42 test_models.py

-rw-r--r-- 1 alex staff 182 18 Dec 11:42 test_patient.pyAn important thing to note here is that the structure of our project is not arbitrary. One of the big differences between novice and intermediate software development is planning the structure of your code. This structure includes software components and behavioural interactions between them, including how these components are laid out in a directory and file structure. A novice will often make up the structure of their code as they go along. However, for more advanced software development, we need to plan and design this structure - called a software architecture - beforehand.

Let us have a quick look into what a software architecture is and which architecture is used by our software project before we start adding more code to it.

Software Architecture

A software architecture is the fundamental structure of a software system that is decided at the beginning of project development based on its requirements and cannot be changed that easily once implemented. It refers to a “bigger picture” of a software system that describes high-level components (modules) of the system and how they interact.

In software design and development, large systems or programs are

often decomposed into a set of smaller modules each with a subset of

functionality. Typical examples of modules in programming are software

libraries; some software libraries, such as numpy and

matplotlib in Python, are bigger modules that contain

several smaller sub-modules. Another example of modules are classes in

object-oriented programming languages.

Programming Modules and Interfaces

Although modules are self-contained and independent elements to a large extent (they can depend on other modules), there are well-defined ways of how they interact with one another. These rules of interaction are called programming interfaces - they define how other modules (clients) can use a particular module. Typically, an interface to a module includes rules on how a module can take input from and how it gives output back to its clients. A client can be a human, in which case we also call these user interfaces. Even smaller functional units such as functions/methods have clearly defined interfaces - a function/method’s definition (also known as a signature) states what parameters it can take as input and what it returns as an output.

We are going to talk about software architecture and design a bit more in Section 3 - for now it is sufficient to know that the way our software project’s code is structured is intentional.

Our Project’s Architecture

Our software project uses the Model-View-Controller (MVC) architecture. MVC architecture divides the software logic into three interconnected modules:

- Model (data) - represents the data used by a program and contains operations/rules for manipulating and changing the data in the model (a database, a file, a single data object or a series of objects - for example a table representing patients’ data).

- View (client interface) - provides means of displaying data to users/clients within an application (i.e. provides visualisation of the state of the model). For example, displaying a window with input fields and buttons (Graphical User Interface, GUI) or textual options within a command line (Command Line Interface, CLI) are examples of Views.

- Controller (processes that handle input/output and manipulate the data) - accepts input from the View and performs the corresponding action on the Model (changing the state of the model) and then updates the View accordingly.

In our project, inflammation-analysis.py is the

Controller module that performs basic statistical

analysis over patient data and provides the main entry point into the

application. The View and Model

modules are contained in the files views.py and

models.py, respectively, and are conveniently named. Data

underlying the Model is contained within the directory

data - as we have seen already it contains several files

with patients’ daily inflammation information.

We will revisit the software architecture and MVC topics once again in later episodes when we talk in more detail about software architecture and design. We now proceed to set up our virtual development environment and start working with the code using a more convenient graphical tool - an Integrated Development Environment (IDE).

- Programming interfaces define how individual modules within a software application interact among themselves or how the application itself interacts with its users.

- MVC is a software design architecture which divides the application into three interconnected modules: Model (data), View (user interface), and Controller (input/output and data manipulation).

- The software project we use throughout this course is an example of an MVC application that manipulates patients’ inflammation data and performs basic statistical analysis using Python.

Content from 1.2 Virtual Environments For Software Development

Last updated on 2025-07-30 | Edit this page

Estimated time: 30 minutes

Overview

Questions

- What are virtual environments in software development and why you should use them?

- How can we manage Python virtual environments and external (third-party) libraries?

Objectives

- Set up a Python virtual environment for our software project using

venvandpip. - Run our software from the command line.

Introduction

So far we have cloned our software project from GitHub and inspected its contents and architecture a bit. We now want to run our code to see what it does - let us do that from the command line. For the most part of the course we will run our code and interact with Git from the command line. While we will develop and debug our code using an IDE and it is possible to use Git from the IDE too, typing commands in the command line allows you to familiarise yourself and learn it well. A bonus is that this knowledge is transferable to running code in other programming languages and is independent from any IDE you may use in the future.

If you have a little peek into our code (e.g. run

cat inflammation/views.py from the project root), you will

see the following two lines somewhere at the top.

This means that our code requires two external

libraries (also called third-party packages or dependencies) -

numpy and matplotlib. Python applications

often use external libraries that don’t come as part of the standard

Python distribution. This means that you will have to use a package

manager tool to install them on your system. Applications will also

sometimes need a specific version of an external library (e.g. because

they were written to work with feature, class, or function that may have

been updated in more recent versions), or a specific version of Python

interpreter. This means that each Python application you work with may

require a different setup and a set of dependencies so it is useful to

be able to keep these configurations separate to avoid confusion between

projects. The solution for this problem is to create a self-contained

virtual environment per project, which contains a

particular version of Python installation plus a number of additional

external libraries.

Virtual environments are not just a feature of Python - most modern programming languages use a similar mechanism to isolate libraries or dependencies for a specific project, making it easier to develop, run, test and share code with others. Some examples include Bundler for Ruby, Conan for C++, or Maven with classpath for Java. This can also be achieved with more generic package managers like Spack, which is used extensively in HPC settings to resolve complex dependencies. In this episode, we learn how to set up a virtual environment to develop our code and manage our external dependencies.

Virtual Environments

So what exactly are virtual environments, and why use them?

A Python virtual environment helps us create an isolated working copy of a software project that uses a specific version of Python interpreter together with specific versions of a number of external libraries installed into that virtual environment. Python virtual environments are implemented as directories with a particular structure within software projects, containing links to specified dependencies allowing isolation from other software projects on your machine that may require different versions of Python or external libraries.

As more external libraries are added to your Python project over time, you can add them to its specific virtual environment and avoid a great deal of confusion by having separate (smaller) virtual environments for each project rather than one huge global environment with potential package version clashes. Another big motivator for using virtual environments is that they make sharing your code with others much easier (as we will see shortly). Here are some typical scenarios where the use of virtual environments is highly recommended (almost unavoidable):

- You have an older project that only works under Python 2. You do not have the time to migrate the project to Python 3 or it may not even be possible as some of the third party dependencies are not available under Python 3. You have to start another project under Python 3. The best way to do this on a single machine is to set up two separate Python virtual environments.

- One of your Python 3 projects is locked to use a particular older version of a third party dependency. You cannot use the latest version of the dependency as it breaks things in your project. In a separate branch of your project, you want to try and fix problems introduced by the new version of the dependency without affecting the working version of your project. You need to set up a separate virtual environment for your branch to ‘isolate’ your code while testing the new feature.

You do not have to worry too much about specific versions of external libraries that your project depends on most of the time. Virtual environments also enable you to always use the latest available version without specifying it explicitly. They also enable you to use a specific older version of a package for your project, should you need to.

A Specific Python or Package Version is Only Ever Installed Once

Note that you will not have a separate Python or package installations for each of your projects - they will only ever be installed once on your system but will be referenced from different virtual environments.

Managing Python Virtual Environments

There are several commonly used command line tools for managing Python virtual environments:

-

venv, available by default from the standardPythondistribution fromPython 3.3+ -

virtualenv, needs to be installed separately but supports bothPython 2.7+andPython 3.3+versions -

pipenv, created to fix certain shortcomings ofvirtualenv -

conda, package and environment management system (also included as part of the Anaconda Python distribution often used by the scientific community) -

poetry, a modern Python packaging tool which handles virtual environments automatically

While there are pros and cons for using each of the above, all will

do the job of managing Python virtual environments for you and it may be

a matter of personal preference which one you go for. In this course, we

will use venv to create and manage our virtual environment

(which is the preferred way for Python 3.3+). The upside is that

venv virtual environments created from the command line are

also recognised and picked up automatically by the IDEs we will use in

this course, as we will see in the next episode.

Managing External Packages

Part of managing your (virtual) working environment involves

installing, updating and removing external packages on your system. The

Python package manager tool pip is most commonly used for

this - it interacts and obtains the packages from the central repository

called Python Package Index (PyPI).

pip can now be used with all Python distributions

(including Anaconda).

A Note on Anaconda and conda

Anaconda is an open source Python distribution commonly used for

scientific programming - it conveniently installs Python, package and

environment management conda, and a number of commonly used

scientific computing packages so you do not have to obtain them

separately. conda is an independent command line tool

(available separately from the Anaconda distribution too) with dual

functionality: (1) it is a package manager that helps you find Python

packages from remote package repositories and install them on your

system, and (2) it is also a virtual environment manager. So, you can

use conda for both tasks instead of using venv

and pip.

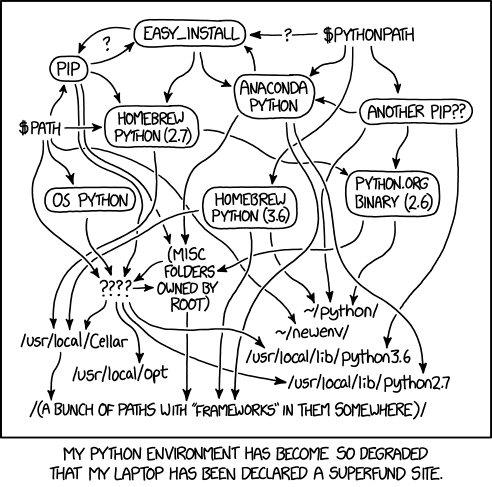

Many Tools for the Job

Installing and managing Python distributions, external libraries and

virtual environments is, well, complex. There is an abundance of tools

for each task, each with its advantages and disadvantages, and there are

different ways to achieve the same effect (and even different ways to

install the same tool!). Note that each Python distribution comes with

its own version of pip - and if you have several Python

versions installed you have to be extra careful to use the correct

pip to manage external packages for that Python

version.

venv and pip are considered the de

facto standards for virtual environment and package management for

Python 3. However, the advantages of using Anaconda and

conda are that you get (most of the) packages needed for

scientific code development included with the distribution. If you are

only collaborating with others who are also using Anaconda, you may find

that conda satisfies all your needs. It is good, however,

to be aware of all these tools, and use them accordingly. As you become

more familiar with them you will realise that equivalent tools work in a

similar way even though the command syntax may be different (and that

there are equivalent tools for other programming languages too to which

your knowledge can be ported).

Let us have a look at how we can create and manage virtual

environments from the command line using venv and manage

packages using pip.

Making Sure You Can Invoke Python

You can test your Python installation from the command line with:

BASH

$ python3 --version # on Mac/Linux

$ python --version # on Windows — Windows installation comes with a python.exe file rather than a python3.exe file If you are using Windows and invoking python command

causes your Git Bash terminal to hang with no error message or output,

you may need to create an alias for the python executable

python.exe, as explained in the troubleshooting

section.

Creating Virtual Environments Using venv

Creating a virtual environment with venv is done by

executing the following command:

where /path/to/new/virtual/environment is a path to a

directory where you want to place it - conventionally within your

software project so they are co-located. This will create the target

directory for the virtual environment (and any parent directories that

don’t exist already).

What is -m Flag in

python3 Command?

The Python -m flag means “module” and tells the Python

interpreter to treat what follows -m as the name of a

module and not as a single, executable program with the same name. Some

modules (such as venv or pip) have main entry

points and the -m flag can be used to invoke them on the

command line via the python command. The main difference

between running such modules as standalone programs (e.g. executing

“venv” by running the venv command directly) versus using

python3 -m command seems to be that with latter you are in

full control of which Python module will be invoked (the one that came

with your environment’s Python interpreter vs. some other version you

may have on your system). This makes it a more reliable way to set

things up correctly and avoid issues that could prove difficult to trace

and debug.

For our project let us create a virtual environment called “venv”. First, ensure you are within the project root directory, then:

If you list the contents of the newly created directory “venv”, on a Mac or Linux system (slightly different on Windows as explained below) you should see something like:

OUTPUT

total 8

drwxr-xr-x 12 alex staff 384 5 Oct 11:47 bin

drwxr-xr-x 2 alex staff 64 5 Oct 11:47 include

drwxr-xr-x 3 alex staff 96 5 Oct 11:47 lib

-rw-r--r-- 1 alex staff 90 5 Oct 11:47 pyvenv.cfgSo, running the python3 -m venv venv command created the

target directory called “venv” containing:

-

pyvenv.cfgconfiguration file with a home key pointing to the Python installation from which the command was run, -

binsubdirectory (calledScriptson Windows) containing a symlink of the Python interpreter binary used to create the environment and the standard Python library, -

lib/pythonX.Y/site-packagessubdirectory (calledLib\site-packageson Windows) to contain its own independent set of installed Python packages isolated from other projects, and - various other configuration and supporting files and subdirectories.

Naming Virtual Environments

What is a good name to use for a virtual environment? Using “venv” or “.venv” as the name for an environment and storing it within the project’s directory seems to be the recommended way - this way when you come across such a subdirectory within a software project, by convention you know it contains its virtual environment details. A slight downside is that all different virtual environments on your machine then use the same name and the current one is determined by the context of the path you are currently located in. A (non-conventional) alternative is to use your project name for the name of the virtual environment, with the downside that there is nothing to indicate that such a directory contains a virtual environment. In our case, we have settled to use the name “venv” instead of “.venv” since it is not a hidden directory and we want it to be displayed by the command line when listing directory contents (the “.” in its name that would, by convention, make it hidden). In the future, you will decide what naming convention works best for you. Here are some references for each of the naming conventions:

- The Hitchhiker’s Guide to Python notes that “venv” is the general convention used globally

- The Python Documentation indicates that “.venv” is common

- “venv” vs “.venv” discussion

Once you’ve created a virtual environment, you will need to activate it.

On Mac or Linux, it is done as:

On Windows, recall that we have Scripts directory

instead of bin and activating a virtual environment is done

as:

Activating the virtual environment will change your command line’s prompt to show what virtual environment you are currently using (indicated by its name in round brackets at the start of the prompt), and modify the environment so that running Python will get you the particular version of Python configured in your virtual environment.

You can verify you are using your virtual environment’s version of

Python by checking the path using the command which:

OUTPUT

/home/alex/python-intermediate-inflammation/venv/bin/python3When you’re done working on your project, you can exit the environment with:

If you have just done the deactivate, ensure you

reactivate the environment ready for the next part:

Python Within A Virtual Environment

Within an active virtual environment, commands python3

and python should both refer to the version of Python 3 you

created the environment with (note you may have multiple Python 3

versions installed).

However, on some machines with Python 2 installed,

python command may still be hardwired to the copy of Python

2 installed outside of the virtual environment - this can cause errors

and confusion.

You can always check which version of Python you are using in your

virtual environment with the command which python to be

absolutely sure. We continue using python3 in this material

to avoid mistakes, but the command python may work for you

as expected.

Note that, since our software project is being tracked by Git, the newly created virtual environment will show up in version control - we will see how to handle it using Git in one of the subsequent episodes.

Installing External Packages Using pip

We noticed earlier that our code depends on two external

packages/libraries - numpy and

matplotlib. In order for the code to run on your machine,

you need to install these two dependencies into your virtual

environment.

To install the latest version of a package with pip you

use pip’s install command and specify the package’s name,

e.g.:

or like this to install multiple packages at once for short:

How About

pip3 install <package-name> Command?

You may have seen or used the

pip3 install <package-name> command in the past,

which is shorter and perhaps more intuitive than

python3 -m pip install. However, the official

Pip documentation recommends python3 -m pip install and

core Python developer Brett Cannon offers a more detailed

explanation of edge cases when the two commands may produce

different results and why python3 -m pip install is

recommended. In this material, we will use python3 -m

whenever we have to invoke a Python module from command line.

If you run the python3 -m pip install command on a

package that is already installed, pip will notice this and

do nothing.

To install a specific version of a Python package give the package

name followed by == and the version number,

e.g. python3 -m pip install numpy==1.21.1.

To specify a minimum version of a Python package, you can do

python3 -m pip install numpy>=1.20.

To upgrade a package to the latest version,

e.g. python3 -m pip install --upgrade numpy.

To display information about a particular installed package do:

OUTPUT

Name: numpy

Version: 1.26.2

Summary: Fundamental package for array computing in Python

Home-page: https://numpy.org

Author: Travis E. Oliphant et al.

Author-email:

License: Copyright (c) 2005-2023, NumPy Developers.

All rights reserved.

...

Required-by: contourpy, matplotlibTo list all packages installed with pip (in your current

virtual environment):

OUTPUT

Package Version

--------------- -------

contourpy 1.2.0

cycler 0.12.1

fonttools 4.45.0

kiwisolver 1.4.5

matplotlib 3.8.2

numpy 1.26.2

packaging 23.2

Pillow 10.1.0

pip 23.0.1

pyparsing 3.1.1

python-dateutil 2.8.2

setuptools 67.6.1

six 1.16.0To uninstall a package installed in the virtual environment do:

python3 -m pip uninstall <package-name>. You can also

supply a list of packages to uninstall at the same time.

Installing Our Local Project as a Package Using

pip

Often when working on a Python project, the project itself will be a

Python package (like numpy or matplotlib

above) or at the very least it might be useful to treat it like a

package. Said another way, it is usually the case we want a convenient

way to call the Python code we are writing from another location, and

making this code accessible as a package is the best way to do this. We

will save the details of Python packaging for a future episode, and for the

meantime we can use the minimal package setup that our project already

comes with, which is contained in the pyproject.toml file.

Once again, we can use pip to install our local

package:

If the above command fails for you - your pip

installation is older than version 21.3. Such older versions of

pip do not support pyproject.toml as the

package metadata. Given these versions of pip are now over

4 years old, we strongly recommend that you update pip if

you can with:

This is similar syntax to above, with two important differences:

- The

--editableor-eflag indicates that the package we are specifying should be an “editable” install. An “editable” install is one that allows the package in our environment to change dynamically based on source code locally. This is very convenient when we are developing the package because we can instantly see changes when we call the code from within our virtual environment, rather than having to install the local package again to get the updates. - The argument

'.'indicates that the package we want to install is located in the current directory. Thepyproject.tomlfile located in this directory then handles the rest.

If we reissue the pip list command we should now see our

local package with the name

python-intermediate-inflammation in the output:

OUTPUT

Package Version Editable project location

-------------------------------- ----------- ----------------------------------------------------------------------------------------------

contourpy 1.3.1

cycler 0.12.1

exceptiongroup 1.2.2

fonttools 4.56.0

iniconfig 2.0.0

kiwisolver 1.4.8

matplotlib 3.10.0

numpy 2.2.3

packaging 24.2

pillow 11.1.0

pip 22.0.2

pluggy 1.5.0

pyparsing 3.2.1

pytest 8.3.4

python-dateutil 2.9.0.post0

python-intermediate-inflammation 0.0.0 /path/to/your/project/directory/python-intermediate-inflammation

setuptools 59.6.0

six 1.17.0

tomli 2.2.1Exporting/Importing Virtual Environments Using pip

You are collaborating on a project with a team so, naturally, you

will want to share your environment with your collaborators so they can

easily ‘clone’ your software project with all of its dependencies and

everyone can replicate equivalent virtual environments on their

machines. pip has a handy way of exporting, saving and

sharing virtual environments.

To export your active environment use the

python3 -m pip freeze --exclude-editable command to produce

a list of packages installed in the virtual environment. A common

convention is to put this list in a requirements.txt

file:

BASH

(venv) $ python3 -m pip freeze --exclude-editable > requirements.txt

(venv) $ cat requirements.txtOUTPUT

contourpy==1.2.0

cycler==0.12.1

fonttools==4.45.0

kiwisolver==1.4.5

matplotlib==3.8.2

numpy==1.26.2

packaging==23.2

Pillow==10.1.0

pyparsing==3.1.1

python-dateutil==2.8.2

six==1.16.0The first of the above commands will create a

requirements.txt file in your current directory. Yours may

look a little different, depending on the version of the packages you

have installed, as well as any differences in the packages that they

themselves use. Also, we need to use the --exclude-editable

command so that our local package is not included in the output,

otherwise pip will try to pull from a specific commit at the time we

made the editable install, which is not what we want.

The requirements.txt file can then be committed to a

version control system (we will see how to do this using Git in one of

the following episodes) and get shipped as part of your software and

shared with collaborators and/or users. They can then replicate your

environment and install all the necessary packages from the project root

as follows:

As your project grows you may need to update your environment for a

variety of reasons. For example, one of your project’s dependencies has

just released a new version (dependency version number update), you need

an additional package for data analysis (adding a new dependency) or you

have found a better package and no longer need the older package (adding

a new and removing an old dependency). What you need to do in this case

(apart from installing the new and removing the packages that are no

longer needed from your virtual environment) is update the contents of

the requirements.txt file accordingly by re-issuing

pip freeze command and propagate the updated

requirements.txt file to your collaborators via your code

sharing platform (e.g. GitHub).

Official Documentation

For a full list of options and commands, consult the official

venv documentation and the Installing

Python Modules with pip guide. Also check out the guide

“Installing

packages using pip and virtual environments”.

Running Python Scripts From Command Line

Congratulations! Your environment is now activated and set up to run

our inflammation-analysis.py script from the command

line.

You should already be located in the root of the

python-intermediate-inflammation directory (if not, please

navigate to it from the command line now). To run the script, type the

following command:

OUTPUT

usage: inflammation-analysis.py [-h] infiles [infiles ...]

inflammation-analysis.py: error: the following arguments are required: infilesIn the above command, we tell the command line two things:

- to find a Python interpreter (in this case, the one that was configured via the virtual environment), and

- to use it to run our script

inflammation-analysis.py, which resides in the current directory.

As we can see, the Python interpreter ran our script, which threw an

error -

inflammation-analysis.py: error: the following arguments are required: infiles.

It looks like the script expects a list of input files to process, so

this is expected behaviour since we do not supply any.

We should run our code as follows, passing one (or more) data file(s) as input:

Optional Exercises

Have a look at some optional exercises.

- Virtual environments keep Python versions and dependencies required by different projects separate.

- A virtual environment is itself a directory structure.

- Use

venvto create and manage Python virtual environments. - Use

pipto install and manage Python external (third-party) libraries. -

pipallows you to declare all dependencies for a project in a separate file (by convention calledrequirements.txt) which can be shared with collaborators/users and used to replicate a virtual environment. - Use

python3 -m pip freeze --exclude-editable > requirements.txtto take snapshot of your project’s dependencies. - Use

python3 -m pip install -r requirements.txtto replicate someone else’s virtual environment on your machine from therequirements.txtfile.

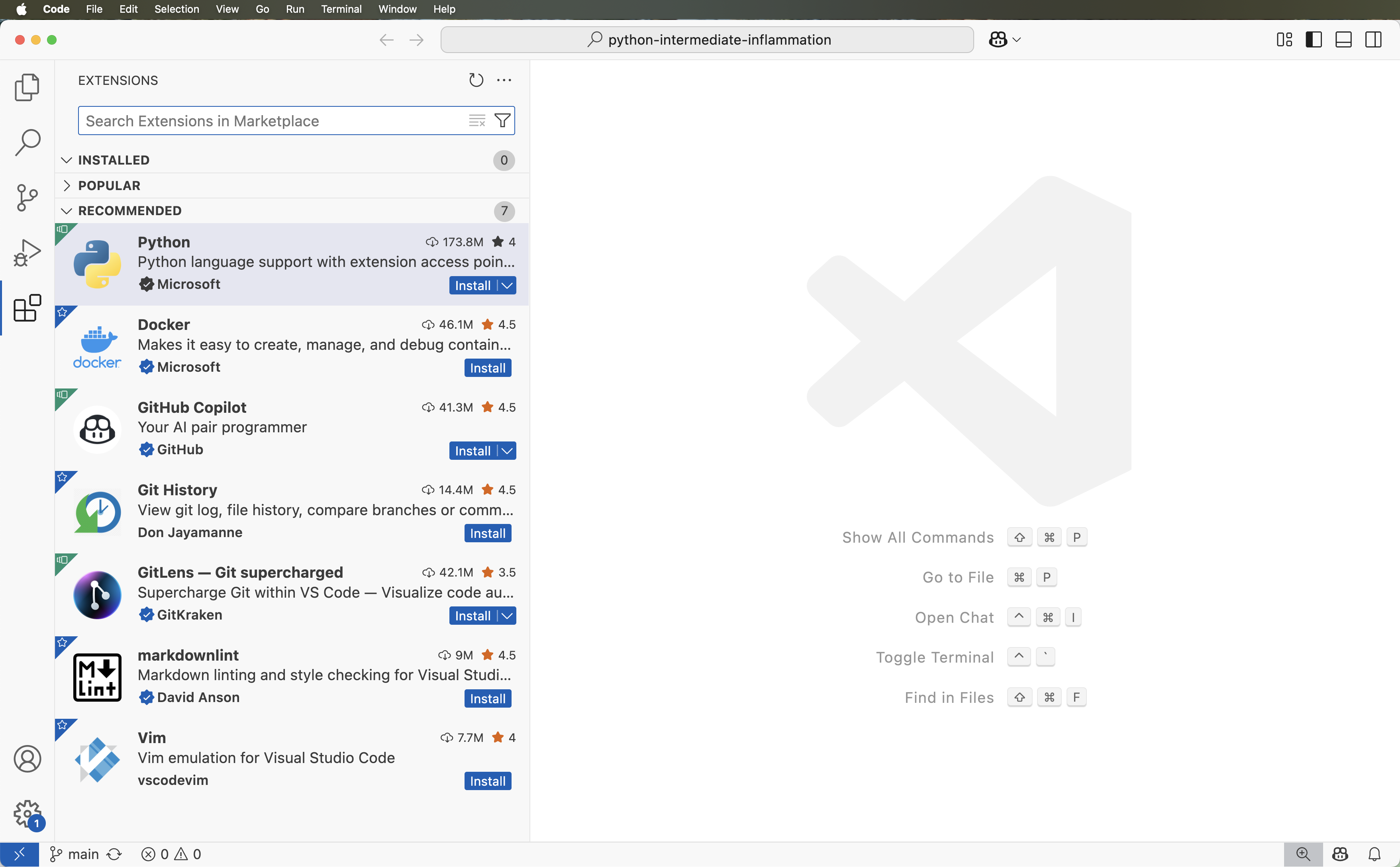

Content from 1.3 Integrated Software Development Environments

Last updated on 2025-07-30 | Edit this page

Estimated time: 35 minutes

Overview

Questions

- What are Integrated Development Environments (IDEs)?

- What are the advantages of using IDEs for software development?

Objectives

- Set up a (virtual) development environment in an IDE

- Use the IDE to run a Python script

Introduction

As we have seen in the previous episode - even a simple software project is typically split into smaller functional units and modules, which are kept in separate files and subdirectories. As your code starts to grow and becomes more complex, it will involve many different files and various external libraries. You will need an application to help you manage all the complexities of, and provide you with some useful (visual) facilities for, the software development process. Such clever and useful graphical software development applications are called Integrated Development Environments (IDEs).

Integrated Development Environments

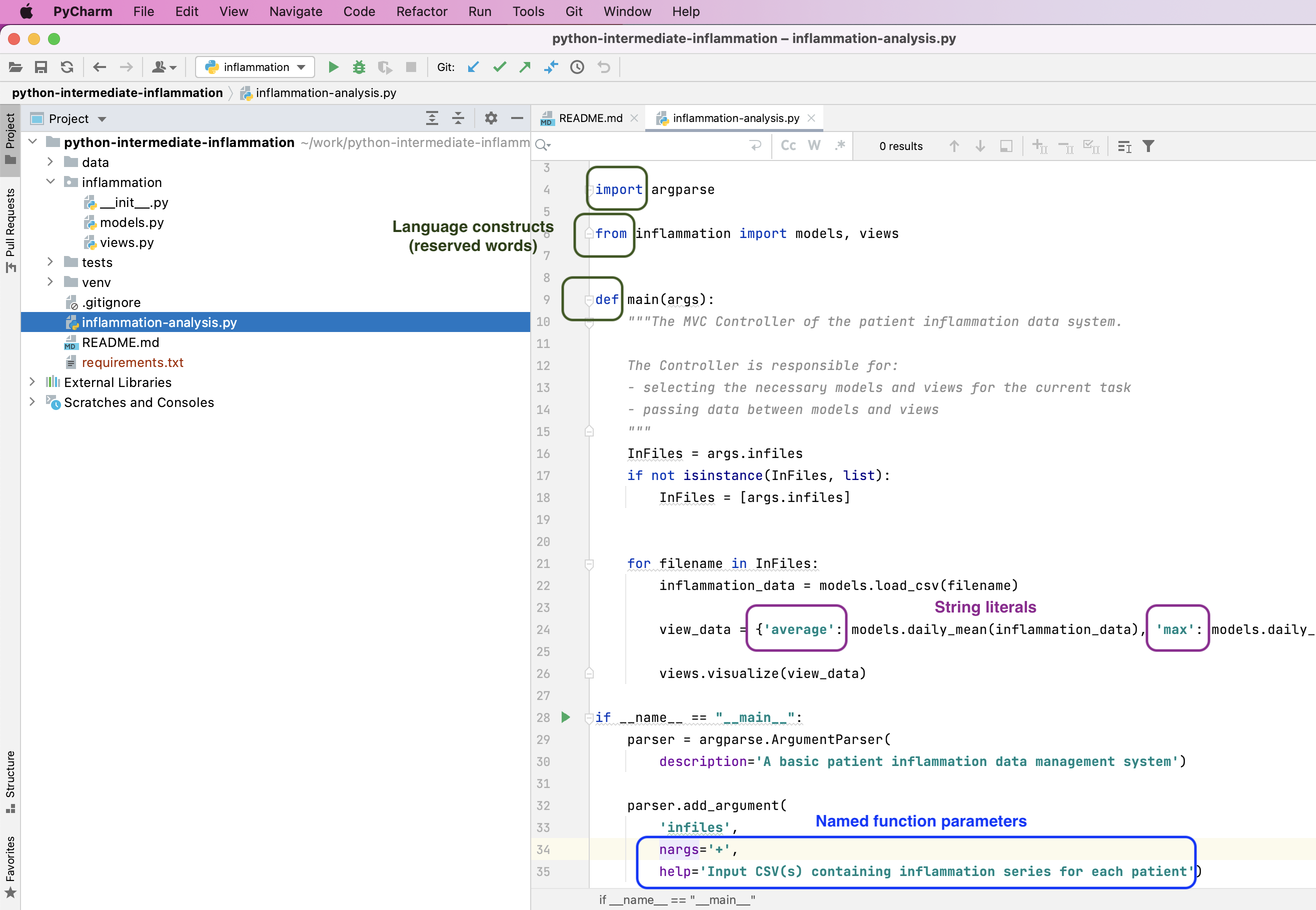

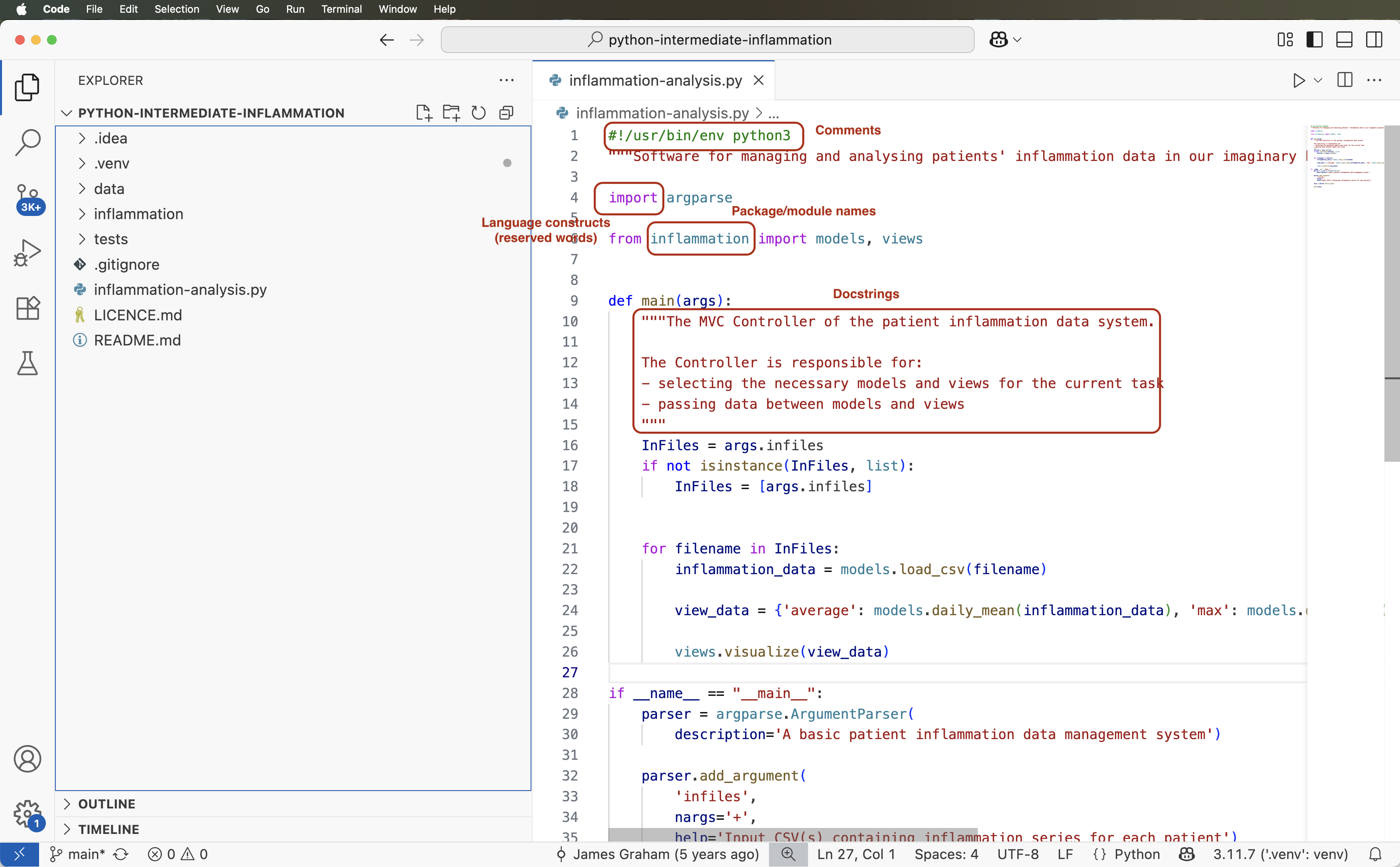

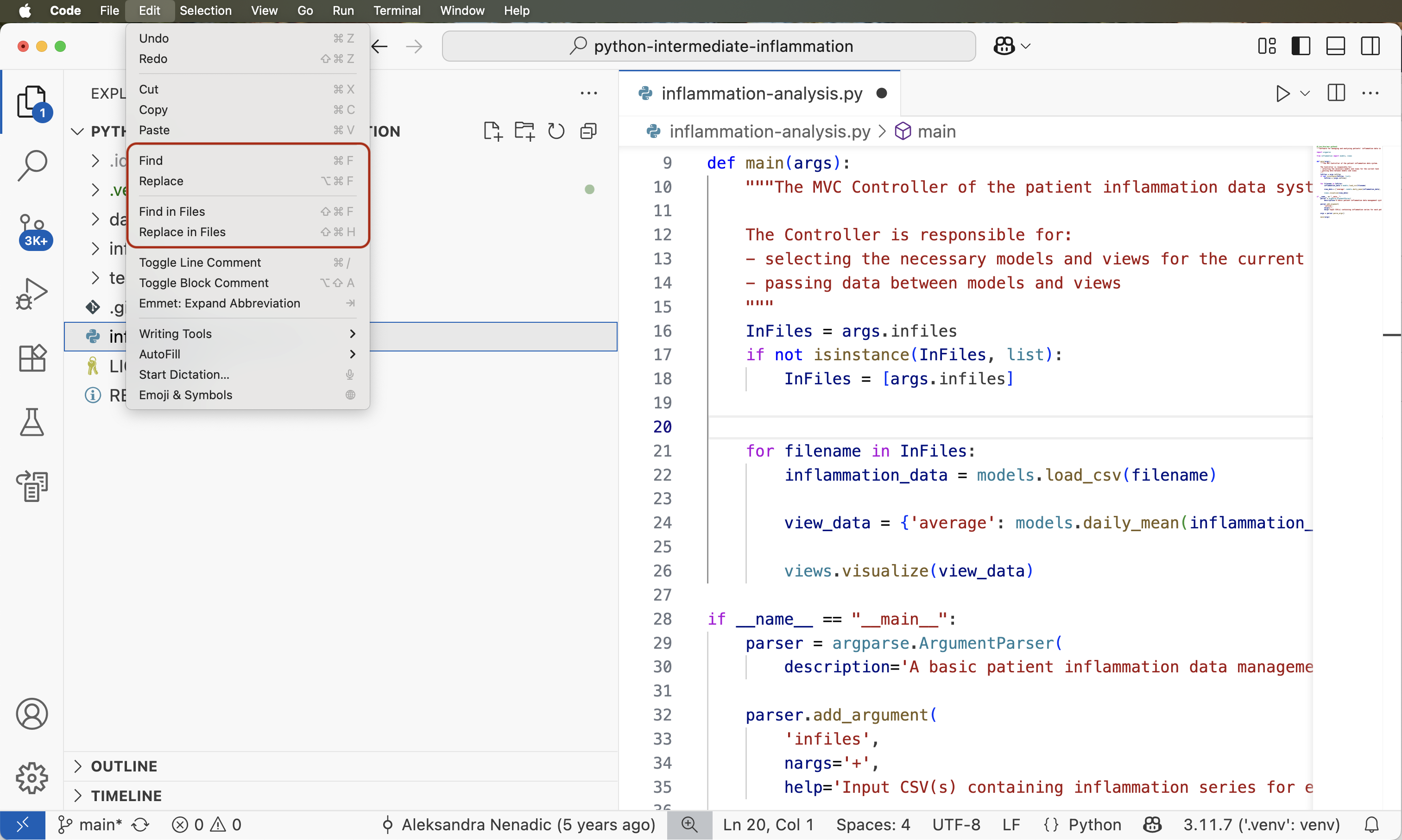

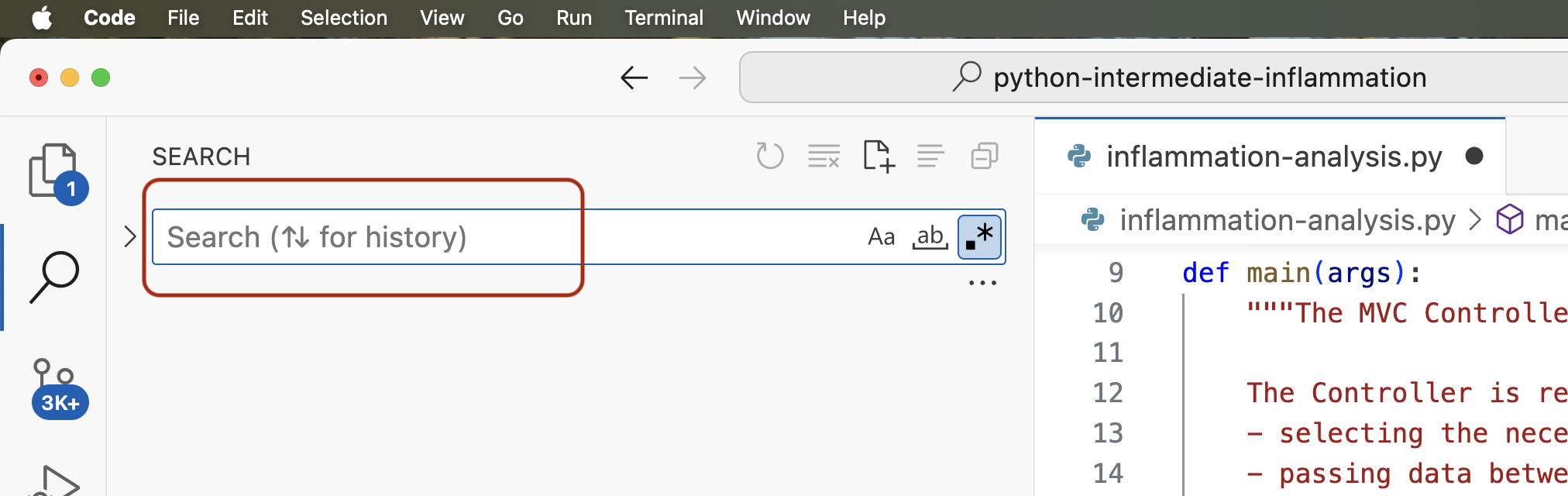

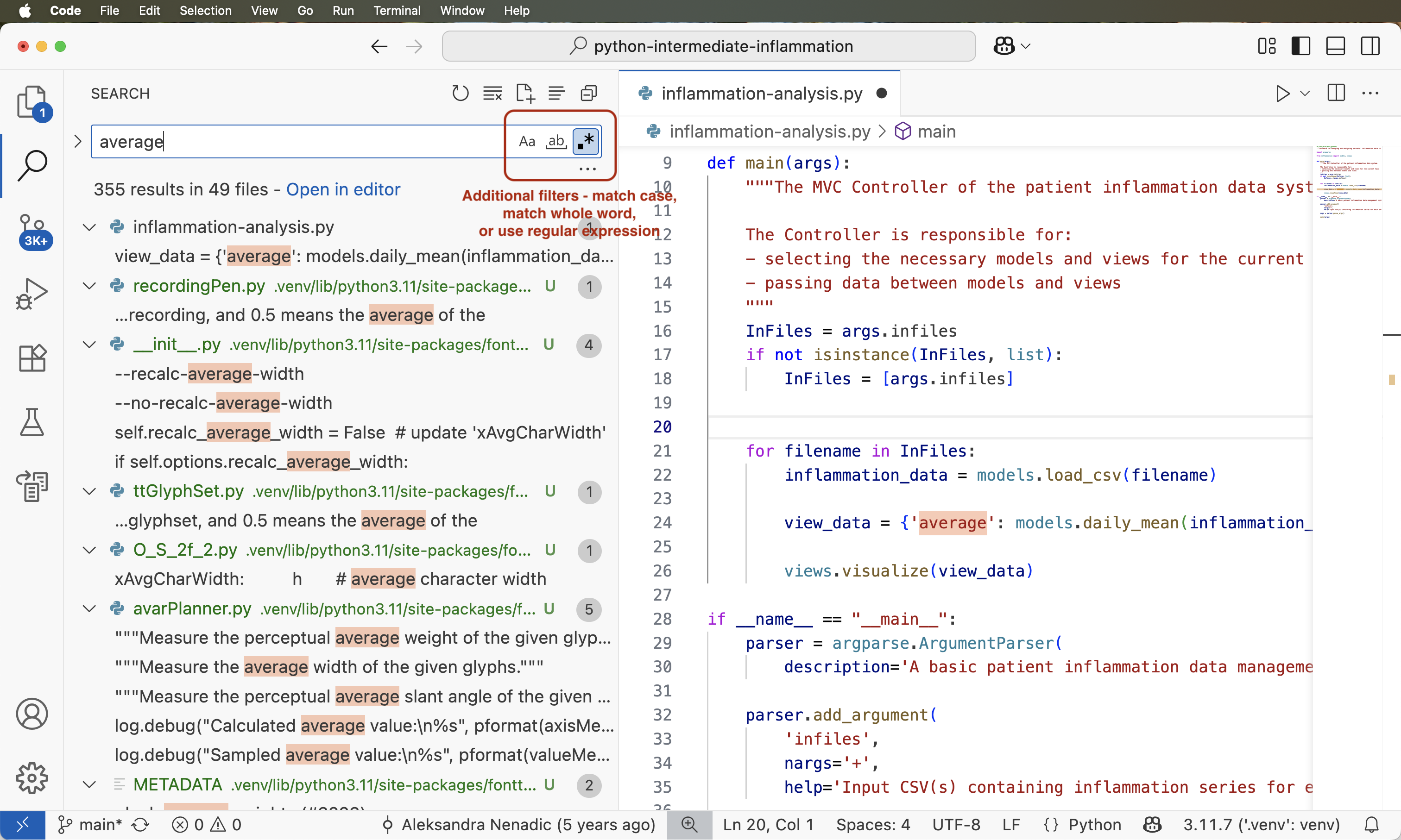

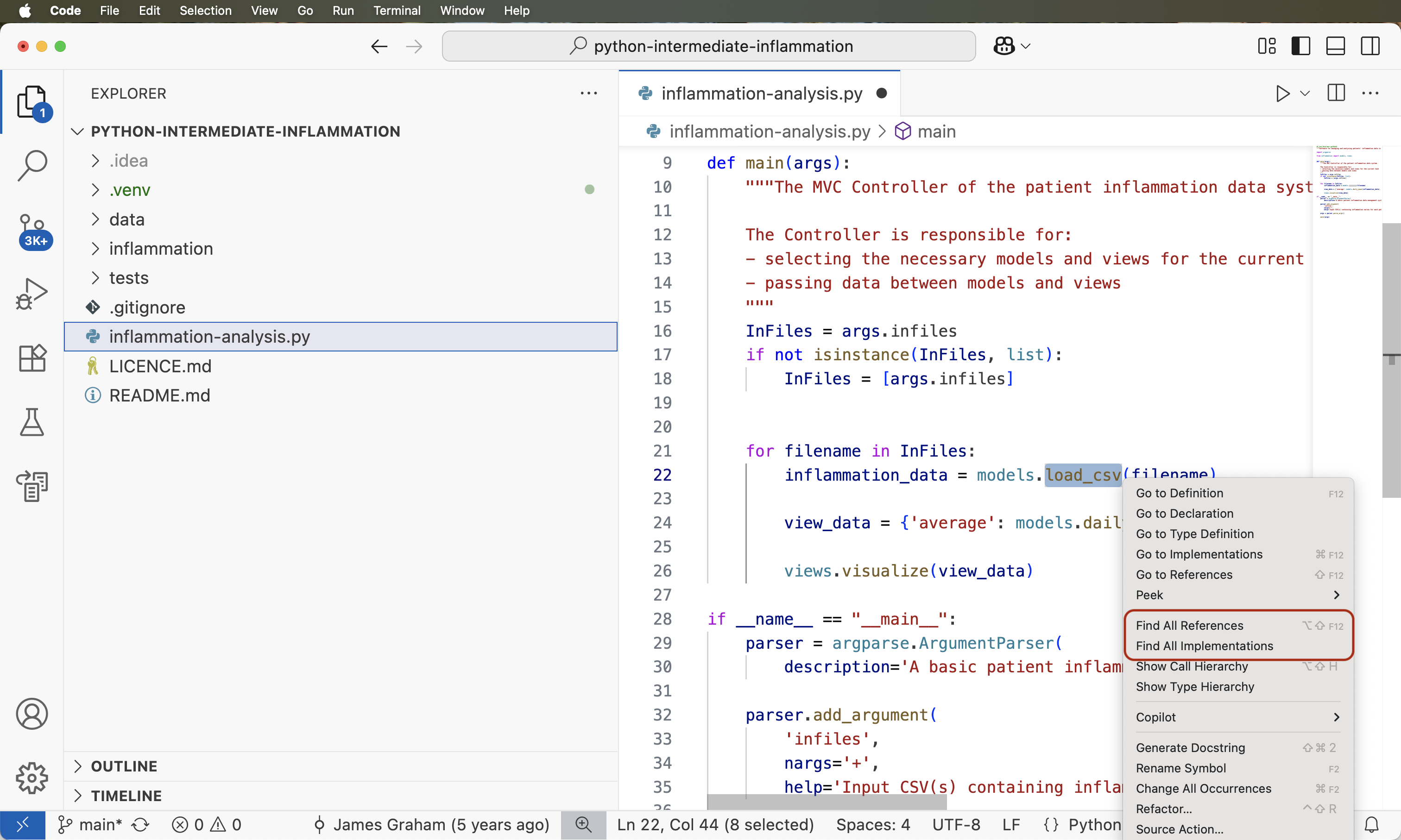

An IDE normally consists of at least a source code editor, build automation tools and a debugger. The boundaries between modern IDEs and other aspects of the broader software development process are often blurred. Nowadays IDEs also offer version control support, tools to construct graphical user interfaces (GUI) and web browser integration for web app development, source code inspection for dependencies and many other useful functionalities. The following is a list of the most commonly seen IDE features:

- syntax highlighting - to show the language constructs, keywords and the syntax errors with visually distinct colours and font effects

- code completion - to speed up programming by offering a set of possible (syntactically correct) code options

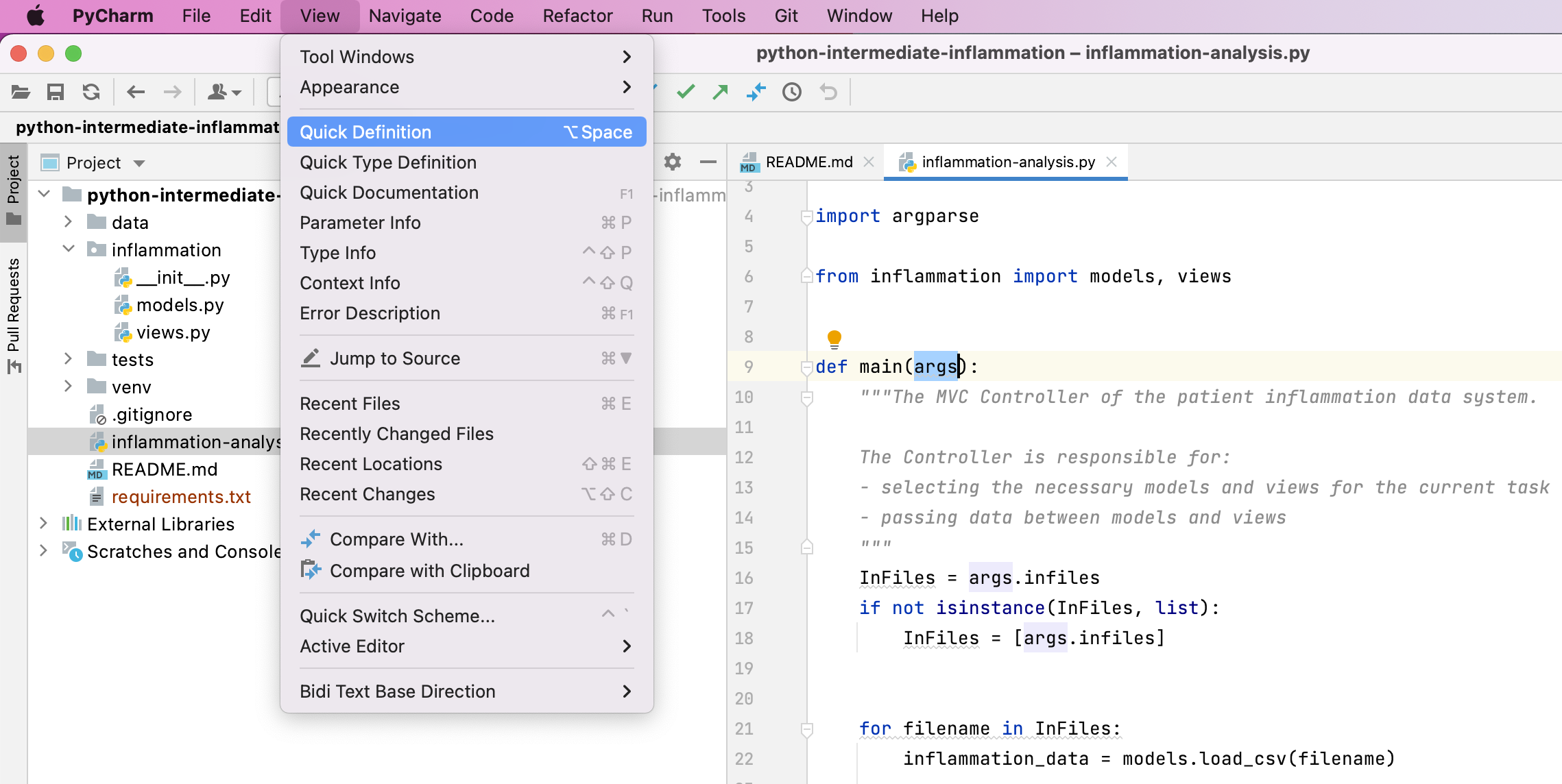

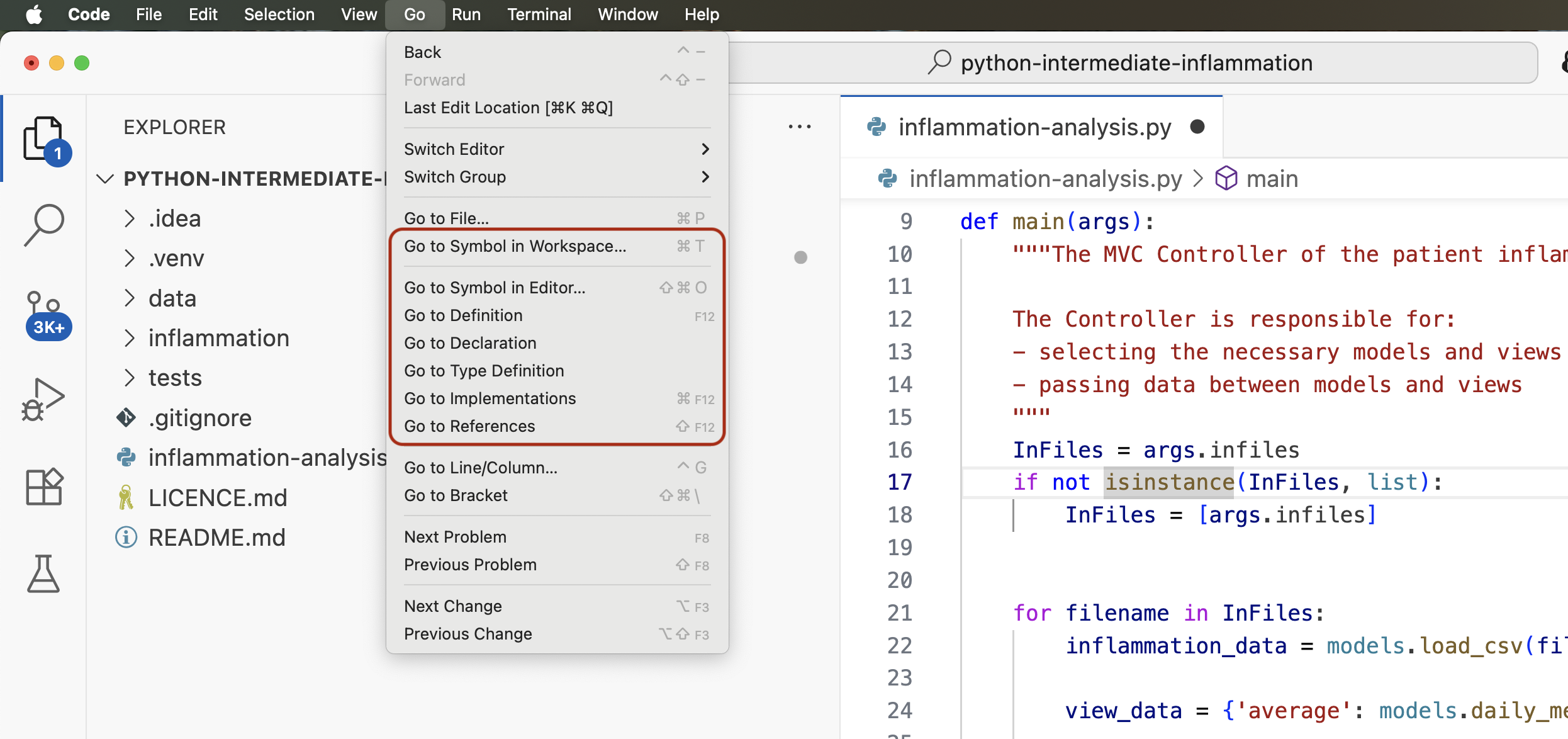

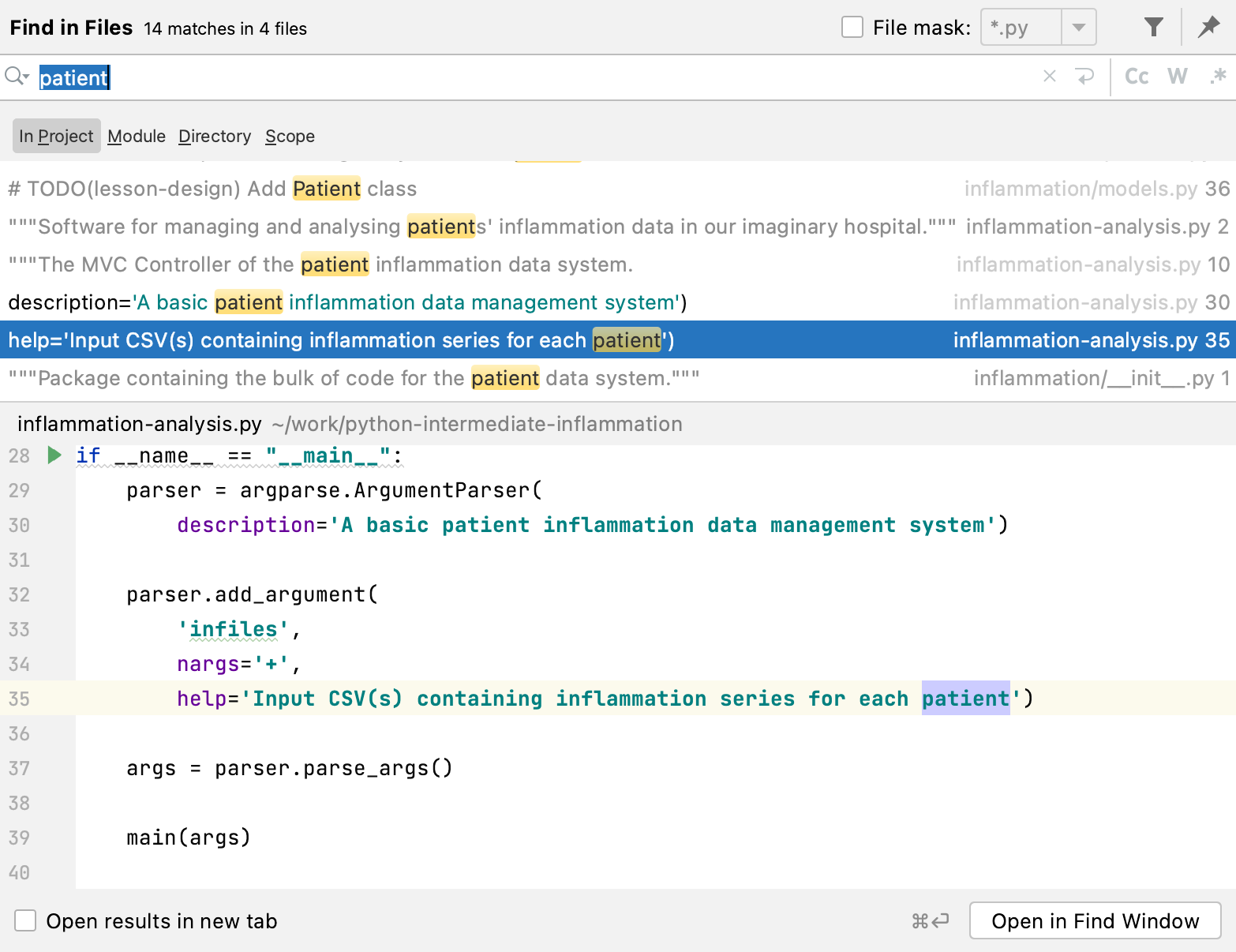

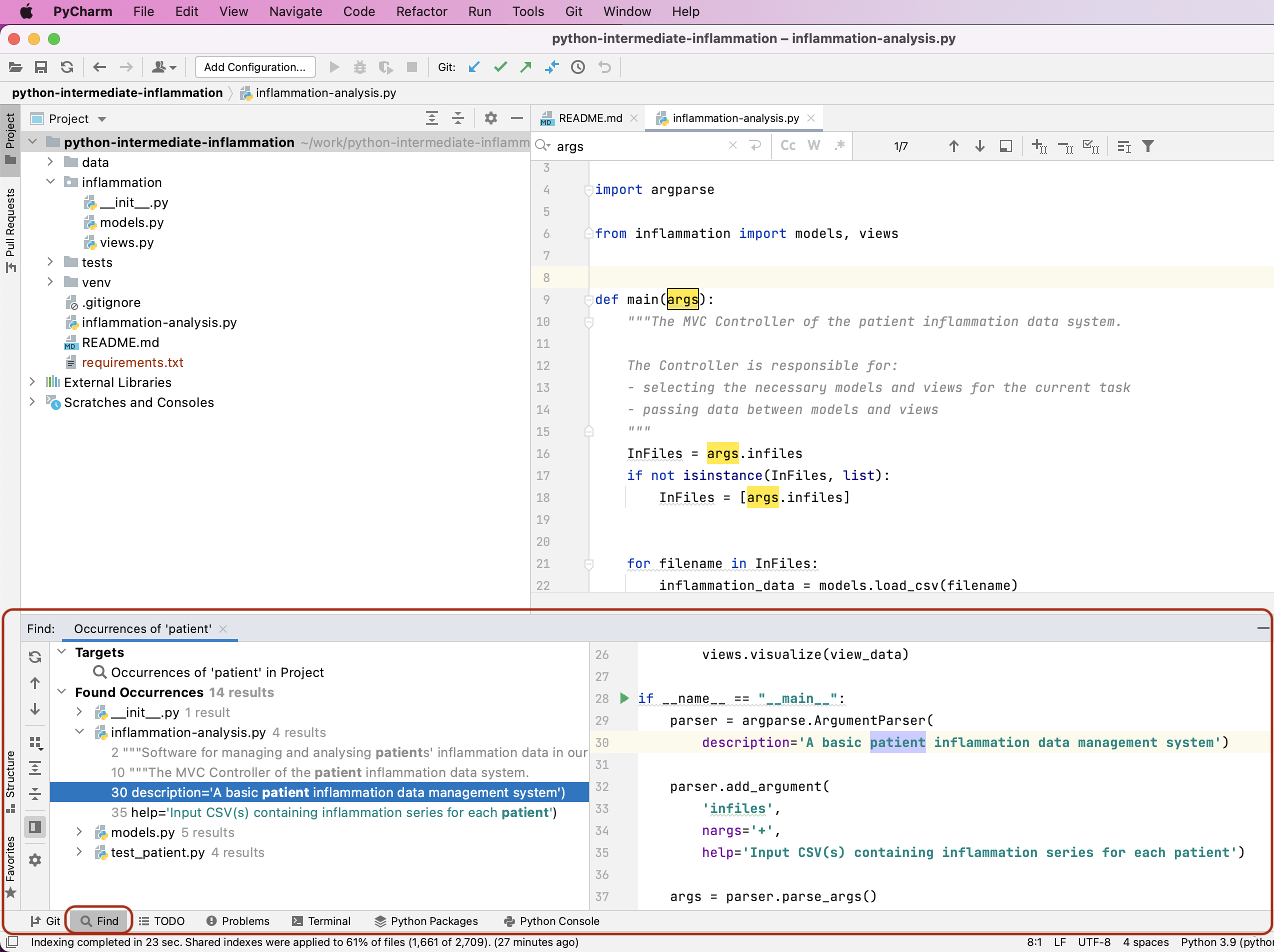

- code search - finding package, class, function and variable declarations, their usages and referencing

- version control support - to interact with source code repositories

- debugging support - for setting breakpoints in the code editor, step-by-step execution of code and inspection of variables

IDEs are extremely useful and modern software development would be very hard without them. There are a number of IDEs available for Python development; a good overview is available from the Python Project Wiki. In addition to IDEs, there are also a number of code editors that have Python support. Code editors can be as simple as a text editor with syntax highlighting and code formatting capabilities (e.g., GNU EMACS, Vi/Vim). Most good code editors can also execute code and control a debugger, and some can also interact with a version control system. Compared to an IDE, a good dedicated code editor is usually smaller and quicker, but often less feature-rich.

You will have to decide which one is the best for you in your daily work. In this course you have the choice of using two free and open source IDEs - PyCharm Community Edition from JetBrains or Microsoft’s Visual Studio Code (VS Code). A popular alternative to consider is free and open source Spyder IDE - we are not covering it here but it should be possible to switch.

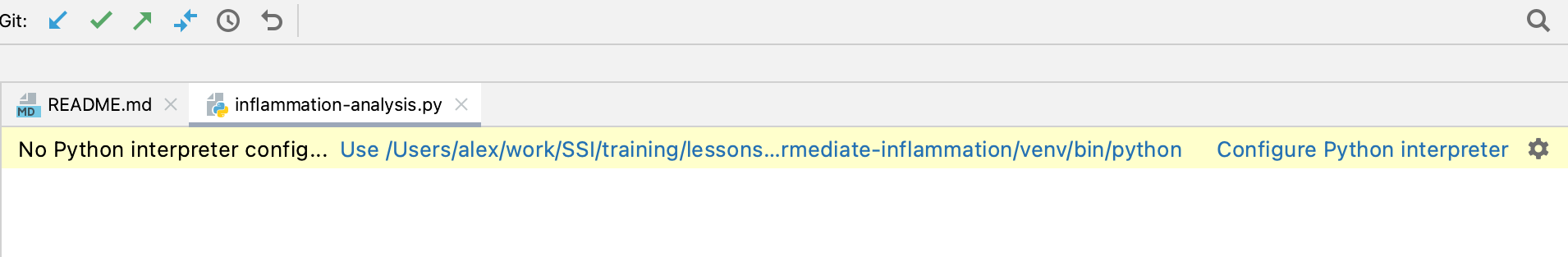

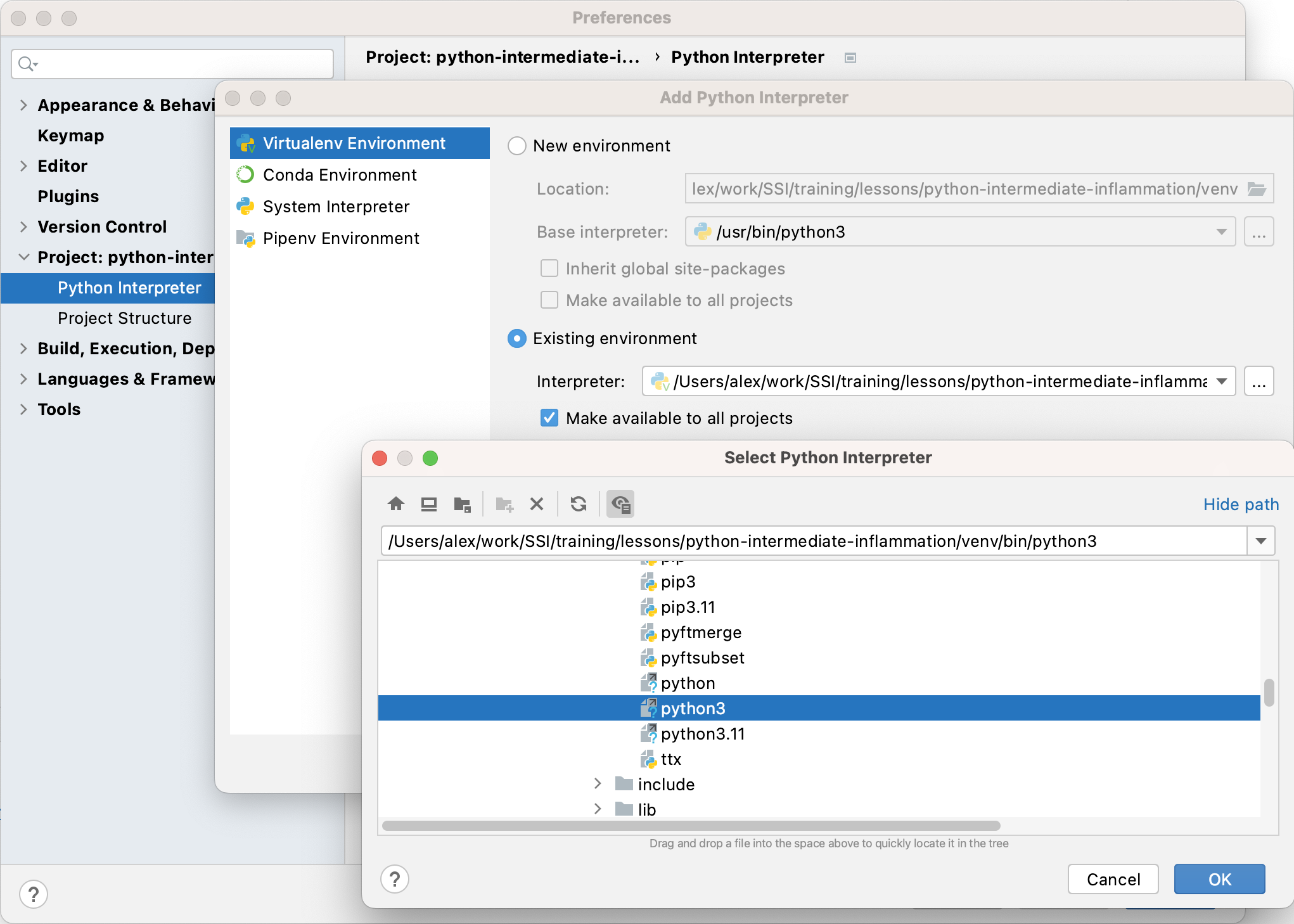

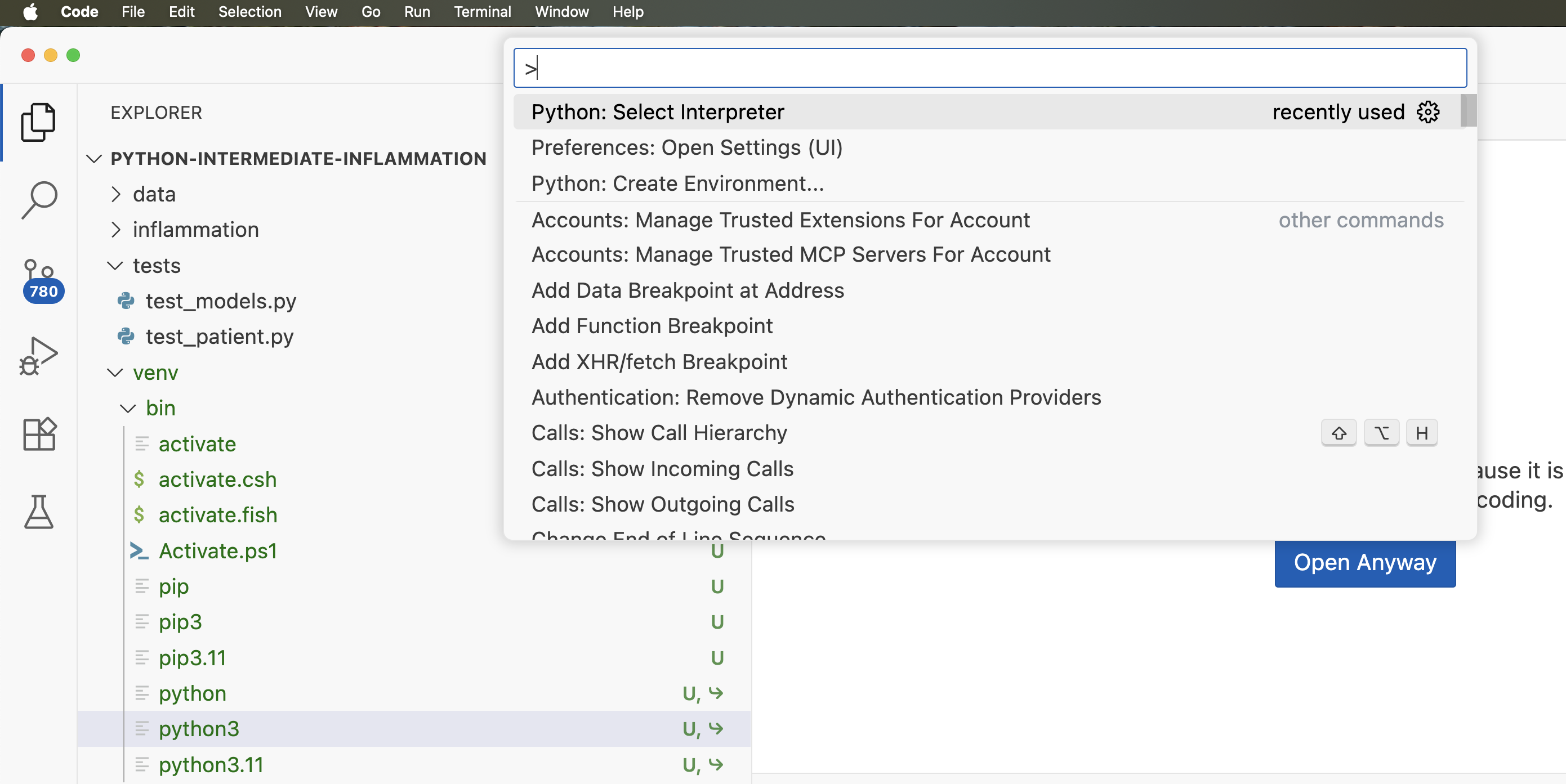

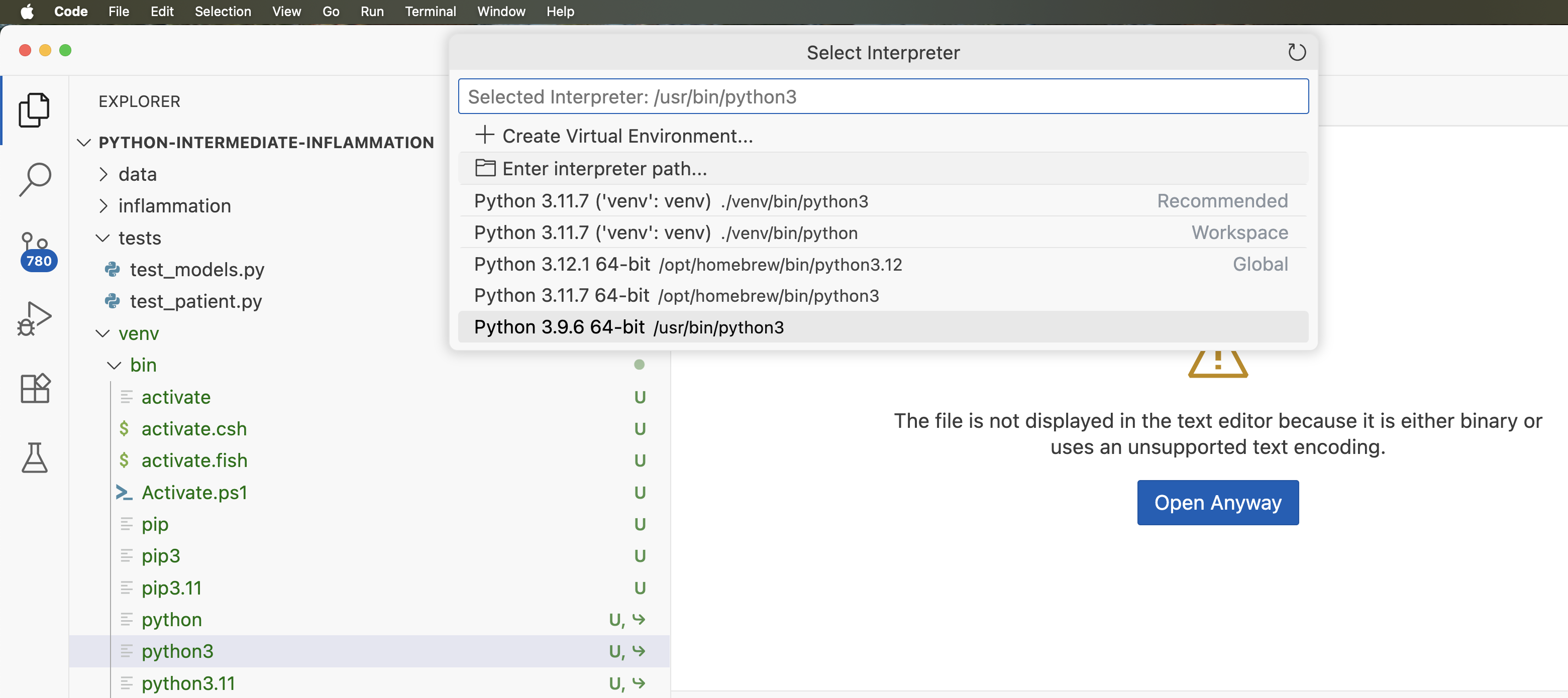

Configuring a Virtual Environment

Before you can run the code from an IDE, you need to explicitly tell the IDE the path to the Python interpreter on your system. The same goes for any dependencies your code may have (that form part of the virtual environment together with the Python interpreter) - you need to tell the IDE where to find them, much like we did from the command line in the previous episode.

Luckily for us, we have already set up a virtual environment for our project from the command line already and some IDEs are clever enough to understand it.

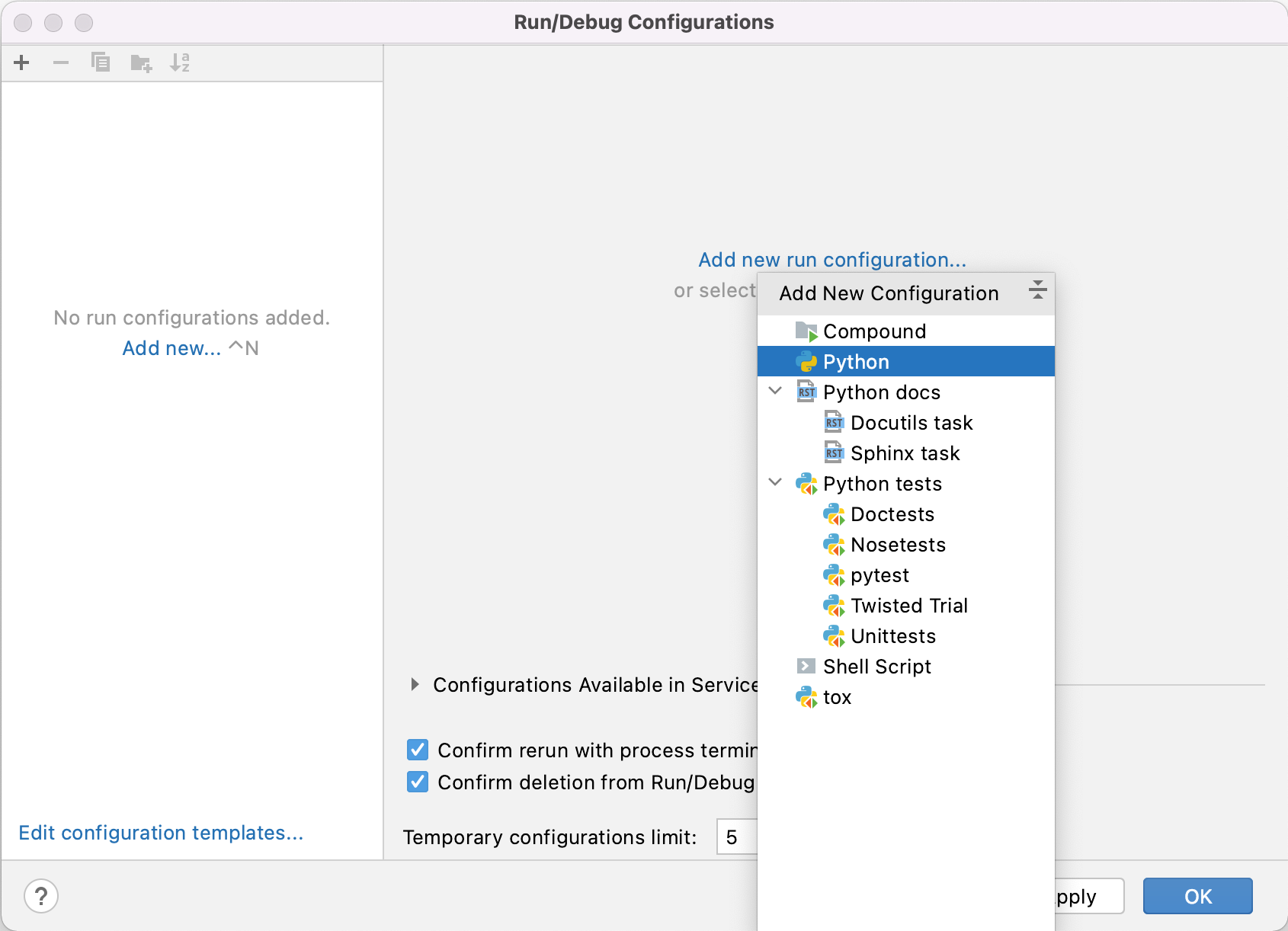

Adding a Python Interpreter

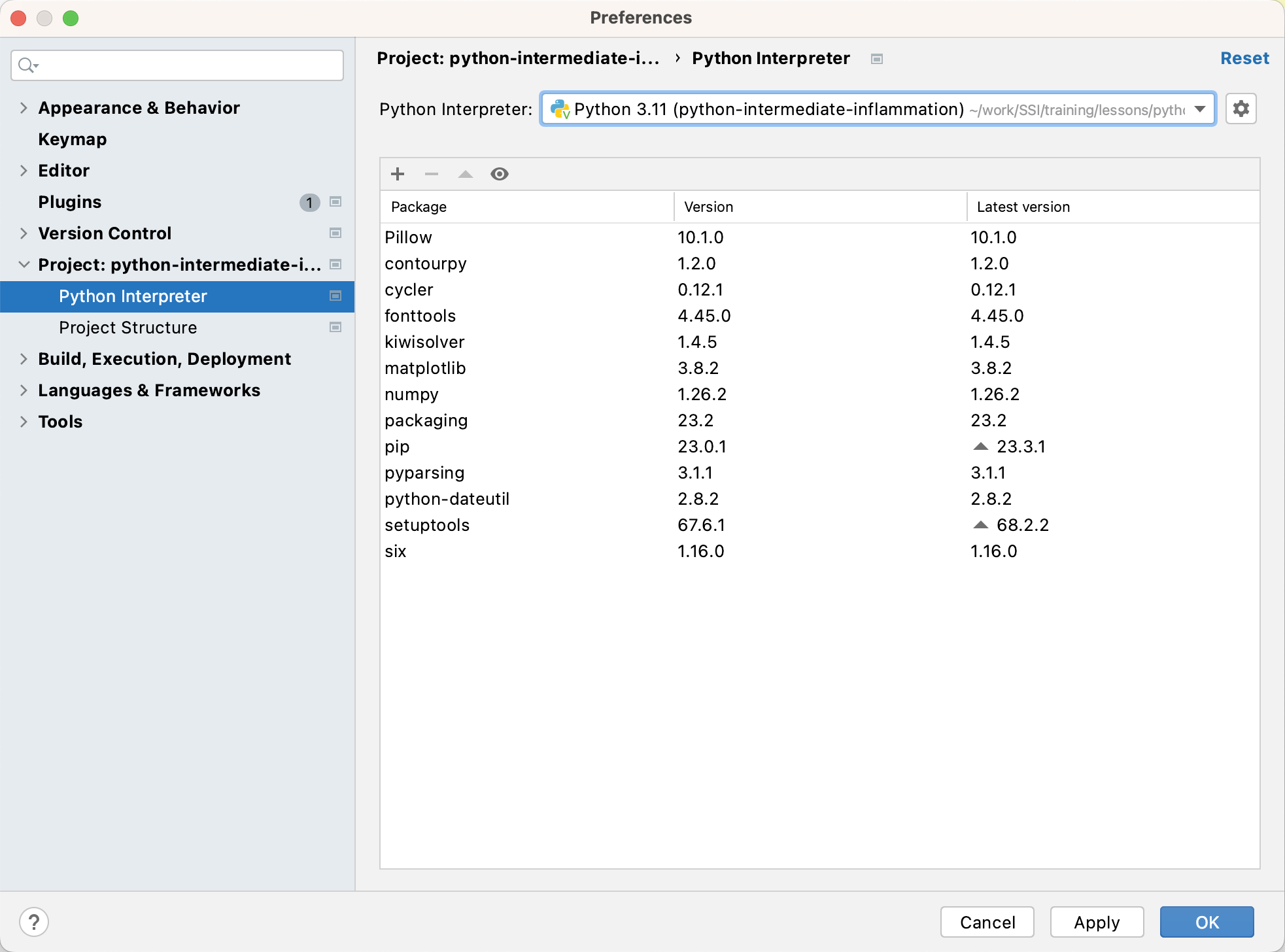

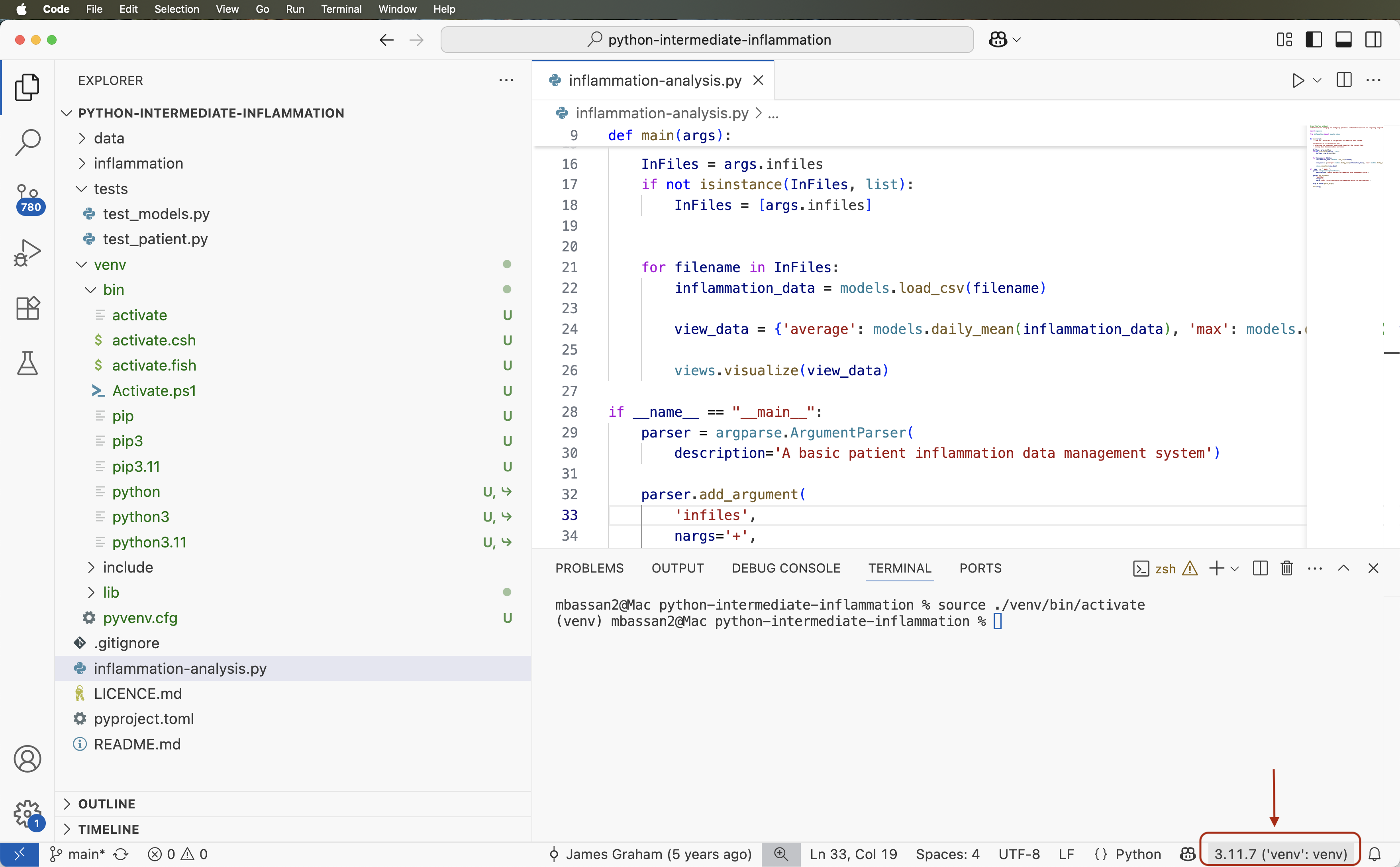

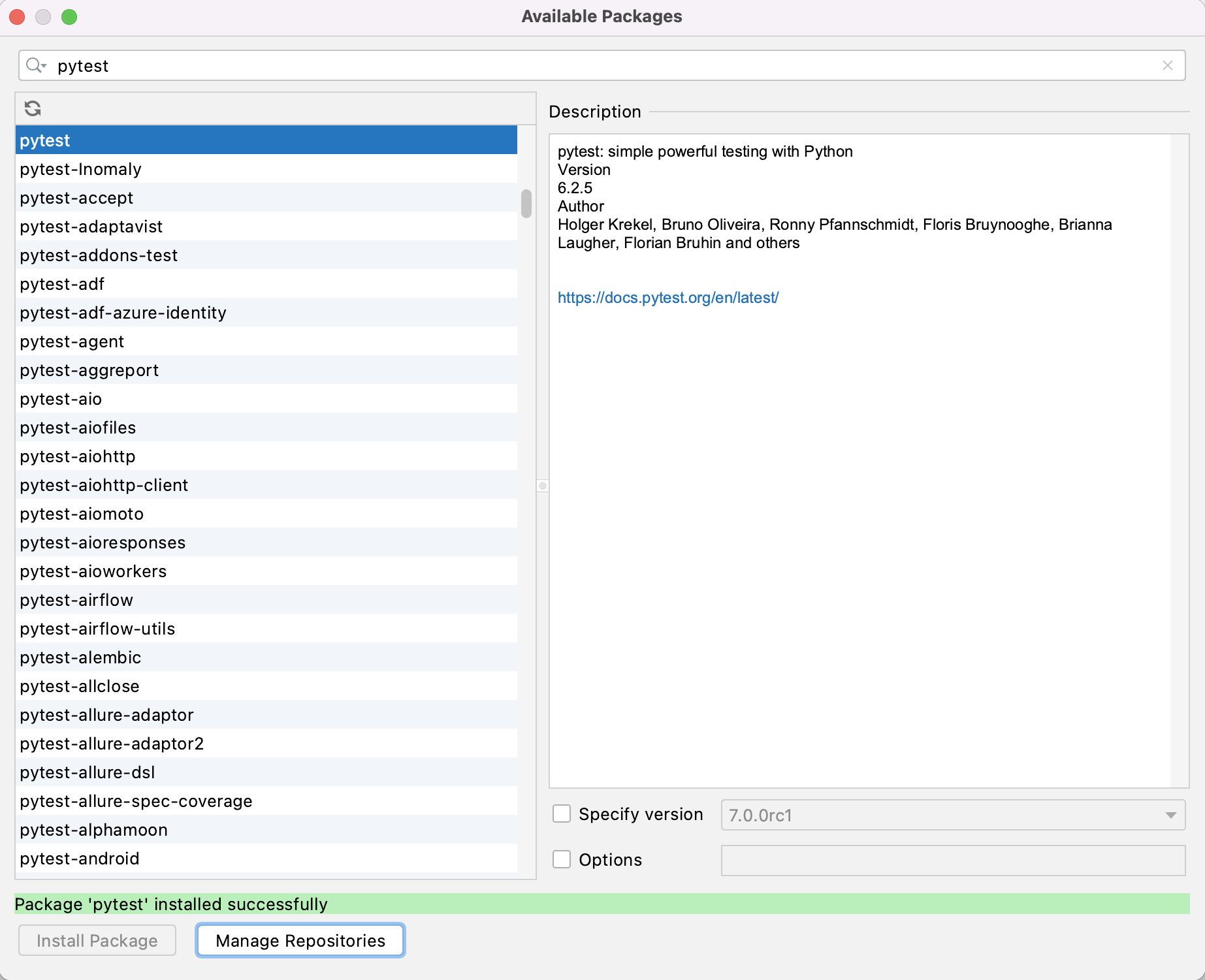

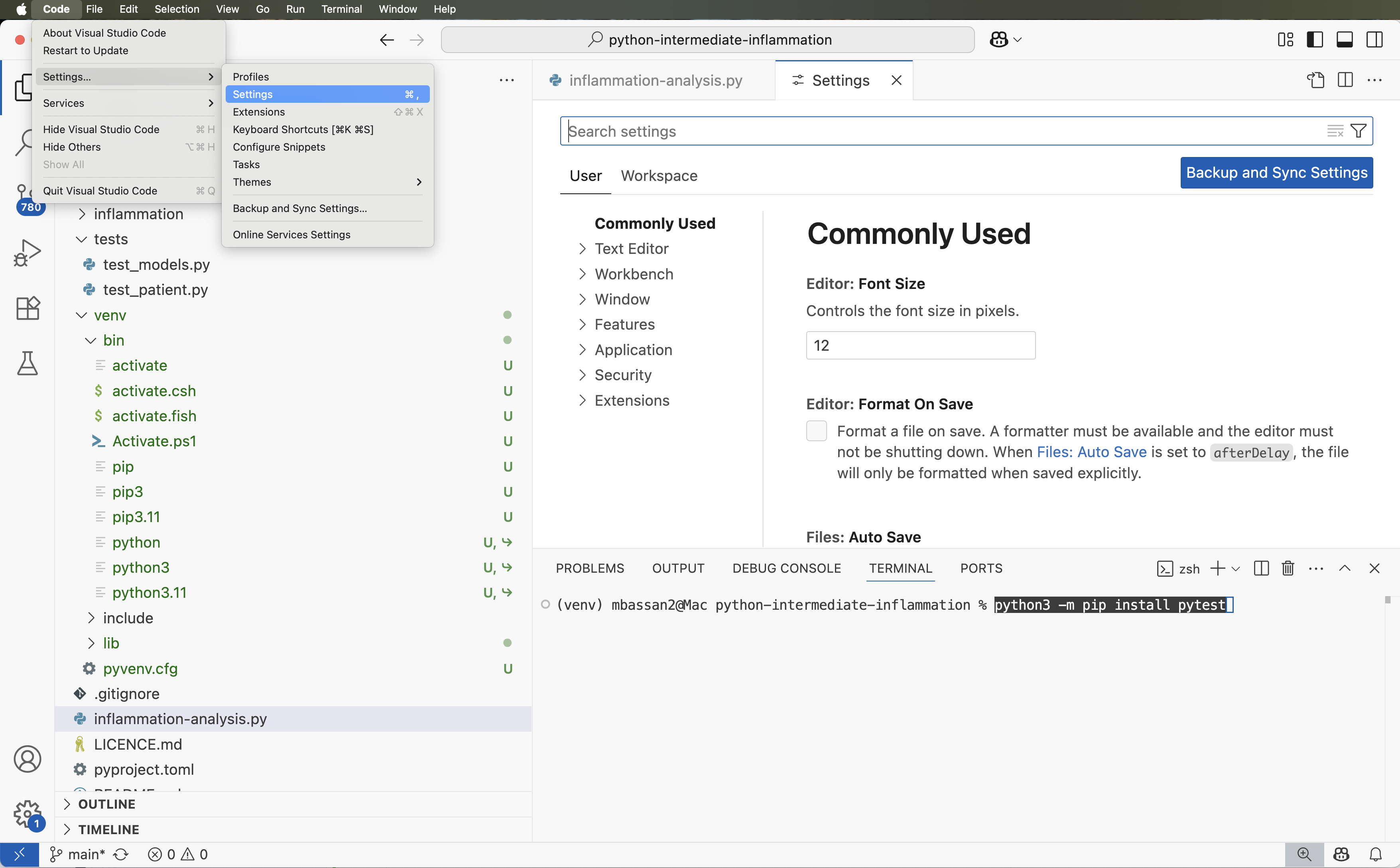

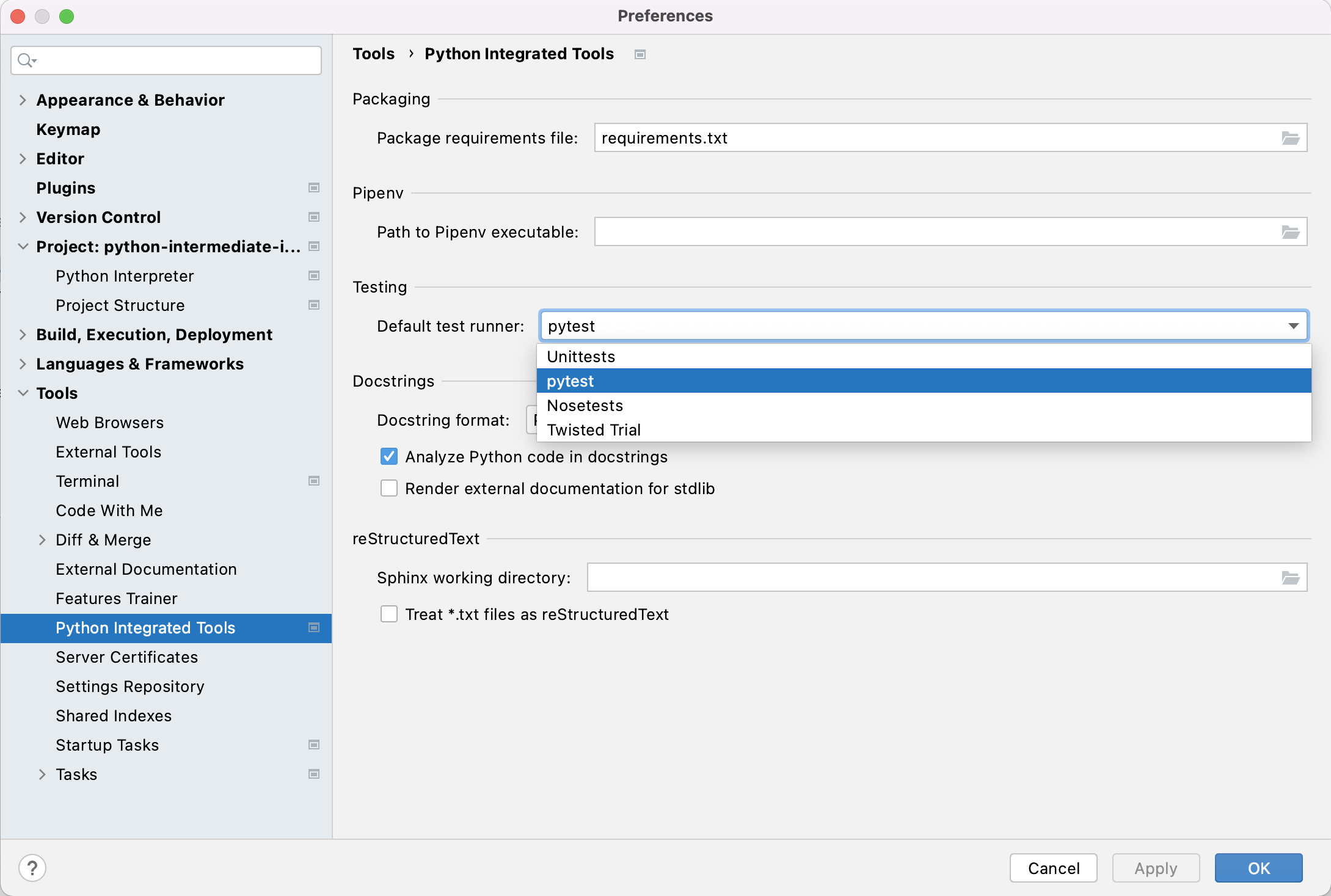

Adding an External Dependency from IDE

We have already added packages numpy and

matplotlib to our virtual environment from the command line

in the previous episode, so we are up-to-date with all external

libraries we require at the moment. However, we will need library

pytest soon to implement tests for our code. We will use

this opportunity to install it from the IDE in order to see an

alternative way of doing this and how it propagates to the command

line.

Update Requirements File After Adding a New Dependency

Export the newly updated virtual environment into

requirements.txt file.

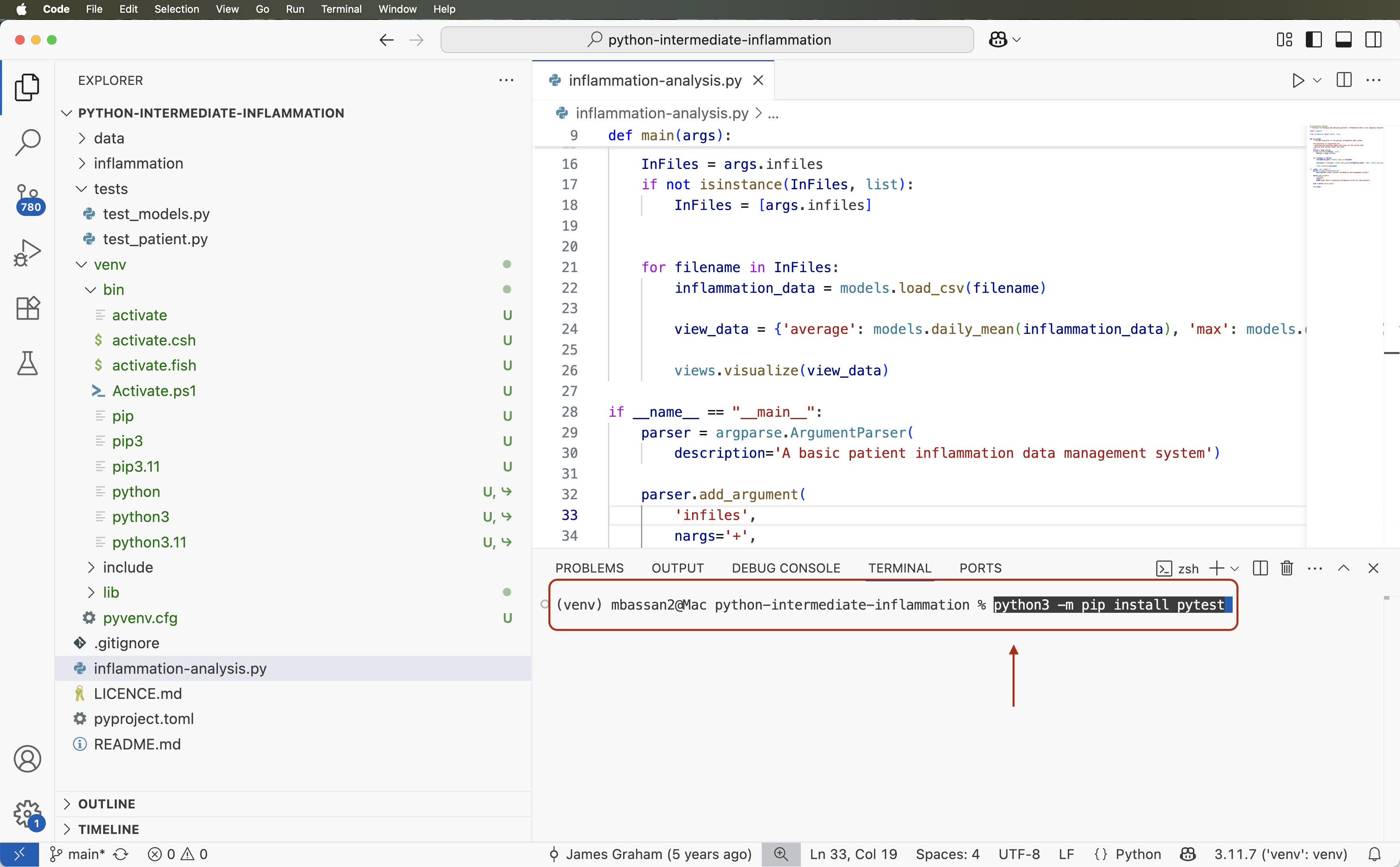

Let us verify first that the newly installed library

pytest is appearing in our virtual environment but not in

requirements.txt. First, let us check the list of installed

packages:

OUTPUT

Package Version

***

contourpy 1.2.0

cycler 0.12.1

fonttools 4.45.0

iniconfig 2.0.0

kiwisolver 1.4.5

matplotlib 3.8.2

numpy 1.26.2

packaging 23.2

Pillow 10.1.0

pip 23.0.1

pluggy 1.3.0

pyparsing 3.1.1

pytest 7.4.3

python-dateutil 2.8.2

setuptools 67.6.1

six 1.16.0We can see the pytest library appearing in the listing

above. However, if we do:

OUTPUT

contourpy==1.2.0

cycler==0.12.1

fonttools==4.45.0

kiwisolver==1.4.5

matplotlib==3.8.2

numpy==1.26.2

packaging==23.2

Pillow==10.1.0

pyparsing==3.1.1

python-dateutil==2.8.2

six==1.16.0pytest is missing from requirements.txt. To

add it, we need to update the file by repeating the command:

pytest is now present in

requirements.txt:

OUTPUT

contourpy==1.2.0

cycler==0.12.1

fonttools==4.45.0

iniconfig==2.0.0

kiwisolver==1.4.5

matplotlib==3.8.2

numpy==1.26.2

packaging==23.2

Pillow==10.1.0

pluggy==1.3.0

pyparsing==3.1.1

pytest==7.4.3

python-dateutil==2.8.2

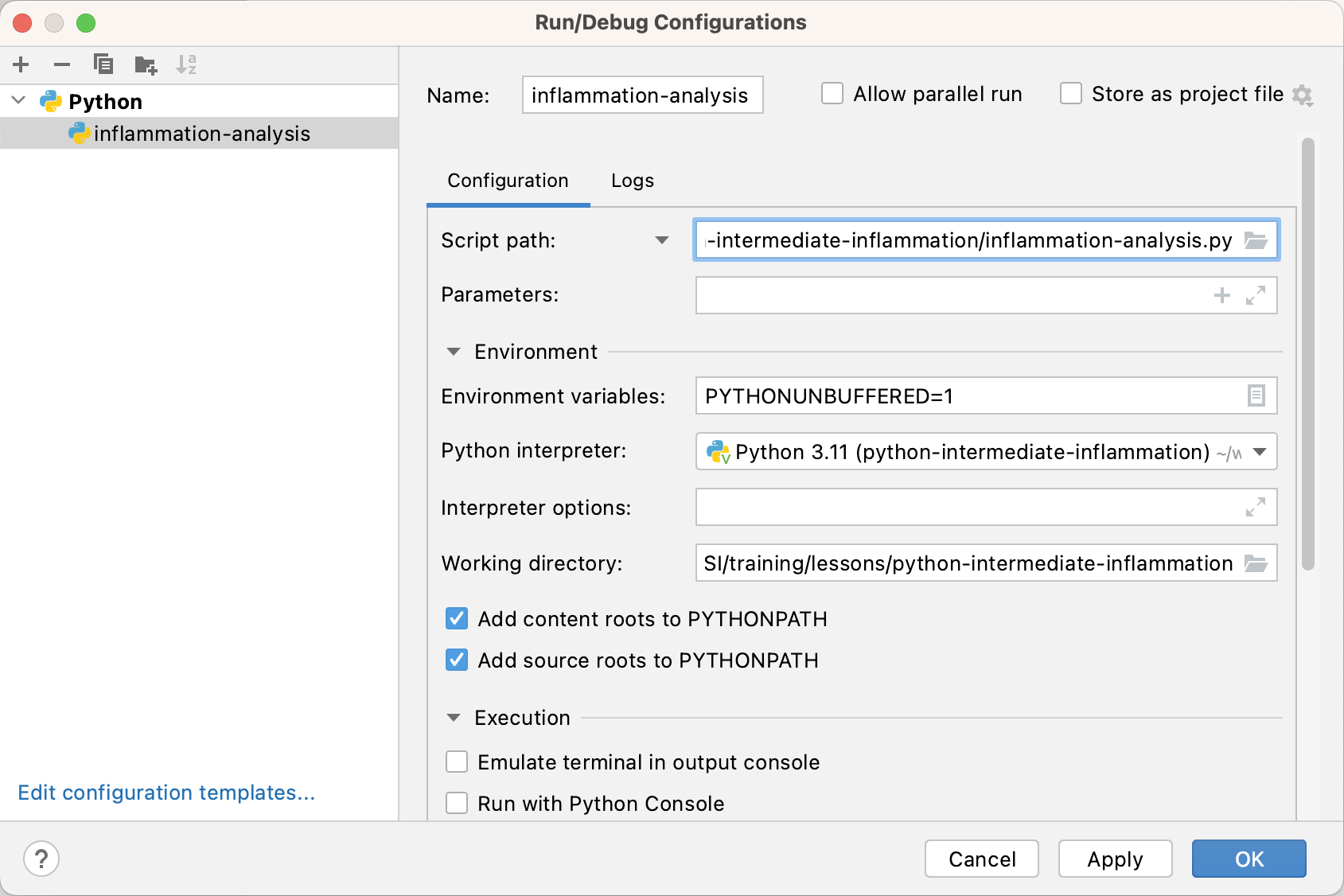

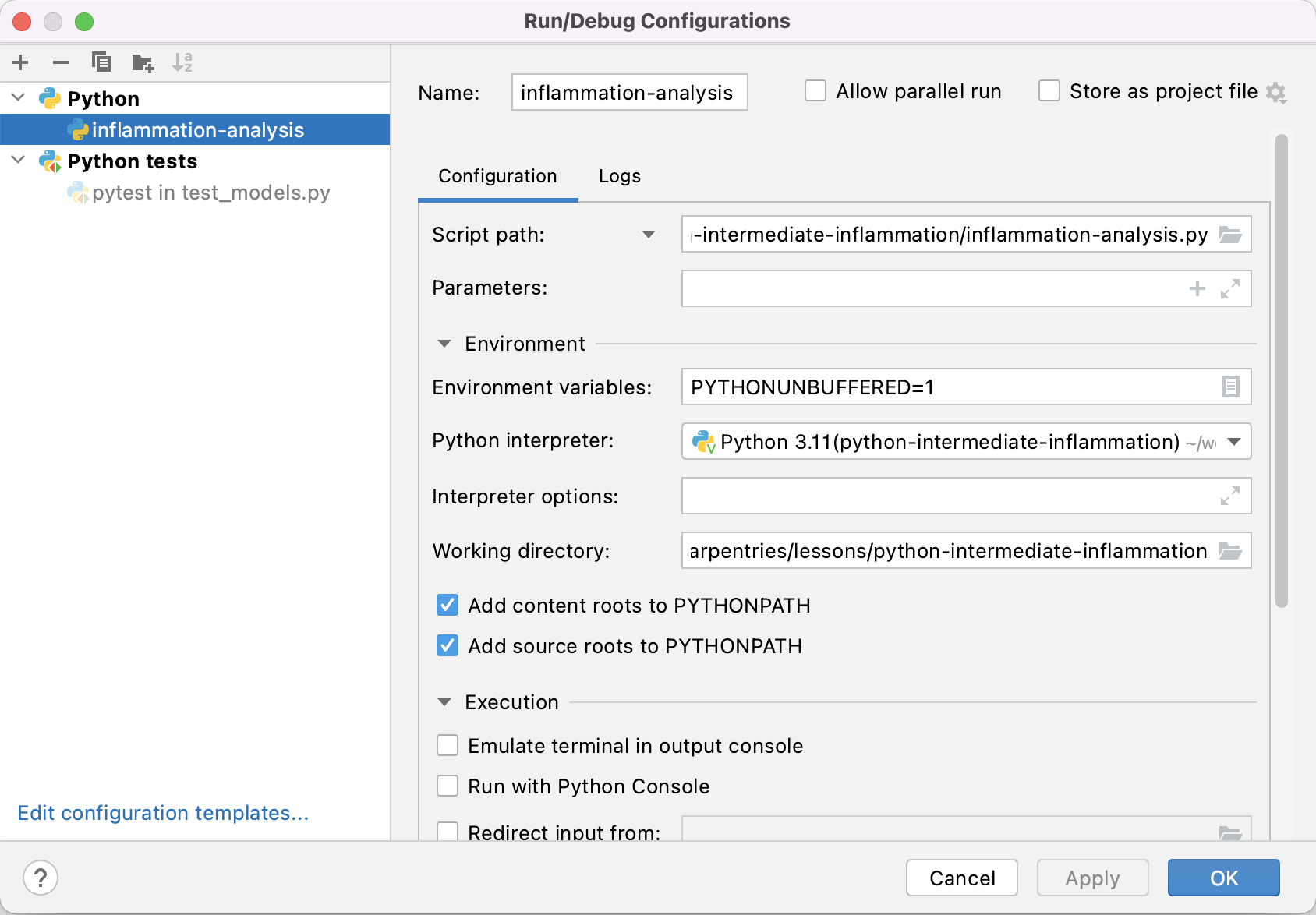

six==1.16.0Adding a Run Configuration for Our Project

Now you know how to configure and manipulate your environment in both tools (command line and IDE), which is a useful parallel to be aware of. As you may have noticed, using the command line terminal facility integrated into an IDE allows you to run commands (e.g. to manipulate files, interact with version control, etc.) and execute code without leaving the development environment, making it easier and faster to work by having all essential tools in one window.

Let us have a look at some other features afforded to us by IDEs.

Syntax Highlighting

The first thing you may notice is that code is displayed using different colours. Syntax highlighting is a feature that displays source code terms in different colours and fonts according to the syntax category the highlighted term belongs to. It also makes syntax errors visually distinct. Highlighting does not affect the meaning of the code itself - it is intended only for humans to make reading code and finding errors easier.

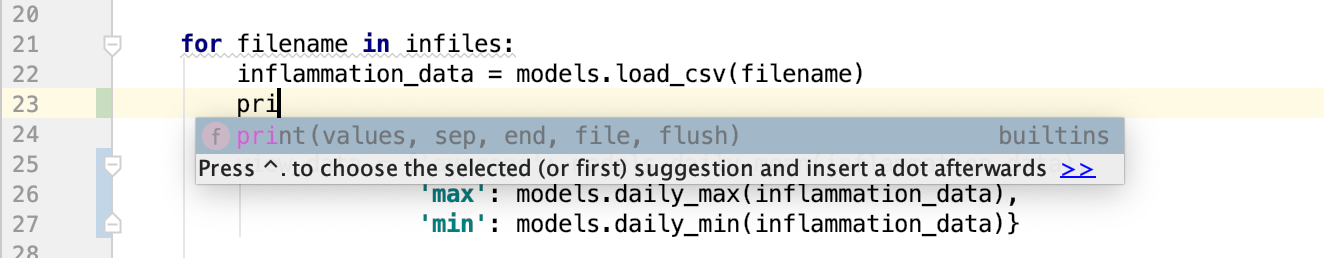

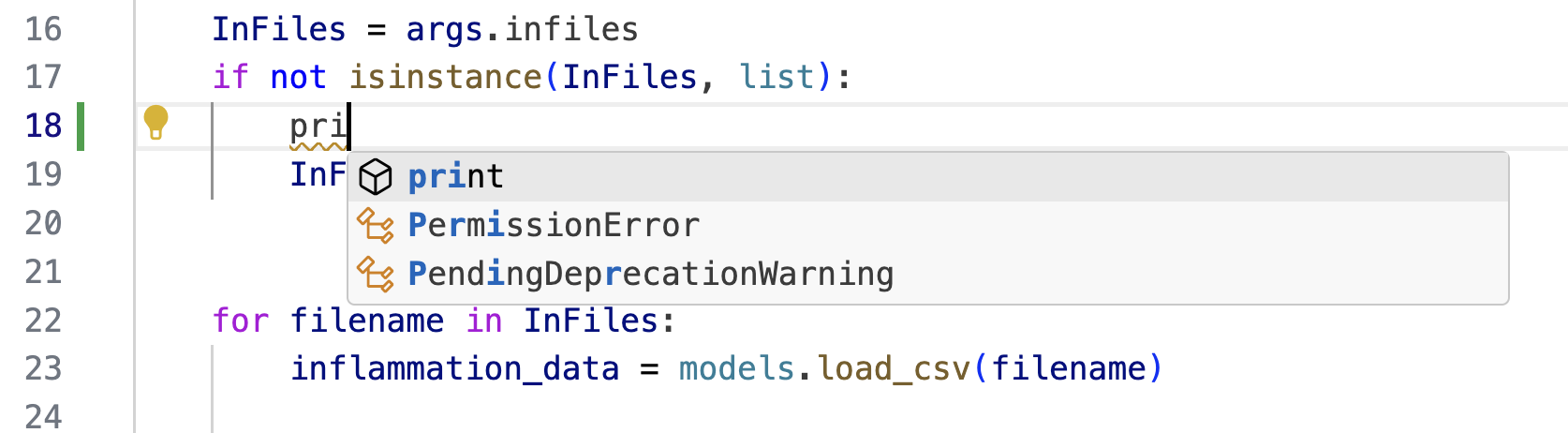

Code Completion

As you start typing code, the IDE will offer to complete some of the code for you in the form of an auto completion popup. This is a context-aware code completion feature that speeds up the process of coding (e.g. reducing typos and other common mistakes) by offering available variable names, functions from available packages, parameters of functions, hints related to syntax errors, etc.

Code Definition & Documentation References

You will often need code reference information to help you code. The IDE shows this useful information, such as definitions of symbols (e.g. functions, parameters, classes, fields, and methods) and documentation references by means of quick popups and inline tooltips.

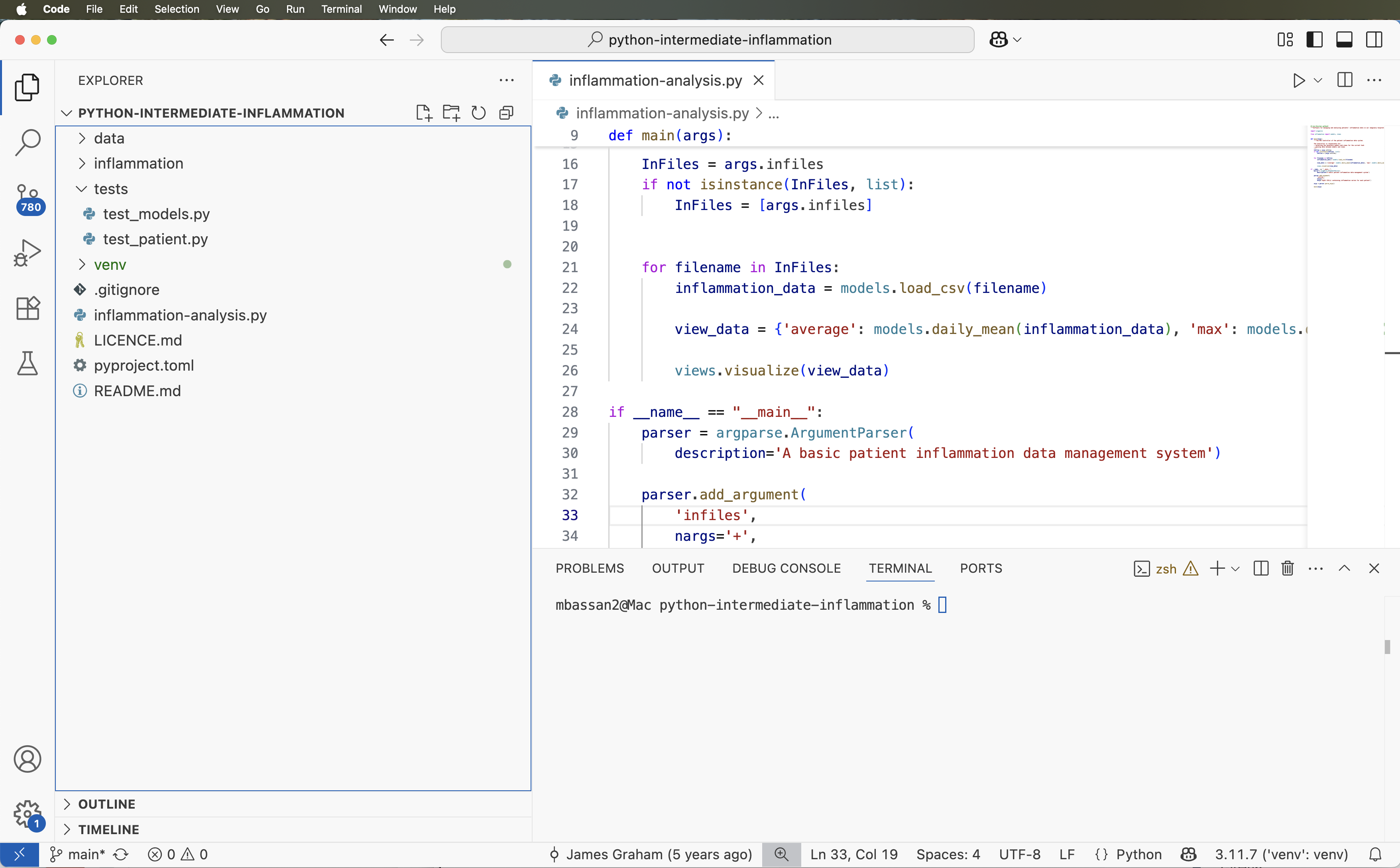

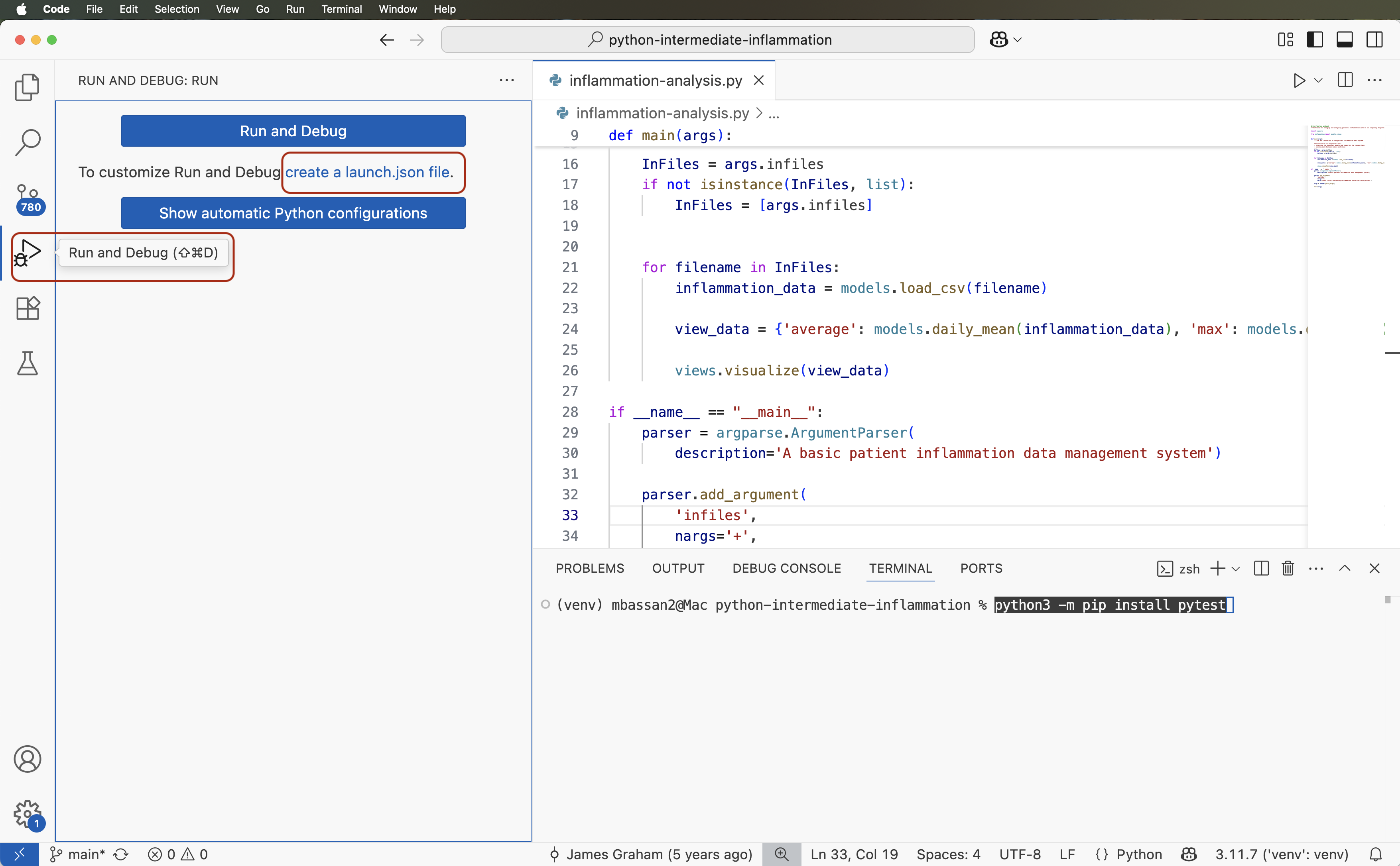

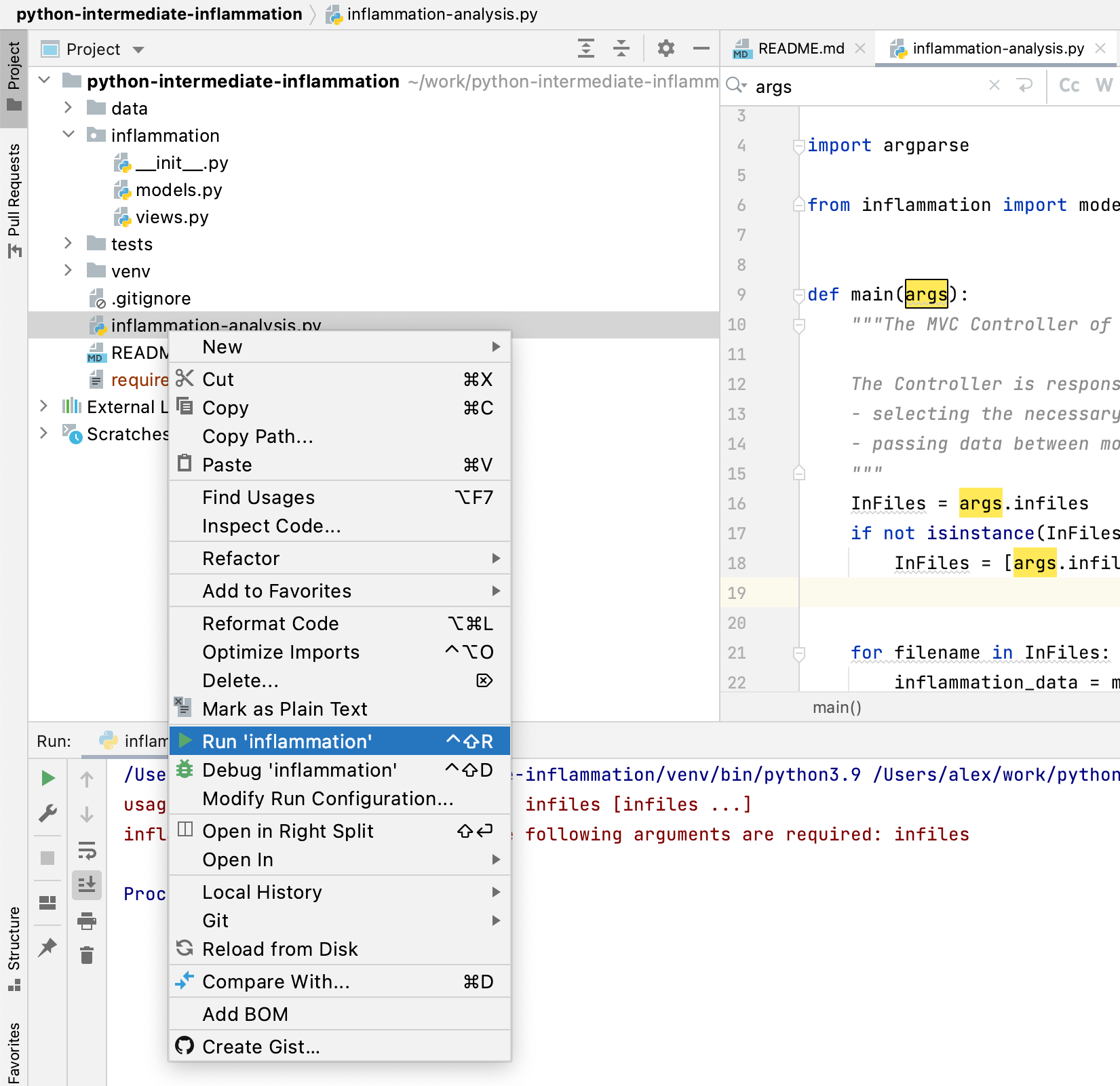

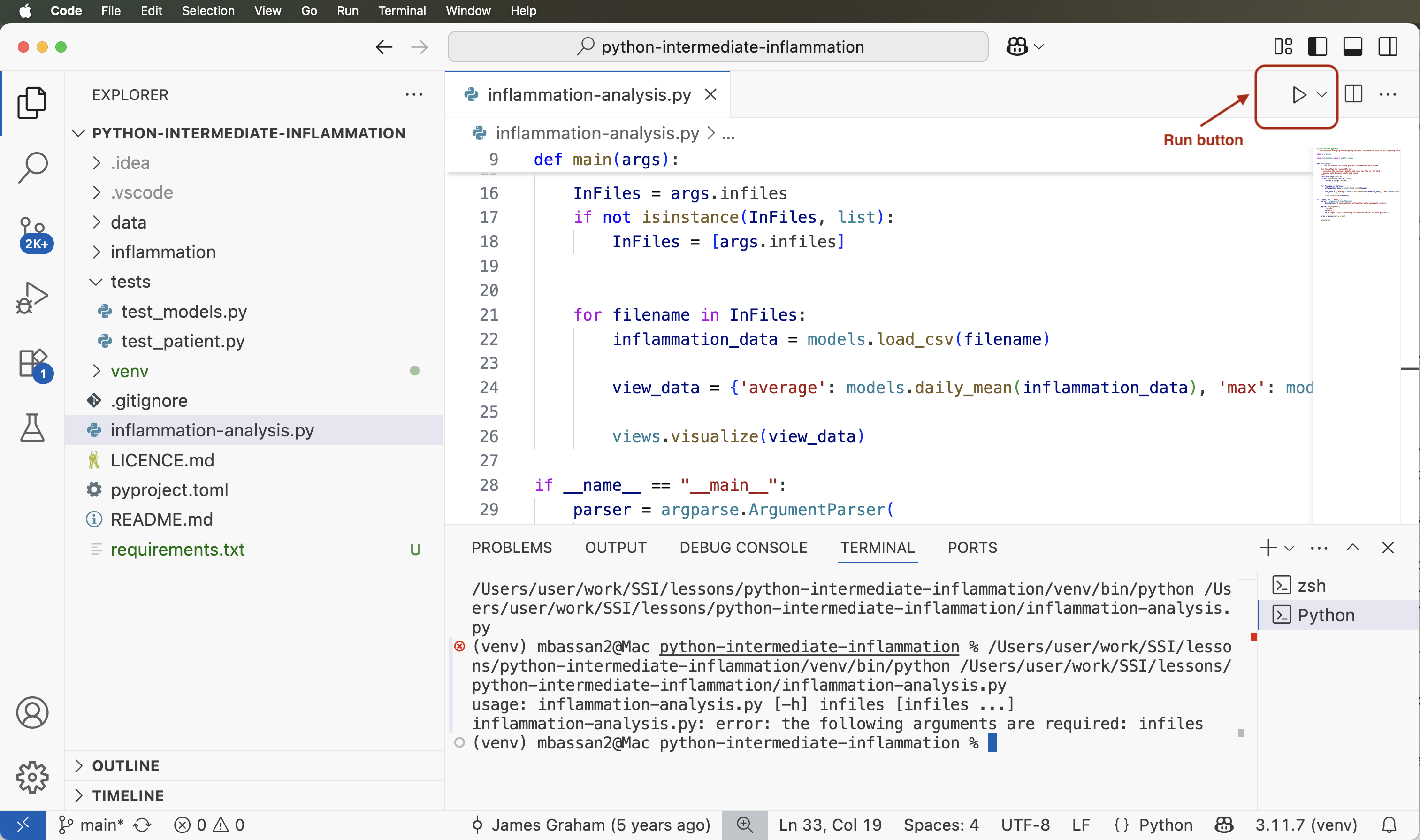

Running Code from IDE

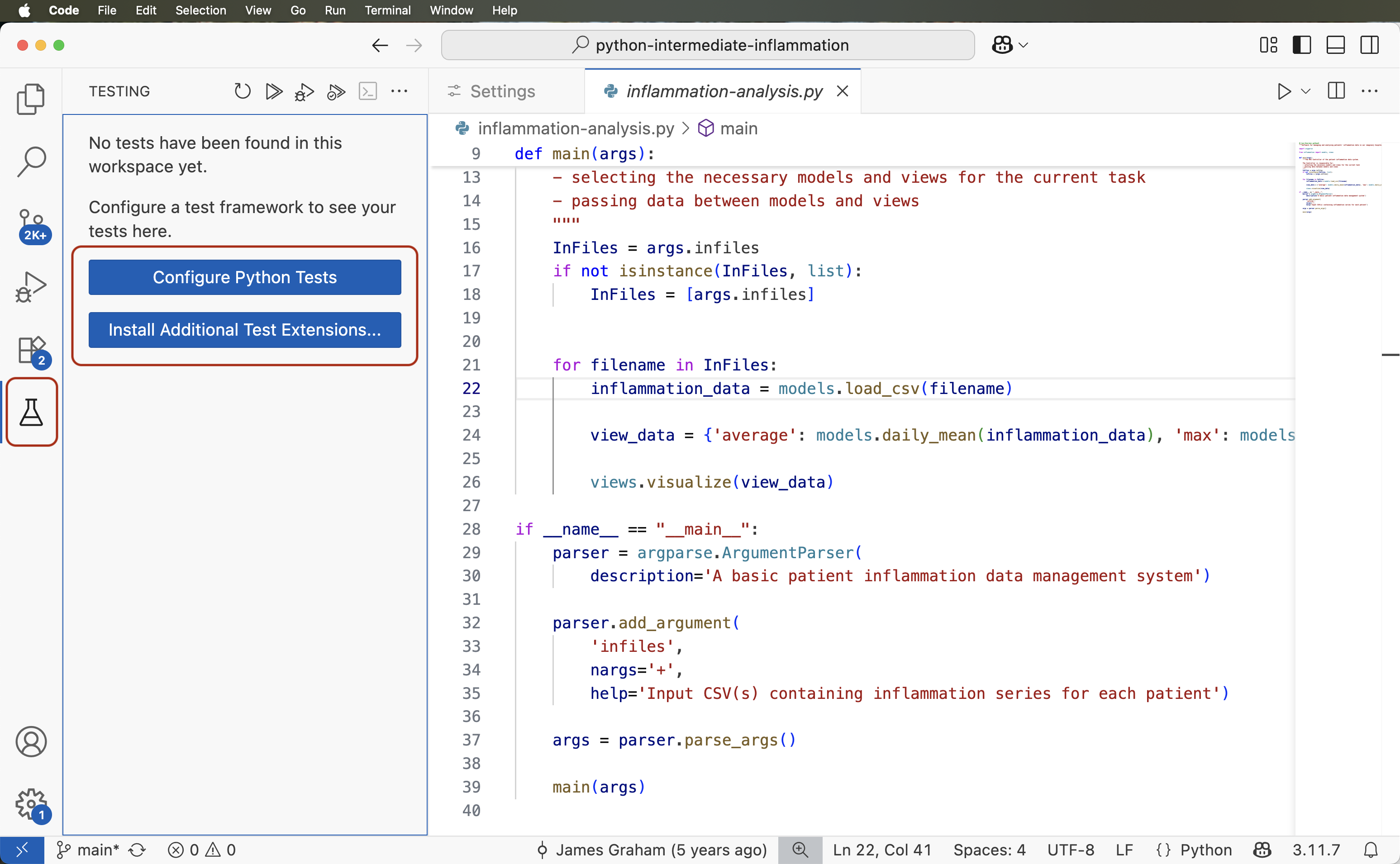

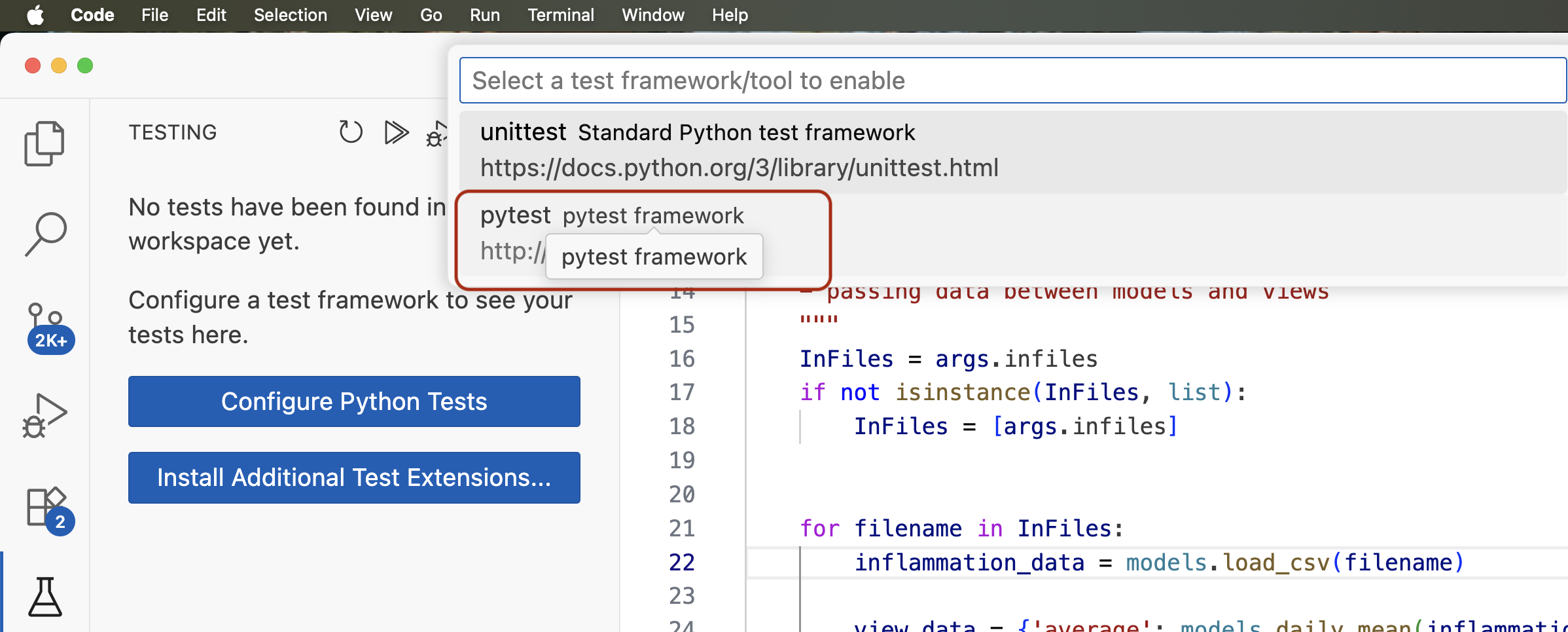

We have configured our environment and explored some of the most commonly used IDE features and are now ready to run our Python script from the IDE.

Running code using the graphical interface of an IDE provides a simple, user-friendly way to execute programs with just a click, reducing the need type commands manually in the command line terminal. On the other hand, running code from a terminal window in an IDE offers the flexibility and control of the command line — both approaches complement each other by supporting different user preferences and tasks within the same unified environment.

In this lesson, we prioritise using the command line and typing commands whenever possible, as these skills are easily transferable across different IDEs (with a note that you should feel free to use other equivalent ways for doing things that suit you more). However, for tasks like debugging - where the graphical interface offers significant advantages — we will make use of the IDE’s built-in visual tools.

The new terminal window will open at the bottom of the IDE window (or could be on the side - depending on how panes are split in your IDE), the script will be run inside it and the result will be displayed as:

OUTPUT

/Users/alex/work/python-intermediate-inflammation/venv/bin/python /Users/alex/work/python-intermediate-inflammation/inflammation-analysis.py

usage: inflammation-analysis.py [-h] infiles [infiles ...]

inflammation-analysis.py: error: the following arguments are required: infiles

Process finished with exit code 2This is the same error we got when running the script from the

command line! Essentially what happened was the IDE opened a command

line terminal within its interface and executed the Python command to

run the script for us (python3 inflammation-analysis.py) -

saving us some typing. You can carry on to run the Python script in

whatever way you find more convenient - some developers prefer to type

the commands in a terminal manually as that gives the feel of having

more control over what is happening and what commands are being

executed.

We will get back to the above error shortly - for now, the good thing is that we managed to set up our project for development both from the command line and IDE and are getting the same outputs. Before we move on to fixing errors and writing more code, let us have a look at the last set of tools for collaborative code development which we will be using in this course - Git and GitHub.

Optional exercises

Checkout this optional exercise to try out different IDEs and code editors.

- An IDE is an application that provides a comprehensive set of facilities for software development, including syntax highlighting, code search and completion, version control, testing and debugging.

- IDEs like PyCharm and VS Code recognise virtual environments

configured from the command line using

venvandpip.

Content from 1.4 Software Development Using Git and GitHub

Last updated on 2026-02-24 | Edit this page

Estimated time: 35 minutes

Overview

Questions

- What are Git branches and why are they useful for code development?

- What are some best practices when developing software collaboratively using Git?

Objectives

- Commit changes in a software project to a local repository and publish them in a remote repository on GitHub

- Create branches for managing different threads of code development

- Learn to use feature branch workflow to effectively collaborate with a team on a software project

Introduction

So far we have checked out our software project from GitHub and used command line tools to configure a virtual environment for our project and run our code. We have also familiarised ourselves with our IDE of choice - a graphical tool we will use for code development, testing and debugging. We are now going to start using another set of tools from the collaborative code development toolbox - namely, the version control system Git and code sharing platform GitHub. These two will enable us to track changes to our code and share it with others.

You may recall that we have already made some changes to our project

locally - we created a virtual environment in the directory called

“venv” and exported it to the requirements.txt file. We

should now decide which of those changes we want to check in and share

with others in our team. This is a typical software development workflow

- you work locally on code, test it to make sure it works correctly and

as expected, then record your changes using version control and share

your work with others via a shared and centrally backed-up

repository.

Firstly, let us remind ourselves how to work with Git from the command line.

Git Refresher

Git is a version control system for tracking changes in computer files and coordinating work on those files among multiple people. It is primarily used for source code management in software development but it can be used to track changes in files in general - it is particularly effective for tracking text-based files (e.g. source code files, CSV, Markdown, HTML, CSS, Tex, etc. files).

Git has several important characteristics:

- support for non-linear development allowing you and your colleagues to work on different parts of a project concurrently,

- support for distributed development allowing for multiple people to be working on the same project (even the same file) at the same time,

- every change recorded by Git remains part of the project history and can be retrieved at a later date, so even if you make a mistake you can revert to a point before it.

The diagram below shows a typical software development lifecycle with Git (in our case starting from making changes in a local branch that “tracks” a remote branch) and the commonly used commands to interact with different parts of the Git infrastructure, including:

-

working tree - a local directory (including any

subdirectories) where your project files live and where you are

currently working. It is also known as the “untracked” area of Git or

“working directory”. Any changes to files will be marked by Git in the

working tree. If you make changes to the working tree and do not

explicitly tell Git to save them - you will likely lose those changes.

Using

git add filenamecommand, you tell Git to start tracking changes to filefilenamewithin your working tree. -

staging area (index) - once you tell Git to start

tracking changes to files (with

git add filenamecommand), Git saves those changes in the staging area on your local machine. Each subsequent change to the same file needs to be followed by anothergit add filenamecommand to tell Git to update it in the staging area. To see what is in your working tree and staging area at any moment (i.e. what changes is Git tracking), run the commandgit status. -

local repository - stored within the

.gitworking tree of your project locally, this is where Git wraps together all your changes from the staging area and puts them using thegit commitcommand. Each commit is a new, permanent snapshot (checkpoint, record) of your project in time, which you can share or revert to. -

remote repository - this is a version of your

project that is hosted somewhere on the Internet (e.g., on GitHub,

GitLab or somewhere else). While your project is nicely

version-controlled in your local repository, and you have snapshots of

its versions from the past, if your machine crashes - you still may lose

all your work. Furthermore, you cannot share or collaborate on this

local work with others easily. Working with a remote repository involves

pushing your local changes remotely (using

git push) and pulling other people’s changes from a remote repository to your local copy (usinggit fetchorgit pull) to keep the two in sync in order to collaborate (with a bonus that your work also gets backed up to another machine). Note that a common best practice when collaborating with others on a shared repository is to always do agit pullbefore agit push, to ensure you have any latest changes before you push your own.

sequenceDiagram

accDescr {Basic development lifecycle with Git and commands for moving files between the Git infrastructure: working tree, staging area, local repository, and remote repository.}

Working Tree-->>+Staging Area: git add

Staging Area-->>+Local Repository: git commit

Local Repository-->>+Remote Repository: git push

Remote Repository-->>+Local Repository: git fetch

Local Repository-->>+Working Tree:git checkout

Local Repository-->>+Working Tree:git merge

Remote Repository-->>+Working Tree: git pull (shortcut for git fetch followed by git checkout/merge)Checking-in Changes to Our Project

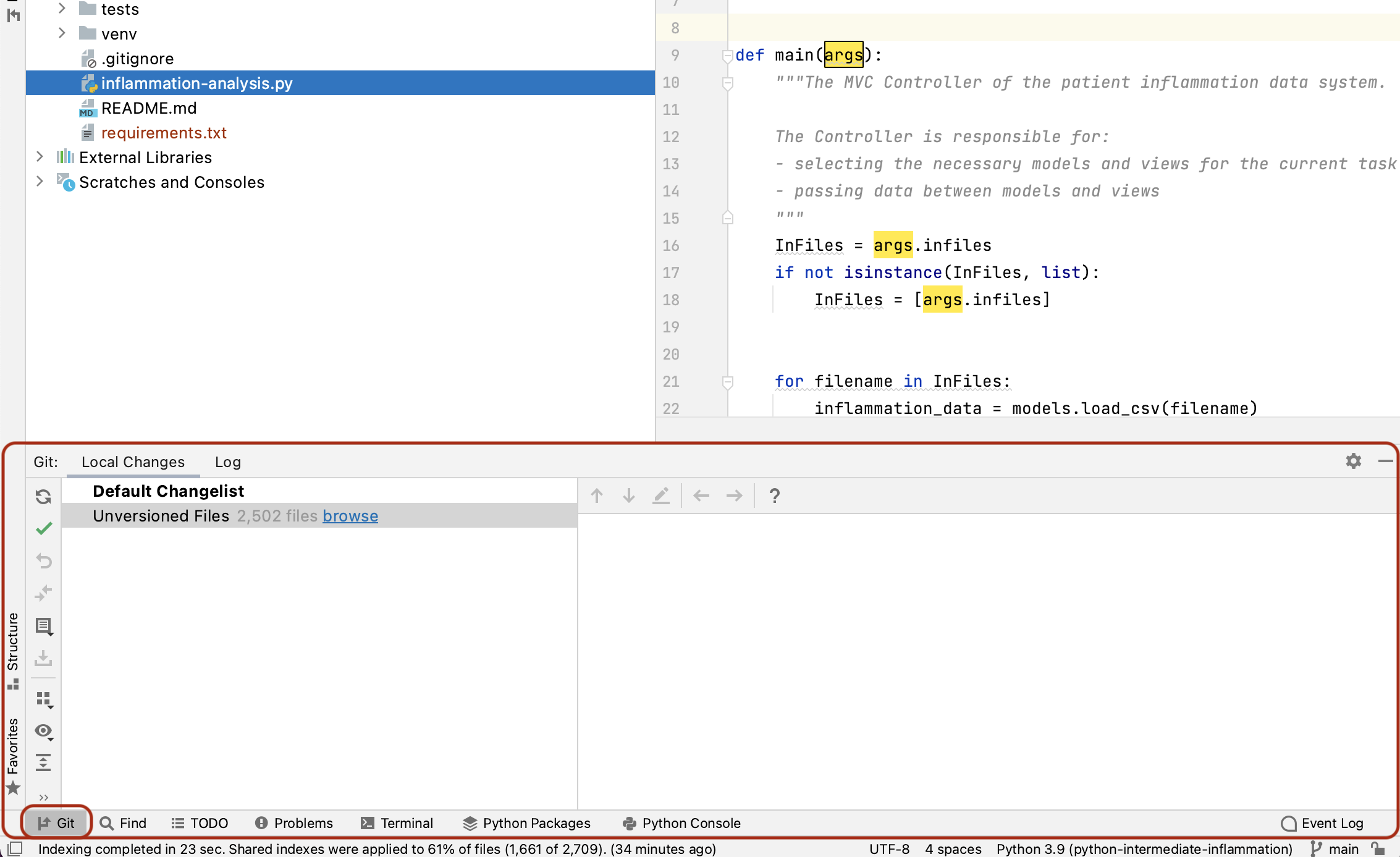

Let us check-in the changes we have done to our project so far. The first thing to do upon navigating into our software project’s directory root is to check the current status of our local working directory and repository.

OUTPUT

On branch main

Your branch is up to date with 'origin/main'.

Untracked files:

(use "git add <file>..." to include in what will be committed)

requirements.txt

venv/

nothing added to commit but untracked files present (use "git add" to track)As expected, Git is telling us that we have some untracked files -

requirements.txt and directory “venv” - present in our

working directory which we have not staged nor committed to our local

repository yet. You do not want to commit the newly created directory

“venv” and share it with others because this directory is specific to

your machine and setup only (i.e. it contains local paths to libraries

on your system that most likely would not work on any other machine).

You do, however, want to share requirements.txt with your

team as this file can be used to replicate the virtual environment on

your collaborators’ systems.

To tell Git to intentionally ignore and not track certain files and

directories, you need to specify them in the .gitignore

text file. A common place to put this file is in the project root. Our

project already has .gitignore, but in cases where you do

not have it - you can simply create it yourself. In our case, we want to

tell Git to ignore the “venv” directory (and “.venv” as another naming

convention for directories containing virtual environments) and stop

notifying us about it. Edit your .gitignore file in your

IDE and add a line containing “venv/” and another one containing

“.venv/”. It does not matter much in this case where within the file you

add these lines, so let us do it at the end. Your

.gitignore should look something like this:

OUTPUT

# IDEs

.vscode/

.idea/

# Intermediate Coverage file

.coverage

# Output files

*.png

# Python runtime

*.pyc

*.egg-info

.pytest_cache

# Virtual environments

venv/

.venv/You may notice that we are already not tracking certain files and

directories with useful comments about what exactly we are ignoring. You

may also notice that each line in .gitignore is actually a

pattern, so you can ignore multiple files that match a pattern

(e.g. “*.png” will ignore all PNG files in the current directory).

If you run the git status command now, you will notice

that Git has cleverly understood that you want to ignore changes to the

“venv” directory so it is not warning us about it any more. However, it

has now detected a change to .gitignore file that needs to

be committed.

OUTPUT

On branch main

Your branch is up to date with 'origin/main'.

Changes not staged for commit:

(use "git add <file>..." to update what will be committed)

(use "git restore <file>..." to discard changes in working directory)

modified: .gitignore

Untracked files:

(use "git add <file>..." to include in what will be committed)

requirements.txt

no changes added to commit (use "git add" and/or "git commit -a")To commit the changes .gitignore and

requirements.txt to the local repository, we first have to

add these files to staging area to prepare them for committing. We can

do that at the same time as:

Now we can commit them to the local repository with:

Remember to use meaningful messages for your commits.

So far we have been working in isolation - all the changes we have

done are still only stored locally on our individual machines. In order

to share our work with others, we should push our changes to the remote

repository on GitHub. Before we push our changes however, we should

first do a git pull. This is considered best practice,

since any changes made to the repository - notably by other people - may

impact the changes we are about to push. This could occur, for example,

by two collaborators making different changes to the same lines in a

file. By pulling first, we are made aware of any changes made by others,

in particular if there are any conflicts between their changes and

ours.

Now we have ensured our repository is synchronised with the remote one, we can now push our changes:

In the above command, origin is an alias for the remote

repository you used when cloning the project locally (it is called that

by convention and set up automatically by Git when you run

git clone remote_url command to replicate a remote

repository locally); main is the name of our main (and

currently only) development branch.

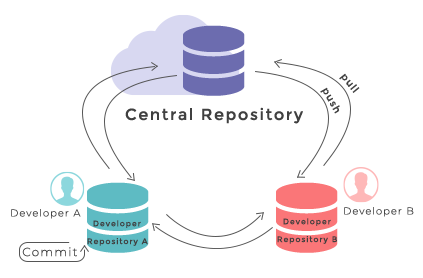

Git Remotes

Note that systems like Git allow us to synchronise work between any two or more copies of the same repository - the ones that are not located on your machine are “Git remotes” for you. In practice, though, it is easiest to agree with your collaborators to use one copy as a central hub (such as GitHub or GitLab), where everyone pushes their changes to. This also avoid risks associated with keeping the “central copy” on someone’s laptop. You can have more than one remote configured for your local repository, each of which generally is either read-only or read/write for you. Collaborating with others involves managing these remote repositories and pushing and pulling information to and from them when you need to share work.

Git - distributed version control system

From

W3Docs

(freely available)

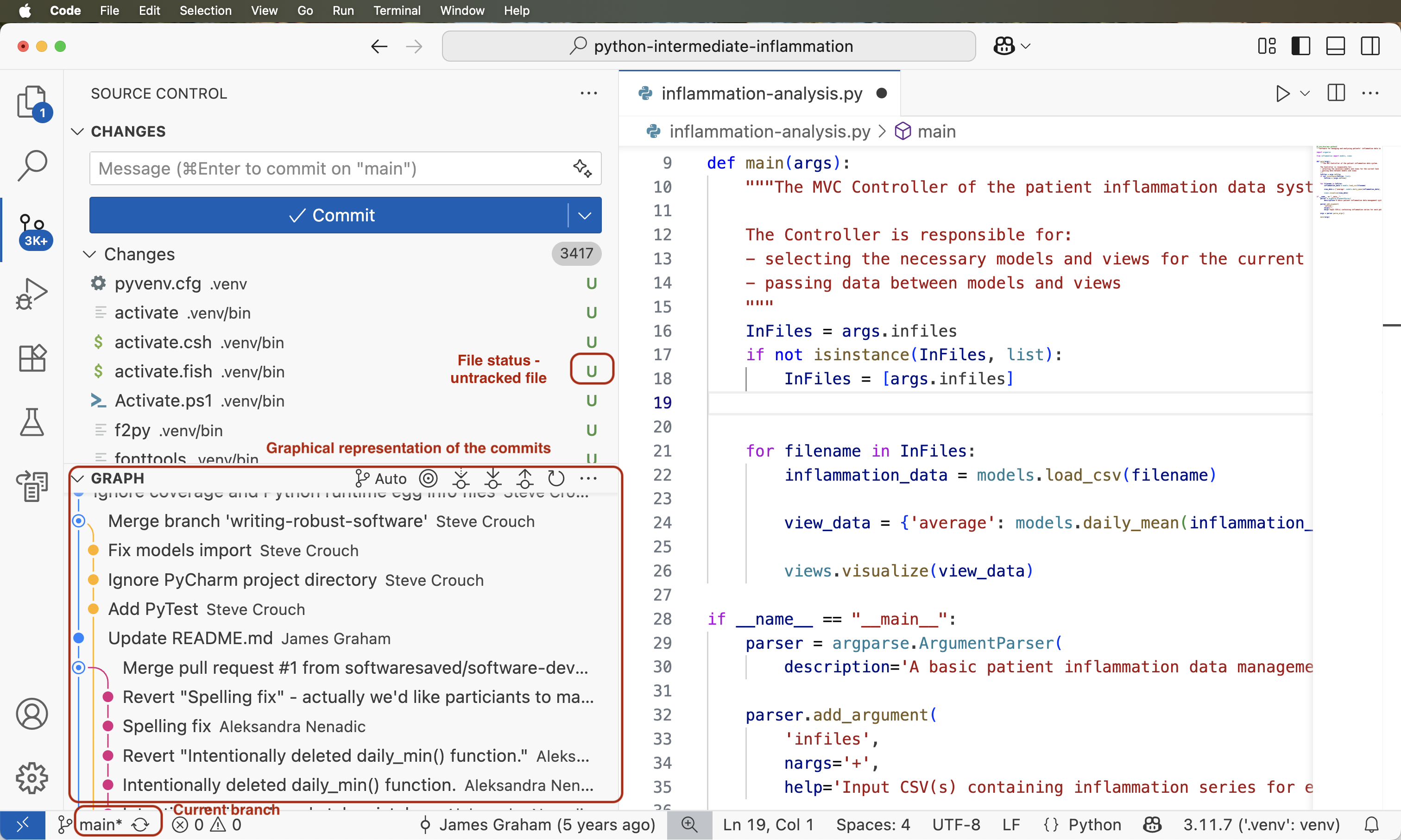

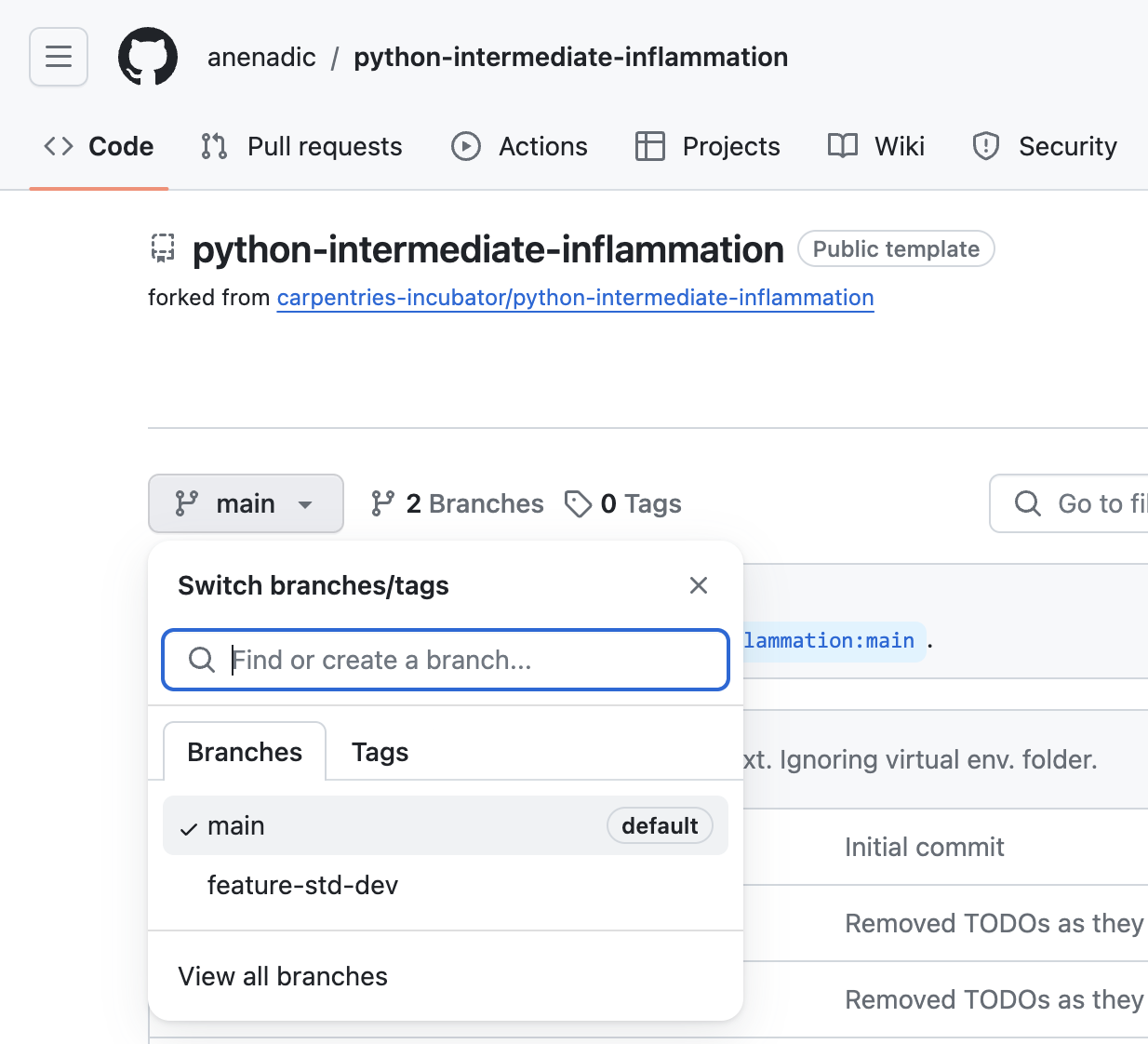

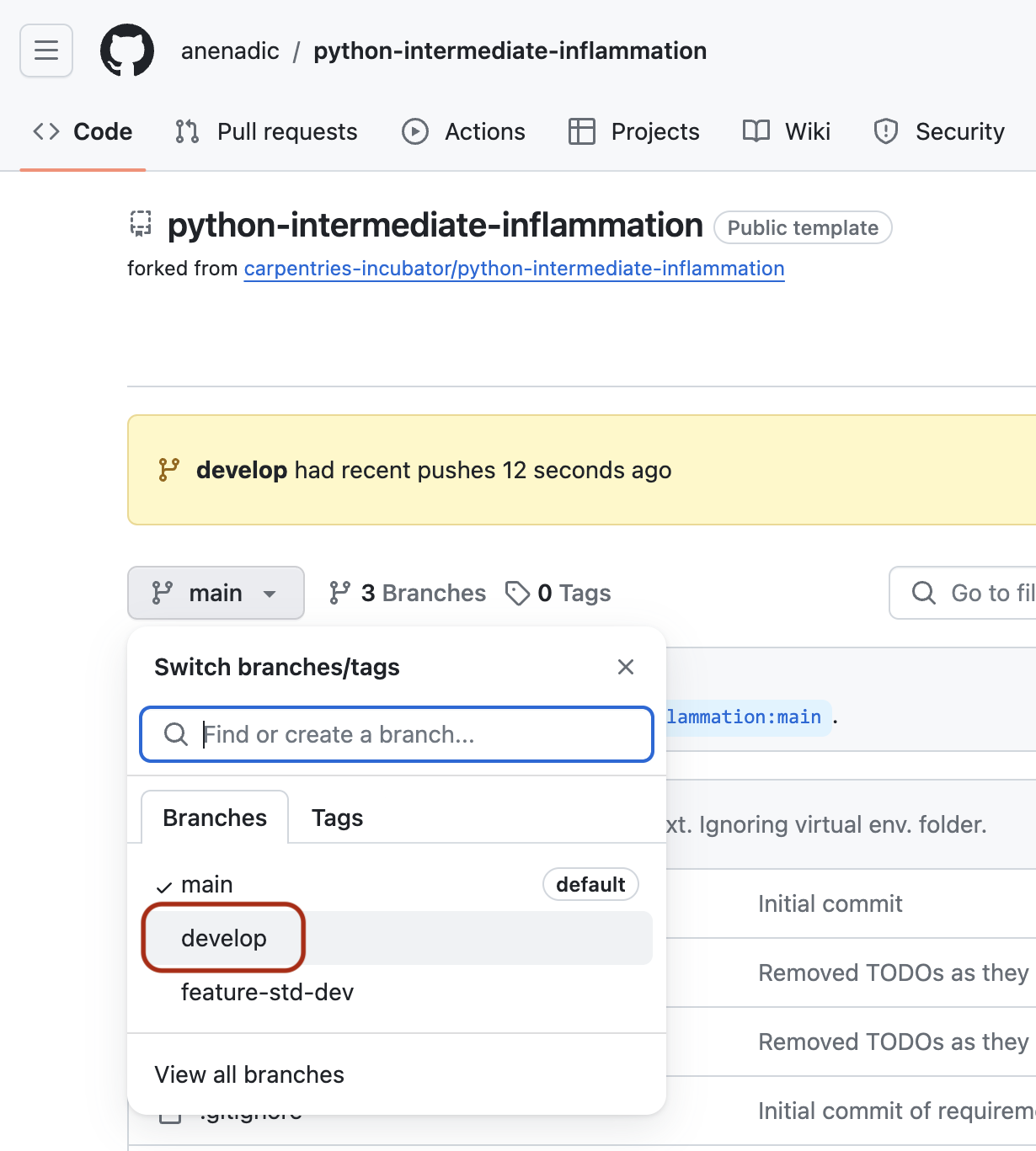

Git Branches

When we do git status, Git also tells us that we are

currently on the main branch of the project. A branch is

one version of your project (the files in your repository) that can

contain its own set of commits. We can create a new branch, make changes

to the code which we then commit to the branch, and, once we are happy

with those changes, merge them back to the main branch. To see what

other branches are available, do:

OUTPUT

* mainAt the moment, there is only one branch (main) and hence

only one version of the code available. When you create a Git repository

for the first time, by default you only get one version (i.e. branch) -

main. Let us have a look at why having different branches

might be useful.

Feature Branch Software Development Workflow

While it is technically OK to commit your changes directly to

main branch, and you may often find yourself doing so for

some minor changes, the best practice is to use a new branch for each

separate and self-contained unit/piece of work you want to add to the

project. This unit of work is also often called a feature and

the branch where you develop it is called a feature branch.

Each feature branch should have its own meaningful name - indicating its

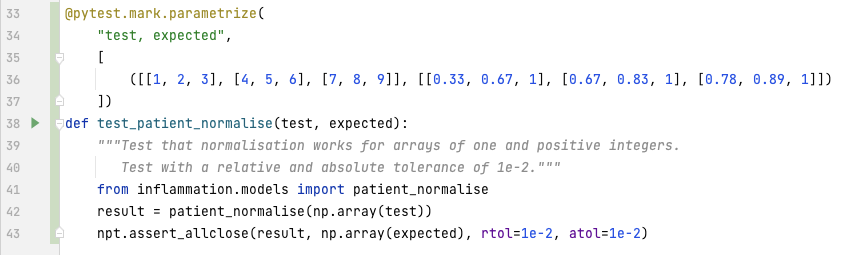

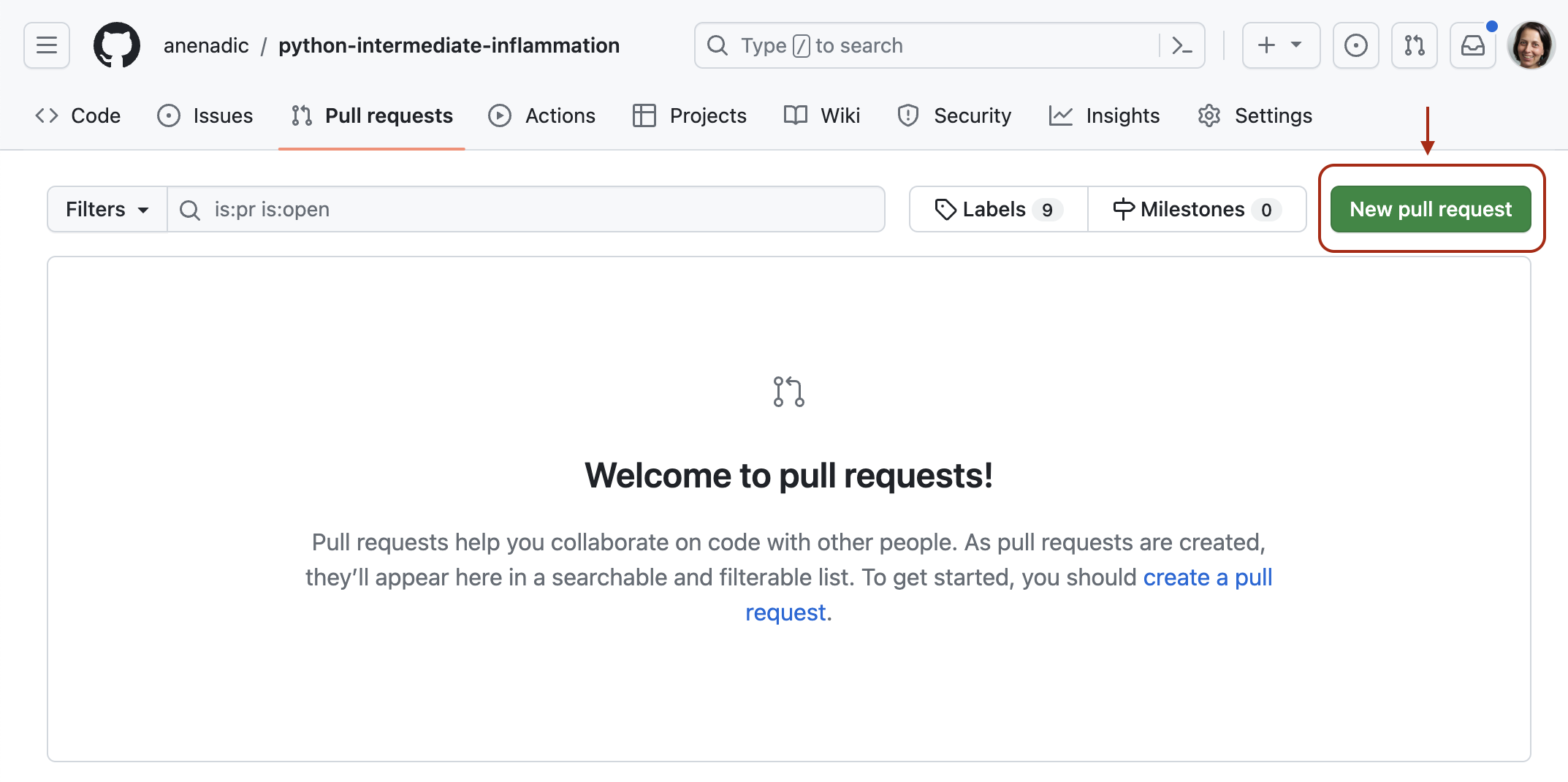

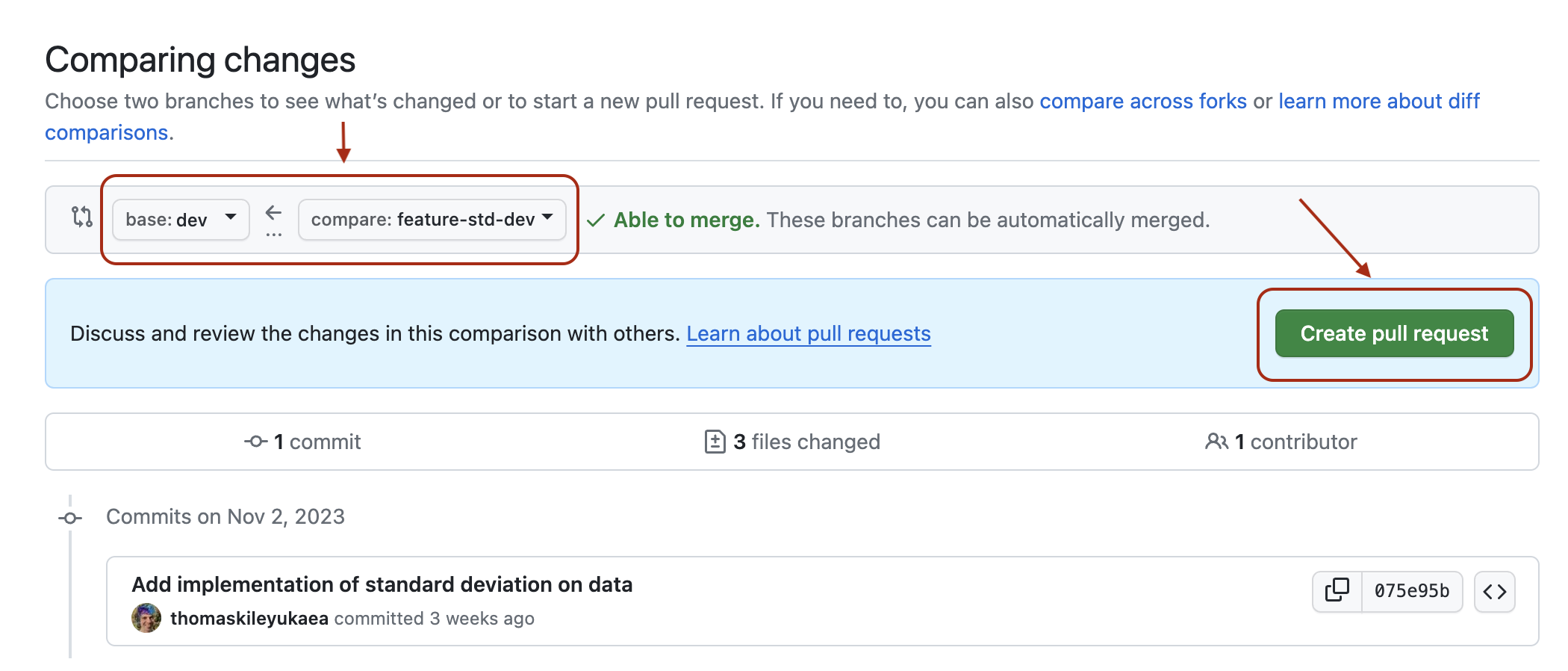

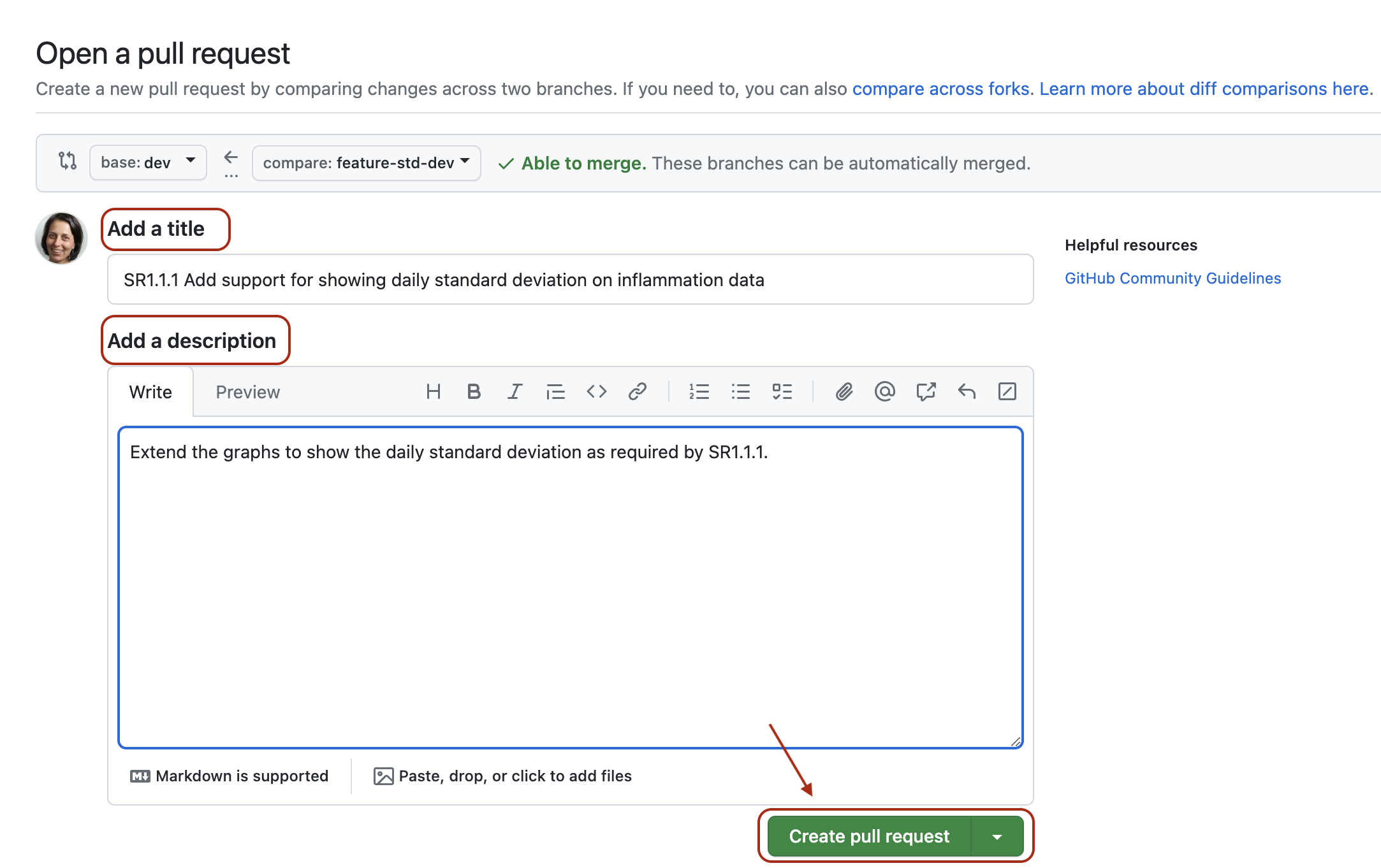

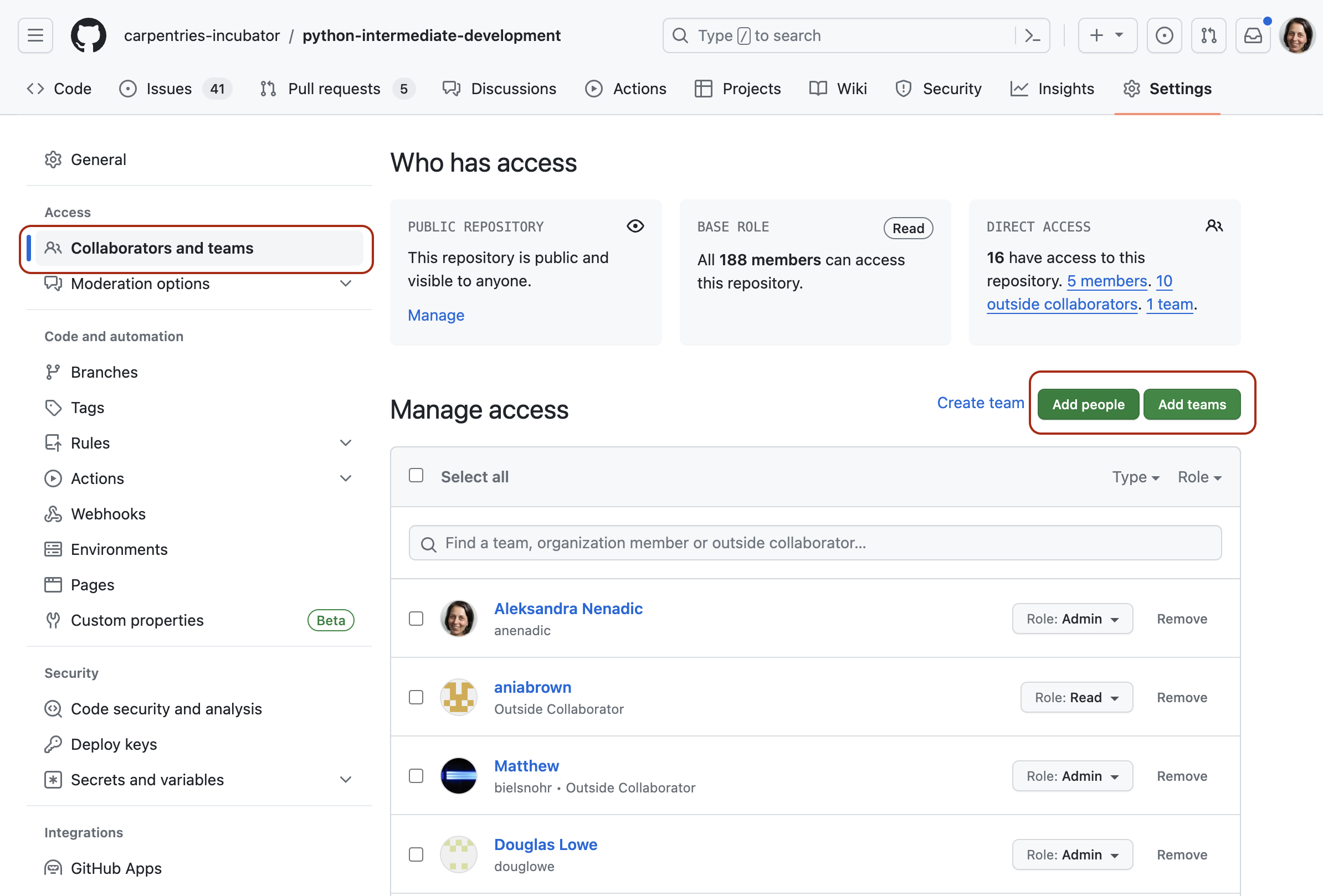

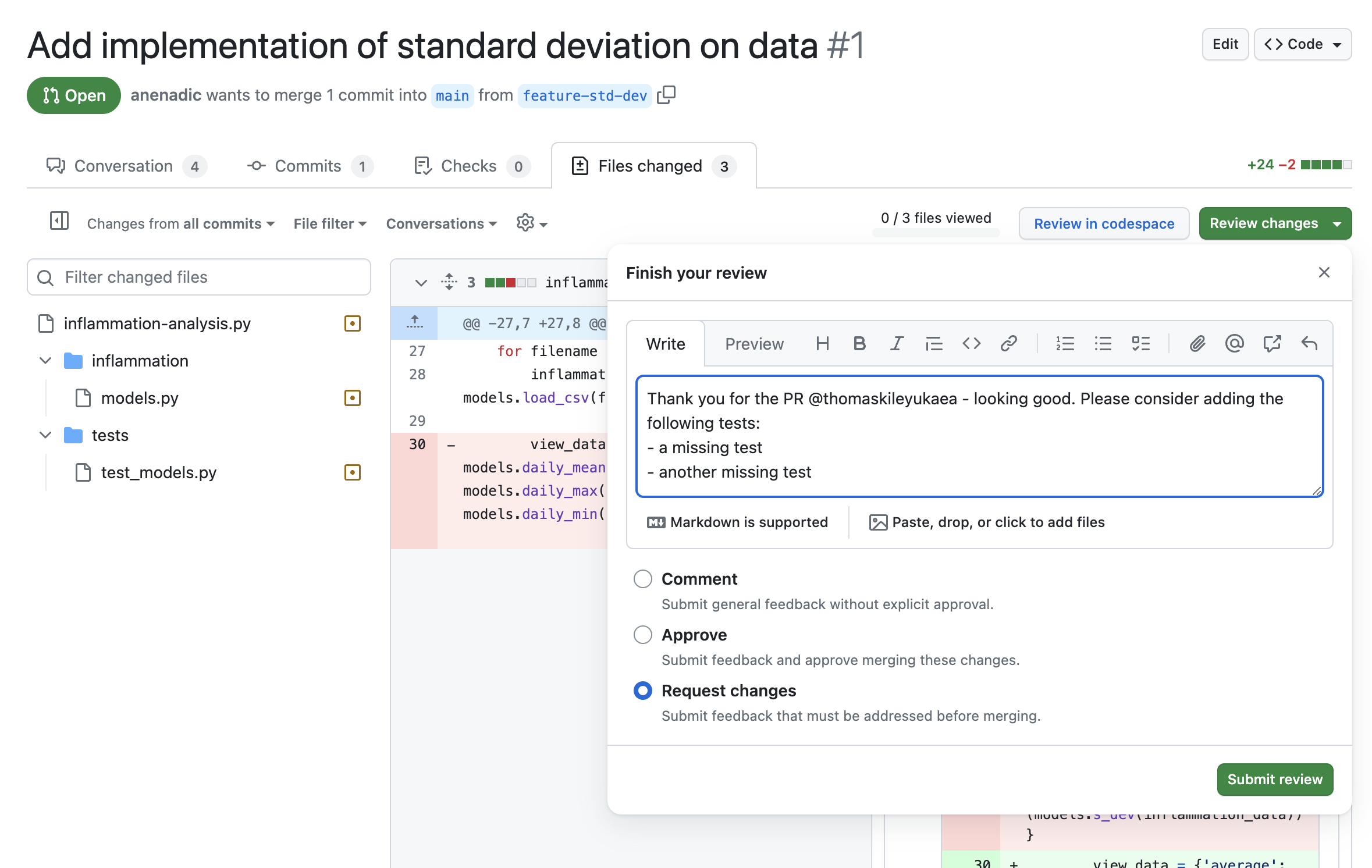

purpose (e.g. “issue23-fix”). If we keep making changes and pushing them