Introduction

What is machine learning?

Machine learning is a set of techniques that enable computers to use data to improve their performance in a given task. This is similar in concept to how humans learn to make predictions based upon previous experience and knowledge. Machine learning encompasses a wide range of activities, but broadly speaking it can be used to: find trends in a dataset, classify data into groups or categories, make predictions based upon data, and even “learn” how to interact with an environment when provided with goals to achieve.

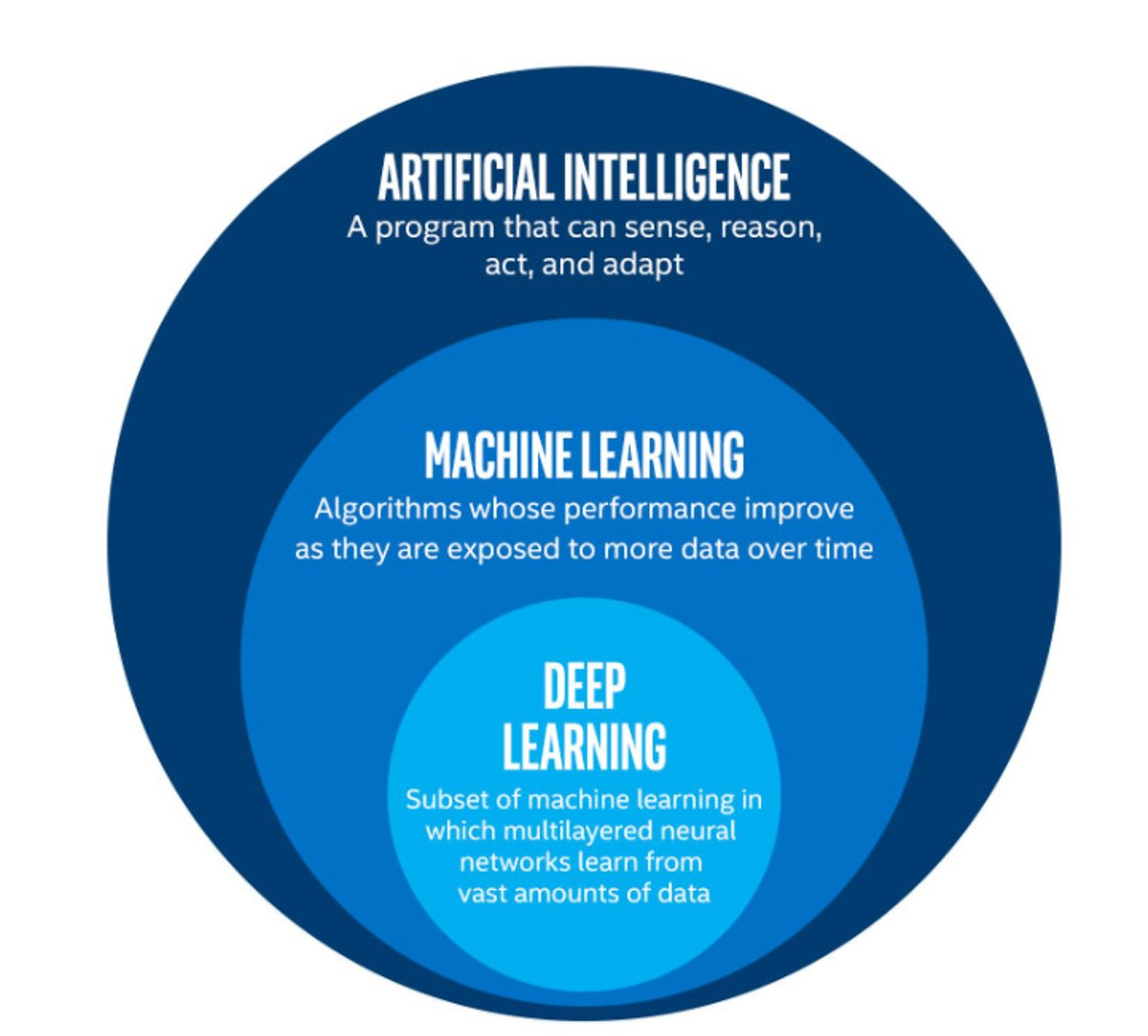

Artificial intelligence vs machine learning

The term machine learning (ML) is often mentioned alongside artificial intelligence (AI) and deep learning (DL). Deep learning is a subset of machine learning, and machine learning is a subset of artificial intelligence.

AI is increasingly being used as a catch-all term to describe things that encompass ML and DL systems - from simple email spam filters, to more complex image recognition systems, to large language models such as ChatGPT. The more specific term “Artificial General Intelligence” (AGI) is used to describe a system possessing a “general intelligence” that can be applied to solve a diverse range of problems, often mimicking the behaviour of intelligent biological systems. Modern attempts at AGI are getting close to fooling humans, but while there have been great advances in AI research, human-like intelligence is only possible in a few specialist areas.

ML refers to techniques where a computer can “learn” patterns in data, usually by being shown many training examples. While ML algorithms can learn to solve specific problems, or multiple similar problems, they are not considered to possess a general intelligence. ML algorithms often need hundreds or thousands of examples to learn a task and are confined to activities such as simple classifications. A human-like system could learn much more quickly than this, and potentially learn from a single example by using its knowledge of many other problems.

DL is a particular field of machine learning where algorithms called neural networks are used to create highly complex systems. Large collections of neural networks are able to learn from vast quantities of data. Deep learning can be used to solve a wide range of problems, but it can also require huge amounts of input data and computational resources to train.

The image below shows the relationships between artificial intelligence, machine learning and deep learning.

The image above is by Tukijaaliwa, CC BY-SA 4.0, via Wikimedia Commons, original source


Machine learning in our daily lives

Machine learning has quickly become an important technology and is now frequently used to perform services we encounter in our daily lives. Here are just a few examples:

- Banks look for trends in transaction data to detect outliers that may be fraudulent

- Email inboxes use text to decide whether an email is spam or not, and adjust their rules based upon how we flag emails

- Travel apps use live and historic data to estimate traffic, travel times, and journey routes

- Retail companies and streaming services use data to recommend new content we might like based upon our demographic and historical preferences

- Image, object, and pattern recognition is used to identify humans and vehicles, capture text, generate subtitles, and much more

- Self-driving cars and robots use object detection and performance feedback to improve their interaction with the world

Where else have you encountered machine learning already?

Now that we have explored machine learning in a bit more detail, discuss with the person next to you:

- Where else have I seen machine learning in use?

- What kind of input data does that machine learning system use to make predictions/classifications?

- Is there any evidence that your interaction with the system contributes to further training?

- Do you have any examples of the system failing?

Limitations of machine learning

Like any other system, machine learning has limitations, caveats, and “gotchas” that may impact the accuracy and performance of a machine learning system.

Garbage in = garbage out

There is a classic expression in computer science: “garbage in = garbage out”. This means that if the input data we use is garbage then the output will be too. If, for example, we try to use a machine learning system to find a link between two unlinked variables then it may well manage to produce a model attempting this, but the output will be meaningless.

Biases due to training data

The performance of a ML system depends on the breadth and quality of input data used to train it. If the input data contains biases or blind spots then these will be reflected in the ML system. For example, if we collect data on public transport use from only high socioeconomic areas, the resulting input data may be biased due to a range of factors that may increase the likelihood of people from those areas using private transport vs public options.

Extrapolation

We can only make reliable predictions about data that fall within the same range as our training data. If we try to extrapolate beyond the boundaries of the training data we cannot be confident in our results. As we shall see, some algorithms are better suited (or less suited) to extrapolation than others.

Over fitting

Sometimes ML algorithms become over-trained and subsequently don’t perform well when presented with real data. It’s important to consider how many rounds of training an ML system has received and whether or not it may have become over-trained.

Inability to explain answers

Machine learning techniques will return an answer based on the input data and model parameters even if that answer is wrong. Most systems are unable to explain the logic used to arrive at that answer. This can make detecting and diagnosing problems difficult.

Getting started with Scikit-Learn

About Scikit-Learn

Scikit-Learn is a Python package designed to give access to well-known machine learning algorithms within Python code, through a clean application programming interface (API). It has been built by hundreds of contributors from around the world, and is used across industry and academia.

Scikit-Learn is built upon Python’s NumPy (Numerical Python) and SciPy (Scientific Python) libraries, which enable efficient in-core numerical and scientific computation within Python. As such, Scikit-Learn is not specifically designed for extremely large datasets, though there is some work in this area. For this introduction to ML we are going to stick to processing small to medium datasets with Scikit-Learn, without the need for a graphical processing unit (GPU).

Like any other Python package, we can import Scikit-Learn and check the package version using the following Python commands:

import sklearn

print('scikit-learn:', sklearn.__version__)

Representation of Data in Scikit-learn

Machine learning is about creating models from data: for that reason, we’ll start by discussing how data can be represented in order to be understood by the computer.

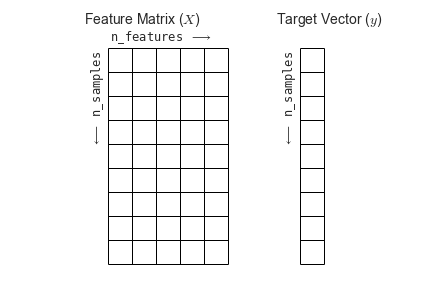

Most machine learning algorithms implemented in scikit-learn expect data to be stored in a two-dimensional array or matrix. The arrays can be either numpy arrays, or in some cases scipy.sparse matrices. The shape of the array is expected to be [n_samples, n_features].

We typically have a “Features Matrix” (usually referred to as the code variable X), which holds the “features” data we wish to train on. Its two dimensions are:

- n_samples: The number of samples. A sample can be a document, a picture, a sound, a video, an astronomical object, a row in database or CSV file, or whatever you can describe with a fixed set of quantitative traits.

- n_features: The number of features (variables) that can be used to describe each item in a quantitative manner. Features are generally real-valued, but may be boolean or discrete-valued in some cases.

If we want our ML models to make predictions or classifications, we also provide “labels” as our expected “answers/results”. The model will then be trained on the input features to try and match our provided labels. This is done by providing a “Target Array” (usually referred to as the code variable y) which contains the “labels or values” that we wish to predict using the features data.
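As a minimal sketch of this layout, the snippet below builds a small features matrix and target array with NumPy. The measurements and labels are made-up values used purely for illustration; they do not come from any dataset in this lesson.

```python
import numpy as np

# Hypothetical data: 4 samples (rows), each described by 2 features (columns)
X = np.array([
    [5.1, 3.5],
    [4.9, 3.0],
    [6.2, 2.9],
    [5.9, 3.2],
])

# One label per sample, in the same order as the rows of X
y = np.array(["a", "a", "b", "b"])

n_samples, n_features = X.shape
print("X shape:", X.shape)  # (n_samples, n_features)
print("y shape:", y.shape)  # (n_samples,)
```

The number of rows in X must match the number of entries in y, since each row is paired with exactly one label.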

Figure from the Python Data Science Handbook


What will we cover today?

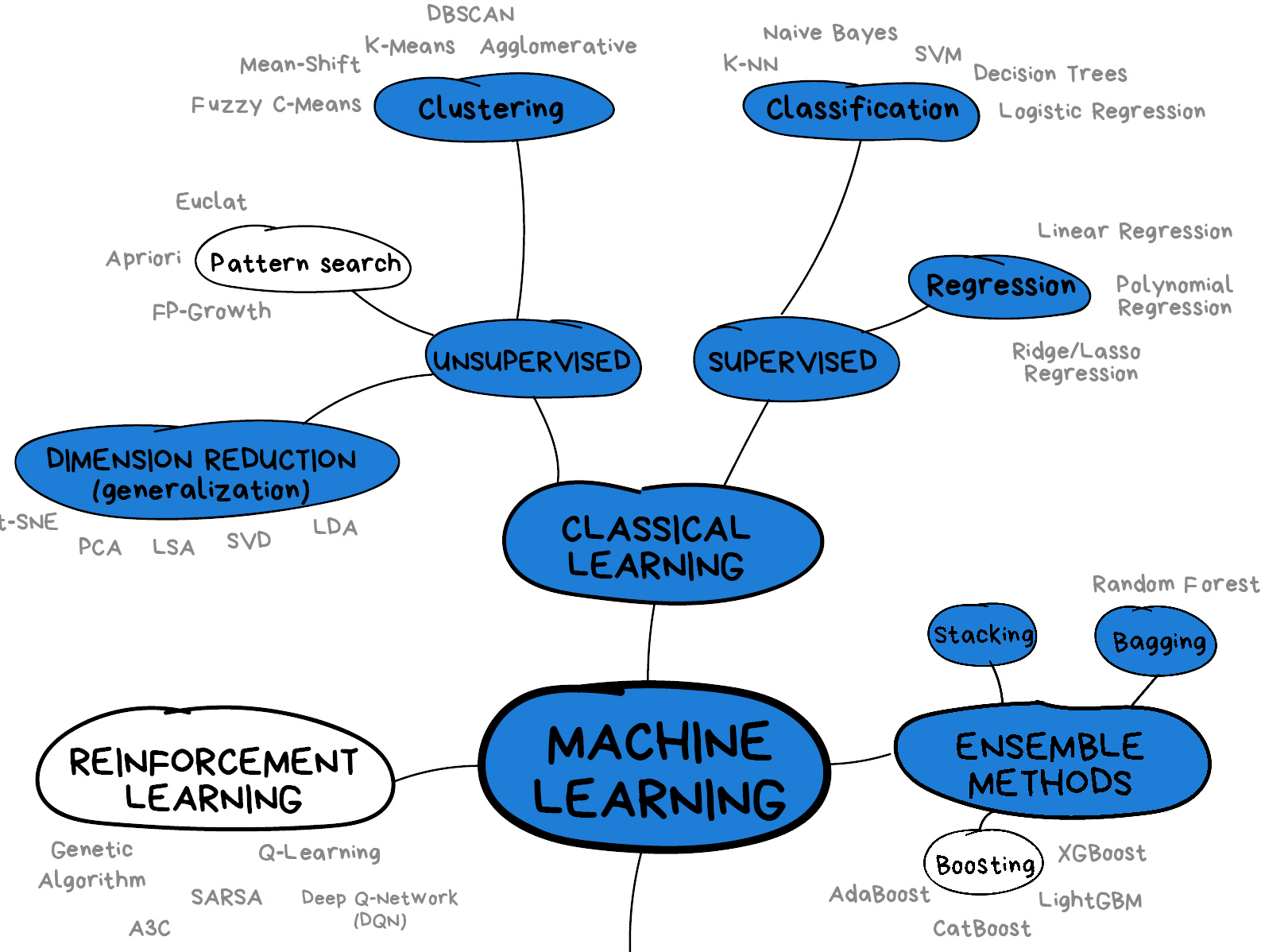

This lesson will introduce you to some of the key concepts and sub-domains of ML such as supervised learning, unsupervised learning, and neural networks.

The figure below provides a nice overview of some of the sub-domains of ML and the techniques used within each sub-domain. We recommend checking out the Scikit-Learn webpage for additional examples of the topics we will cover in this lesson. We will cover topics highlighted in blue: classical learning techniques such as regression, classification, clustering, and dimension reduction, as well as ensemble methods and a brief introduction to neural networks using perceptrons.

Image from Vasily Zubarev via their blog with modifications in blue to denote lesson content.



Supervised methods - Regression

Supervised learning

Classical machine learning is often divided into two categories – supervised and unsupervised learning.

For the case of supervised learning we act as a “supervisor” or “teacher” for our ML algorithms by providing the algorithm with “labelled data” that contains example answers of what we wish the algorithm to achieve.

For instance, if we wish to train our algorithm to distinguish between images of cats and dogs, we would provide our algorithm with images that have already been labelled as “cat” or “dog” so that it can learn from these examples. If we wished to train our algorithm to predict house prices over time we would provide our algorithm with example data of datetime values that are “labelled” with house prices.

Supervised learning is split up into two further categories: classification and regression. For classification the labelled data is discrete, such as the “cat” or “dog” example, whereas for regression the labelled data is continuous, such as the house price example.

In this episode we will explore how we can use regression to build a “model” that can be used to make predictions.

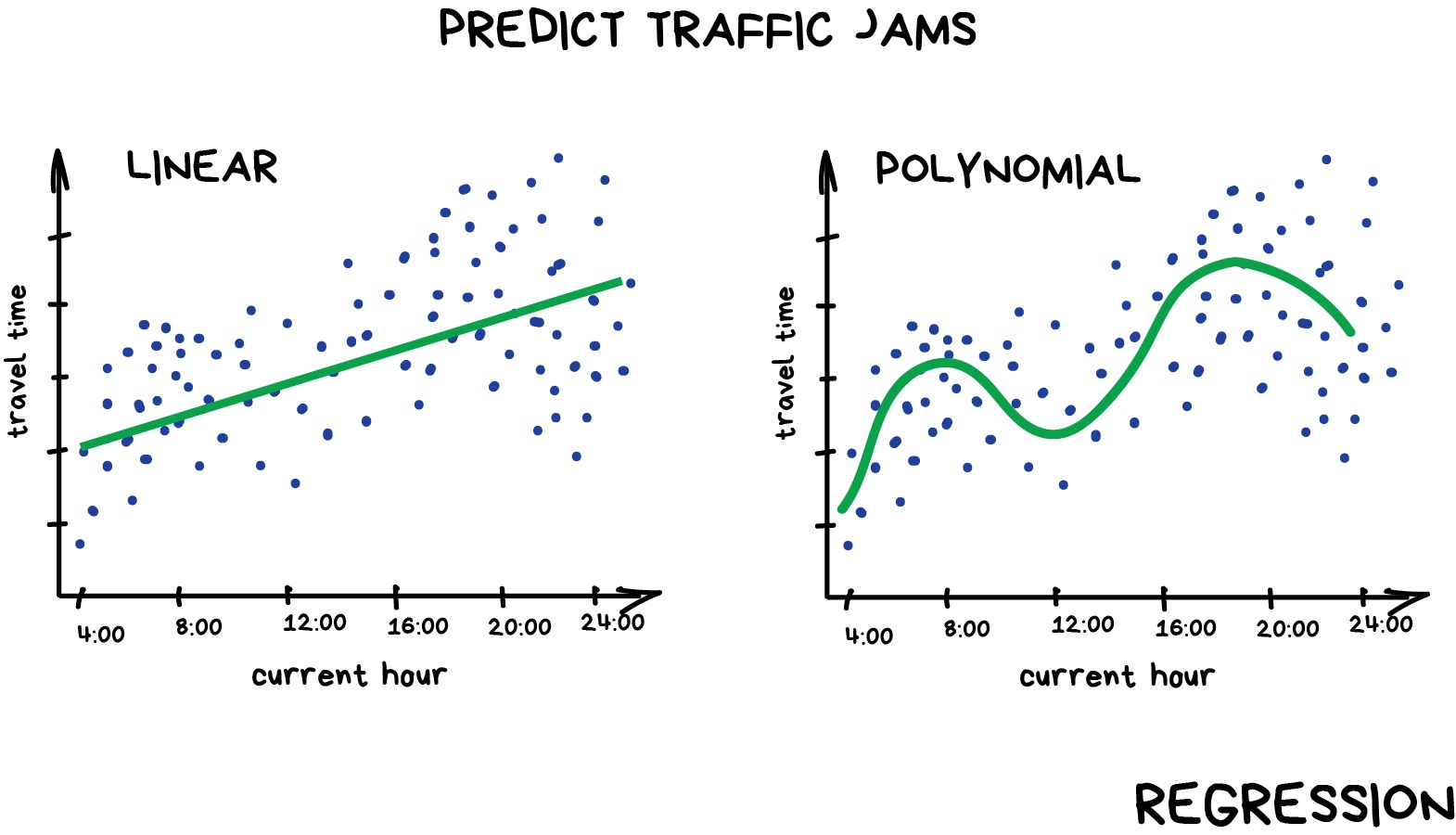

Regression

Regression is a statistical technique that relates a dependent variable (a label in ML terms) to one or more independent variables (features in ML terms). A regression model attempts to describe this relation by fitting the data as closely as possible according to mathematical criteria. This model can then be used to predict new labelled values by inputting the independent variables into it. For example, if we create a house price model we can then feed in any datetime value we wish, and get a new house price value prediction.

Regression can be as simple as drawing a “line of best fit” through data points, known as linear regression, or more complex models such as polynomial regression, and is used routinely around the world in both industry and research. You may have already used regression in the past without knowing that it is also considered a machine learning technique!

Linear regression using Scikit-Learn

We’ve had a lot of theory, so it’s time to start some actual coding! Let’s create regression models for a small bundle of datasets known as Anscombe’s Quartet. These datasets are available through the Python plotting library Seaborn. Let’s define our bundle of datasets, extract out the first dataset, and inspect its contents:

import seaborn as sns

# Anscombe's Quartet consists of 4 sets of data

data = sns.load_dataset("anscombe")

print(data.head())

# Split out the 1st dataset from the total

data_1 = data[data["dataset"]=="I"]

data_1 = data_1.sort_values("x")

# Inspect the data

print(data_1.head())

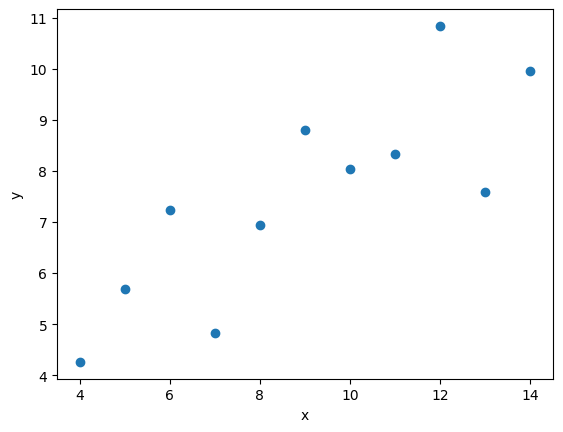

We see that the dataset bundle has the 3 columns dataset, x, and y. We have already used the dataset column to extract out Dataset I ready for our regression task. Let’s visually inspect the data:

import matplotlib.pyplot as plt

plt.scatter(data_1["x"], data_1["y"])

plt.xlabel("x")

plt.ylabel("y")

plt.show()

In this regression example we will create a Linear Regression model that will try to predict y values based upon x values.

In machine learning terminology: we will use our x feature (variable) and y labels (“answers”) to train our Linear Regression model to predict y values when provided with x values.

The mathematical equation for a linear fit is y = mx + c where y is our label data, x is our input feature(s), m represents the gradient of the linear fit, and c represents the intercept with the y-axis.
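To make m and c concrete, here is a small sketch that computes the ordinary least-squares gradient and intercept by hand and compares them with Scikit-Learn's LinearRegression. The four data points are made up and lie exactly on y = 2x + 1, and the variable names are illustrative only.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Made-up points that lie exactly on the line y = 2x + 1
x_line = np.array([1.0, 2.0, 3.0, 4.0])
y_line = 2.0 * x_line + 1.0

# Closed-form least-squares estimates of the gradient (m) and intercept (c)
x_mean, y_mean = x_line.mean(), y_line.mean()
m_hand = np.sum((x_line - x_mean) * (y_line - y_mean)) / np.sum((x_line - x_mean) ** 2)
c_hand = y_mean - m_hand * x_mean

# The same fit using Scikit-Learn (features must be a 2D array)
line_model = LinearRegression().fit(x_line.reshape(-1, 1), y_line)

print("by hand:     m =", m_hand, "c =", c_hand)
print("scikit-learn: m =", line_model.coef_[0], "c =", line_model.intercept_)
```

Both approaches should recover a gradient of 2 and an intercept of 1, up to floating-point rounding.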

A typical ML workflow is as follows:

- Define the model (also known as an estimator)

- Tweak your data into the required format for your model

- Train your model on the input data

- Predict some values using the trained model

- Check the accuracy of the prediction, and visualise the result

We’ll define functions for each of these steps so that we can quickly perform linear regressions on our data. First we’ll define a function to pre-process our data into a format that Scikit-Learn can use.

import numpy as np

def pre_process_linear(x, y):

    # sklearn requires a 2D array, so let's reshape our 1D arrays.

    x_data = np.array(x).reshape(-1, 1)

    y_data = np.array(y).reshape(-1, 1)

    return x_data, y_data

Next we’ll define a model, and train it on the pre-processed data. We’ll also inspect the trained model parameters m and c:

from sklearn.linear_model import LinearRegression

def fit_a_linear_model(x_data, y_data):

    # Define our estimator/model

    model = LinearRegression(fit_intercept=True)

    # train our estimator/model using our data

    lin_regress = model.fit(x_data, y_data)

    # inspect the trained estimator/model parameters

    m = lin_regress.coef_

    c = lin_regress.intercept_

    print("linear coefs=", m, c)

    return lin_regress

Then we’ll define a function to make predictions using our trained model, and calculate the Root Mean Squared Error (RMSE) of our predictions:

import math

from sklearn.metrics import mean_squared_error

def predict_linear_model(lin_regress, x_data, y_data):

    # predict some values using our trained estimator/model

    # (in this case we predict our input data!)

    linear_data = lin_regress.predict(x_data)

    # calculate the RMS error as a quality of fit metric

    error = math.sqrt(mean_squared_error(y_data, linear_data))

    print("linear error=", error)

    # return our predictions so that we can plot them later

    return linear_data
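For reference, the RMSE is the square root of the mean of the squared differences between the true and predicted values. The sketch below, using made-up numbers, computes it by hand and checks it against the mean_squared_error route used in the function above.

```python
import math

import numpy as np
from sklearn.metrics import mean_squared_error

# Made-up true values and predictions, for illustration only
y_true = np.array([1.0, 2.0, 3.0])
y_pred = np.array([1.0, 2.0, 5.0])

# RMSE by hand: square the residuals, average them, take the square root
rmse_by_hand = math.sqrt(np.mean((y_true - y_pred) ** 2))

# RMSE via Scikit-Learn's mean squared error
rmse_sklearn = math.sqrt(mean_squared_error(y_true, y_pred))

print("by hand:", rmse_by_hand)
print("sklearn:", rmse_sklearn)
```

Because the RMSE is in the same units as y, it can be read as a typical size of the prediction error.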

Finally, we’ll define a function to plot our input data, our linear fit, and our predictions:

def plot_linear_model(x_data, y_data, predicted_data):

    # visualise!

    # Don't call .show() here so that we can add extra stuff to the figure later

    plt.scatter(x_data, y_data, label="input")

    plt.plot(x_data, predicted_data, "-", label="fit")

    plt.plot(x_data, predicted_data, "rx", label="predictions")

    plt.xlabel("x")

    plt.ylabel("y")

    plt.legend()

We will be training a few Linear Regression models in this episode, so let’s define a handy function that combines input data processing, model creation, training our model, inspecting the trained model parameters m and c, making some predictions, and finally visualising our data.

def fit_predict_plot_linear(x, y):

    x_data, y_data = pre_process_linear(x, y)

    lin_regress = fit_a_linear_model(x_data, y_data)

    linear_data = predict_linear_model(lin_regress, x_data, y_data)

    plot_linear_model(x_data, y_data, linear_data)

    return lin_regress

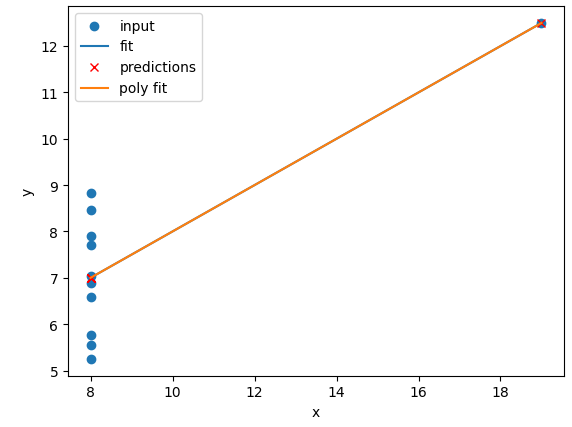

Now that we have defined our generic function to fit a linear regression, we can call it to train a model on some data and show the plot that was generated:

# just call the function here rather than assign.

# We don't need to reuse the trained model yet

fit_predict_plot_linear(data_1["x"], data_1["y"])

plt.show()

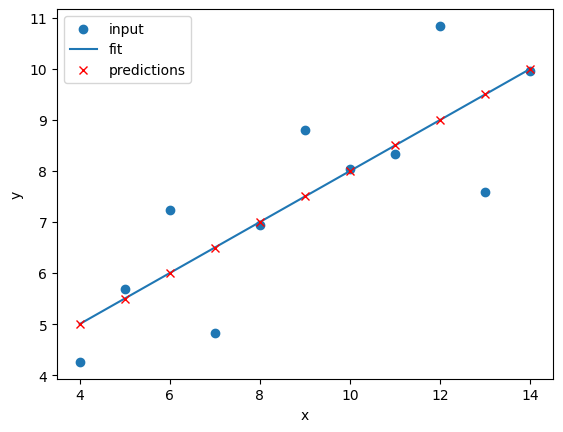

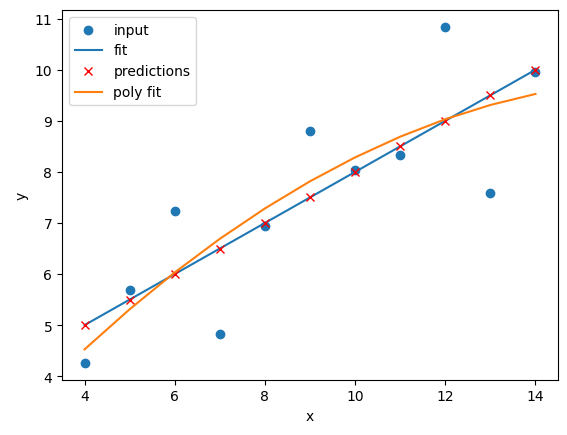

This looks like a reasonable linear fit to our first dataset. Thanks to our function we can quickly perform more linear regressions on other datasets.

Let’s quickly perform a new linear fit on the 2nd Anscombe dataset:

data_2 = data[data["dataset"]=="II"]

fit_predict_plot_linear(data_2["x"],data_2["y"])

plt.show()

It looks like our linear fit on Dataset II produces a nearly identical fit to the linear fit on Dataset I. Although our errors look to be almost identical, our visual inspection tells us that Dataset II probably does not follow a linear relationship and that we should try a different model.

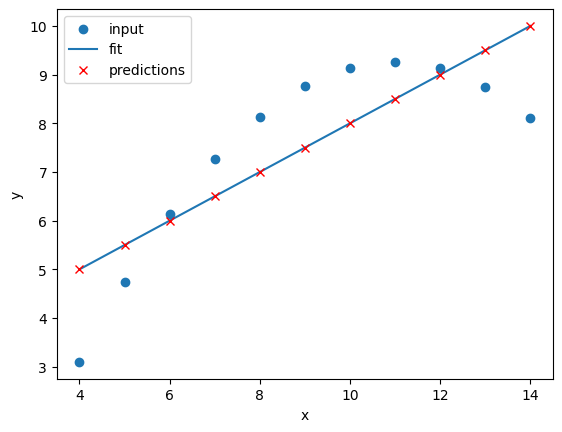

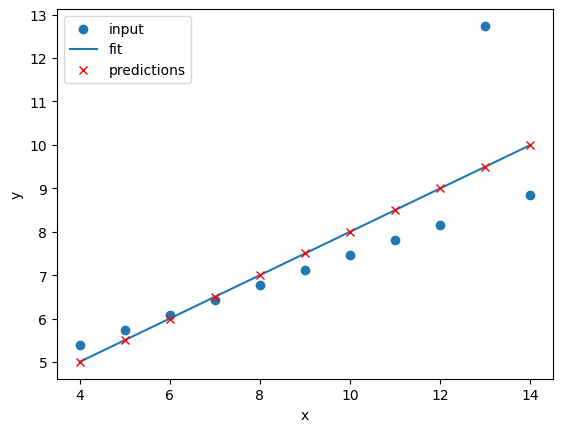

Exercise: Repeat the linear regression exercise for Datasets III and IV.

Adjust your code to repeat the linear regression for the other datasets. What can you say about the similarities and/or differences between the linear regressions on the 4 datasets?

Solution

# Repeat the following and adjust for dataset IV

data_3 = data[data["dataset"]=="III"]

fit_predict_plot_linear(data_3["x"],data_3["y"])

plt.show()

The 4 datasets all produce very similar linear regression fit parameters (m and c) and RMSEs despite visual differences in the 4 datasets.

This is intentional as the Anscombe Quartet is designed to produce near identical basic statistical values such as means and standard deviations.

While the trained model parameters and errors are near identical, our visual inspection tells us that a linear fit might not be the best way of modelling all of these datasets.

Polynomial regression using Scikit-Learn

Now that we have learnt how to do a linear regression it’s time to look into polynomial regressions. Polynomial functions are non-linear functions that are commonly used to model data. A polynomial of degree N has N + 1 coefficients and takes the following form: y = a + bx + cx^2 + dx^3 ... + mx^N

If we have a polynomial of degree N=1 we once again return to a linear equation y = a + bx or as it is more commonly written y = mx + c. Let’s create a polynomial regression using N=2.

In Scikit-Learn this is done in two steps. First we pre-process our input data x_data into a polynomial representation using the PolynomialFeatures transformer. Then we can create our polynomial regression using LinearRegression().fit(), but this time using the polynomial representation of our x_data.
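To see what the “polynomial representation” looks like, here is a small sketch that transforms two made-up x values with degree=2. The variable names are illustrative only.

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

# Two made-up samples with a single feature each (2D array, as sklearn expects)
x_demo = np.array([[2.0], [3.0]])

# Expand each x into the columns [1, x, x^2]
poly_demo = PolynomialFeatures(degree=2)
x_demo_poly = poly_demo.fit_transform(x_demo)

print(x_demo_poly)
# [[1. 2. 4.]
#  [1. 3. 9.]]
```

Each row now holds the terms 1, x, and x^2, so fitting a linear model to these columns is equivalent to fitting y = a + bx + cx^2 to the original data.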

from sklearn.preprocessing import PolynomialFeatures

def pre_process_poly(x, y):

    # sklearn requires a 2D array, so let's reshape our 1D arrays.

    x_data = np.array(x).reshape(-1, 1)

    y_data = np.array(y).reshape(-1, 1)

    # create a polynomial representation of our data

    poly_features = PolynomialFeatures(degree=2)

    x_poly = poly_features.fit_transform(x_data)

    return x_poly, x_data, y_data

def fit_poly_model(x_poly, y_data):

    # Define our estimator/model

    poly_regress = LinearRegression()

    # train our model on the polynomial features

    poly_regress.fit(x_poly, y_data)

    # inspect trained model parameters

    poly_m = poly_regress.coef_

    poly_c = poly_regress.intercept_

    print("poly_coefs", poly_m, poly_c)

    return poly_regress

def predict_poly_model(poly_regress, x_poly, y_data):

    # predict some values using our trained estimator/model

    # (in this case - our input data)

    poly_data = poly_regress.predict(x_poly)

    poly_error = math.sqrt(mean_squared_error(y_data, poly_data))

    print("poly error=", poly_error)

    return poly_data

def plot_poly_model(x_data, poly_data):

    # visualise!

    plt.plot(x_data, poly_data, label="poly fit")

    plt.legend()

def fit_predict_plot_poly(x, y):

    # Combine all of the steps

    x_poly, x_data, y_data = pre_process_poly(x, y)

    poly_regress = fit_poly_model(x_poly, y_data)

    poly_data = predict_poly_model(poly_regress, x_poly, y_data)

    plot_poly_model(x_data, poly_data)

    return poly_regress

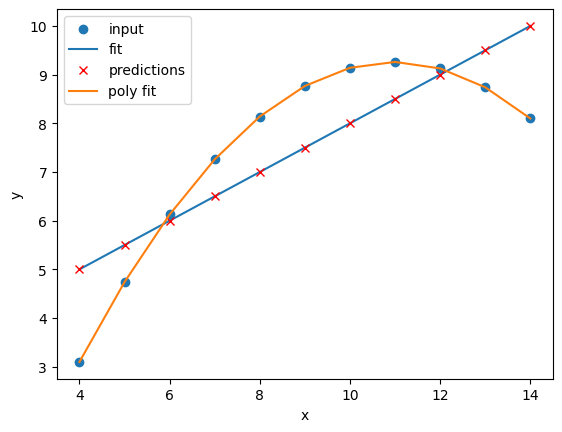

Let’s plot our input Dataset II, linear model, and polynomial model together, as well as compare the errors of the linear and polynomial fits.

# Sort our data in order of our x (feature) values

data_2 = data[data["dataset"]=="II"]

data_2 = data_2.sort_values("x")

fit_predict_plot_linear(data_2["x"],data_2["y"])

fit_predict_plot_poly(data_2["x"],data_2["y"])

plt.show()

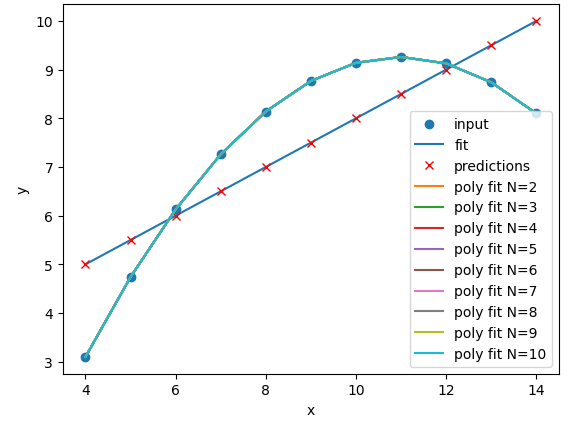

Comparing the plots and errors it seems like a polynomial regression of N=2 is a far superior fit to Dataset II than a linear fit. In fact, it looks like our polynomial fit almost perfectly fits Dataset II… which is because Dataset II is created from an N=2 polynomial equation!

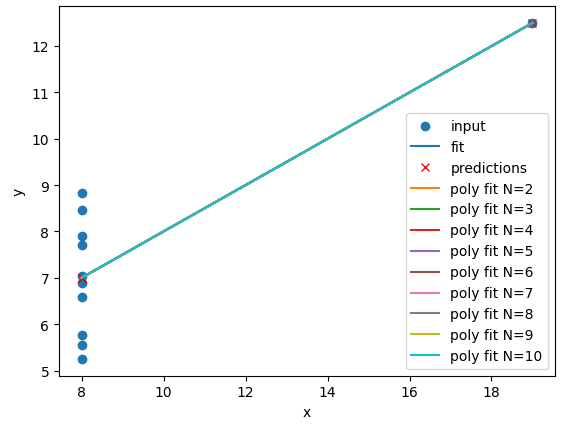

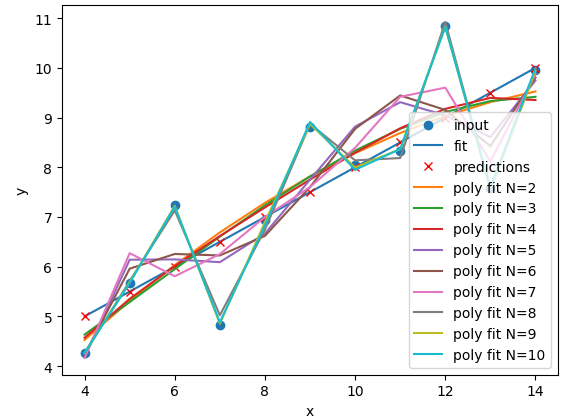

Exercise: Perform and compare linear and polynomial fits for Datasets I, II, III, and IV.

Which performs better for each dataset? Modify your polynomial regression function to take N as an input parameter to your regression model. How does changing the degree of polynomial fit affect each dataset?

Solution

for ds in ["I","II","III","IV"]:

    # Sort our data in order of our x (feature) values

    data_ds = data[data["dataset"]==ds]

    data_ds = data_ds.sort_values("x")

    fit_predict_plot_linear(data_ds["x"],data_ds["y"])

    fit_predict_plot_poly(data_ds["x"],data_ds["y"])

    plt.show()

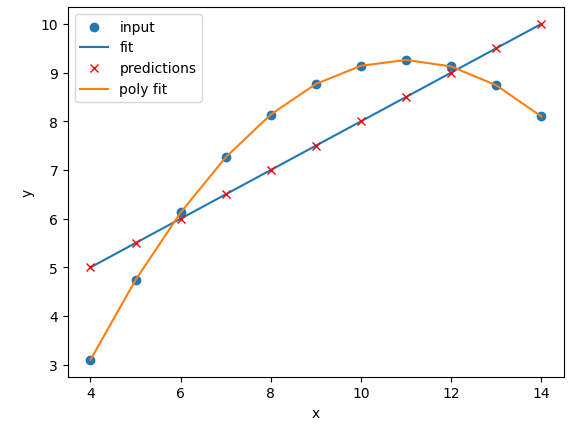

The

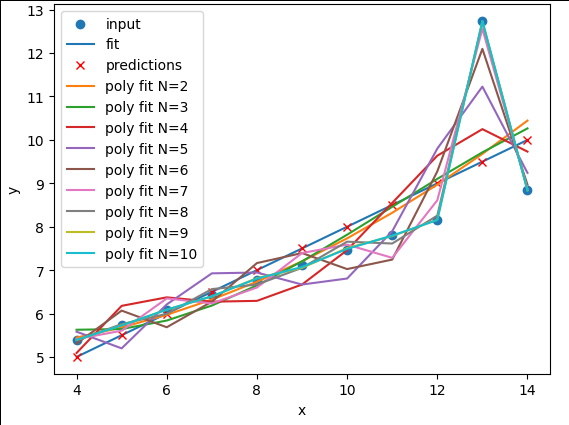

N=2polynomial fit is far better for Dataset II. According to the RMSE the polynomial is a slightly better fit for Datasets I and III, however it could be argued that a linear fit is good enough. Dataset III looks like a linear relation that has a single outlier, rather than a truly non-linear relation. The polynomial and linear fits perform just as well (or poorly) on Dataset IV. For Dataset IV it looks likeymay be a better estimator ofx, thanxis at estimatingy.def pre_process_poly(x, y, N): # sklearn requires a 2D array, so lets reshape our 1D arrays. x_data = np.array(x).reshape(-1, 1) y_data = np.array(y).reshape(-1, 1) # create a polynomial representation of our data poly_features = PolynomialFeatures(degree=N) x_poly = poly_features.fit_transform(x_data) return x_poly, x_data, y_data def plot_poly_model(x_data, poly_data, N): # visualise! plt.plot(x_data, poly_data, label="poly fit N=" + str(N)) plt.legend() def fit_predict_plot_poly(x, y, N): # Combine all of the steps x_poly, x_data, y_data = pre_process_poly(x, y, N) poly_regress = fit_poly_model(x_poly, y_data) poly_data = predict_poly_model(poly_regress, x_poly, y_data) plot_poly_model(x_data, poly_data, N) return poly_regress for ds in ["I","II","III","IV"]: # Sort our data in order of our x (feature) values data_ds = data[data["dataset"]==ds] data_ds = data_ds.sort_values("x") fit_predict_plot_linear(data_ds["x"],data_ds["y"]) for N in range(2,11): print("Polynomial degree =",N) fit_predict_plot_poly(data_ds["x"],data_ds["y"],N) plt.show()and

for ds in ["I","II","III","IV"]: # Sort our data in order of our x (feature) values data_ds = data[data["dataset"]==ds] data_ds = data_ds.sort_values("x") fit_predict_plot_linear(data_ds["x"],data_ds["y"]) for N in range(2,11): print("Polynomial degree =",N) fit_predict_plot_poly(data_ds["x"],data_ds["y"],N) plt.show()With a large enough polynomial you can fit through every point with a unique

xvalue. Datasets II and IV remain unchanged beyondN=2as the polynomial has converged (dataset II) or cannot model the data (Dataset IV). Datasets I and III slowly decrease their RMSE and N is increased, but it is likely that these more complex models are overfitting the data. Overfitting is discussed later in the lesson.
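The claim that a large enough polynomial can pass through every point can be checked with a small sketch. The five points below are made up (they are not part of Anscombe's Quartet), and the variable names are illustrative only. A degree 4 polynomial has five coefficients, so it can reproduce five points with distinct x values exactly.

```python
import math

import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.preprocessing import PolynomialFeatures

# Five made-up points with distinct x values and no simple trend
x_pts = np.array([0.0, 1.0, 2.0, 3.0, 4.0]).reshape(-1, 1)
y_pts = np.array([1.0, 3.0, 2.0, 5.0, 4.0])

rmse_by_degree = {}
for degree in (1, 4):
    # Build the polynomial features and fit a linear model to them
    x_pts_poly = PolynomialFeatures(degree=degree).fit_transform(x_pts)
    demo_model = LinearRegression().fit(x_pts_poly, y_pts)

    # RMSE measured on the same points we trained on
    predictions = demo_model.predict(x_pts_poly)
    rmse_by_degree[degree] = math.sqrt(mean_squared_error(y_pts, predictions))
    print("degree", degree, "training RMSE", rmse_by_degree[degree])
```

The degree 4 fit drives the training RMSE to essentially zero, but that says nothing about how well it would predict new points, which is the concern behind overfitting.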

Let’s explore a more realistic scenario

Now that we have some convenient Python functions to perform quick regressions on data it’s time to explore a more realistic regression modelling scenario.

Let’s start by loading in and examining a new dataset from Seaborn: a penguin dataset containing a few hundred samples and a number of features and labels.

dataset = sns.load_dataset("penguins")

dataset.head()

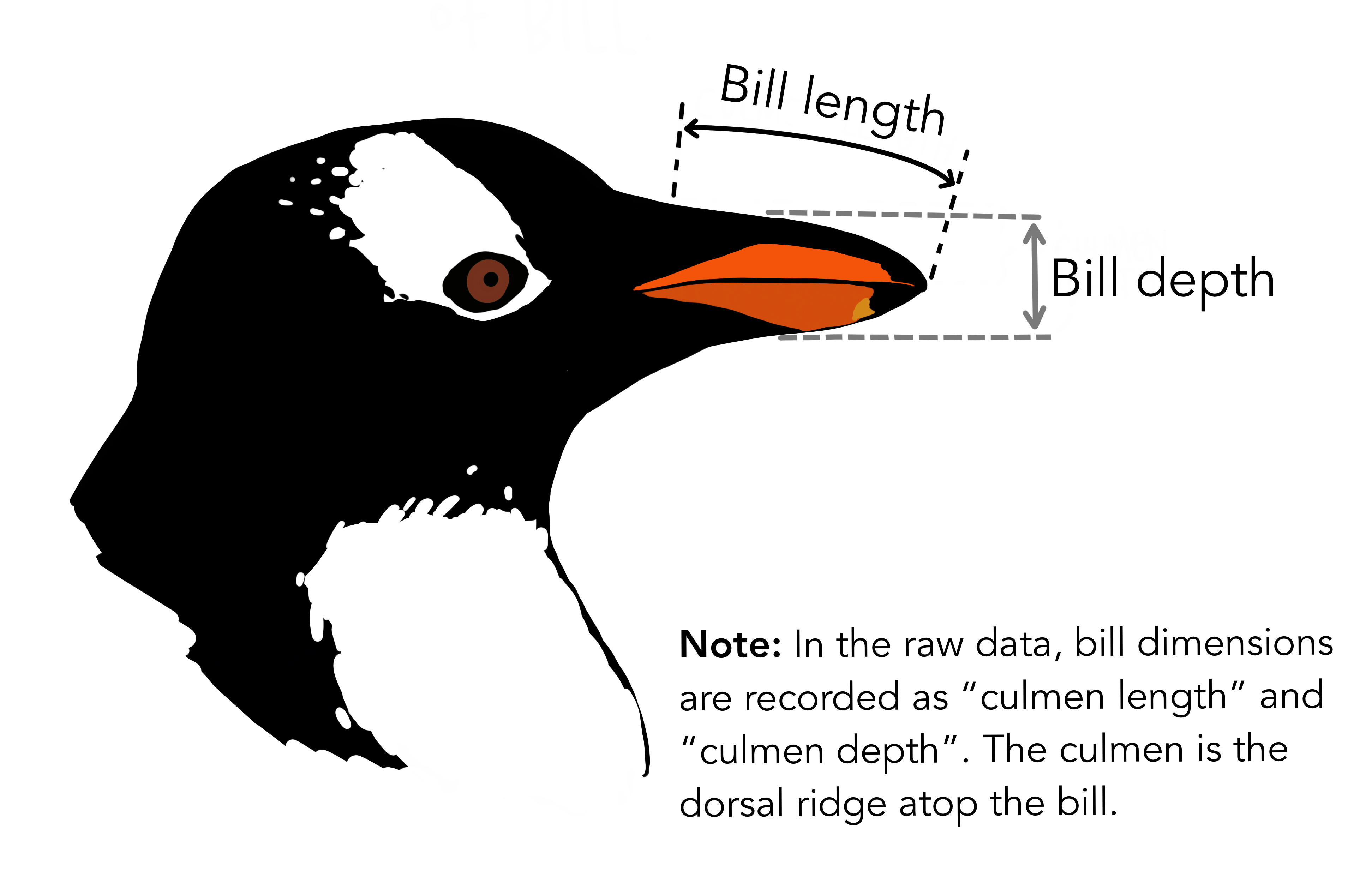

We can see that we have seven columns in total: 4 continuous (numerical) columns named bill_length_mm, bill_depth_mm, flipper_length_mm, and body_mass_g; and 3 discrete (categorical) columns named species, island, and sex. We can also see from a quick inspection of the first 5 samples that we have some missing data in the form of NaN values. Let’s go ahead and remove any rows that contain NaN values:

dataset.dropna(inplace=True)

dataset.head()
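As a small illustration of what dropna does, the sketch below applies it to a tiny made-up table rather than the penguin data. The column names mirror the penguin dataset, but the values are invented for this example.

```python
import numpy as np
import pandas as pd

# Made-up table: rows 1 and 2 each contain a missing value
df_demo = pd.DataFrame({
    "body_mass_g": [3750.0, np.nan, 3250.0],
    "bill_depth_mm": [18.7, 17.4, np.nan],
})
print("rows before:", len(df_demo))

# dropna() returns a new DataFrame without the rows that contain NaN;
# passing inplace=True instead modifies the existing DataFrame
df_clean = df_demo.dropna()
print("rows after:", len(df_clean))
print(df_clean)
```

Note that the surviving rows keep their original index labels, so the index of a cleaned DataFrame may have gaps.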

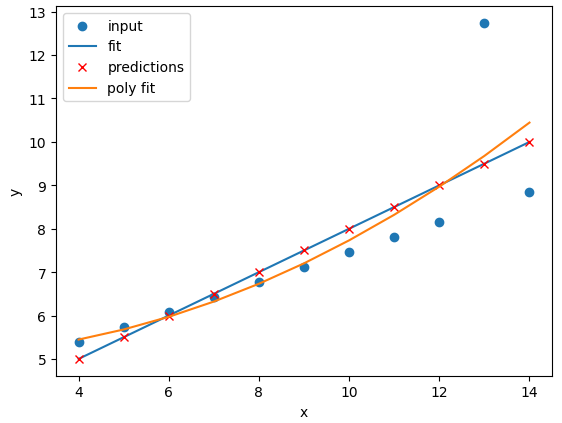

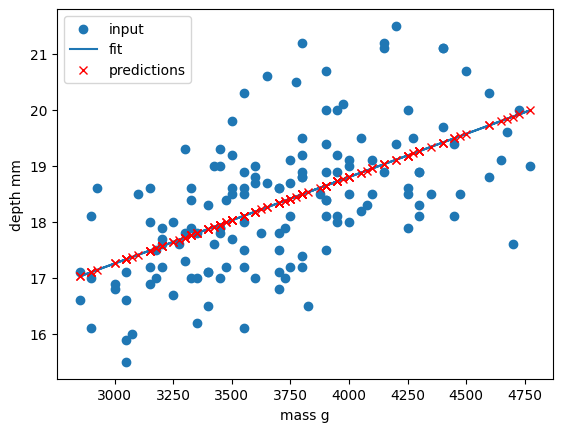

Now that we have cleaned our data we can try to predict a penguin’s bill depth using its body mass. In this scenario we will train a linear regression model using body_mass_g as our feature data and bill_depth_mm as our label data. We will train our model on a subset of the data by slicing the first 146 samples of our cleaned data. We will then use our regression function to train and plot our model.

dataset_1 = dataset[:146]

x_data = dataset_1["body_mass_g"]

y_data = dataset_1["bill_depth_mm"]

trained_model = fit_predict_plot_linear(x_data, y_data)

plt.xlabel("mass g")

plt.ylabel("depth mm")

plt.show()

Congratulations! We’ve taken our linear regression function and quickly created and trained a new linear regression model on a brand new dataset. Note that this time we have returned our model from the regression function and assigned it to the variable trained_model. We can now use this model to predict bill_depth_mm values for any given body_mass_g values that we pass it.

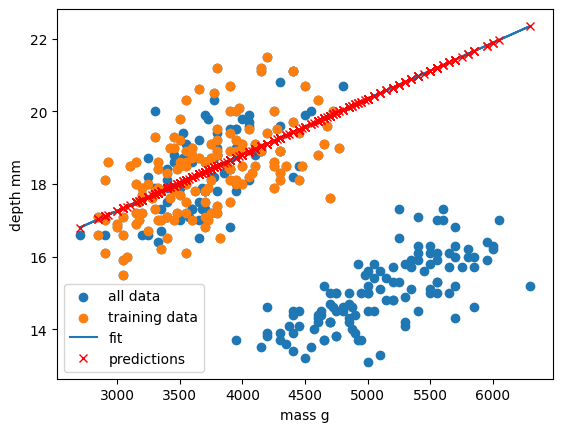

Let’s provide the model with all of the penguin samples and visually inspect how the linear regression model performs.

x_data_all, y_data_all = pre_process_linear(dataset["body_mass_g"], dataset["bill_depth_mm"])

y_predictions = predict_linear_model(trained_model, x_data_all, y_data_all)

plt.scatter(x_data_all, y_data_all, label="all data")

plt.scatter(x_data, y_data, label="training data")

plt.plot(x_data_all, y_predictions, label="fit")

plt.plot(x_data_all, y_predictions, "rx", label="predictions")

plt.xlabel("mass g")

plt.ylabel("depth mm")

plt.legend()

plt.show()

Oh dear. It looks like our linear regression fits okay for our subset of the penguin data, and a few additional samples, but there appears to be a cluster of points that are poorly predicted by our model.

This is a classic Machine Learning scenario known as over-fitting.

We have trained our model on a specific set of data, and our model has learnt to reproduce those specific answers at the expense of creating a more generally-applicable model. Over-fitting is the ML equivalent of learning an exam paper’s mark scheme off by heart, rather than understanding and answering the questions.

Perhaps our model is too simple? Perhaps our data is more complex than we thought? Perhaps our question/goal needs adjusting? Let’s explore the penguin dataset in more depth in the next section!


Supervised methods - Classification

Classification

Classification is a supervised method to recognise and group data objects into pre-determined categories. Where regression uses labelled observations to predict a continuous numerical value, classification predicts a discrete categorical fit to a class. Classification in ML leverages a wide range of algorithms to classify a set of data/datasets into their respective categories.

In this episode we are going to introduce the concept of supervised classification by classifying penguin data into different species of penguins using Scikit-Learn.

The penguins dataset

We’re going to be using the penguins dataset of Allison Horst, published here. The dataset contains 344 size measurements for three penguin species (Chinstrap, Gentoo and Adélie) observed on three islands in the Palmer Archipelago, Antarctica.

The physical attributes measured are flipper length, beak length, beak width, body mass, and sex.

In other words, the dataset contains 344 rows with 7 features i.e. 5 physical attributes, species and the island where the observations were made.

import seaborn as sns

dataset = sns.load_dataset('penguins')

dataset.head()

Our aim is to develop a classification model that will predict the species of a penguin based upon measurements of those variables.

As a rule of thumb for ML/DL modelling, it is best to start with a simple model and progressively add complexity in order to meet our desired classification performance.

For this lesson we will limit our dataset to only the numerical columns bill_length_mm, bill_depth_mm, flipper_length_mm, and body_mass_g while we attempt to classify species.

The above table contains multiple categorical objects such as species. If we attempt to include the other categorical fields, island and sex, we might hinder classification performance due to the complexity of the data.

Preprocessing our data

Let’s do some pre-processing on our dataset and specify our X features and y labels:

# Extract the data we need

feature_names = ['bill_length_mm', 'bill_depth_mm', 'flipper_length_mm', 'body_mass_g']

dataset.dropna(subset=feature_names, inplace=True)

class_names = dataset['species'].unique()

X = dataset[feature_names]

y = dataset['species']

Having extracted our features X and labels y, we can now split the data using the train_test_split function.

Training-testing split

When undertaking any machine learning project, it’s important to be able to evaluate how well your model works.

Rather than evaluating this manually we can instead set aside some of our data, usually around 20%, and use it as a testing dataset. We then train on the remaining 80% and use the testing dataset to evaluate the accuracy of our trained model.

We lose a bit of training data in the process, but we can now easily evaluate the performance of our model. With more advanced test-train split techniques we can even recover this lost training data!

Why do we do this?

It’s important to do this early, and to do all of your work with the training dataset - this avoids any risk of you introducing bias to the model based on your own manual observations of data in the testing set (after all, we want the model to make the decisions about parameters!). This can also highlight when you are over-fitting on your training data.

How we split the data into training and testing sets is also extremely important. We need to make sure that our training data is representative of both our test data and actual data.

For classification problems this means we should ensure that each class of interest is represented proportionately in both training and testing sets. For regression problems we should ensure that our training and test sets cover the range of feature values that we wish to predict.
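One way to keep class proportions the same in both sets is the stratify argument of Scikit-Learn's train_test_split. The sketch below uses a made-up, imbalanced set of labels (30 of class "a" and 10 of class "b") rather than the penguin data, and the variable names are illustrative only.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Made-up data: 40 samples, 75% labelled "a" and 25% labelled "b"
X_demo = np.arange(40).reshape(-1, 1)
y_demo = np.array(["a"] * 30 + ["b"] * 10)

# stratify=y_demo asks for the same class proportions in both splits
X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.2, random_state=0, stratify=y_demo
)

print("train counts:", {label: int((y_tr == label).sum()) for label in ("a", "b")})
print("test counts: ", {label: int((y_te == label).sum()) for label in ("a", "b")})
```

With 8 test samples, the stratified split places 6 of class "a" and 2 of class "b" in the test set, matching the 75/25 balance of the full data.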

In the previous regression episode we created the penguin training data by taking the first 146 samples of the dataset. Unfortunately the penguin data is sorted by species, so our training data only considered one type of penguin and thus was not representative of the actual data we tried to fit. We could have avoided this issue by randomly shuffling our penguin samples before splitting the data.

When not to shuffle your data

Sometimes your data is dependent on its ordering, such as time-series data where past values influence future predictions. Creating train-test splits for this can be tricky at first glance, but fortunately there are existing techniques to tackle this, such as Scikit-Learn’s TimeSeriesSplit: see the Scikit-Learn documentation for more information.

We specify the fraction of data to use as test data, and the function randomly shuffles our data prior to splitting:

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

We’ll use X_train and y_train to develop our model, and only look at X_test and y_test when it’s time to evaluate its performance.

Visualising the data

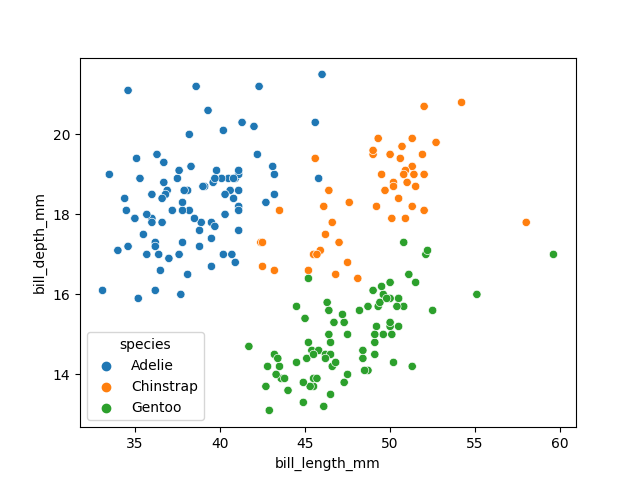

In order to better understand how a model might classify this data, we can first take a look at the data visually, to see what patterns we might identify.

import matplotlib.pyplot as plt

fig01 = sns.scatterplot(X_train, x=feature_names[0], y=feature_names[1], hue=dataset['species'])

plt.show()

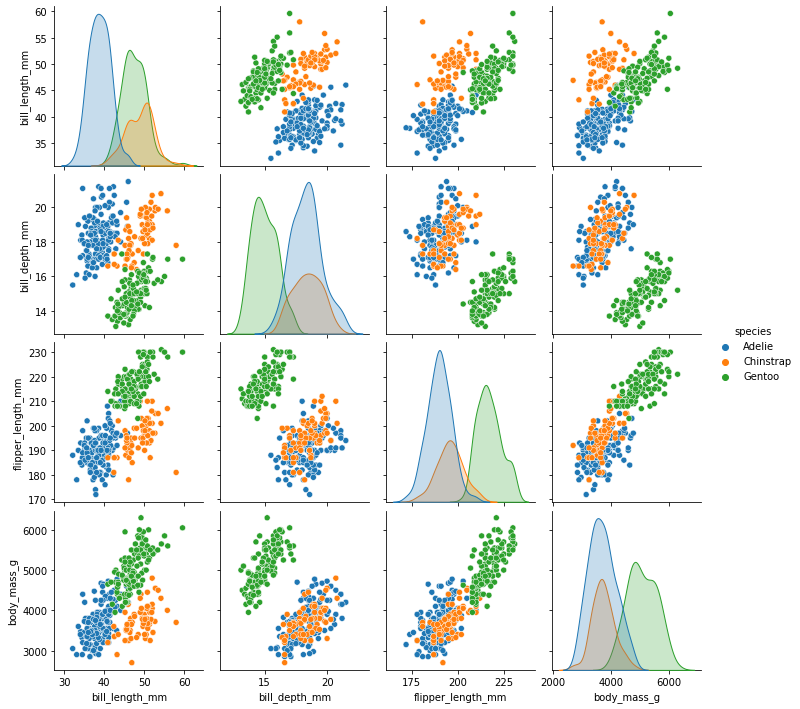

As there are four measurements for each penguin, we need quite a few plots to visualise all four dimensions against each other. Here is a handy Seaborn function to do so:

sns.pairplot(dataset, hue="species")

plt.show()

We can see that penguins from each species form fairly distinct spatial clusters in these plots, so that you could draw lines between those clusters to delineate each species. This is effectively what many classification algorithms do. They use the training data to delineate the observation space, in this case the 4 measurement dimensions, into classes. When given a new observation, the model finds which of those class areas the new observation falls in to.

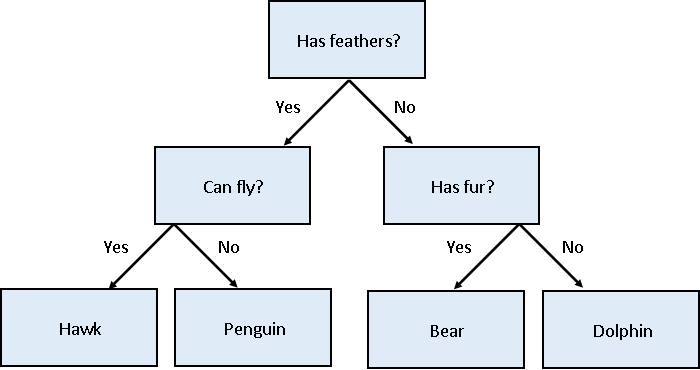

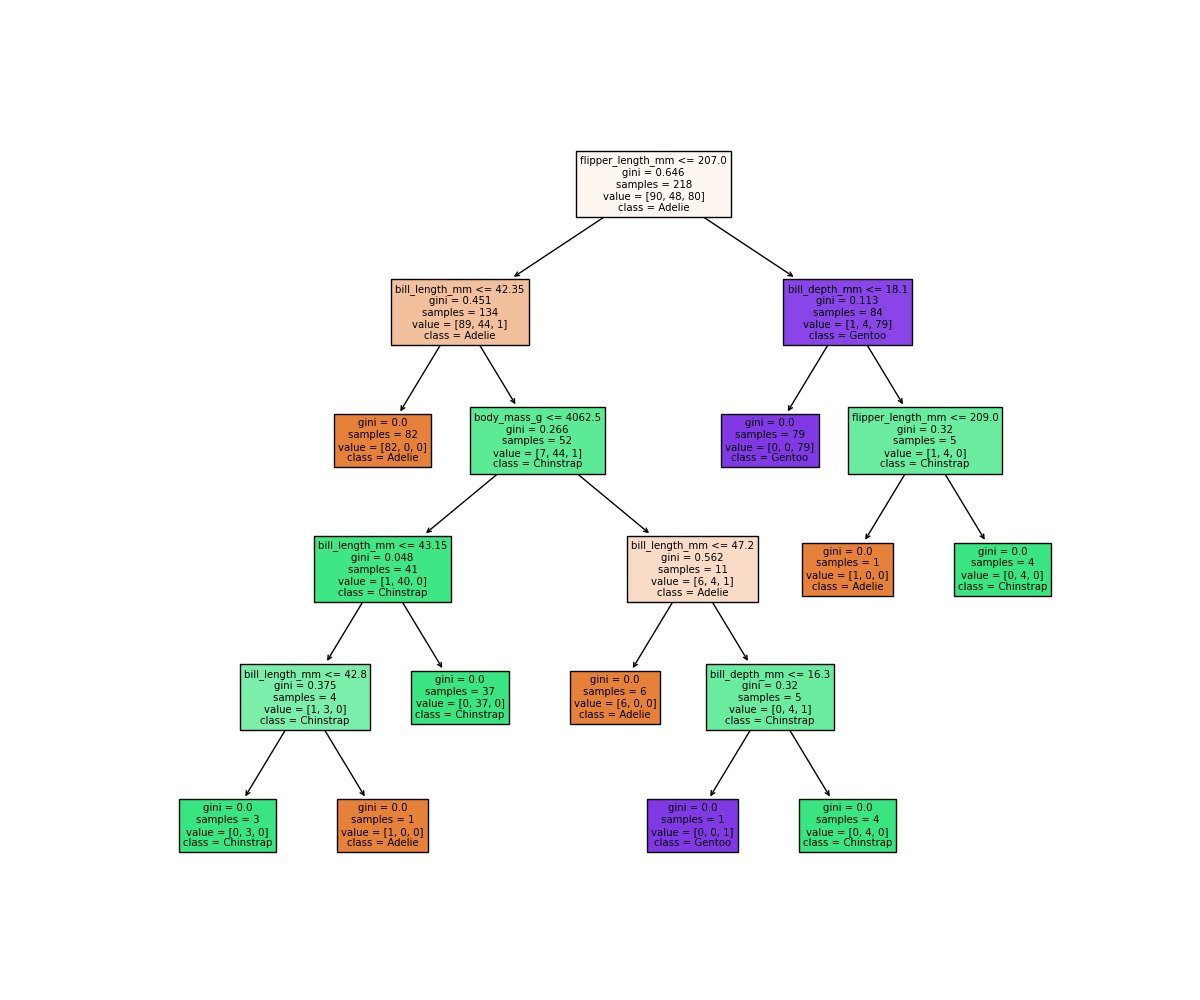

Classification using a decision tree

We’ll first apply a decision tree classifier to the data. Decision trees are conceptually similar to flow diagrams (or more precisely for the biologists: dichotomous keys). They split the classification problem into a binary tree of comparisons, at each step comparing a measurement to a value, and moving left or right down the tree until a classification is reached.

Training and using a decision tree in Scikit-Learn is straightforward:

from sklearn.tree import DecisionTreeClassifier, plot_tree

clf = DecisionTreeClassifier(max_depth=2)

clf.fit(X_train, y_train)

clf.predict(X_test)

Hyper-parameters: parameters that tune a model

‘Max Depth’ is an example of a hyper-parameter for the decision tree model. Where models use the parameters of an observation to predict a result, hyper-parameters are used to tune how a model works. Each model you encounter will have its own set of hyper-parameters, each of which affects model behaviour and performance in a different way. The process of adjusting hyper-parameters in order to improve model performance is called hyper-parameter tuning.
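One common way to automate hyper-parameter tuning is a grid search with cross-validation. The sketch below is a hedged illustration rather than part of the lesson's workflow: it assumes the bundled iris dataset as stand-in data and an arbitrary grid of max_depth values.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

# Stand-in data (assumption): the iris dataset bundled with Scikit-Learn
X_demo, y_demo = load_iris(return_X_y=True)
X_tr, X_te, y_tr, y_te = train_test_split(X_demo, y_demo, test_size=0.2, random_state=0)

# Candidate values for the hyper-parameter we want to tune
param_grid = {"max_depth": [1, 2, 3, 4, 5]}

# Each candidate is scored with 5-fold cross-validation on the training data only
search = GridSearchCV(DecisionTreeClassifier(random_state=0), param_grid, cv=5)
search.fit(X_tr, y_tr)

print("best max_depth:", search.best_params_["max_depth"])
print("test accuracy:", search.score(X_te, y_te))
```

Tuning against cross-validated training scores keeps the test set untouched until the final evaluation.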

We can conveniently check how our model did with the .score() function, which will make predictions and report what proportion of them were accurate:

clf_score = clf.score(X_test, y_test)

print(clf_score)

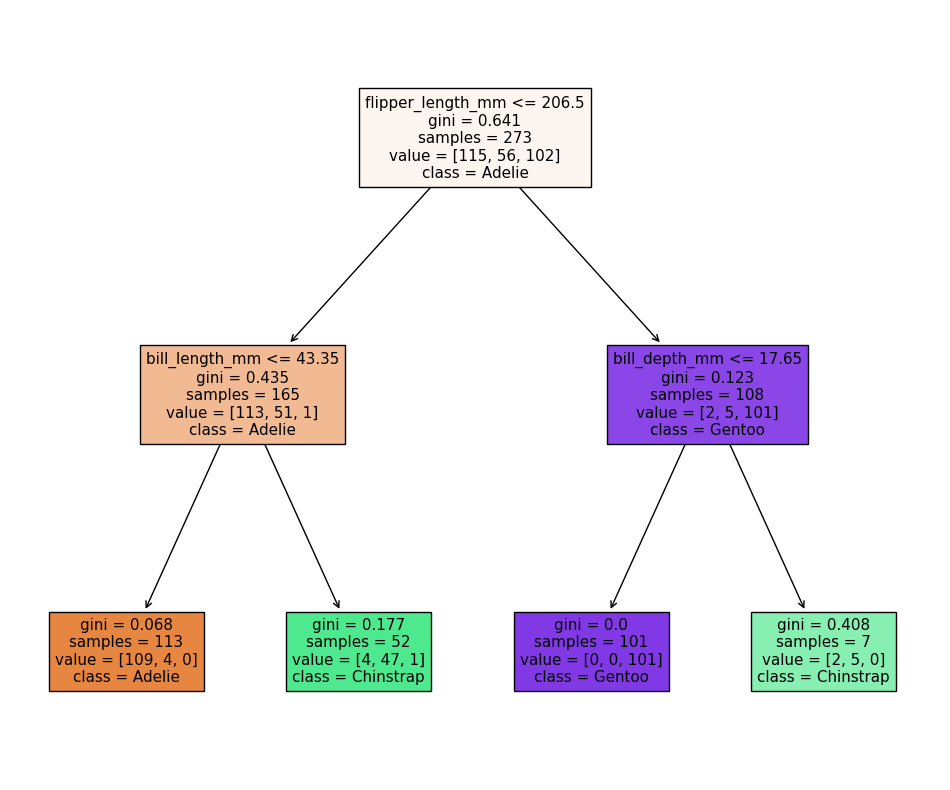

Our model reports an accuracy of ~98% on the test data! We can also look at the decision tree that was generated:

fig = plt.figure(figsize=(12, 10))

plot_tree(clf, class_names=class_names, feature_names=feature_names, filled=True, ax=fig.gca())

plt.show()

The first question (depth=1) splits the training data into “Adelie” and “Gentoo” categories using the criterion flipper_length_mm <= 206.5, and the next two questions (depth=2) split the “Adelie” and “Gentoo” categories into “Adelie & Chinstrap” and “Gentoo & Chinstrap” predictions.
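The questions asked by a fitted tree can also be printed as plain text with Scikit-Learn's export_text function. This is a small sketch on the bundled iris dataset (an assumption, used so that the example runs without downloading the penguin data).

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

# Stand-in data (assumption): the iris dataset bundled with Scikit-Learn
iris = load_iris()
tree = DecisionTreeClassifier(max_depth=2, random_state=0)
tree.fit(iris.data, iris.target)

# Each indented line is one comparison; leaves show the predicted class
rules = export_text(tree, feature_names=list(iris.feature_names))
print(rules)
```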

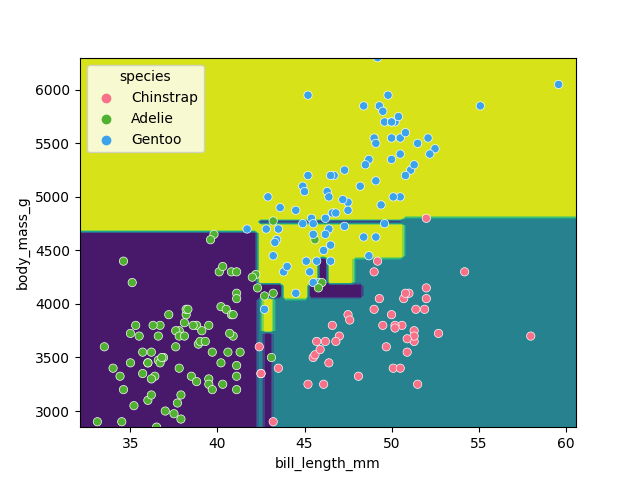

Visualising the classification space

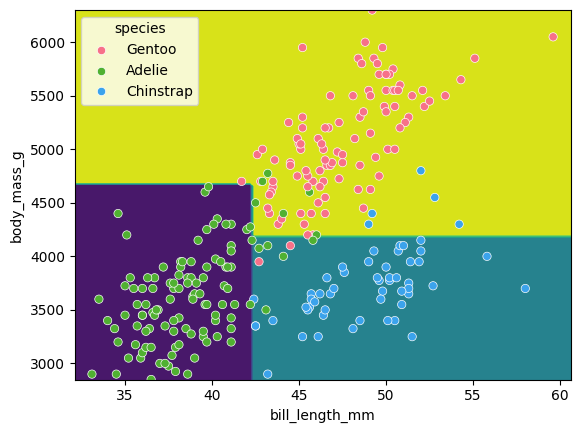

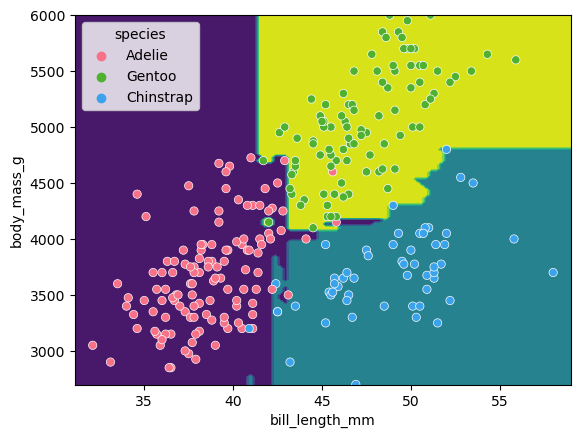

We can visualise the classification space (decision tree boundaries) to get a more intuitive feel for what it is doing. Note that our 2D plot can only show two parameters at a time, so we will quickly visualise by training a new model on only 2 features:

from sklearn.inspection import DecisionBoundaryDisplay

f1 = feature_names[0]

f2 = feature_names[3]

clf = DecisionTreeClassifier(max_depth=2)

clf.fit(X_train[[f1, f2]], y_train)

d = DecisionBoundaryDisplay.from_estimator(clf, X_train[[f1, f2]])

sns.scatterplot(X_train, x=f1, y=f2, hue=y_train, palette="husl")

plt.show()

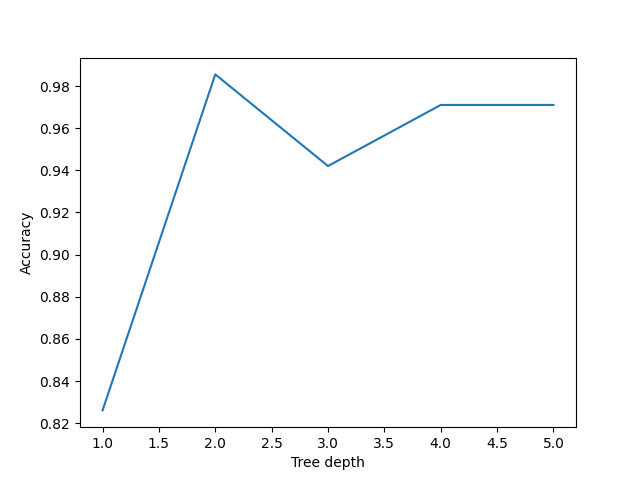

Tuning the max_depth hyperparameter

Our decision tree using a max_depth=2 is fairly simple and there are still some incorrect predictions in our final classifications. Let’s try varying the max_depth hyperparameter to see if we can improve our model predictions.

import pandas as pd

max_depths = [1, 2, 3, 4, 5]

accuracy = []

for i, d in enumerate(max_depths):

    clf = DecisionTreeClassifier(max_depth=d)

    clf.fit(X_train, y_train)

    acc = clf.score(X_test, y_test)

    accuracy.append((d, acc))

acc_df = pd.DataFrame(accuracy, columns=['depth', 'accuracy'])

sns.lineplot(acc_df, x='depth', y='accuracy')

plt.xlabel('Tree depth')

plt.ylabel('Accuracy')

plt.show()

Here we can see that a max_depth=2 performs slightly better on the test data than those with max_depth > 2. This can seem counter-intuitive, as surely more questions should be able to better split up our categories and thus give better predictions?

Let’s reuse our fitting and plotting codes from above to inspect a decision tree that has max_depth=5:

clf = DecisionTreeClassifier(max_depth=5)

clf.fit(X_train, y_train)

fig = plt.figure(figsize=(12, 10))

plot_tree(clf, class_names=class_names, feature_names=feature_names, filled=True, ax=fig.gca())

plt.show()

It looks like our decision tree has split up the training data into the correct penguin categories and more accurately than the max_depth=2 model did, however it used some very specific questions to split up the penguins into the correct categories. Let’s try visualising the classification space for a more intuitive understanding:

f1 = feature_names[0]

f2 = feature_names[3]

clf = DecisionTreeClassifier(max_depth=5)

clf.fit(X_train[[f1, f2]], y_train)

d = DecisionBoundaryDisplay.from_estimator(clf, X_train[[f1, f2]])

sns.scatterplot(X_train, x=f1, y=f2, hue=y_train, palette='husl')

plt.show()

Earlier we saw that the max_depth=2 model split the data into 3 simple bounding boxes, whereas for max_depth=5 we see the model has created some very specific classification boundaries to correctly classify every point in the training data.

This is a classic case of over-fitting - our model has produced extremely specific parameters that work for the training data but are not representative of our test data. Sometimes simplicity is better!
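A quick numerical check for over-fitting is to compare accuracy on the training data with accuracy on the test data as the tree gets deeper. The sketch below assumes synthetic, deliberately noisy data from make_classification rather than the penguin data, so the exact numbers are illustrative only.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic noisy data (assumption): flip_y randomly relabels about 10% of samples
X_demo, y_demo = make_classification(
    n_samples=500, n_features=4, n_informative=2, n_redundant=0,
    flip_y=0.1, random_state=0,
)
X_tr, X_te, y_tr, y_te = train_test_split(X_demo, y_demo, test_size=0.2, random_state=0)

results = {}
for depth in [2, 5, None]:  # None lets the tree grow until every leaf is pure
    tree = DecisionTreeClassifier(max_depth=depth, random_state=0).fit(X_tr, y_tr)
    results[depth] = (tree.score(X_tr, y_tr), tree.score(X_te, y_te))
    print(f"max_depth={depth}: train={results[depth][0]:.3f}, test={results[depth][1]:.3f}")
```

A training score that keeps rising while the test score stalls or falls is the usual signature of over-fitting.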

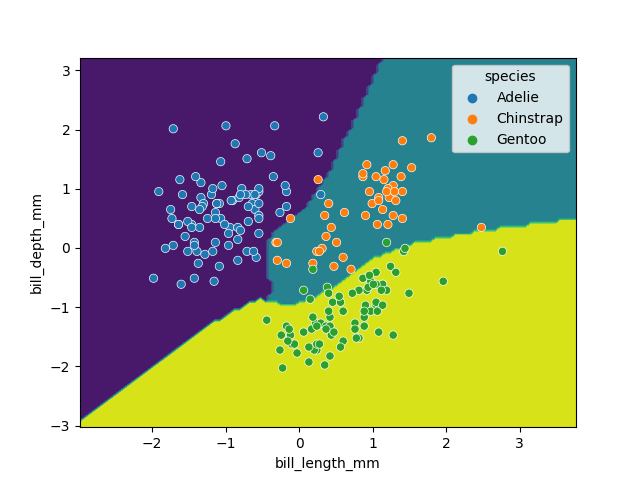

Classification using support vector machines

Next, we’ll look at another commonly used classification algorithm, and see how it compares. Support Vector Machines (SVM) work in a way that is conceptually similar to your own intuition when first looking at the data. They devise a set of hyperplanes that delineate the parameter space, such that each region contains ideally only observations from one class, and the boundaries fall between classes.

Normalising data

Unlike decision trees, SVMs require an additional pre-processing step for our data: we need to normalise it. Our raw data has parameters with different magnitudes, such as bill length measured in tens of millimetres, whereas body mass is measured in thousands of grams. If we trained an SVM directly on this data, the parameter with the greatest variance (body mass) would dominate the result.

Normalising maps each parameter to a new range so that it has a mean of 0 and a standard deviation of 1.

from sklearn import preprocessing

import pandas as pd

scaler = preprocessing.StandardScaler()

scaler.fit(X_train)

X_train_scaled = pd.DataFrame(scaler.transform(X_train), columns=X_train.columns, index=X_train.index)

X_test_scaled = pd.DataFrame(scaler.transform(X_test), columns=X_test.columns, index=X_test.index)

Note that we fit the scaler to our training data - we then use this same pre-trained scaler to transform our testing data.
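To make the transformation concrete, the sketch below reproduces it by hand with NumPy and compares the result with StandardScaler. The small arrays of measurements are hypothetical values chosen for illustration.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical measurements: one column in tens of mm, one in thousands of g
train = np.array([[39.1, 3750.0], [46.5, 5200.0], [50.0, 3800.0], [36.7, 3450.0]])
test = np.array([[42.0, 4100.0]])

# Statistics are computed from the training data only
mean = train.mean(axis=0)
std = train.std(axis=0)  # population standard deviation (ddof=0), as StandardScaler uses

train_manual = (train - mean) / std
test_manual = (test - mean) / std  # the test data reuses the training statistics

reference = StandardScaler().fit(train)
print(np.allclose(train_manual, reference.transform(train)))
print(np.allclose(test_manual, reference.transform(test)))
```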

With this scaled data, training the models works exactly the same as before.

from sklearn import svm

SVM = svm.SVC(kernel='poly', degree=3, C=1.5)

SVM.fit(X_train_scaled, y_train)

svm_score = SVM.score(X_test_scaled, y_test)

print("Decision tree score is ", clf_score)

print("SVM score is ", svm_score)

We can again visualise the decision space produced, also using only two parameters:

x2 = X_train_scaled[[feature_names[0], feature_names[1]]]

SVM = svm.SVC(kernel='poly', degree=3, C=1.5)

SVM.fit(x2, y_train)

DecisionBoundaryDisplay.from_estimator(SVM, x2)

sns.scatterplot(x2, x=feature_names[0], y=feature_names[1], hue=dataset['species'])

plt.show()

While this SVM model performs slightly worse than our decision tree (95.6% vs. 98.5%), it’s likely that the non-linear boundaries will perform better when exposed to more and more real data, as decision trees are prone to overfitting and require complex linear models to reproduce simple non-linear boundaries. It’s important to pick a model that is appropriate for your problem and data trends!

Key Points

Ensemble methods

What’s better than one decision tree? Perhaps two? or three? How about enough trees to make up a forest? Ensemble methods bundle individual models together and use each of their outputs to contribute towards a final consensus for a given problem. Ensemble methods are based on the mantra that the whole is greater than the sum of the parts.

Thinking back to the classification episode with decision trees we quickly stumbled into the problem of overfitting our training data. If we combine predictions from a series of over/under fitting estimators then we can often produce a better final prediction than using a single reliable model - in the same way that humans often hear multiple opinions on a scenario before deciding a final outcome. Decision trees and regressions are often very sensitive to training outliers and so are well suited to be a part of an ensemble.

Ensemble methods are used for a variety of applications including, but not limited to, search systems and object detection. We can use any model/estimator available in Scikit-Learn to create an ensemble. There are three main approaches to creating ensembles:

- Stacking

- Bagging

- Boosting

Let’s explore them in a bit more depth.

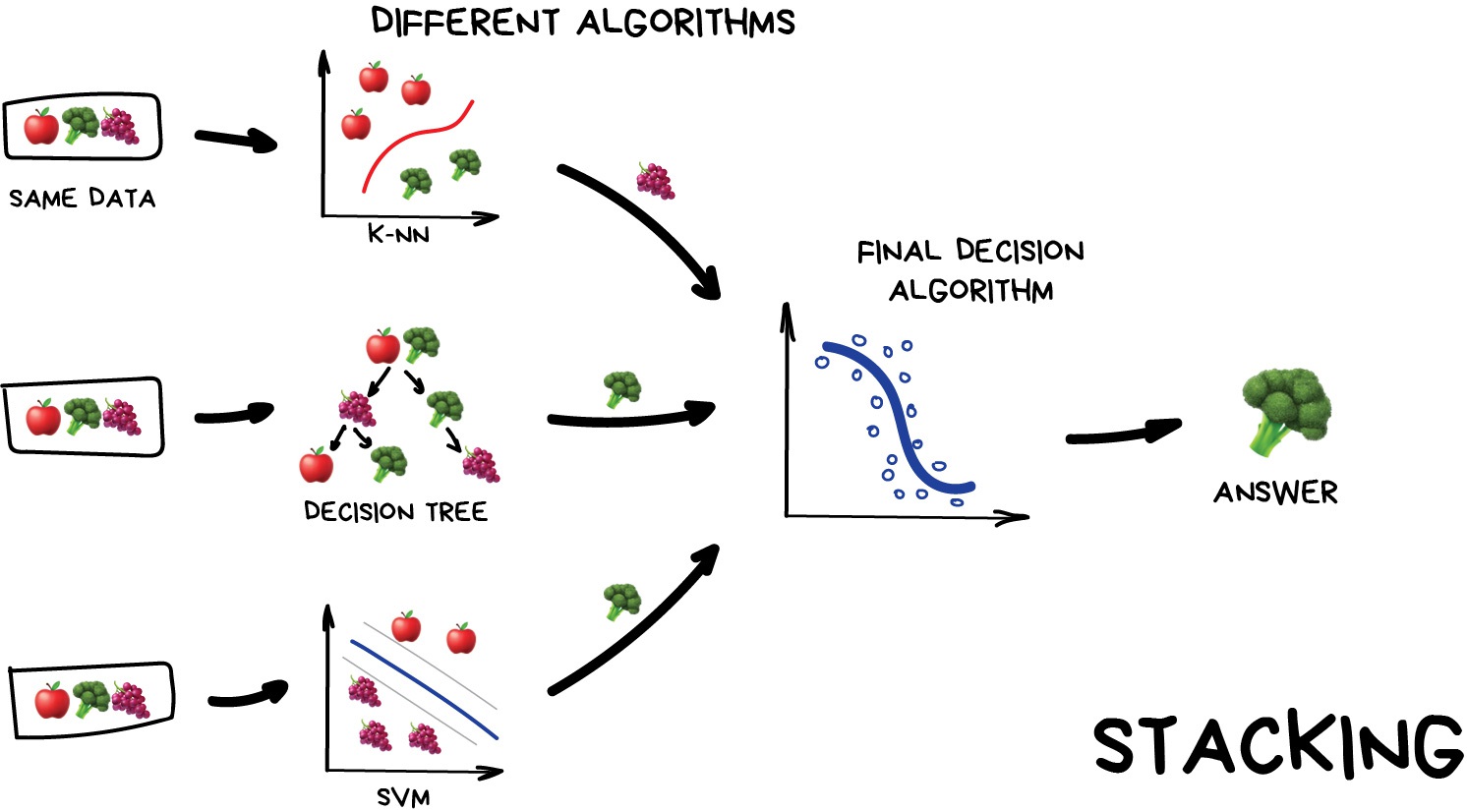

Stacking

This is where we train a series of different models/estimators on the same input data in parallel. We then take the output of each model and pass them into a final decision algorithm/model that makes the final prediction.

If we trained the same model multiple times on the same data we would expect very similar answers, and so the emphasis with stacking is to choose different models that can be used to build up a reliable consensus. Regression is then typically a good choice for the final decision-making model.
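Scikit-Learn provides a StackingRegressor that follows this pattern. The sketch below is an illustration under stated assumptions: the data is synthetic (make_regression), and the choice of a random forest and a k-nearest-neighbours model as base estimators with a linear regression as the final model is arbitrary.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor, StackingRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

# Synthetic regression data (assumption), used only for illustration
X_demo, y_demo = make_regression(n_samples=300, n_features=5, noise=10.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X_demo, y_demo, test_size=0.2, random_state=0)

# Different base models are trained on the same data; their outputs are
# passed to a final regression model that makes the final prediction
stack = StackingRegressor(
    estimators=[
        ("rf", RandomForestRegressor(n_estimators=20, random_state=0)),
        ("knn", KNeighborsRegressor()),
    ],
    final_estimator=LinearRegression(),
)
stack.fit(X_tr, y_tr)

print("stacked score:", stack.score(X_te, y_te))
```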

Image from Vasily Zubarev via their blog

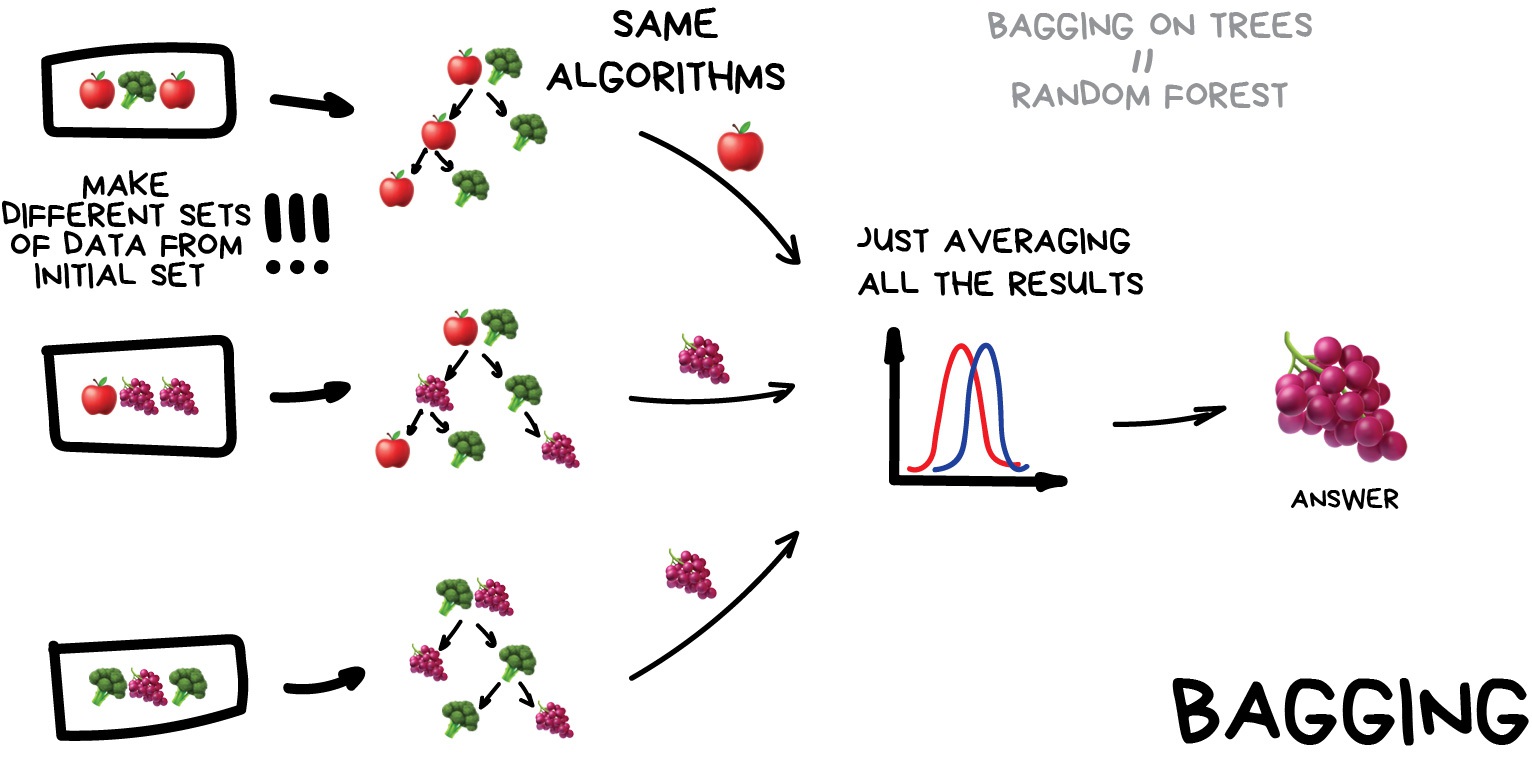

Bagging (a.k.a. Bootstrap AGGregatING)

This is where we use the same model/estimator and fit it on different subsets of the training data. We can then average the results from each model to produce a final prediction. The subsets are random and may even repeat themselves.
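As a minimal sketch of this idea, Scikit-Learn's BaggingClassifier can wrap a decision tree and fit copies of it on random subsets drawn with replacement. The synthetic data and the choice of 10 estimators are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic classification data (assumption), used only for illustration
X_demo, y_demo = make_classification(
    n_samples=400, n_features=4, n_informative=2, n_redundant=0, random_state=0
)
X_tr, X_te, y_tr, y_te = train_test_split(X_demo, y_demo, test_size=0.2, random_state=0)

# The same type of model (a decision tree) is fitted 10 times,
# each time on a different random subset of the training rows
bag = BaggingClassifier(DecisionTreeClassifier(), n_estimators=10, random_state=0)
bag.fit(X_tr, y_tr)

# estimators_samples_ holds the row indices drawn for each fitted tree
first_sample = bag.estimators_samples_[0]
print("rows drawn for tree 0:   ", len(first_sample))
print("distinct rows for tree 0:", len(set(first_sample)))
print("test accuracy:", bag.score(X_te, y_te))
```

Fewer distinct rows than drawn rows shows that some samples were repeated within a subset.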

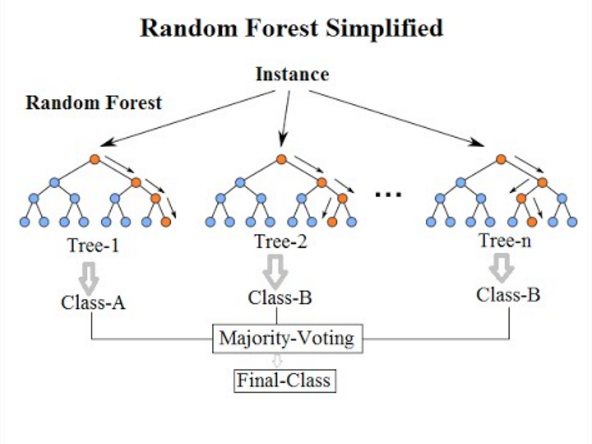

The most common example is known as the Random Forest algorithm, which we’ll take a look at later on. Random Forests are typically used as a faster, computationally cheaper alternative to Neural Networks, which is ideal for real-time applications like camera face detection prompts.

Image from Vasily Zubarev via their blog

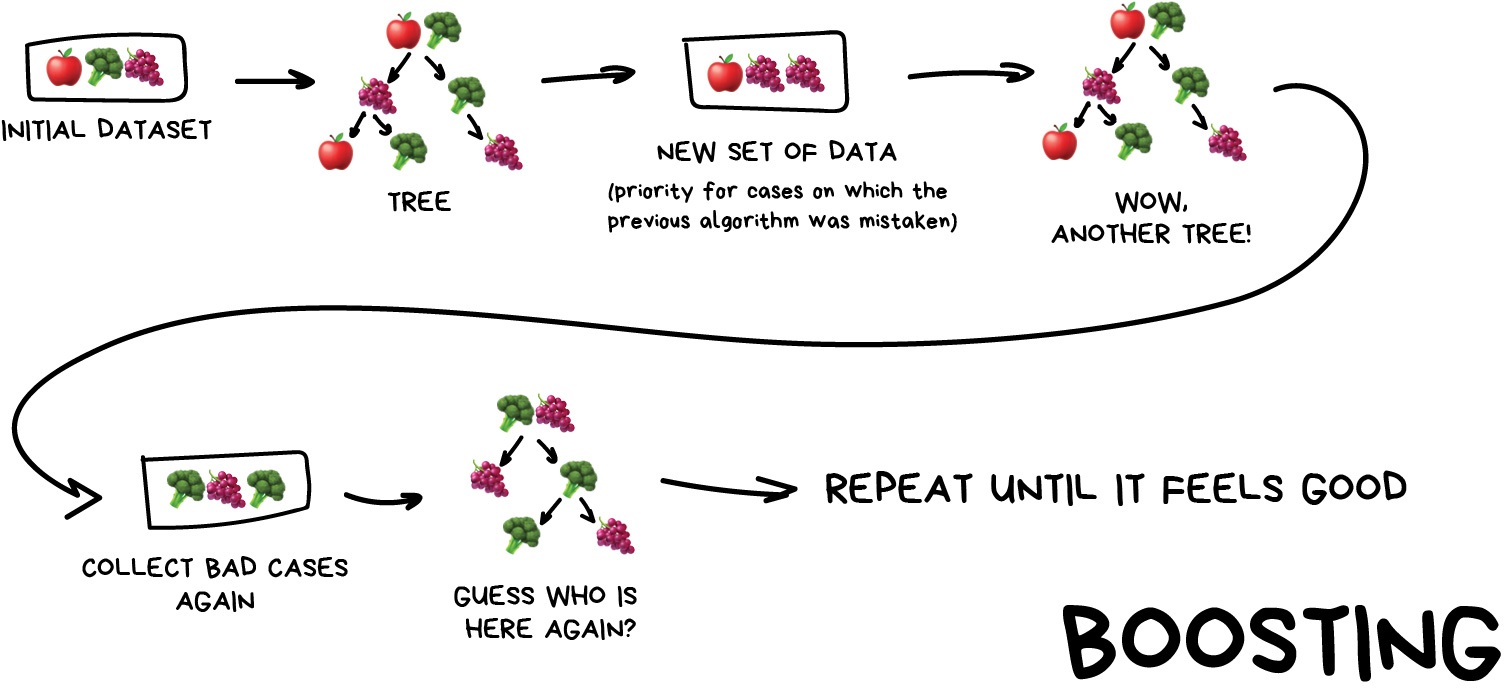

Boosting

This is where we train a single type of model/estimator on an initial dataset, test its accuracy, and then subsequently train the same type of model on poorly predicted samples, i.e. each new model pays most attention to data that were incorrectly predicted by the last one.

Just like bagging, boosting is trained mostly on subsets; however, in this case these subsets are not randomly generated but are instead built using poorly estimated predictions. Boosting can produce some very high accuracies by learning from its mistakes, but due to the iterative nature of these improvements it doesn’t parallelize well, unlike the other ensemble methods. Despite this it can still be a faster and computationally cheaper alternative to Neural Networks.
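AdaBoost is one widely used boosting algorithm, and Scikit-Learn's AdaBoostClassifier can show the iterative nature of boosting through its staged_score method. The sketch below assumes synthetic data and an arbitrary limit of 20 models; it is an illustration, not a tuned model.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import AdaBoostClassifier
from sklearn.model_selection import train_test_split

# Synthetic, slightly noisy classification data (assumption)
X_demo, y_demo = make_classification(
    n_samples=400, n_features=4, n_informative=2, n_redundant=0,
    flip_y=0.05, random_state=0,
)
X_tr, X_te, y_tr, y_te = train_test_split(X_demo, y_demo, test_size=0.2, random_state=0)

# Models are trained in series; each one gives more weight to the samples
# that the previous models predicted incorrectly
boost = AdaBoostClassifier(n_estimators=20, random_state=0)
boost.fit(X_tr, y_tr)

# staged_score yields the test accuracy after each model is added in turn
staged = list(boost.staged_score(X_te, y_te))
print("accuracy after the first model:", staged[0])
print("accuracy after the last model: ", staged[-1])
```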

Image from Vasily Zubarev via their blog

Ensemble summary

Machine learning jargon can often be hard to remember, so here is a quick summary of the 3 ensemble methods:

- Stacking - same dataset, different models, trained in parallel

- Bagging - different subsets, same models, trained in parallel

- Boosting - subsets of bad estimates, same models, trained in series

Using Bagging (Random Forests) for a classification problem

In this session we’ll take another look at the penguins data and apply one of the most common bagging approaches, random forests, to try and solve our species classification problem. First we’ll load in the dataset and define a train and test split.

# import libraries

import numpy as np

import pandas as pd

import seaborn as sns

from sklearn.model_selection import train_test_split

# load penguins data

penguins = sns.load_dataset('penguins')

# prepare and define our data and targets

feature_names = ['bill_length_mm', 'bill_depth_mm', 'flipper_length_mm', 'body_mass_g']

penguins.dropna(subset=feature_names, inplace=True)

species_names = penguins['species'].unique()

X = penguins[feature_names]

y = penguins.species

# Split data in training and test set

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=5)

print("train size:", X_train.shape)

print("test size", X_test.shape)

We’ll now take a look at how we can use ensemble methods to perform a classification task such as identifying penguin species! We’re going to use a random forest classifier available in Scikit-Learn, which is a widely used example of a bagging approach.

Random forests are built on decision trees and can provide another way to address over-fitting. Rather than classifying based on one single decision tree (which could overfit the data), the results of many trees are averaged to give estimates that are more robust and accurate than those of any single tree in the ensemble.

We can now define a random forest estimator and train it using the penguin training data. We have a similar set of attributes to the DecisionTreeClassifier but with an extra parameter called n_estimators which is the number of trees in the forest.
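The averaging step can be checked directly: in Scikit-Learn, the class probabilities reported by a random forest are the mean of the probabilities reported by its individual trees. The sketch below assumes the bundled iris dataset and a forest of 25 trees, both chosen only for illustration.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier

# Stand-in data (assumption): the iris dataset bundled with Scikit-Learn
X_demo, y_demo = load_iris(return_X_y=True)
forest_demo = RandomForestClassifier(n_estimators=25, random_state=0).fit(X_demo, y_demo)

# Collect the class probabilities predicted by every individual tree...
per_tree = np.array([tree.predict_proba(X_demo) for tree in forest_demo.estimators_])

# ...and average them across the trees
averaged = per_tree.mean(axis=0)

print(np.allclose(averaged, forest_demo.predict_proba(X_demo)))
```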

from sklearn.ensemble import RandomForestClassifier

from sklearn.tree import plot_tree

# Define our model

# extra parameter called n_estimators which is number of trees in the forest

# a leaf is a class label at the end of the decision tree

forest = RandomForestClassifier(n_estimators=100, max_depth=7, min_samples_leaf=1)

# train our model

forest.fit(X_train, y_train)

# Score our model

print(forest.score(X_test, y_test))

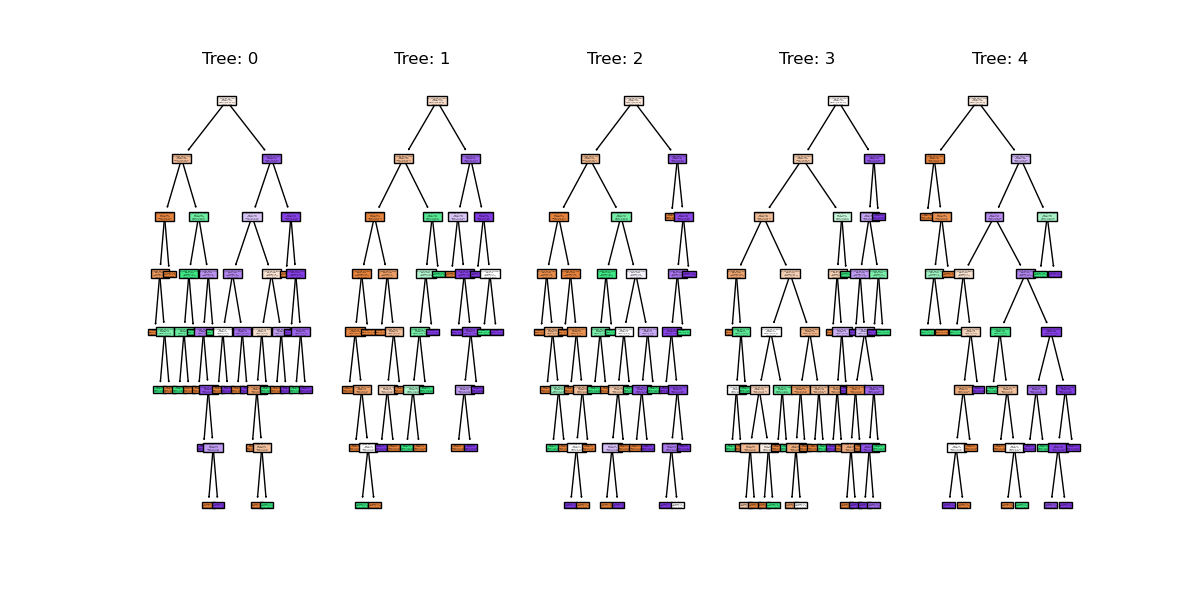

You might notice that we have a different value (hopefully increased) compared with the decision tree classifier used above on the same training data. Let’s plot the first 5 trees in the forest to get an idea of how this model differs from a single decision tree.

import matplotlib.pyplot as plt

fig, axes = plt.subplots(nrows=1, ncols=5 ,figsize=(12,6))

# plot first 5 trees in forest

for index in range(0, 5):

    plot_tree(forest.estimators_[index],

              class_names=species_names,

              feature_names=feature_names,

              filled=True,

              ax=axes[index])

    axes[index].set_title(f'Tree: {index}')

plt.show()

We can see the first 5 (of 100) trees that were fitted as part of the forest.

If we train the random forest estimator using the same two parameters used to plot the classification space for the decision tree classifier, what do we think the plot will look like?

# lets train a random forest for only two features (body mass and bill length)

from sklearn.inspection import DecisionBoundaryDisplay

f1 = feature_names[0]

f2 = feature_names[3]

# plot classification space for body mass and bill length with random forest

forest_2d = RandomForestClassifier(n_estimators=100, max_depth=7, min_samples_leaf=1, random_state=5)

forest_2d.fit(X_train[[f1, f2]], y_train)

# Lets plot the decision boundaries made by the model for the two trained features

d = DecisionBoundaryDisplay.from_estimator(forest_2d, X_train[[f1, f2]])

sns.scatterplot(X_train, x=f1, y=f2, hue=y_train, palette="husl")

plt.show()

There is still some overfitting indicated by the regions that contain only single points but using the same hyper-parameter settings used to fit the decision tree classifier, we can see that overfitting is reduced.

Stacking a regression problem

We’ve had a look at a bagging approach, but we’ll now take a look at a stacking approach and apply it to a regression problem. We’ll also introduce a new dataset to play around with.

California house price prediction

The California housing dataset for regression problems contains 8 training features, such as Median Income, House Age, Average Rooms and Average Bedrooms, for 20,640 properties. The target variable is the median house value for those 20,640 properties; note that all prices are in units of $100,000. This toy dataset is available as part of the Scikit-Learn library. We’ll start by loading the dataset to very briefly inspect the attributes by printing them out.

import sklearn

from sklearn.datasets import fetch_california_housing

# load the dataset

X, y = fetch_california_housing(return_X_y=True, as_frame=True)

## All price variables are in units of $100,000

print(X.shape)

print(X.head())

print("Housing price as the target: ")

## Target is in units of $100,000

print(y.head())

print(y.shape)

For the purposes of learning how to create and use ensemble methods, and since it is a toy dataset, we will blindly use this dataset without inspecting, cleaning or pre-processing it further.

Exercise: Investigate and visualise the dataset

For this episode we simply want to learn how to build and use an Ensemble rather than actually solve a regression problem. To build up your skills as an ML practitioner, investigate and visualise this dataset. What can you say about the dataset itself, and what can you summarise about any potential relationships or prediction problems?

Let’s start by splitting the dataset into training and testing subsets:

# split into train and test sets, We are selecting an 80%-20% train-test split.

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=5)

print(f'train size: {X_train.shape}')

print(f'test size: {X_test.shape}')

Let’s stack a series of regression models. In the same way the RandomForest classifier derives a result from a series of trees, we will combine the results from a series of different models in our stack. This is done using an ensemble meta-estimator called a VotingRegressor.

We’ll apply a Voting regressor to a random forest, gradient boosting and linear regressor.

But wait, aren’t random forests/decision trees for classification problems?

Yes they are, but quite often in machine learning various models can be used to solve both regression and classification problems.

Decision trees in particular can be used to “predict” specific numerical values instead of categories, essentially by binning a group of values into a single value.

This works well for periodic/repeating numerical data. These trees are extremely sensitive to the data they are trained on, which makes them a very good model to use as a Random Forest.
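The binning behaviour can be seen by counting the distinct values a regression tree predicts: there can be no more of them than there are leaves. The sketch below assumes a hypothetical noisy sine curve as data and an arbitrary max_depth of 3.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Hypothetical data: a noisy sine curve sampled at 200 points
rng = np.random.default_rng(0)
x = np.sort(rng.uniform(0, 10, 200)).reshape(-1, 1)
y = np.sin(x).ravel() + rng.normal(0, 0.1, 200)

reg = DecisionTreeRegressor(max_depth=3, random_state=0).fit(x, y)
pred = reg.predict(x)

# Every sample that lands in the same leaf receives the same predicted value
print("number of leaves:         ", reg.get_n_leaves())
print("distinct predicted values:", len(np.unique(pred)))
```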

But wait again, isn’t a random forest (and a gradient boosting model) an ensemble method instead of a regression model?

Yes they are, but they can be thought of as one big complex model used like any other model. The awesome thing about ensemble methods, and the generalisation of Scikit-Learn models, is that you can put an ensemble in an ensemble!

A VotingRegressor can train several base estimators on the whole dataset, and it can take the average of the individual predictions to form a final prediction.

from sklearn.ensemble import (

GradientBoostingRegressor,

RandomForestRegressor,

VotingRegressor,

)

from sklearn.linear_model import LinearRegression

# Initialize estimators

rf_reg = RandomForestRegressor(random_state=5)

gb_reg = GradientBoostingRegressor(random_state=5)

linear_reg = LinearRegression()

voting_reg = VotingRegressor([("rf", rf_reg), ("gb", gb_reg), ("lr", linear_reg)])

# fit/train voting estimator

voting_reg.fit(X_train, y_train)

# lets also fit/train the individual models for comparison

rf_reg.fit(X_train, y_train)

gb_reg.fit(X_train, y_train)

linear_reg.fit(X_train, y_train)

We fit the voting regressor in the same way we would fit a single model. When the voting regressor is instantiated we pass it a parameter containing a list of tuples that contain the estimators we wish to stack: in this case the random forest, gradient boosting and linear regressors. To get a sense of what this is doing let’s predict the first 20 samples in the test portion of the data and plot the results.

import matplotlib.pyplot as plt

# make predictions

X_test_20 = X_test[:20] # first 20 for visualisation

rf_pred = rf_reg.predict(X_test_20)

gb_pred = gb_reg.predict(X_test_20)

linear_pred = linear_reg.predict(X_test_20)

voting_pred = voting_reg.predict(X_test_20)

plt.figure()

plt.plot(gb_pred, "o", color="black", label="GradientBoostingRegressor")

plt.plot(rf_pred, "o", color="blue", label="RandomForestRegressor")

plt.plot(linear_pred, "o", color="green", label="LinearRegression")

plt.plot(voting_pred, "x", color="red", ms=10, label="VotingRegressor")

plt.tick_params(axis="x", which="both", bottom=False, top=False, labelbottom=False)

plt.ylabel("predicted")

plt.xlabel("test samples")

plt.legend(loc="best")

plt.title("Regressor predictions and their average")

plt.show()

Finally, let’s see how the average compares against each single estimator in the stack.

print(f'random forest: {rf_reg.score(X_test, y_test)}')

print(f'gradient boost: {gb_reg.score(X_test, y_test)}')

print(f'linear regression: {linear_reg.score(X_test, y_test)}')

print(f'voting regressor: {voting_reg.score(X_test, y_test)}')

Each of our models scores between 0.61 and 0.82 (for a regressor, the .score() function reports the R² coefficient of determination), which at the high end is good, but at the low end is a pretty poor score. Do note that the toy datasets are not representative of real world data. However, what we can see is that the stacked result generated by the voting regressor fits different sub-models and then averages the individual predictions to form a final prediction. The benefit of this approach is that it reduces overfitting and increases generalizability. Of course, we could try to improve our score by tweaking our individual model hyperparameters, using more advanced boosted models or adjusting our training data features and train-test split.
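The averaging performed by the voting regressor can be verified by hand. The sketch below assumes synthetic data from make_regression and a smaller pair of sub-models than the ones used above, purely to keep the example quick to run.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor, VotingRegressor
from sklearn.linear_model import LinearRegression

# Synthetic regression data (assumption), used only for illustration
X_demo, y_demo = make_regression(n_samples=200, n_features=4, noise=5.0, random_state=0)

voter = VotingRegressor([
    ("rf", RandomForestRegressor(n_estimators=20, random_state=0)),
    ("lr", LinearRegression()),
])
voter.fit(X_demo, y_demo)

# estimators_ holds the fitted copies of the sub-models inside the ensemble
individual = np.array([est.predict(X_demo) for est in voter.estimators_])
manual_average = individual.mean(axis=0)

print(np.allclose(manual_average, voter.predict(X_demo)))
```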

Exercise: Stacking a classification problem.

Scikit-Learn also has a method for stacking ensemble classifiers, sklearn.ensemble.VotingClassifier. Do you think you could apply a stack to the penguins dataset using a random forest, SVM and decision tree classifier, or a selection of any other classifier estimators available in Scikit-Learn?

penguins = sns.load_dataset('penguins')

feature_names = ['bill_length_mm', 'bill_depth_mm', 'flipper_length_mm', 'body_mass_g']

penguins.dropna(subset=feature_names, inplace=True)

species_names = penguins['species'].unique()

# Define data and targets

X = penguins[feature_names]

y = penguins.species

# Split data in training and test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=5)

print(f'train size: {X_train.shape}')

print(f'test size: {X_test.shape}')

The code above loads the penguins data and splits it into test and training portions. Have a play around with stacking some classifiers using sklearn.ensemble.VotingClassifier, using the code comments below as a guide.

# import classifiers

# instantiate classifiers

# fit classifiers

# instantiate voting classifier and fit data

# make predictions

# compare scores

Key Points

Unsupervised methods - Clustering

Unsupervised learning

In episode 2 we learnt about supervised learning. Now it is time to explore unsupervised learning.

Sometimes we do not have the luxury of using labelled data. This could be for a number of reasons:

- We have labelled data, but not enough to accurately train our model

- Our existing labelled data is low-quality or inaccurate

- It is too time-consuming to (manually) label more data

- We have data, but no idea what correlations might exist that we could model!

In this case we need to use unsupervised learning. As the name suggests, this time we do not “supervise” the ML algorithm by providing it labels, but instead we let it try to find its own patterns in the data and report back on any correlations that it might find. You can think of unsupervised learning as a way to discover labels from the data itself.

Clustering

Clustering is the grouping of data points which are similar to each other. It can be a powerful technique for identifying patterns in data. Clustering analysis does not usually require any training and is therefore known as an unsupervised learning technique. Clustering can be applied quickly due to this lack of training.

Applications of clustering

- Looking for trends in data

- Reducing the data around a point to just that point (e.g. reducing colour depth in an image)

- Pattern recognition

K-means clustering

The k-means clustering algorithm is a simple clustering algorithm that tries to identify the centre of each cluster. It does this by searching for a point which minimises the distance between the centre and all the points in the cluster. The algorithm needs to be told how many k clusters to look for, but a common technique is to try different numbers of clusters and combine it with other tests to decide on the best combination.
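The idea can be sketched in a few lines of NumPy. The function below follows the usual textbook iteration (assign each point to its nearest centre, then move each centre to the mean of its points); it is a simplified illustration, not Scikit-Learn's implementation, and the function name kmeans_sketch and the demo data are hypothetical.

```python
import numpy as np

def kmeans_sketch(points, k, n_iter=20, seed=0):
    """Simplified k-means: alternate between assigning points and updating centres."""
    rng = np.random.default_rng(seed)
    # Start from k distinct data points chosen at random
    centres = points[rng.choice(len(points), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # Assignment step: label each point with the index of its nearest centre
        distances = np.linalg.norm(points[:, None, :] - centres[None, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Update step: move each centre to the mean of the points assigned to it
        for j in range(k):
            members = points[labels == j]
            if len(members) > 0:  # keep the old centre if a cluster is empty
                centres[j] = members.mean(axis=0)
    return centres, labels

# Two hypothetical, well-separated groups of 50 points each
rng = np.random.default_rng(1)
demo_points = np.vstack([rng.normal(0, 0.5, (50, 2)), rng.normal(5, 0.5, (50, 2))])

centres, labels = kmeans_sketch(demo_points, k=2)
print("cluster centres:\n", centres)
```

A fixed number of iterations keeps the sketch short; practical implementations stop once the centres no longer move.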

Hyper-parameters again

‘K’ is also an example of a hyper-parameter for the k-means clustering technique. Another example of a hyper-parameter is the N-degrees of freedom for polynomial regression. Keep an eye out for others throughout the lesson!

K-means with Scikit-Learn

To perform a k-means clustering with Scikit-Learn we first need to import the sklearn.cluster module.

import sklearn.cluster as skl_cluster

For this example, we’re going to use Scikit-Learn’s built-in ‘random data blob generator’ instead of using an external dataset. Therefore we’ll need the sklearn.datasets module.

import sklearn.datasets as skl_datasets

Now let’s create some random blobs using the make_blobs function. The n_samples argument sets how many points we want to use in all of our blobs while cluster_std sets the standard deviation of the points. The smaller this value the closer together they will be. centers sets how many clusters we’d like. random_state is the initial state of the random number generator. By specifying this value we’ll get the same results every time we run the program. If we don’t specify a random state then we’ll get different points every time we run. This function returns two things: an array of data points and a list of which cluster each point belongs to.

import matplotlib.pyplot as plt

# Let's define some functions here to avoid repetitive code

def plots_labels(data, labels):

    tx = data[:, 0]

    ty = data[:, 1]

    fig = plt.figure(1, figsize=(4, 4))

    plt.scatter(tx, ty, edgecolor='k', c=labels)

    plt.show()

def plot_clusters(data, clusters, Kmean):

    tx = data[:, 0]

    ty = data[:, 1]

    fig = plt.figure(1, figsize=(4, 4))

    plt.scatter(tx, ty, s=5, linewidth=0, c=clusters)

    for cluster_x, cluster_y in Kmean.cluster_centers_:

        plt.scatter(cluster_x, cluster_y, s=100, c='r', marker='x')

    plt.show()

Let’s create the clusters.

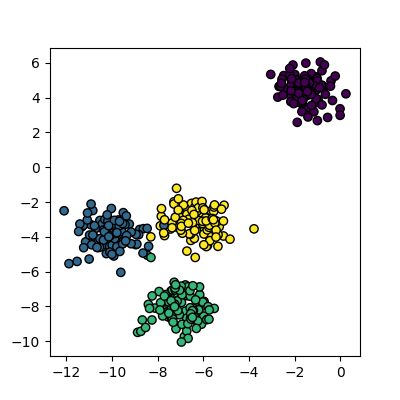

data, cluster_id = skl_datasets.make_blobs(n_samples=400, cluster_std=0.75, centers=4, random_state=1)

plots_labels(data, cluster_id)

Now that we have some data we can try to identify the clusters using k-means. First, we need to initialise the KMeans module and tell it how many clusters to look for. Next, we supply it with some data via the fit function, in much the same way we did with the regression functions earlier on. Finally, we run the predict function to find the clusters.

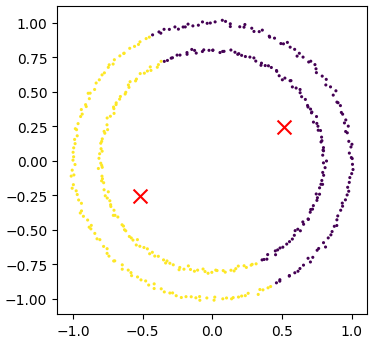

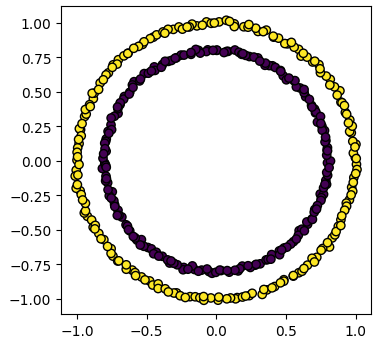

Kmean = skl_cluster.KMeans(n_clusters=4)

Kmean.fit(data)

clusters = Kmean.predict(data)

The data can now be plotted to show all the points we randomly generated. To make it clearer which cluster points have been classified we can set the colours (the c parameter) to use the clusters list that was returned by the predict function. The Kmeans algorithm also lets us know where it identified the centre of each cluster. These are stored as a list called ‘cluster_centers_’ inside the Kmean object. Let’s plot the points from the clusters, colouring them by the output from the K-means algorithm, and also plot the centres of each cluster as a red X.

plot_clusters(data, clusters, Kmean)

Here is the code all in a single block.

import sklearn.cluster as skl_cluster

import sklearn.datasets as skl_datasets

import matplotlib.pyplot as plt

data, cluster_id = skl_datasets.make_blobs(n_samples=400, cluster_std=0.75, centers=4, random_state=1)

Kmean = skl_cluster.KMeans(n_clusters=4)

Kmean.fit(data)

clusters = Kmean.predict(data)

plot_clusters(data, clusters, Kmean)

Working in multiple dimensions

Although this example shows two dimensions, the k-means algorithm can work in more than two. It becomes very difficult to show this visually once we get beyond 3 dimensions. It’s very common in machine learning to be working with multiple variables and so our classifiers are working in multi-dimensional spaces.

Limitations of k-means

- Requires number of clusters to be known in advance

- Struggles when clusters have irregular shapes

- Will always produce an answer finding the required number of clusters even if the data isn’t clustered (or clustered in that many clusters)

- Requires linear cluster boundaries

Advantages of k-means

- Simple algorithm and fast to compute

- A good choice as the first thing to try when attempting to cluster data

- Suitable for large datasets due to its low memory and computing requirements

Exercise: K-Means with overlapping clusters

Adjust the program above to increase the standard deviation of the blobs (the cluster_std parameter to make_blobs) and increase the number of samples (n_samples) to 4000. You should start to see the clusters overlapping. Do the clusters that are identified make sense? Is there any strange behaviour?

Solution

Increasing n_samples to 4000 and cluster_std to 3.0 looks like this:

The straight line boundaries between clusters look a bit strange.

Exercise: How many clusters should we look for?

Using k-means requires us to specify the number of clusters to expect. A common strategy to get around this is to vary the number of clusters we are looking for. Modify the program to loop through searching for between 2 and 10 clusters. Which (if any) of the results look more sensible? What criteria might you use to select the best one?

Solution

for cluster_count in range(2,11):

    Kmean = skl_cluster.KMeans(n_clusters=cluster_count)

    Kmean.fit(data)

    clusters = Kmean.predict(data)

    plt.scatter(data[:, 0], data[:, 1], s=5, linewidth=0,c=clusters)

    for cluster_x, cluster_y in Kmean.cluster_centers_:

        plt.scatter(cluster_x, cluster_y, s=100, c='r', marker='x')

    # give the graph a title with the number of clusters

    plt.title(str(cluster_count)+" Clusters")

    plt.show()

None of these look like very sensible clusterings because all of the points form one large cluster. We might look at a measure of similarity to test if this single cluster is actually multiple clusters. A simple standard deviation or interquartile range might be a good starting point.
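Another commonly used criterion for choosing the number of clusters is the silhouette score, which is higher when points sit close to their own cluster and far from the others. The sketch below is an illustration on hypothetical data: four tight blobs at hand-picked, widely separated centres, which is a much easier case than the overlapping blobs in this exercise.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Hypothetical data: four tight blobs at hand-picked, widely separated centres
demo_centres = [[0, 0], [10, 10], [-10, 10], [10, -10]]
points, _ = make_blobs(n_samples=400, centers=demo_centres, cluster_std=0.5, random_state=0)

scores = {}
for k in range(2, 8):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(points)
    # Silhouette scores range from -1 to 1; higher means better-separated clusters
    scores[k] = silhouette_score(points, labels)
    print(f"k={k}: silhouette={scores[k]:.3f}")

best_k = max(scores, key=scores.get)
print("number of clusters with the highest silhouette score:", best_k)
```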

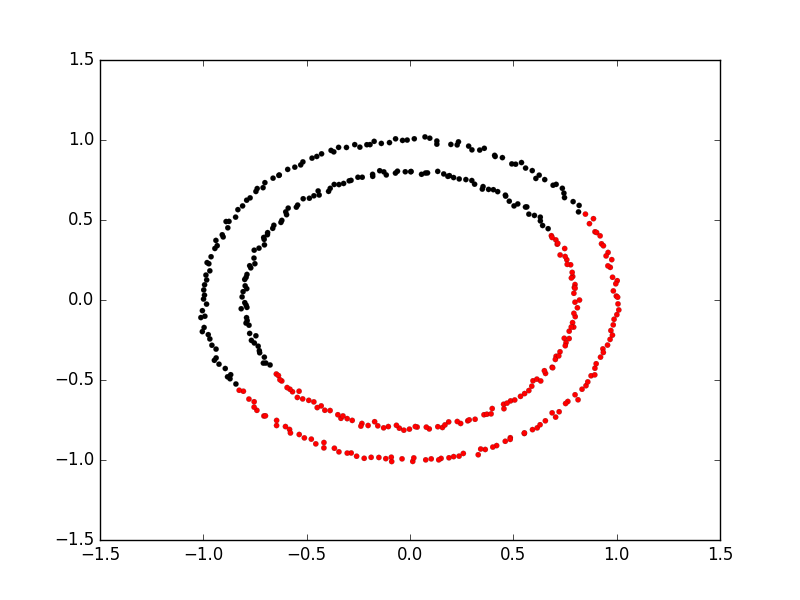
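One commonly used criterion for comparing cluster counts, offered here only as a possible option and not as part of the solution above, is the silhouette score from sklearn.metrics. The sketch below assumes the same overlapping blobs as the exercise (the make_blobs parameter values and the random_state settings are assumptions chosen for illustration) and scores each value of k. With heavily overlapping data the scores may all be low, which is itself a hint that no clear clustering exists.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Overlapping blobs; these parameter values are assumptions for illustration
data, _ = make_blobs(n_samples=4000, cluster_std=3.0, centers=4, random_state=1)

scores = {}
for cluster_count in range(2, 11):
    model = KMeans(n_clusters=cluster_count, n_init=10, random_state=0)
    cluster_ids = model.fit_predict(data)
    # The silhouette score lies between -1 and 1; higher values mean
    # tighter, better separated clusters
    scores[cluster_count] = silhouette_score(data, cluster_ids)

for cluster_count, score in scores.items():
    print(cluster_count, "clusters: silhouette =", round(score, 3))

best_k = max(scores, key=scores.get)
print("Highest silhouette score at k =", best_k)
```

A high score for one value of k does not prove that the data really contains that many groups, so it is worth reading the score alongside the scatter plots.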

Spectral clustering

Spectral clustering is a technique that attempts to overcome the linear boundary problem of k-means clustering. It works by treating clustering as a graph partitioning problem and looks for nodes in a graph with a small distance between them. See this introduction to spectral clustering if you are interested in more details about how spectral clustering works.

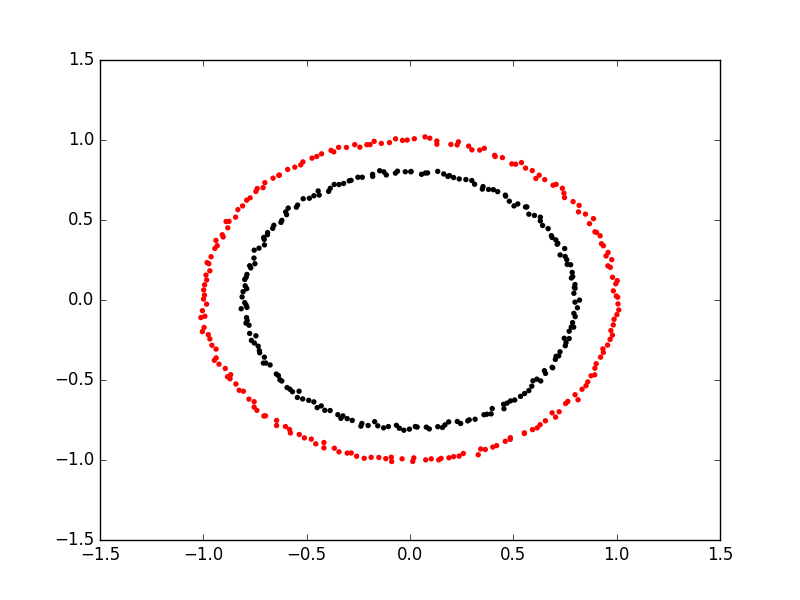

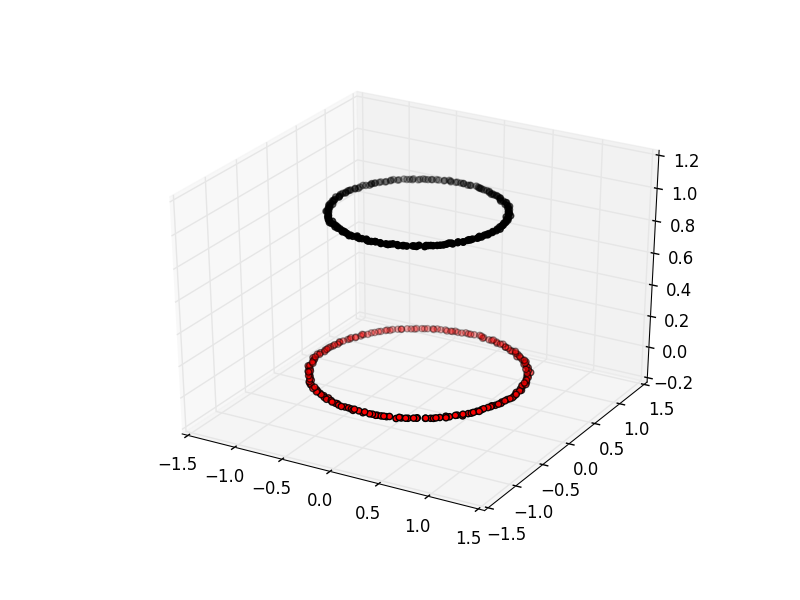

Here is an example of spectral clustering on two concentric circles:

Spectral clustering uses something called a ‘kernel trick’ to introduce additional dimensions to the data. A common example of this is trying to cluster one circle within another (concentric circles). A k-means classifier will fail to do this and will end up effectively drawing a line which crosses the circles. However, spectral clustering introduces an additional dimension that effectively moves one of the circles away from the other. The downside is that it is more computationally expensive than k-means clustering.
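To make the idea of "new coordinates" more concrete, here is a simplified sketch of the graph-based view described above: link each point to its nearest neighbours, take the eigenvectors of the graph Laplacian with the smallest eigenvalues as new coordinates, then run ordinary k-means in those coordinates. This is an illustration under assumptions (10 neighbours, an unnormalised Laplacian, a dense matrix), not a description of how Scikit-Learn implements spectral clustering internally.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_circles
from sklearn.metrics import adjusted_rand_score
from sklearn.neighbors import kneighbors_graph

points, true_ids = make_circles(n_samples=400, noise=0.01, random_state=0)

# 1. Build a graph linking each point to its 10 nearest neighbours
#    (10 is an assumed value chosen for this sketch)
adjacency = kneighbors_graph(points, n_neighbors=10, include_self=False).toarray()
adjacency = 0.5 * (adjacency + adjacency.T)  # make the graph symmetric

# 2. Graph Laplacian: degree matrix minus adjacency matrix
laplacian = np.diag(adjacency.sum(axis=1)) - adjacency

# 3. The eigenvectors with the smallest eigenvalues give each point new coordinates
eigenvalues, eigenvectors = np.linalg.eigh(laplacian)
embedding = eigenvectors[:, :2]

# 4. Ordinary k-means, but run in the new coordinates
spectral_ids = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(embedding)

# Adjusted Rand index: 1.0 means the clusters match the true circles exactly
print("Agreement with the true circles:", adjusted_rand_score(true_ids, spectral_ids))
```

In the new coordinates, points on the same circle end up close together even though they are far apart in the original plane, which is why the final k-means step can separate them.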

Spectral clustering with Scikit-Learn

Let’s try out Scikit-Learn’s spectral clustering. To make the concentric circles in the above example we need to use the make_circles function in the sklearn.datasets module. This works in a very similar way to the make_blobs function we used earlier on.

import sklearn.datasets as skl_data

circles, circles_clusters = skl_data.make_circles(n_samples=400, noise=.01, random_state=0)

plots_labels(circles, circles_clusters)

The code for calculating the SpectralClustering is very similar to the kmeans clustering, but instead of using the sklearn.cluster.KMeans class we use the sklearn.cluster.SpectralClustering class.

model = skl_cluster.SpectralClustering(n_clusters=2, affinity='nearest_neighbors', assign_labels='kmeans')

The SpectralClustering class combines the fit and predict functions into a single function called fit_predict.

labels = model.fit_predict(circles)

plots_labels(circles, labels)

Here is the whole program combined with the kmeans clustering for comparison. Note that this produces two figures. To view both of them use the “Inline” graphics terminal inside the Python console instead of the “Automatic” method which will open a window and only show you one of the graphs.

import sklearn.cluster as skl_cluster

import sklearn.datasets as skl_data

circles, circles_clusters = skl_data.make_circles(n_samples=400, noise=.01, random_state=0)

# cluster with kmeans

Kmean = skl_cluster.KMeans(n_clusters=2)

Kmean.fit(circles)

clusters = Kmean.predict(circles)

# plot the data, colouring it by cluster

plot_clusters(circles, clusters, Kmean)

# cluster with spectral clustering

model = skl_cluster.SpectralClustering(n_clusters=2, affinity='nearest_neighbors', assign_labels='kmeans')

labels = model.fit_predict(circles)

plots_labels(circles, labels)
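Because make_circles also returns the true circle each point belongs to, the two results can be compared numerically as well as by eye. The sketch below is one possible way to do this using the adjusted Rand index from sklearn.metrics; the random_state and n_init values are assumptions added so that the run is repeatable.

```python
import sklearn.cluster as skl_cluster
import sklearn.datasets as skl_data
from sklearn.metrics import adjusted_rand_score

circles, circles_clusters = skl_data.make_circles(n_samples=400, noise=.01, random_state=0)

# cluster with kmeans
kmeans_labels = skl_cluster.KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(circles)

# cluster with spectral clustering
spectral_labels = skl_cluster.SpectralClustering(
    n_clusters=2, affinity='nearest_neighbors', assign_labels='kmeans', random_state=0
).fit_predict(circles)

# A score near 1 means the clusters match the true circles,
# a score near 0 means the match is no better than chance
kmeans_ari = adjusted_rand_score(circles_clusters, kmeans_labels)
spectral_ari = adjusted_rand_score(circles_clusters, spectral_labels)
print("k-means adjusted Rand index:", round(kmeans_ari, 3))
print("spectral adjusted Rand index:", round(spectral_ari, 3))
```

Scikit-Learn may warn that the nearest-neighbour graph is not fully connected for this dataset; that is expected when the two circles share no neighbours.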

Comparing k-means and spectral clustering performance

Modify the program we wrote in the previous exercise to use spectral clustering instead of k-means and save it as a new file. Time how long both programs take to run. Add the line import time at the top of both files as the first line, and get the start time with start_time = time.time(). End the program by getting the time again and subtracting the start time from it to get the total run time. Add end_time = time.time() and print("Elapsed time:",end_time-start_time,"seconds") to the end of both files. Compare how long both programs take to run generating 4,000 samples and testing them for between 2 and 10 clusters. How much did your run times differ? How much do they differ if you increase the number of samples to 8,000? How long do you think it would take to compute 800,000 samples (estimate this, it might take a while to run for real)?

Solution

KMeans version: runtime around 4 seconds (your computer might be faster/slower)

import matplotlib.pyplot as plt
import sklearn.cluster as skl_cluster
from sklearn.datasets import make_blobs
import time

start_time = time.time()
data, cluster_id = make_blobs(n_samples=4000, cluster_std=3, centers=4, random_state=1)

for cluster_count in range(2,11):
    Kmean = skl_cluster.KMeans(n_clusters=cluster_count)
    Kmean.fit(data)
    clusters = Kmean.predict(data)
    plt.scatter(data[:, 0], data[:, 1], s=15, linewidth=0, c=clusters)
    plt.title(str(cluster_count)+" Clusters")
    plt.show()

end_time = time.time()
print("Elapsed time = ", end_time-start_time, "seconds")

Spectral version: runtime around 9 seconds (your computer might be faster/slower)

import matplotlib.pyplot as plt
import sklearn.cluster as skl_cluster
from sklearn.datasets import make_blobs
import time

start_time = time.time()
data, cluster_id = make_blobs(n_samples=4000, cluster_std=3, centers=4, random_state=1)

for cluster_count in range(2,11):
    model = skl_cluster.SpectralClustering(n_clusters=cluster_count, affinity='nearest_neighbors', assign_labels='kmeans')
    labels = model.fit_predict(data)
    plt.scatter(data[:, 0], data[:, 1], s=15, linewidth=0, c=labels)
    plt.title(str(cluster_count)+" Clusters")
    plt.show()

end_time = time.time()
print("Elapsed time = ", end_time-start_time, "seconds")

When the number of points increases to 8000 the runtimes are 24 seconds for the spectral version and 5.6 seconds for kmeans. The runtime numbers will differ depending on the speed of your computer, but the relative difference should be similar. For 4000 points kmeans took 4 seconds, while spectral took 9 seconds, a 2.25-fold difference. For 8000 points kmeans took 5.6 seconds, while spectral took 24 seconds, a 4.29-fold difference. Kmeans is 1.4 times slower for double the data, while spectral is about 2.7 times slower. The relative difference is diverging. If we used 100 times more data we might expect a 100-fold divergence in execution times. Kmeans might take a few minutes while spectral will take hours.
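The ratios quoted in this solution can be checked with a few lines of arithmetic. The sketch below uses only the example runtimes given above (4 and 5.6 seconds for kmeans, 9 and 24 seconds for spectral); the dictionary names are made up for illustration, and your own timings will differ.

```python
# Example runtimes in seconds, taken from the solution text above
kmeans_seconds = {4000: 4.0, 8000: 5.6}
spectral_seconds = {4000: 9.0, 8000: 24.0}

# How much slower spectral clustering is than k-means at each dataset size
for n_samples in (4000, 8000):
    ratio = spectral_seconds[n_samples] / kmeans_seconds[n_samples]
    print(n_samples, "samples: spectral is", round(ratio, 2), "times slower than kmeans")

# How much each method slows down when the amount of data doubles
kmeans_growth = kmeans_seconds[8000] / kmeans_seconds[4000]
spectral_growth = spectral_seconds[8000] / spectral_seconds[4000]
print("kmeans slowdown for double the data:", round(kmeans_growth, 2))
print("spectral slowdown for double the data:", round(spectral_growth, 2))
```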


Unsupervised methods - Dimensionality reduction


Dimensionality reduction

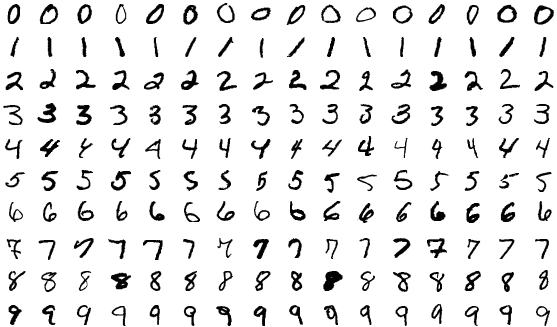

As seen in the last episode, general clustering algorithms work well with low-dimensional data. In this episode we see how higher-dimensional data, such as images of handwritten text or numbers, can be processed with dimensionality reduction techniques to make the datasets more accessible for other modelling techniques. The dataset we will be using is the Scikit-Learn subset of the Modified National Institute of Standards and Technology (MNIST) dataset.

The MNIST dataset contains 70,000 images of handwritten numbers, each labelled from 0-9 with the number that the image contains. Each image is greyscale and 28x28 pixels in size, for a total of 784 pixels per image. Each pixel can take a value between 0-255 (8 bits). When dealing with a series of images in machine learning we consider each pixel to be a feature that varies according to each of the sample images. Our previous penguin dataset had no more than 7 features to train with, whereas even a small 28x28 MNIST image has as many as 784 features (pixels) to work with.

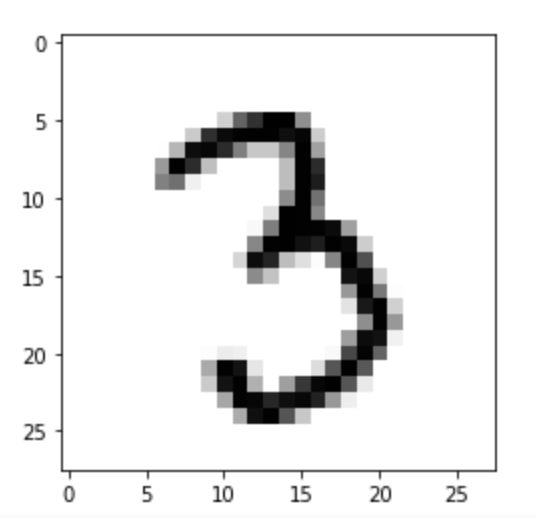

To make this episode a bit less computationally intensive, the Scikit-Learn example that we will work with is a smaller sample of 1797 images. Each image is 8x8 in size for a total of 64 pixels per image, resulting in 64 features for us to work with. Each pixel takes an integer value between 0 and 16. Let’s retrieve and inspect the Scikit-Learn dataset with the following code:

import numpy as np

import matplotlib.pyplot as plt

import sklearn.cluster as skl_cluster

from sklearn import manifold, decomposition, datasets

# Let's define these here to avoid repetitive code

def plots_labels(data, labels):
    tx = data[:, 0]
    ty = data[:, 1]
    fig = plt.figure(1, figsize=(4, 4))
    plt.scatter(tx, ty, edgecolor='k', c=labels)
    plt.show()

def plot_clusters(data, clusters, Kmean):
    tx = data[:, 0]
    ty = data[:, 1]
    fig = plt.figure(1, figsize=(4, 4))
    plt.scatter(tx, ty, s=5, linewidth=0, c=clusters)
    for cluster_x, cluster_y in Kmean.cluster_centers_:
        plt.scatter(cluster_x, cluster_y, s=100, c='r', marker='x')
    plt.show()

def plot_clusters_labels(data, labels):
    tx = data[:, 0]
    ty = data[:, 1]
    # with labels
    fig = plt.figure(1, figsize=(5, 4))
    plt.scatter(tx, ty, c=labels, cmap="nipy_spectral",
                edgecolor='k', label=labels)
    plt.colorbar(boundaries=np.arange(11)-0.5).set_ticks(np.arange(10))
    plt.show()

Next, let’s load in the digits dataset:

# load in dataset as a Pandas Dataframe, return X and Y

features, labels = datasets.load_digits(return_X_y=True, as_frame=True)

print(features.shape, labels.shape)

print(labels)

features.head()
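Before going further it can help to confirm that the loaded data matches the description above. The following sketch is one possible set of checks (number of images, number of features, pixel value range, and how many images there are of each digit); it reloads the dataset so that it can be run on its own.

```python
from sklearn import datasets

features, labels = datasets.load_digits(return_X_y=True, as_frame=True)

# Number of images (rows) and number of pixel features (columns)
print("samples, features:", features.shape)

# Smallest and largest pixel intensity found anywhere in the dataset
pixel_values = features.to_numpy()
print("pixel value range:", pixel_values.min(), "to", pixel_values.max())

# How many images there are of each digit
print(labels.value_counts().sort_index())
```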

Our goal: using dimensionality-reduction to help with machine learning

As humans we are pretty good at object and pattern recognition. We can look at the images above, inspect the intensity and position of pixels relative to other pixels, and pretty quickly make an accurate guess at what the image shows. As humans we spend much of our younger lives learning these spatial relations, and so it stands to reason that computers can also extract these relations. Let’s see if it is possible to use unsupervised clustering techniques to pull out relations in our MNIST dataset of number images.

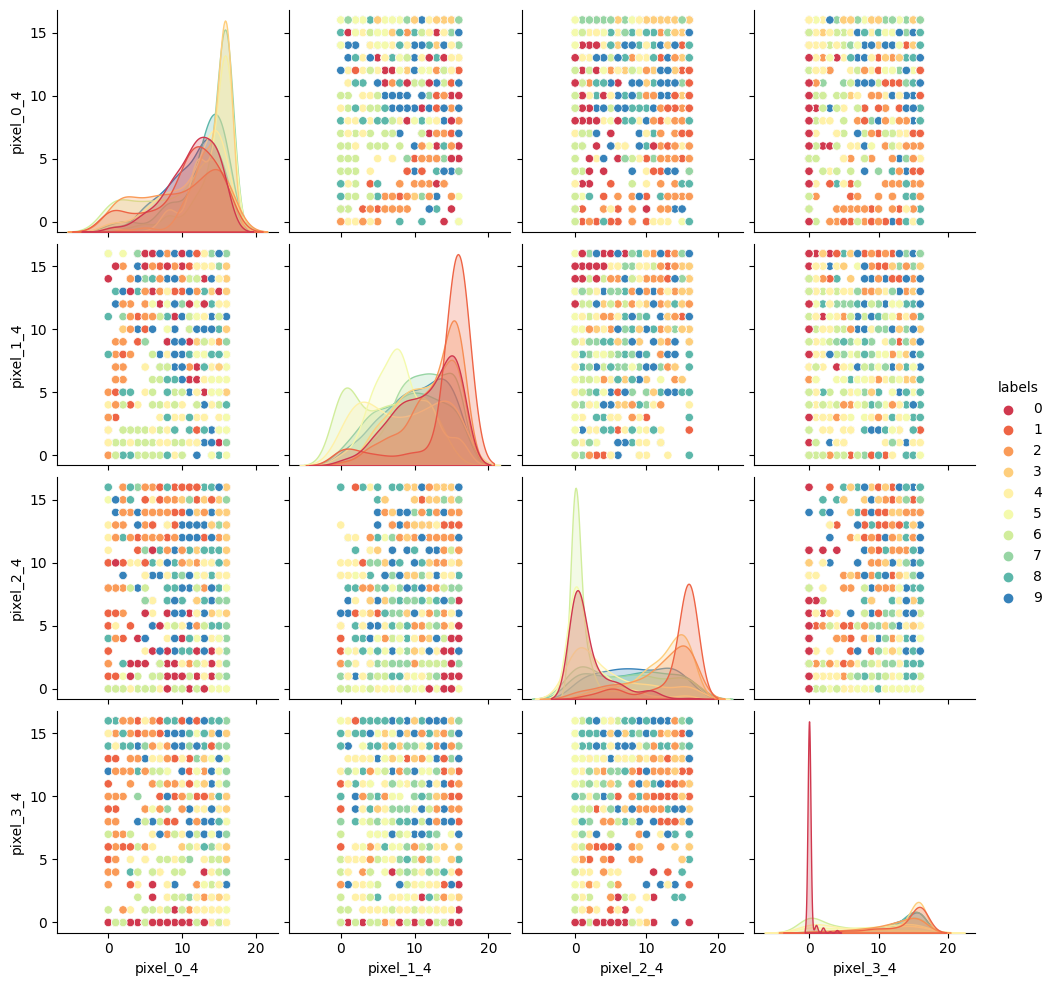

Exercise: Try to visually inspect the dataset and features for correlations

As we did for previous datasets, let’s visually inspect relationships between our features/pixels. Try to investigate the following pixels for relations (written “row_column”): 0_4, 1_4, 2_4, and 3_4.

Solution

print(features.iloc[0])
image_1D = features.iloc[0]
image_2D = np.array(image_1D).reshape(-1,8)

plt.imshow(image_2D,cmap="gray_r")
# these points are the pixels we will investigate
# rows 0,1,2,3 of column 4 of the image (x is the column, y is the row)
plt.plot([4,4,4,4],[0,1,2,3],"rx")
plt.show()

import seaborn as sns

# make a temporary copy of data for plotting here only
# (.copy() stops the labels column being added to features itself)
seaborn_data = features.copy()

# add labels for pairplot color coding
seaborn_data["labels"] = labels

# make a short list of N features for plotting N*N figures
# 4**2 = 16 plots, whereas 64**2 is over 4000!
feature_subset = []
for i in range(4):
    feature_subset.append("pixel_"+str(i)+"_4")

sns.pairplot(seaborn_data, vars=feature_subset, hue="labels", palette=sns.mpl_palette("Spectral", n_colors=10))

As we can see the dataset relations are far more complex than our previous examples. The histograms show that some numbers appear in those pixel positions more than others, but the feature_vs_feature plots are quite messy to try and decipher. There are gaps and patches of colour suggesting that there is some kind of structure there, but it’s far harder to inspect than the penguin data. We can’t easily see definitive clusters in our 2D representations, and we know our clustering algorithms will take a long time to try and crunch 64 dimensions at once, so let’s see if we can represent our 64D data in fewer dimensions.
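When plots are hard to read, a numerical summary can sometimes help. As a rough complement to the visual inspection, the sketch below computes the pairwise correlation between the same four pixels with the pandas corr method. It is only a sketch: correlation captures linear relationships, so it will not describe all of the structure seen in the plots.

```python
from sklearn import datasets

features, labels = datasets.load_digits(return_X_y=True, as_frame=True)

# The same four pixels as in the exercise: rows 0 to 3 of column 4
feature_subset = ["pixel_" + str(i) + "_4" for i in range(4)]

# Pairwise Pearson correlation between the selected pixel columns
correlations = features[feature_subset].corr()
print(correlations.round(2))
```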

Dimensionality reduction with Scikit-Learn

We will look at two commonly used techniques for dimensionality reduction: Principal Component Analysis (PCA) and t-distributed Stochastic Neighbor Embedding (t-SNE). Both of these techniques are supported by Scikit-Learn.

Principal Component Analysis (PCA)

PCA allows us to replace our 64 features with a smaller number of new dimensions that retain as much of the variance/relational information in our data as possible. Scikit-Learn lets us apply PCA in a relatively simple way.

For more in depth explanations of PCA please see the following links:

- https://builtin.com/data-science/step-step-explanation-principal-component-analysis

- https://scikit-learn.org/stable/modules/decomposition.html#pca

Let’s apply PCA to the MNIST dataset and retain the two components that capture the most variance:

# PCA with 2 components

pca = decomposition.PCA(n_components=2)

x_pca = pca.fit_transform(features)

print(x_pca.shape)

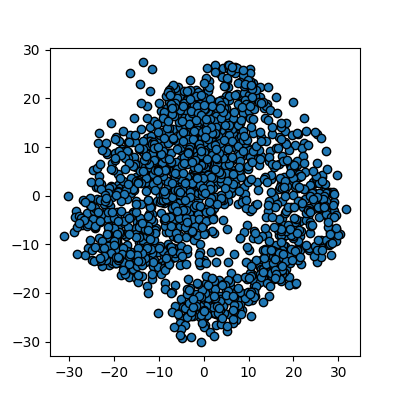

This returns an array of 1797x2 where the 2 remaining columns (our new “features” or “dimensions”) contain each image’s value along the first principal component (column 0) and the second principal component (column 1). We can plot these two new features against each other:

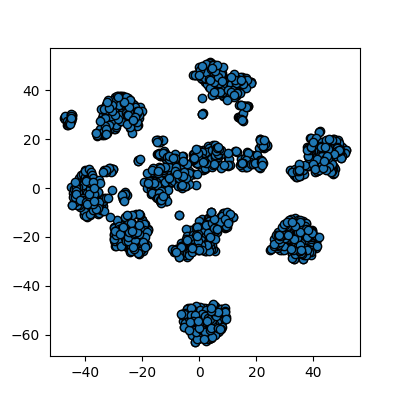

# We are passing None because it is an unlabelled plot

plots_labels(x_pca, None)

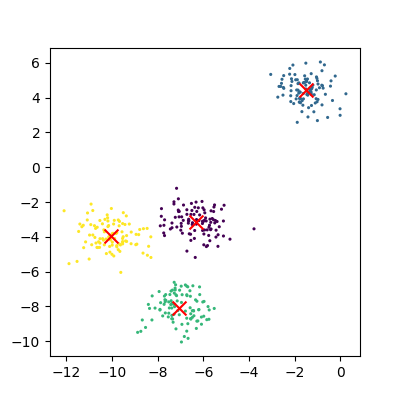

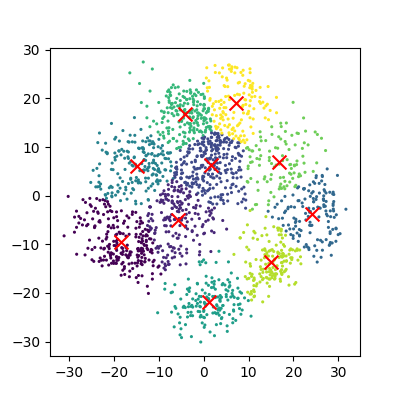
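It is worth checking how much of the original variance a two-component representation actually keeps. The sketch below reads the explained_variance_ratio_ attribute of the fitted PCA object, and also shows that passing a fraction as n_components asks Scikit-Learn to keep however many components are needed to reach that share of the variance. The 0.9 threshold is an arbitrary value chosen for illustration.

```python
from sklearn import datasets, decomposition

features, labels = datasets.load_digits(return_X_y=True, as_frame=True)

# PCA with 2 components, as above
pca = decomposition.PCA(n_components=2)
x_pca = pca.fit_transform(features)

# Fraction of the total variance captured by each of the two components
print("variance ratio per component:", pca.explained_variance_ratio_)
retained = pca.explained_variance_ratio_.sum()
print("total variance retained by 2 components:", round(retained, 3))

# A value between 0 and 1 keeps enough components to reach that fraction
pca_90 = decomposition.PCA(n_components=0.9).fit(features)
print("components needed for 90% of the variance:", pca_90.n_components_)
```

If two components retain only a small share of the variance, the 2D plot is still useful for visualisation, but clusters found in it should be interpreted with some caution.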

We now have a 2D representation of our 64D dataset that we can work with instead. Let’s try some quick K-means clustering on our 2D representation of the data. Because we already have some knowledge about our data we can set k=10 for the 10 digits present in the dataset.

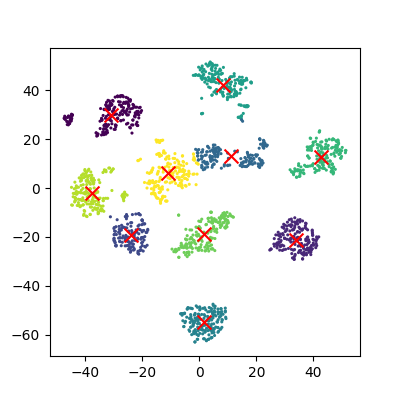

Kmean = skl_cluster.KMeans(n_clusters=10)

Kmean.fit(x_pca)

clusters = Kmean.predict(x_pca)

plot_clusters(x_pca, clusters, Kmean)

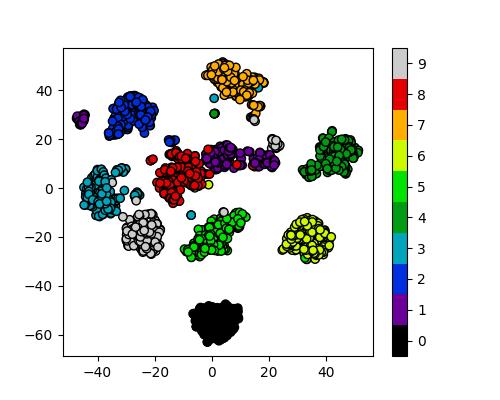

And now we can compare how these clusters look against our actual image labels by colour coding our first scatter plot:

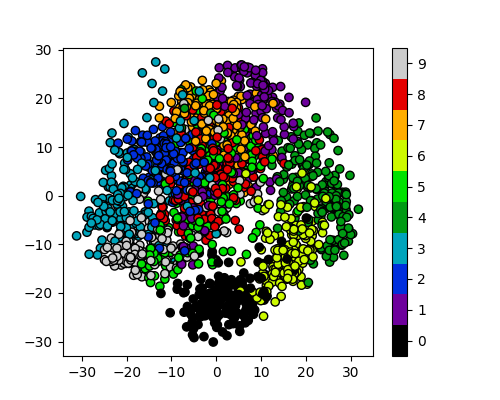

plot_clusters_labels(x_pca, labels)
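The visual comparison can be backed up with a simple table. The sketch below cross-tabulates the true digit labels against the k-means cluster numbers using pandas, so that each row shows how the images of one digit are spread across the clusters. The random_state and n_init values are assumptions added for repeatability. Cluster numbers are arbitrary, so cluster 3 does not correspond to the digit 3.

```python
import pandas as pd
import sklearn.cluster as skl_cluster
from sklearn import datasets, decomposition

features, labels = datasets.load_digits(return_X_y=True, as_frame=True)

# Reduce to 2 dimensions with PCA, then cluster with k-means as above
x_pca = decomposition.PCA(n_components=2).fit_transform(features)
Kmean = skl_cluster.KMeans(n_clusters=10, n_init=10, random_state=0)
clusters = Kmean.fit_predict(x_pca)

# Rows are the true digits, columns are the k-means cluster numbers
table = pd.crosstab(labels, clusters, rownames=["true digit"], colnames=["cluster"])
print(table)
```

A digit whose row is dominated by a single column has been captured well by one cluster, while a row spread across several columns indicates a digit that overlaps with others in the 2D representation.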