Performance Overview

Last updated on 2026-02-26

Estimated time: 10 minutes

Overview

Questions

- Is job wall-time the only way to study job performance?

- What are commonly used metrics and perspectives on job performance?

Objectives

After completing this episode, participants should be able to …

- Create a comprehensive performance overview through dedicated tools.

- Explain the difference between sampling and tracing.

- Measure utilization and the impact of underlying hardware components.

Narrative:

- Scaling study, scheduler tools, project proposal is written and handed in

- Maybe I can squeeze out more from my current system by trying to understand better how it behaves

- Another colleague told us about performance measurement tools

- We are learning more about our application

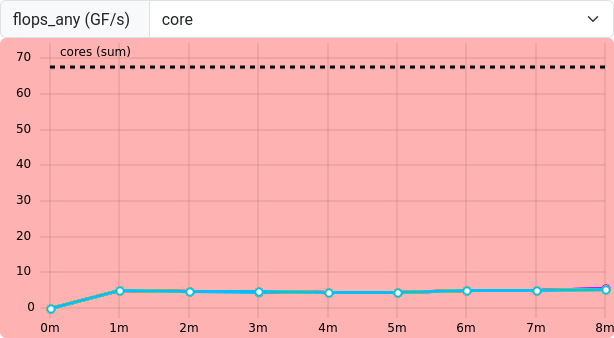

- Aha, there IS room to optimize! Compile with vectorization

What we’re doing here:

- Get a complete picture

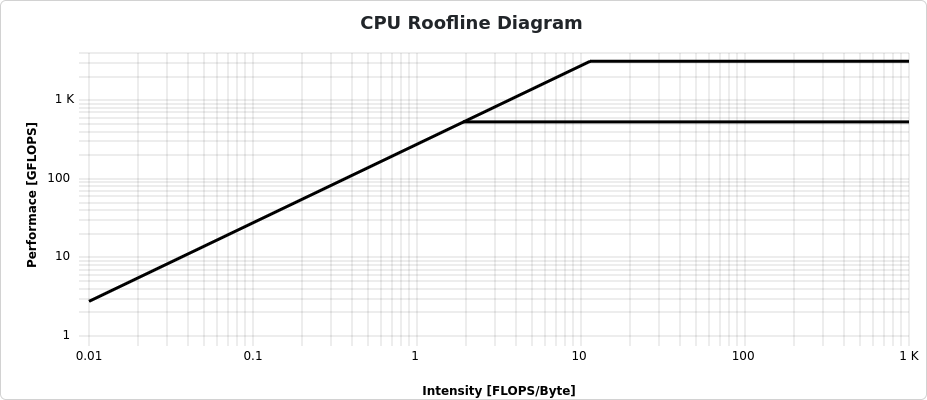

- Introduce additional metrics / definitions, and popular representations of data, e.g. Roofline

- Relate to hardware on the same level of detail

Wall-time measurements with the time command do not tell us why exactly an application is slower than expected. To learn more about the why, we have to measure our application’s behavior in more detail and capture the utilization of the underlying hardware. Broadly categorized, a job’s performance mostly depends on:

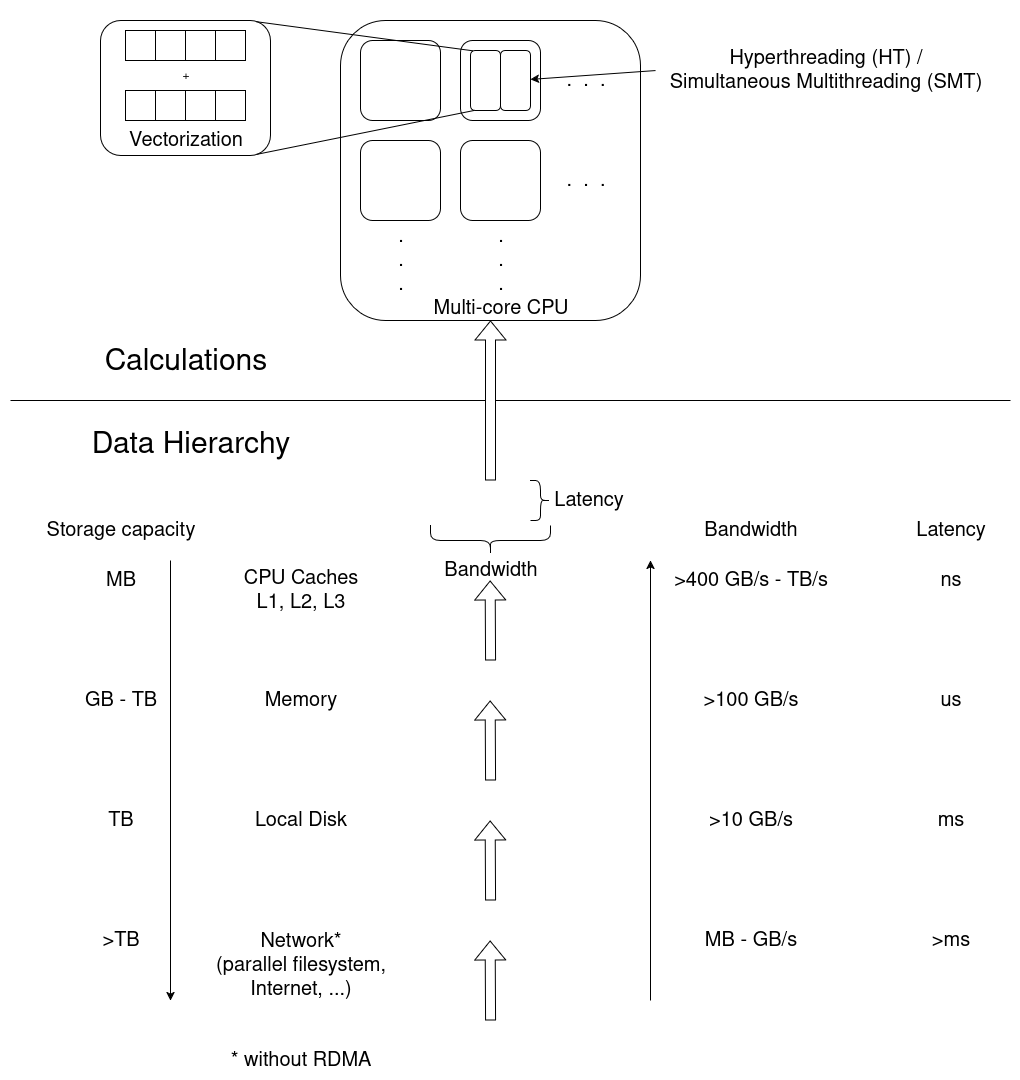

- CPU utilization, e.g. how quickly instructions can be sent to the processor, how quickly and how much data can be read from memory, and the raw calculation capabilities of the CPU.

- Memory utilization may vary in terms of how much data is stored in memory, how often data in memory is written or read, and how quickly data can be read and written.

- Disk input and output affects jobs that work with amounts of data that exceed available memory capacities.

- Network input and output affects applications that rely on remote data, e.g. MPI applications that regularly share results between processes on multiple worker nodes.
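A first hint at which of these categories matters can come from comparing wall time with CPU time. The following is a minimal sketch, not part of any of the tools discussed here: the helper names `measure` and `cpu_utilization` and the two toy workloads are made up for illustration. A job that mostly waits (for disk, network, or other processes) shows a CPU time far below its wall time.

```python
import time


def cpu_utilization(wall_seconds, cpu_seconds, cores=1):
    """Fraction of the available core time that was spent computing."""
    if wall_seconds <= 0 or cores <= 0:
        raise ValueError("wall_seconds and cores must be positive")
    return cpu_seconds / (wall_seconds * cores)


def measure(func):
    """Run func once and return (wall_seconds, cpu_seconds) of this process."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    func()
    wall = time.perf_counter() - wall_start
    cpu = time.process_time() - cpu_start
    return wall, cpu


def busy():
    """Toy workload that keeps the CPU occupied."""
    sum(i * i for i in range(200_000))


def idle():
    """Toy workload that only waits, similar to a job blocked on I/O."""
    time.sleep(0.2)


if __name__ == "__main__":
    for name, func in [("busy", busy), ("idle", idle)]:
        wall, cpu = measure(func)
        print(f"{name}: wall={wall:.3f}s cpu={cpu:.3f}s "
              f"utilization={cpu_utilization(wall, cpu):.0%}")
```

Both workloads may have similar wall times, but only the detailed view shows that one of them barely uses the CPU. Dedicated tools go much further and break the time down by hardware component.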

Measurement Workflows

To learn how applications utilize the computer’s hardware, we employ third-party tools that read usage metrics from performance counters, which are implemented either in the operating system kernel (software) or in hardware.

Dedicated performance measurement tools often employ similar methods and rely on the same sources of information, but they may focus on different issues and use different data processing and visualization methods.

In general there are two approaches to performance measurements:

- Sampling: Read out performance counters and the application state at regular intervals during execution

- Tracing: Record every event and operation that occurs

Tracing is exact and allows for a very detailed analysis. On the other hand, it produces very large amounts of measurement data, and collecting that data can itself affect the application’s performance. It may be impractical in some situations.

Sampling, on the other hand, is less exact and results in a statistical description of the application’s behavior. Sampling has a smaller measurement overhead, but may suffer from, for example, slight misattribution of measurements to the wrong sections of the code and fluctuating results between repeated measurements.

Measurement results are either stored and analysed in a timeline, or aggregated into a final measurement, often called a profile.
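The difference between the two approaches can be illustrated with a toy model. The sketch below assumes a made-up timeline of 100 time steps in which a program is in one of three hypothetical sections (`compute`, `io`, `mpi`); it does not use any real measurement tool. Tracing records every change of state and yields an exact profile, while sampling only looks at the state every few steps.

```python
from collections import Counter

# Hypothetical ground truth: the section that is active at each time step.
TIMELINE = ["compute"] * 70 + ["io"] * 5 + ["compute"] * 20 + ["mpi"] * 5


def trace(timeline):
    """Tracing: record every change of state as a (step, section) event."""
    events = []
    previous = None
    for step, section in enumerate(timeline):
        if section != previous:
            events.append((step, section))
            previous = section
    return events


def profile_from_trace(events, total_steps):
    """Aggregate the traced timeline into an exact profile (steps per section)."""
    profile = Counter()
    ends = [step for step, _ in events[1:]] + [total_steps]
    for (start, section), end in zip(events, ends):
        profile[section] += end - start
    return profile


def sample(timeline, interval):
    """Sampling: read the state only every `interval` steps."""
    return Counter(timeline[::interval])


def estimate_from_samples(samples, total_steps):
    """Scale the sample counts up to an estimated profile."""
    n = sum(samples.values())
    return {section: count * total_steps / n for section, count in samples.items()}


if __name__ == "__main__":
    events = trace(TIMELINE)
    print("trace events:     ", events)
    print("exact profile:    ", dict(profile_from_trace(events, len(TIMELINE))))
    samples = sample(TIMELINE, interval=10)
    print("estimated profile:", estimate_from_samples(samples, len(TIMELINE)))
```

With a sampling interval of 10 steps, the short `mpi` phase in this toy timeline is never observed and the `io` phase is overestimated, which is the kind of misattribution described above. The list of events is a timeline, and the aggregated counts are a profile.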

We move on with three alternatives here.

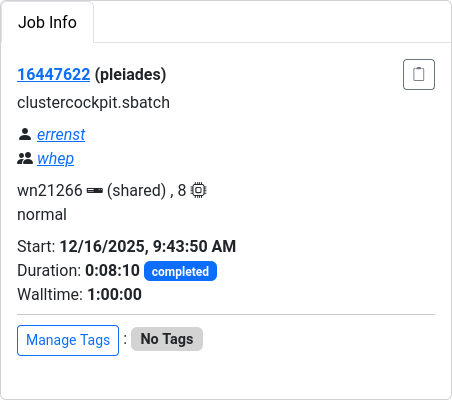

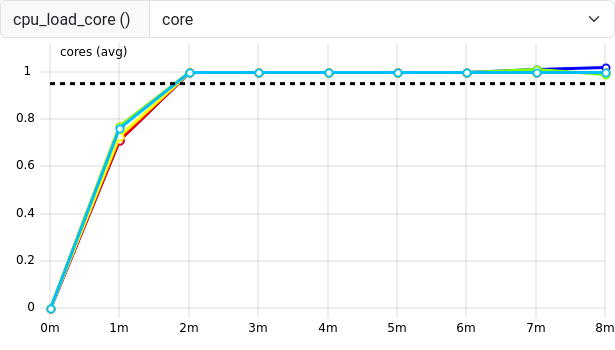

- ClusterCockpit is a job monitoring system that can be configured to capture many performance metrics. It is easy to use, but has to be deployed by the cluster administration team.

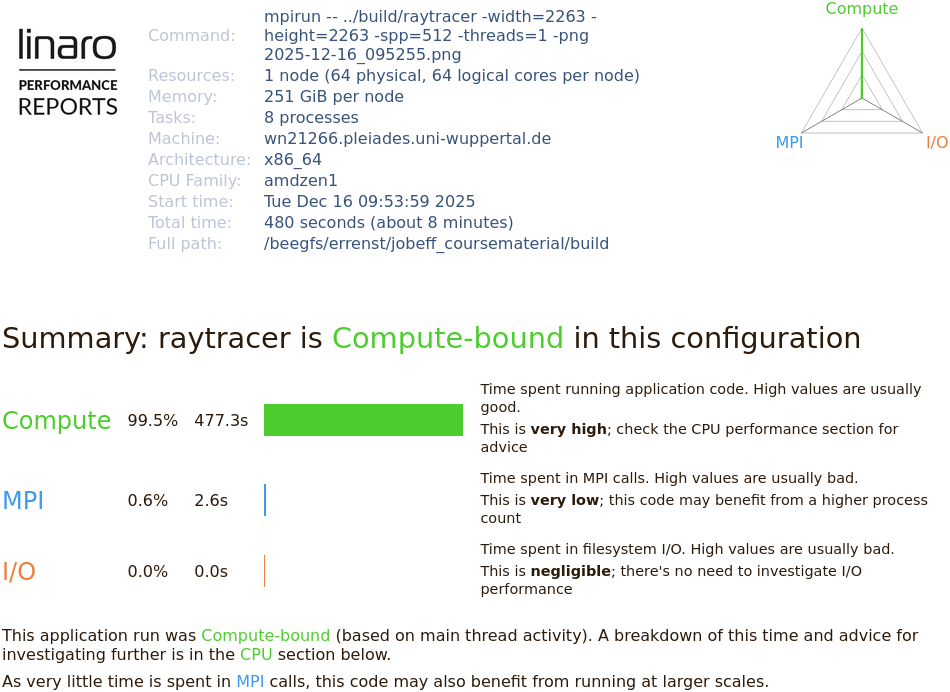

- Linaro Forge Performance Reports provides a good first performance overview, but is a commercial application that requires access to valid licenses.

- TBD is a set of open source tools to create a performance overview independent of centralized services and licenses.

Pick one tool and stick to it throughout the rest of the course. Consider mentioning alternatives and that learners may not have access to certain tools on every cluster, e.g. missing licenses for Linaro Forge.

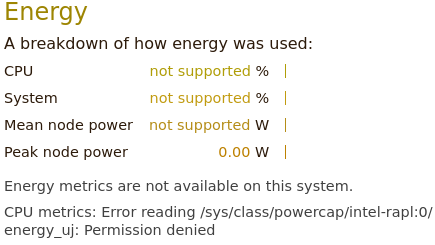

Be aware of site-specific setups, e.g. limited access to performance counters, non-standard Slurm options during sbatch submission, and how licenses are handled.

Pick your tool!

For the following episodes, you can choose between three alternative perspectives on our jobs. Choose one tool and stick to it for the rest of the course. The alternatives are:

- ClusterCockpit: A job monitoring service available on many clusters in NRW. Sampled measurements of the application are stored and visualized in a timeline for each job. It needs to be centrally provided by your HPC administration team and may not be available to you!

- Linaro Forge Performance Reports: A commercial sampling-based profiler providing a single page performance overview of your job. Access to licenses required.

- TBD: A free, open source tool/set of tools, to get a general performance overview of your job.

These tools may require access to performance counters, sometimes granted by requesting --exclusive, but it really depends on the system. Look at your cluster documentation or talk to your HPC support staff.
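On Linux systems, one setting that commonly controls access to performance counters is the kernel parameter kernel.perf_event_paranoid. The following sketch reads its value from /proc and prints a rough interpretation. The level descriptions are a simplified summary based on the upstream Linux kernel documentation and should be treated as an assumption: distributions may define additional levels, and capabilities or site policies can change what is actually allowed. The function names are made up for illustration.

```python
from pathlib import Path

# Standard location of the setting on Linux; absent on other systems.
PARANOID_PATH = Path("/proc/sys/kernel/perf_event_paranoid")


def read_paranoid(path=PARANOID_PATH):
    """Return the current level as an int, or None if it cannot be read."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def describe_paranoid(level):
    """Rough, simplified interpretation for users without extra privileges."""
    if level is None:
        return "unknown: setting not readable on this system"
    if level < 0:
        return "permissive: almost all events are accessible"
    if level == 0:
        return "raw tracepoint access is restricted"
    if level == 1:
        return "CPU-wide event access is restricted as well"
    # Level 2 and above; some distributions define stricter custom levels.
    return "kernel profiling is restricted as well; user-space only at best"


if __name__ == "__main__":
    level = read_paranoid()
    print(f"kernel.perf_event_paranoid = {level}: {describe_paranoid(level)}")
```

Running this inside a job on a compute node, rather than on a login node, shows the setting that actually applies to your measurements.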

cap_perfmon,cap_sys_ptrace,cap_syslog=ep

kernel.perf_event_paranoid

Let us set up our performance measurement tool by running an example job with 8 cores. To give the job enough work to be worth measuring, let’s go with \(2263 \times 2263\) pixels and -spp=512.
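To get a feeling for the size of this job, the amount of work can be estimated with a short calculation. This sketch assumes that -spp stands for samples per pixel and that the work is distributed evenly across the 8 cores. Both are assumptions for illustration, not statements about how the example application actually behaves.

```python
WIDTH = HEIGHT = 2263     # image size in pixels, from the job setup above
SAMPLES_PER_PIXEL = 512   # assumption: -spp means samples per pixel
CORES = 8                 # cores requested for the example job


def total_samples(width, height, spp):
    """Total number of samples if every pixel receives `spp` samples."""
    return width * height * spp


def samples_per_core(total, cores):
    """Share per core under the assumption of a perfectly even distribution."""
    return total // cores


if __name__ == "__main__":
    total = total_samples(WIDTH, HEIGHT, SAMPLES_PER_PIXEL)
    print(f"pixels:           {WIDTH * HEIGHT:,}")
    print(f"total samples:    {total:,}")
    print(f"samples per core: {samples_per_core(total, CORES):,}")
```

Under these assumptions, the job processes roughly 2.6 billion samples, which should keep 8 cores busy long enough for a sampling tool to collect meaningful statistics.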

General Report

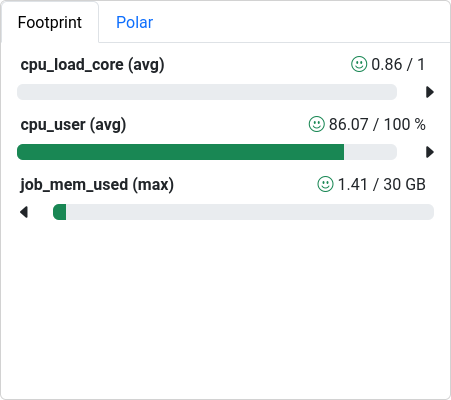

As a first step beyond a wall-time analysis, we employ the measurement tool to produce a general overview of our application’s behavior. Here, we typically try to answer questions like:

- Are available CPU, memory, disk, and network capabilities utilized well?

- Does the job’s performance depend on a particular hardware component?

- Is there an obvious contention point that could be eliminated?

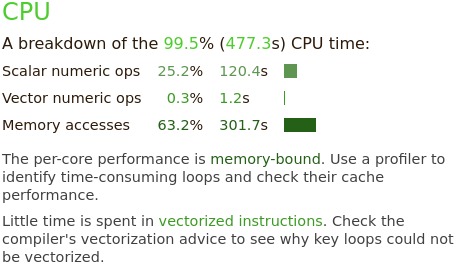

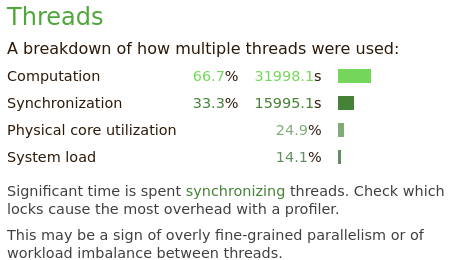

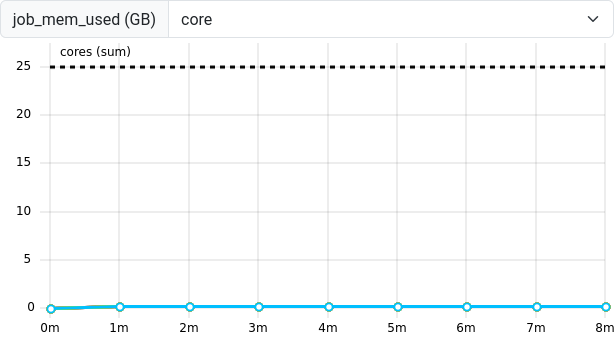

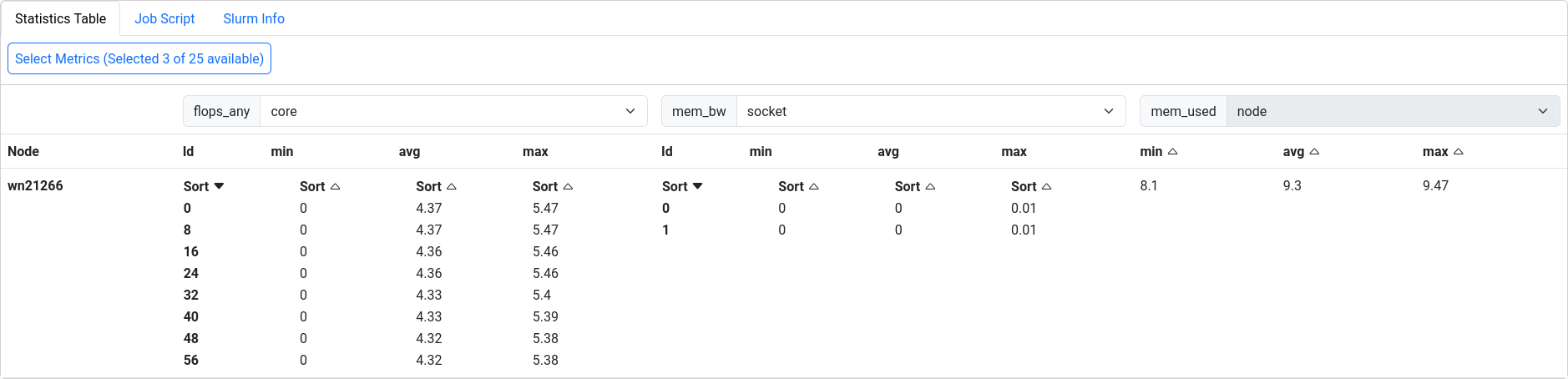

CPU

CPU performance can be categorized into

- Front-end utilization: preparation and scheduling of instructions of program code provided through the cache hierarchy

- Computation: Arithmetic and logical operations with various data types, including the utilization of vectorized instructions, etc.

- Back-end utilization: loading and storing of data in the cache hierarchy

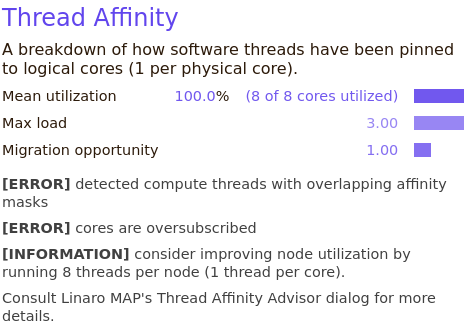

The front-end and back-end hardware of a physical CPU core is often duplicated to implement simultaneous multithreading (SMT, also called hyperthreading). Here, the arithmetic logic unit receives data and instructions from two independent threads so that it is supplied with enough work, since keeping it fed is a common limiting factor in everyday calculations. On HPC systems, the benefit of SMT is very much application-dependent. SMT is often disabled there, since HPC code is typically optimized to maximize computational intensity and already keeps the arithmetic units busy.

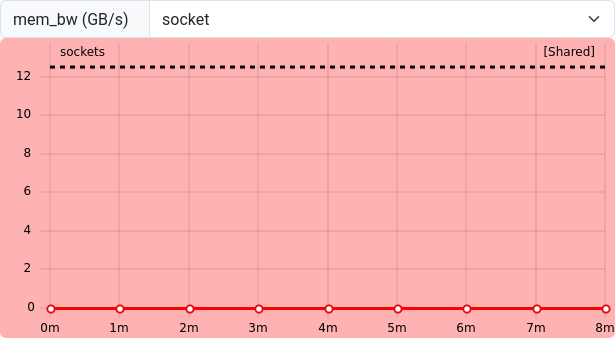

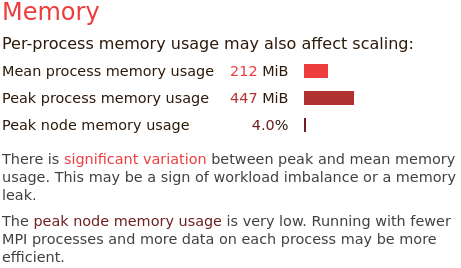

Memory

Memory utilization is characterized in terms of used capacity, bandwidth and access latencies.
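Memory bandwidth and computational capability are often related to each other in the Roofline representation mentioned earlier: the attainable performance is the minimum of the peak compute rate and the memory bandwidth multiplied by the arithmetic intensity (floating-point operations per byte moved). The sketch below uses made-up machine numbers (1000 GFLOP/s peak, 100 GB/s bandwidth) purely for illustration; real values have to be taken from vendor data or measured on your system.

```python
def attainable_gflops(peak_gflops, bandwidth_gbs, intensity_flop_per_byte):
    """Roofline bound: limited either by compute or by memory bandwidth."""
    return min(peak_gflops, bandwidth_gbs * intensity_flop_per_byte)


def ridge_point(peak_gflops, bandwidth_gbs):
    """Arithmetic intensity above which a kernel becomes compute-bound."""
    return peak_gflops / bandwidth_gbs


if __name__ == "__main__":
    # Hypothetical machine, not a real system.
    PEAK_GFLOPS = 1000.0
    BANDWIDTH_GBS = 100.0

    print(f"ridge point: {ridge_point(PEAK_GFLOPS, BANDWIDTH_GBS)} FLOP/byte")
    for intensity in (0.25, 2.0, 20.0):
        bound = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GBS, intensity)
        kind = "memory-bound" if bound < PEAK_GFLOPS else "compute-bound"
        print(f"intensity {intensity:>5} FLOP/byte -> "
              f"at most {bound} GFLOP/s ({kind})")
```

A kernel with low arithmetic intensity sits on the sloped, bandwidth-limited part of the roofline, while a kernel with high intensity is capped by the peak compute rate.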

Miscellaneous

Typically, many more measurements and perspectives on the data are available for each tool.

Exercise: Match application behavior to hardware

Which parts of the computer hardware may become a point of contention for these application patterns:

- Calculating matrix multiplications

- Reading data from processes on other computers

- Calling many different functions from many equally likely if/else branches

- Writing very large files (TB)

- Comparing strings for matches

- Constructing a large simulation model

- Reading thousands of small files for each iteration

Maybe not the best questions, also missing something for accelerators.

- CPU (FLOPS), maybe the cache hierarchy if matrix elements do not align well to cache sizes

- I/O (network)

- CPU (Front-End), difficult to prepare instructions in time

- I/O (disk), bandwidth limited

- CPU (Back-End), getting strings through the caches

- Memory (capacity)

- I/O (disk)

Summary

Dedicated performance measurement tools are helpful to create reports of the general job behavior. These tools either trace every event, or sample the application and hardware state at regular intervals. Many tools are available, but some may have to be set up by the HPC system administrators, or rely on valid licenses.

The relationship between a job and the execution on physical hardware can become a very deep topic. One of these topics is the correct mapping of job processes to the requested number of CPU cores, addressed in the next episode.

- Performance tools measure data as regular samples or by tracing every event

- The data is either processed and visualized in a timeline or aggregated in a final profile

- Job performance relates closely to contention points in physical hardware

- CPU utilization (front-end, ALU, back-end), multithreading, vectorization

- Memory utilization (capacity, bandwidth, latency)

- Disk I/O

- Network I/O

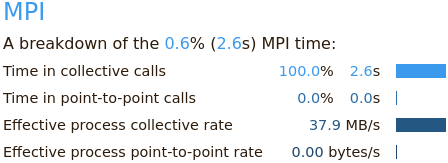

Performance Reports also summarizes the application’s behavior in terms of MPI calls, e.g. time spent in collective calls involving all processes, or point-to-point communications.

Performance Reports

also summarizes the applications behavior in terms of MPI calls,

e.g. time spent in collective calls involving all processors, or

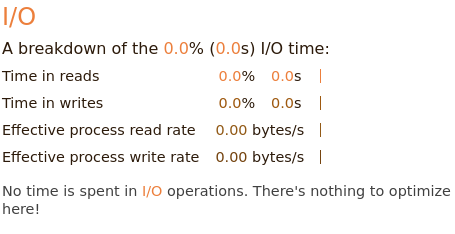

point-to-point communications. The I/O block

summarizes measurements of interactions with the local file systems.

Here, no I/O operations are affecting the applications performance at

all.

The I/O block

summarizes measurements of interactions with the local file systems.

Here, no I/O operations are affecting the applications performance at

all.