
Introducing Containers

Last updated on 2024-10-22

Overview

Questions

- What are containers, and why might they be useful to me?

Objectives

- Show how software depending on other software leads to configuration management problems.

- Identify the problems that software installation can pose for research.

- Explain the advantages of containerization.

- Explain how using containers can solve software configuration problems.

Learning about Docker Containers

The Australian Research Data Commons has produced a short introductory video about Docker containers that covers many of the points below. Watch it before or after you go through this section to reinforce your understanding!

How can software containers help your research?

Australian Research Data Commons, 2021. How can software containers help your research? [video]. Available at: https://www.youtube.com/watch?v=HelrQnm3v4g DOI: http://doi.org/10.5281/zenodo.5091260

Scientific Software Challenges

What’s Your Experience?

Take a minute to think about challenges that you have experienced in using scientific software (or software in general!) for your research. Then, share with your neighbors and try to come up with a list of common gripes or challenges.

What is a software dependency?

We will mention software dependencies a lot in this section of the workshop so it is good to clarify this term up front. A software dependency is a relationship between software components where one component relies on the other to work properly. For example, if a software application uses a library to query a database, the application depends on that library.

You may have come up with some of the following:

- you want to use software that doesn’t exist for the operating system (Mac, Windows, Linux) you’d prefer.

- you struggle with installing a software tool because you have to install a number of other dependencies first. Those dependencies, in turn, require other things, and so on (i.e. combinatorial explosion).

- the software you’re setting up involves many dependencies and only a subset of all possible versions of those dependencies actually works as desired.

- you’re not actually sure what version of the software you’re using because the install process was so circuitous.

- you and a colleague are using the same software but get different results because you have installed different versions and/or are using different operating systems.

- you installed everything correctly on your computer but now need to install it on a colleague’s computer/campus computing cluster/etc.

- you’ve written a package for other people to use but a lot of your users frequently have trouble with installation.

- you need to reproduce a research project from a former colleague and the software used was on a system you no longer have access to.

A lot of these characteristics boil down to one fact: the main program you want to use likely depends on many, many other programs (including the operating system!), creating a very complex and often fragile system. One change or missing piece may stop the whole thing from working or break something that was already running. It’s no surprise that this situation is sometimes informally termed dependency hell.

Software and Science

Again, take a minute to think about how the software challenges we’ve discussed could impact (or have impacted!) the quality of your work. Share your thoughts with your neighbors. What can go wrong if our software doesn’t work?

Unsurprisingly, software installation and configuration challenges can have negative consequences for research:

- you can’t use a specific tool at all, because it’s not available or installable.

- you can’t reproduce your results because you’re not sure what tools you’re actually using.

- you can’t access extra/newer resources because you’re not able to replicate your software setup.

- others cannot validate and/or build upon your work because they cannot recreate your system’s unique configuration.

Thankfully there are ways to get underneath (a lot of) this mess: containers to the rescue! Containers provide a way to package up software dependencies and access to resources such as files and communications networks in a uniform manner.

What is a Container?

Imagine you want to install some research software but don’t want to take the chance of making a mess of your existing system by installing a bunch of additional stuff (libraries/dependencies/etc.). You don’t want to buy a whole new computer because it’s too expensive. What if, instead, you could have another independent filesystem and running operating system that you could access from your main computer, and that is actually stored within this existing computer?

More concretely, Docker Inc. uses the following definition of a container:

A container is a standard unit of software that packages up code and all its dependencies so the application runs reliably from one computing environment to another.

https://www.docker.com/resources/what-container/

The term container can be usefully considered with reference to shipping containers. Before shipping containers were developed, packing and unpacking cargo ships was time consuming and error prone, with high potential for different clients’ goods to become mixed up. Just like shipping containers keep things together that should stay together, software containers standardize the description and creation of a complete software system: you can drop a container into any computer with the container software installed (the ‘container host’), and it should just work.

Virtualization

Containers are an example of what’s called virtualization – having a second virtual computer running and accessible from a main or host computer. Virtual machines (VMs) are another example of virtualization. A virtual machine typically contains a whole copy of an operating system in addition to its own filesystem, and has to be booted up in the same way a computer would. A container is considered a lightweight version of a virtual machine; underneath, a container (usually) shares the Linux kernel of the computer it runs on and simply contains some flavour of Linux plus its own filesystem.

What is Docker?

Docker is a tool that allows you to build and run containers. It’s not the only tool that can create containers, but it is the one we’ve chosen for this workshop.

Container Images

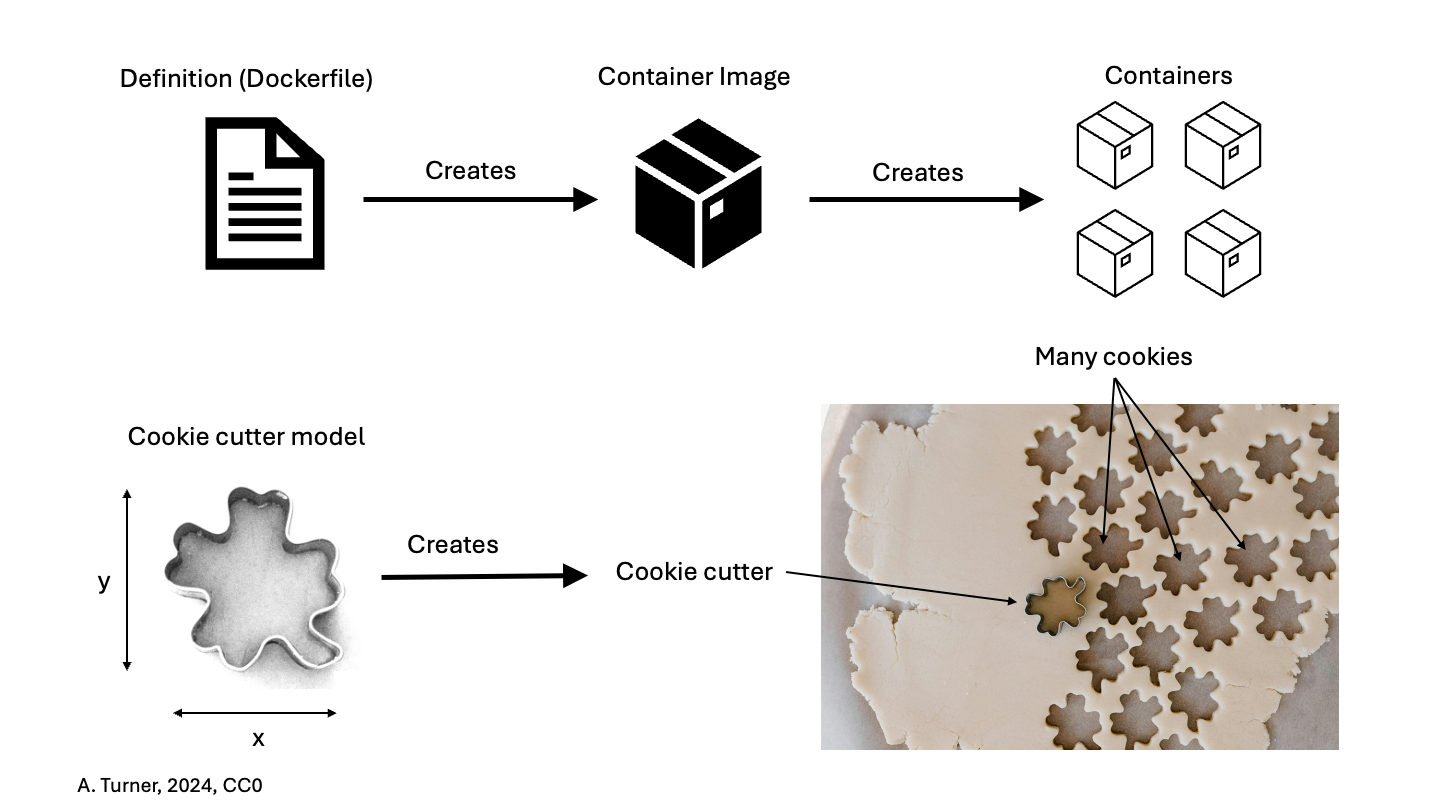

One final term: while the container is an alternative filesystem layer that you can access and run from your computer, the container image is the ‘recipe’ or template for a container. The container image has all the required information to start up a running copy of the container. A running container tends to be transient and can be started and shut down. The container image is more long-lived, as a definition for the container. You could think of the container image like a cookie cutter – it can be used to create multiple copies of the same shape (or container) and is relatively unchanging, whereas cookies come and go. If you want a different type of container (cookie) you need a different container image (cookie cutter).

Putting the Pieces Together

Think back to some of the challenges we described at the beginning. The many layers of scientific software installations make it hard to install and re-install scientific software, which, ultimately, hinders reliability and reproducibility.

But now, think about what a container is – a self-contained, complete, separate computer filesystem. What advantages are there if you put your scientific software tools into containers?

This solves several of our problems:

- documentation – there is a clear record of what software and software dependencies were used, from bottom to top.

- portability – the container can be used on any computer that has Docker installed – it doesn’t matter whether the computer is Mac, Windows or Linux-based.

- reproducibility – you can use the exact same software and environment on your computer and on other resources (like a large-scale computing cluster).

- configurability – containers can be sized to take advantage of more resources (memory, CPU, etc.) on large systems (clusters), or fewer resources on smaller systems, depending on the circumstances.

The rest of this workshop will show you how to download and run containers from pre-existing container images on your own computer, and how to create and share your own container images.

Use cases for containers

Now that we have discussed a little bit about containers – what they do and the issues they attempt to address – you may be able to think of a few potential use cases in your area of work. Some examples of common use cases for containers in a research context include:

- Using containers solely on your own computer to use a specific software tool or to test out a tool (possibly to avoid a difficult and complex installation process, to save your time or to avoid dependency hell).

- Creating a Dockerfile that generates a container image with software that you specify installed, then sharing a container image generated using this Dockerfile with your collaborators for use on their computers or a remote computing resource (e.g. cloud-based or HPC system).

- Archiving the container images so you can repeat analysis/modelling using the same software and configuration in the future – capturing your workflow.

Key Points

- Almost all software depends on other software components to function, but these components have independent evolutionary paths.

- Small environments that contain only the software that is needed for a given task are easier to replicate and maintain.

- Critical systems that cannot be upgraded, due to cost, difficulty, etc. need to be reproduced on newer systems in a maintainable and self-documented way.

- Virtualization allows multiple environments to run on a single computer.

- Containerization improves upon the virtualization of whole computers by allowing efficient management of the host computer’s memory and storage resources.

- Containers are built from ‘recipes’ that define the required set of software components and the instructions necessary to build/install them within a container image.

- Docker is just one software platform that can create containers and the resources they use.

Introducing the Docker Command Line

Last updated on 2024-08-01

Overview

Questions

- How do I know Docker is installed and running?

- How do I interact with Docker?

Objectives

- Explain how to check that Docker is installed and is ready to use.

- Demonstrate some initial Docker command line interactions.

- Use the built-in help for Docker commands.

Docker command line

Start the Docker application that you installed when working through the setup instructions for this session. Note that this might not be necessary if your laptop is running Linux or if the installation added the Docker application to your startup process.

You may need to log in to Docker Hub

The Docker application will usually provide a way for you to log in to the Docker Hub using the application’s menu (macOS) or systray icon (Windows), and it is usually convenient to do this when the application starts. This will require your Docker Hub username and password. We will not actually require access to the Docker Hub until later in the course, but if you can log in now, you should do so.

Determining your Docker Hub username

If you no longer recall your Docker Hub username, e.g., because you have been logging into the Docker Hub using your email address, you can find out what it is by following these steps:

- Open https://hub.docker.com/ in a web browser window

- Sign-in using your email and password (don’t tell us what it is)

- In the top-right of the screen you will see your username

Once your Docker application is running, open a shell (terminal) window, and run the following command to check that Docker is installed and the command line tools are working correctly. Below is the output for a Mac version, but the specific version is unlikely to matter much: it does not have to precisely match the one listed below.

docker --version

OUTPUT

Docker version 20.10.5, build 55c4c88

The above command has not actually relied on the part of Docker that runs containers, just that Docker is installed and you can access it correctly from the command line.

A command that checks that Docker is working correctly is the docker container ls command (we cover this command in more detail later in the course).

Without explaining the details, output on a newly installed system would likely be:

OUTPUT

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

(The command docker system info could also be used to verify that Docker is correctly installed and operational, but it produces a larger amount of output.)

However, if you instead get a message similar to the following:

OUTPUT

Cannot connect to the Docker daemon at unix:///var/run/docker.sock. Is the docker daemon running?

then you need to check that you have started Docker Desktop, Docker Engine, or whatever you installed while working through the setup instructions.
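To automate the two checks above, you could use the following minimal sketch for a POSIX shell such as sh or bash. The function name check_docker is our own invention and is not a Docker command. It runs docker --version, which only needs the command line tools, and then docker container ls, which also needs the running daemon.

```shell
# check_docker: a hypothetical helper (not part of Docker) that distinguishes
# "the docker CLI is missing" from "the CLI is present but the daemon is unreachable".
check_docker() {
  if ! command -v docker >/dev/null 2>&1; then
    echo "docker CLI not found: revisit the setup instructions" >&2
    return 1
  fi
  # Needs only the command line tools, not the daemon.
  docker --version
  # Needs the daemon; discard the table and keep only the exit status.
  if docker container ls >/dev/null 2>&1; then
    echo "Docker daemon is reachable"
  else
    echo "docker CLI found, but the Docker daemon is not reachable" >&2
    return 2
  fi
}
```

After you paste the function into your terminal, run check_docker. A non-zero exit status (1 or 2) tells you which of the two situations described above applies.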

Getting help

Often when working with a new command line tool, we need to get help. These tools often have some sort of subcommand or flag (usually help, -h, or --help) that displays a prompt describing how to use the tool. Docker is no different. If we run docker --help, we see the following output (running docker by itself also produces the help message):

OUTPUT

Usage: docker [OPTIONS] COMMAND

A self-sufficient runtime for containers

Options:

--config string Location of client config files (default "/Users/vini/.docker")

-c, --context string Name of the context to use to connect to the daemon (overrides DOCKER_HOST env var and default context set with "docker context use")

-D, --debug Enable debug mode

-H, --host list Daemon socket(s) to connect to

-l, --log-level string Set the logging level ("debug"|"info"|"warn"|"error"|"fatal") (default "info")

--tls Use TLS; implied by --tlsverify

--tlscacert string Trust certs signed only by this CA (default "/Users/vini/.docker/ca.pem")

--tlscert string Path to TLS certificate file (default "/Users/vini/.docker/cert.pem")

--tlskey string Path to TLS key file (default "/Users/vini/.docker/key.pem")

--tlsverify Use TLS and verify the remote

-v, --version Print version information and quit

Management Commands:

app* Docker App (Docker Inc., v0.9.1-beta3)

builder Manage builds

buildx* Build with BuildKit (Docker Inc., v0.5.1-docker)

config Manage Docker configs

container Manage containers

context Manage contexts

image Manage images

manifest Manage Docker image manifests and manifest lists

network Manage networks

node Manage Swarm nodes

plugin Manage plugins

scan* Docker Scan (Docker Inc., v0.6.0)

secret Manage Docker secrets

service Manage services

stack Manage Docker stacks

swarm Manage Swarm

system Manage Docker

trust Manage trust on Docker images

volume Manage volumes

Commands:

attach Attach local standard input, output, and error streams to a running container

build Build an image from a Dockerfile

commit Create a new image from a container's changes

cp Copy files/folders between a container and the local filesystem

create Create a new container

diff Inspect changes to files or directories on a container's filesystem

events Get real time events from the server

exec Run a command in a running container

export Export a container's filesystem as a tar archive

history Show the history of an image

images List images

import Import the contents from a tarball to create a filesystem image

info Display system-wide information

inspect Return low-level information on Docker objects

kill Kill one or more running containers

load Load an image from a tar archive or STDIN

login Log in to a Docker registry

logout Log out from a Docker registry

logs Fetch the logs of a container

pause Pause all processes within one or more containers

port List port mappings or a specific mapping for the container

ps List containers

pull Pull an image or a repository from a registry

push Push an image or a repository to a registry

rename Rename a container

restart Restart one or more containers

rm Remove one or more containers

rmi Remove one or more images

run Run a command in a new container

save Save one or more images to a tar archive (streamed to STDOUT by default)

search Search the Docker Hub for images

start Start one or more stopped containers

stats Display a live stream of container(s) resource usage statistics

stop Stop one or more running containers

tag Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

top Display the running processes of a container

unpause Unpause all processes within one or more containers

update Update configuration of one or more containers

version Show the Docker version information

wait Block until one or more containers stop, then print their exit codes

Run 'docker COMMAND --help' for more information on a command.

There is a list of commands, and the end of the help message says:

Run 'docker COMMAND --help' for more information on a command.

For example, take the docker container ls command that we ran previously. We can see from the Docker help prompt that container is a Docker command, so to get help for that command, we run:

docker container --help

OUTPUT

Usage: docker container COMMAND

Manage containers

Commands:

attach Attach local standard input, output, and error streams to a running container

commit Create a new image from a container's changes

cp Copy files/folders between a container and the local filesystem

create Create a new container

diff Inspect changes to files or directories on a container's filesystem

exec Run a command in a running container

export Export a container's filesystem as a tar archive

inspect Display detailed information on one or more containers

kill Kill one or more running containers

logs Fetch the logs of a container

ls List containers

pause Pause all processes within one or more containers

port List port mappings or a specific mapping for the container

prune Remove all stopped containers

rename Rename a container

restart Restart one or more containers

rm Remove one or more containers

run Run a command in a new container

start Start one or more stopped containers

stats Display a live stream of container(s) resource usage statistics

stop Stop one or more running containers

top Display the running processes of a container

unpause Unpause all processes within one or more containers

update Update configuration of one or more containers

wait Block until one or more containers stop, then print their exit codes

Run 'docker container COMMAND --help' for more information on a command.

There’s also help for the container ls command:

docker container ls --help

OUTPUT

Usage: docker container ls [OPTIONS]

List containers

Aliases:

ls, ps, list

Options:

-a, --all Show all containers (default shows just running)

-f, --filter filter Filter output based on conditions provided

--format string Pretty-print containers using a Go template

-n, --last int Show n last created containers (includes all states) (default -1)

-l, --latest Show the latest created container (includes all states)

--no-trunc Don't truncate output

-q, --quiet Only display container IDs

-s, --size Display total file sizes

You may notice that there are many commands that stem from the docker command. Instead of trying to remember all possible commands and options, it’s better to learn how to get help effectively from the command line. Although we can always search the web, getting the built-in help from our tool is often much faster and may provide the answer right away. This applies not only to Docker, but also to most command line-based tools.
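As a small illustration of searching built-in help instead of the web, the sketch below filters a Docker help message for a single keyword. find_option is a hypothetical helper name. The sketch assumes a POSIX shell and the standard grep tool, and relies on Docker printing its --help text to standard output so that it can be piped.

```shell
# find_option PATTERN COMMAND...   (hypothetical helper, not a Docker command)
# Prints only the lines of 'docker COMMAND... --help' that match PATTERN.
find_option() {
  pattern=$1
  shift
  # The help text is ordinary output, so it can be piped into grep.
  docker "$@" --help | grep -e "$pattern"
}
```

For example, find_option all container ls should print only the -a, --all line from the docker container ls help shown above.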

Docker Command Line Interface (CLI) syntax

In this lesson we use the Docker CLI syntax introduced with Docker Engine version 1.13. This syntax groups commands by the kind of object you will most often want to interact with. In the help example above, you can see the image and container management commands, which can be used to interact with your images and containers respectively. With this syntax, you issue commands using the following pattern:

docker [command] [subcommand] [additional options]

Comparing the output of the two help commands above, you can see that the same thing can be achieved in multiple ways. For example, to start a Docker container using the old syntax you would use docker run. To achieve the same with the new syntax, you use docker container run instead. Even though the old approach is shorter and still officially supported, the new syntax is more descriptive and less error-prone, and is therefore recommended.
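The sketch below maps a few of the older one-word commands to their grouped equivalents. modern_syntax is a hypothetical helper. The pairs it prints follow the container and image management commands listed in the docker --help output (for example, the Aliases section of docker container ls --help shows ps), and the table is deliberately incomplete.

```shell
# modern_syntax: hypothetical helper mapping a few old-style commands
# to their 'docker <object> <subcommand>' equivalents.
modern_syntax() {
  case "$1" in
    run)    echo "docker container run" ;;
    ps)     echo "docker container ls" ;;
    rm)     echo "docker container rm" ;;
    images) echo "docker image ls" ;;
    pull)   echo "docker image pull" ;;
    rmi)    echo "docker image rm" ;;
    build)  echo "docker image build" ;;
    *)      echo "no mapping for '$1' in this sketch" >&2; return 1 ;;
  esac
}
```

For instance, modern_syntax ps prints docker container ls.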

Exploring a command

Run docker --help and pick a command from the list. Explore the help prompt for that command. Try to guess how a command would work by looking at the Usage: section of the prompt.

Suppose we pick the docker image build command:

docker image build --help

OUTPUT

Usage: docker image build [OPTIONS] PATH | URL | -

Build an image from a Dockerfile

Options:

--add-host list Add a custom host-to-IP mapping (host:ip)

--build-arg list Set build-time variables

--cache-from strings Images to consider as cache sources

--cgroup-parent string Optional parent cgroup for the container

--compress Compress the build context using gzip

--cpu-period int Limit the CPU CFS (Completely Fair Scheduler) period

--cpu-quota int Limit the CPU CFS (Completely Fair Scheduler) quota

-c, --cpu-shares int CPU shares (relative weight)

--cpuset-cpus string CPUs in which to allow execution (0-3, 0,1)

--cpuset-mems string MEMs in which to allow execution (0-3, 0,1)

--disable-content-trust Skip image verification (default true)

-f, --file string Name of the Dockerfile (Default is 'PATH/Dockerfile')

--force-rm Always remove intermediate containers

--iidfile string Write the image ID to the file

--isolation string Container isolation technology

--label list Set metadata for an image

-m, --memory bytes Memory limit

--memory-swap bytes Swap limit equal to memory plus swap: '-1' to enable unlimited swap

--network string Set the networking mode for the RUN instructions during build (default "default")

--no-cache Do not use cache when building the image

--pull Always attempt to pull a newer version of the image

-q, --quiet Suppress the build output and print image ID on success

--rm Remove intermediate containers after a successful build (default true)

--security-opt strings Security options

--shm-size bytes Size of /dev/shm

-t, --tag list Name and optionally a tag in the 'name:tag' format

--target string Set the target build stage to build.

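--ulimit ulimit Ulimit options (default [])

We could try to guess that the command could be run like this:

docker image build .

or

docker image build https://github.com/docker/rootfs.git

where https://github.com/docker/rootfs.git could be any relevant URL, such as a Git repository containing a Dockerfile, that Docker can use to build an image.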

Key Points

- A toolbar icon indicates that Docker is ready to use (on Windows and macOS).

- You will typically interact with Docker using the command line.

- To learn how to run a certain Docker command, we can type the command followed by the --help flag.

Exploring and Running Containers

Last updated on 2024-06-27

Overview

Questions

- How do I interact with Docker containers and container images on my computer?

Objectives

- Use the correct command to see which Docker container images are on your computer.

- Be able to download new Docker container images.

- Demonstrate how to start an instance of a container from a container image.

- Describe at least two ways to execute commands inside a running Docker container.

Reminder of terminology: container images and containers

Recall that a container image is the template from which particular instances of containers will be created.

Let’s explore our first Docker container. The Docker team provides a simple container image online called hello-world. We’ll start with that one.

Downloading Docker images

The docker image command is used to interact with Docker container images. You can find out what container images you have on your computer by using the following command (“ls” is short for “list”):

docker image ls

If you’ve just installed Docker, you won’t see any container images listed.
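Once you have some images, you may prefer a more compact listing. In the sketch below, list_images is a hypothetical helper name. It passes a Go template to the --format option of docker image ls so that each image is printed as one "repository:tag size" line. Any extra arguments, such as a repository name, are passed through to narrow the listing.

```shell
# list_images: hypothetical helper; prints one "repository:tag size" line per image.
# Extra arguments (e.g. a repository name) are forwarded to 'docker image ls'.
list_images() {
  docker image ls --format '{{.Repository}}:{{.Tag}} {{.Size}}' "$@"
}
```

After the next step, list_images hello-world would show only the hello-world image.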

To get a copy of the hello-world Docker container image from the internet, run this command:

docker image pull hello-world

You should see output like this:

OUTPUT

Using default tag: latest

latest: Pulling from library/hello-world

1b930d010525: Pull complete

Digest: sha256:f9dfddf63636d84ef479d645ab5885156ae030f611a56f3a7ac7f2fdd86d7e4e

Status: Downloaded newer image for hello-world:latest

docker.io/library/hello-world:latest

Docker Hub

Where did the hello-world container image come from? It came from the Docker Hub website, which is a place to share Docker container images with other people. More on that in a later episode.

Exercise: Check on Your Images

What command would you use to see if the hello-world Docker container image had downloaded successfully and was on your computer? Give it a try before checking the solution.

Note that the downloaded hello-world container image is not in the folder where you are in the terminal! (Run ls by itself to check.) The container image is not a file like our normal programs and documents; Docker stores it in a specific location that isn’t commonly accessed, so it’s necessary to use the special docker image command to see what Docker container images you have on your computer.
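Instead of reading the docker image ls table, a script can also check for an image directly. This sketch relies on docker image inspect failing (with a non-zero exit status) when the image is not stored locally. has_image and ensure_image are hypothetical helper names.

```shell
# has_image IMAGE: hypothetical helper; succeeds only if IMAGE is stored locally.
has_image() {
  docker image inspect "$1" >/dev/null 2>&1
}

# ensure_image IMAGE: hypothetical helper; pulls IMAGE only when it is missing.
ensure_image() {
  if has_image "$1"; then
    echo "$1 is already on this computer"
  else
    docker image pull "$1"
  fi
}
```

For example, ensure_image hello-world prints a short message if the image is already present, and otherwise downloads it with docker image pull.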

Running the hello-world container

To create and run containers from named Docker container images, you use the docker container run command. Try the following docker container run invocation. Note that it does not matter what your current working directory is.

docker container run hello-world

OUTPUT

Hello from Docker!

This message shows that your installation appears to be working correctly.

To generate this message, Docker took the following steps:

1. The Docker client contacted the Docker daemon.

2. The Docker daemon pulled the "hello-world" image from the Docker Hub.

(amd64)

3. The Docker daemon created a new container from that image which runs the

executable that produces the output you are currently reading.

4. The Docker daemon streamed that output to the Docker client, which sent it

to your terminal.

To try something more ambitious, you can run an Ubuntu container with:

$ docker run -it ubuntu bash

Share images, automate workflows, and more with a free Docker ID:

https://hub.docker.com/

For more examples and ideas, visit:

https://docs.docker.com/get-started/

What just happened? When we use the docker container run command, Docker does three things:

| 1. Starts a Running Container | 2. Performs Default Action | 3. Shuts Down the Container |
|---|---|---|
| Starts a running container, based on the container image. Think of this as the “alive” or “inflated” version of the container – it’s actually doing something. | If the container has a default action set, it will perform that default action. This could be as simple as printing a message (as above) or running a whole analysis pipeline! | Once the default action is complete, the container stops running (or exits). The container image is still there, but nothing is actively running. |

The hello-world container is set up to run an action by default – namely to print this message.
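The last step in the table, where the container exits, leaves an exit status behind. docker container run passes back the exit status of the container's main process (Docker uses its own non-zero codes instead when it cannot start the container at all), so your shell can tell whether the default action succeeded. run_and_report is a hypothetical helper for a POSIX shell.

```shell
# run_and_report: hypothetical helper. Runs a container with the given
# arguments and reports the exit status that 'docker container run' returned.
run_and_report() {
  status=0
  docker container run "$@" || status=$?
  echo "container exited with status $status"
  return "$status"
}
```

run_and_report hello-world should end with "container exited with status 0", while run_and_report alpine false should report a status of 1, because the false command always fails.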

Using docker container run to get the image

We could have skipped the docker image pull step; if you use the docker container run command and you don’t already have a copy of the Docker container image, Docker will automatically pull the container image first and then run it.

Running a container with a chosen command

But what if we wanted to do something different with the container? The output just gave us a suggestion of what to do – let’s use a different Docker container image to explore what else we can do with the docker container run command. The suggestion above is to use ubuntu, but we’re going to run a different type of Linux, alpine, instead because it’s quicker to download.

Run the Alpine Docker container

Try downloading the alpine container image and using it to run a container. You can do it in two steps, or one. What are they?

What happened when you ran the Alpine Docker container?

If you have never used the alpine Docker container image on your computer, Docker probably printed a message that it couldn’t find the container image and had to download it. If you used the alpine container image before, the command will probably show no output. That’s because this particular container is designed for you to provide commands yourself. Try running this instead:

docker container run alpine cat /etc/os-release

You should see the output of the cat /etc/os-release command, which prints out the version of Alpine Linux that this container is using and a few additional bits of information.

Hello World, Part 2

Can you run a copy of the alpine container and make it print a “hello world” message? Give it a try before checking the solution.

So here, we see another option – we can provide commands at the end of the docker container run command and they will execute inside the running container.
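Everything after the image name is treated as the command, and only one command is started. To run several commands in one container, a common approach is to hand them to the container's shell with sh -c, as in this sketch. run_in_alpine is a hypothetical helper. The --rm option, described later in this episode, removes the container once it stops.

```shell
# run_in_alpine 'CMD1; CMD2': hypothetical helper. Runs a short shell script
# inside a fresh alpine container, then removes the container (--rm).
run_in_alpine() {
  docker container run --rm alpine sh -c "$1"
}
```

For example: run_in_alpine 'cat /etc/os-release; echo "hello world"'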

Running containers interactively

In all the examples above, Docker has started the container, run a command, and then immediately stopped the container. But what if we wanted to keep the container running so we could log into it and test drive more commands? The way to do this is by adding the interactive flags -i and -t (usually combined as -it) to the docker container run command and providing a shell (bash, sh, etc.) as our command. The alpine Docker container image doesn’t include bash, so we need to use sh:

docker container run -it alpine sh

Technically…

Technically, the interactive flag is just -i – the extra -t (combined as -it above) is the “pseudo-TTY” option, a fancy term that means a text interface. This allows you to connect to a shell, like sh, using a command line. Since you usually want to have a command line when running interactively, it makes sense to use the two together.

Your prompt should change significantly to look like this:

/ #

That’s because you’re now inside the running container! Try these commands:

pwd
ls
whoami
echo $PATH
cat /etc/os-release

All of these are being run from inside the running container, so you’ll get information about the container itself, instead of your computer. To finish using the container, type exit.
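The -i flag can also be used on its own. In the sketch below, which uses a hypothetical helper named pipe_to_alpine, -i keeps standard input open so that a short script can be piped into sh inside the container. We leave out -t because the input comes from a pipe rather than from a terminal.

```shell
# pipe_to_alpine: hypothetical helper. Reads a script on standard input and
# runs it with sh in a throwaway alpine container (-i without -t).
pipe_to_alpine() {
  docker container run --rm -i alpine sh
}
```

For example, printf 'whoami\ncat /etc/os-release\n' | pipe_to_alpine should print root followed by the Alpine release information. The container then stops when the piped script ends.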

Practice Makes Perfect

Can you find out the version of Ubuntu installed on the ubuntu container image? (Hint: You can use the same command as used to find the version of alpine.)

Can you also find the apt-get program? What does it do? (Hint: passing --help to almost any command will give you more information.)

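Run an interactive ubuntu container – you can use docker image pull first, or just run it with this command:

docker container run -it ubuntu sh

OR you can get the bash shell instead:

docker container run -it ubuntu bash

Then try running these commands:

cat /etc/os-release
apt-get --help

Exit when you’re done.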
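Because both images include an /etc/os-release file, you can also compare them without opening a shell. In this sketch, show_os is a hypothetical helper. For each image you name, it starts a throwaway container and uses grep to print the PRETTY_NAME line.

```shell
# show_os IMAGE...: hypothetical helper. For each image, start a throwaway
# container and print the PRETTY_NAME line of its /etc/os-release file.
show_os() {
  for image in "$@"; do
    printf '%s: ' "$image"
    docker container run --rm "$image" grep PRETTY_NAME /etc/os-release
  done
}
```

For example, show_os alpine ubuntu prints one line for each image.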

Even More Options

There are many more options besides -it that can be used with the docker container run command! A few of them will be covered in later episodes, and we’ll share two more common ones here:

- --rm: this option ensures that a container is completely removed from your computer once it stops. Without this option, Docker actually keeps the “stopped” container around, which you’ll see in a later episode. Note that this option doesn’t affect the container images that you’ve pulled, just the containers created from them.

- --name=: By default, Docker assigns a random name and ID number to each container instance that you run on your computer. If you want to refer to a specific running container more easily, you can assign it a name using this option.
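The two options interact. Without --rm, a named container stays around after it stops, so you can find it and remove it using the name you gave it. This sketch walks through that sequence. named_run_demo is a hypothetical helper and hello-demo is just an example name. docker container rm is the removal command listed in the docker container --help output earlier.

```shell
# named_run_demo [NAME]: hypothetical helper showing --name without --rm.
named_run_demo() {
  name=${1:-hello-demo}
  # The stopped container is kept because --rm is not given...
  docker container run --name="$name" hello-world
  # ...so it can be listed (--all includes stopped containers)...
  docker container ls --all --filter name="$name"
  # ...and must be removed explicitly; --rm would have done this for us.
  docker container rm "$name"
}
```

You can run named_run_demo twice in a row only because the last step removes the container. Without that step, the second run would fail because container names must be unique.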

Conclusion

So far, we’ve seen how to download Docker container images, use them to run commands inside running containers, and even how to explore a running container from the inside. Next, we’ll take a closer look at all the different kinds of Docker container images that are out there.

- The docker image pull command downloads Docker container images from the internet.
- The docker image ls command lists Docker container images that are (now) on your computer.
- The docker container run command creates running containers from container images and can run commands inside them.
- When using the docker container run command, a container can run a default action (if it has one), a user-specified action, or a shell to be used interactively.

Content from Cleaning Up Containers

Last updated on 2024-06-27

Overview

Questions

- How do I interact with a Docker container on my computer?

- How do I manage my containers and container images?

Objectives

- Explain how to list running and completed containers.

- Know how to list and remove container images.

Removing images

The container images and their corresponding containers can start to take up a lot of disk space if you don’t clean them up occasionally, so it’s a good idea to periodically remove containers and container images that you won’t be using anymore.

In order to remove a specific container image, you need to find out details about the container image, specifically, the “Image ID”. For example, say my laptop contained the following container image:

OUTPUT

REPOSITORY    TAG       IMAGE ID       CREATED         SIZE
hello-world   latest    fce289e99eb9   15 months ago   1.84kB

You can remove the container image with a docker image rm command that includes the Image ID, such as:

BASH

$ docker image rm fce289e99eb9

or use the container image name, like so:

BASH

$ docker image rm hello-world

However, you may see this output:

OUTPUT

Error response from daemon: conflict: unable to remove repository reference "hello-world" (must force) - container e7d3b76b00f4 is using its referenced image fce289e99eb9

This happens when Docker hasn’t cleaned up some of the previously running containers based on this container image. So, before removing the container image, we need to be able to see which containers are currently running or have been run recently, and how to remove them.

What containers are running?

Working with containers, we return to the docker container command. Similar to docker image, we can list running containers by typing:

BASH

$ docker container ls

OUTPUT

CONTAINER ID   IMAGE     COMMAND   CREATED   STATUS    PORTS     NAMES

Notice that this command didn’t return any containers because our containers all exited, and thus stopped running, after they completed their work.

docker ps

The command docker ps serves the same purpose as docker container ls, and comes from the Unix shell command ps, which describes running processes.

What containers have run recently?

There is also a way to list running containers and those that have completed recently: add the --all/-a flag to the docker container ls command, as shown below.

BASH

$ docker container ls --all

OUTPUT

CONTAINER ID   IMAGE         COMMAND    CREATED         STATUS                     PORTS     NAMES
9c698655416a   hello-world   "/hello"   2 minutes ago   Exited (0) 2 minutes ago             zen_dubinsky
6dd822cf6ca9   hello-world   "/hello"   3 minutes ago   Exited (0) 3 minutes ago             eager_engelbart

Keeping it clean

You might be surprised at the number of containers Docker is still keeping track of. One way to prevent this from happening is to add the --rm flag to docker container run. This will completely wipe out the record of the container when it exits. If you need a reference to the container after it has exited, for any reason, don’t use this flag.

How do I remove an exited container?

To delete an exited container, you can run the following command, inserting the CONTAINER ID for the container you wish to remove. It will repeat the CONTAINER ID back to you if successful.

BASH

$ docker container rm 9c698655416a

OUTPUT

9c698655416a

An alternative option for deleting exited containers is the docker container prune command. Note that this command doesn’t accept a container ID as an option because it deletes ALL exited containers! Be careful with this command, as deleting a container is forever. Once a container is deleted, you cannot get it back. If you have containers you may want to reconnect to, you should not use this command.

BASH

$ docker container prune

It will ask you to confirm that you want to remove these containers; see the output below. If successful, it will print the full CONTAINER ID back to you for each container it has removed.

OUTPUT

WARNING! This will remove all stopped containers.
Are you sure you want to continue? [y/N] y
Deleted Containers:
9c698655416a848278d16bb1352b97e72b7ea85884bff8f106877afe0210acfc
6dd822cf6ca92f3040eaecbd26ad2af63595f30bb7e7a20eacf4554f6ccc9b2b

Removing images, for real this time

Now that we’ve removed any potentially running or stopped containers, we can try again to delete the hello-world container image.

BASH

$ docker image rm hello-world

OUTPUT

Untagged: hello-world:latest
Untagged: hello-world@sha256:5f179596a7335398b805f036f7e8561b6f0e32cd30a32f5e19d17a3cda6cc33d
Deleted: sha256:fce289e99eb9bca977dae136fbe2a82b6b7d4c372474c9235adc1741675f587e
Deleted: sha256:af0b15c8625bb1938f1d7b17081031f649fd14e6b233688eea3c5483994a66a3

There are several lines of output because a given container image may have been formed by merging multiple underlying layers. Any layers that are used by multiple Docker container images will only be stored once. Now the result of docker image ls should no longer include the hello-world container image.

- docker container has subcommands used to interact with and manage containers.
- docker image has subcommands used to interact with and manage container images.
- docker container ls or docker ps can provide information on currently running containers.

Content from Finding Containers on Docker Hub

Last updated on 2024-10-22

Overview

Questions

- What is the Docker Hub, and why is it useful?

Objectives

- Understand the importance of container registries such as Docker Hub, quay.io, etc.

- Explore the Docker Hub webpage for a popular Docker container image.

- Find the list of tags for a particular Docker container image.

- Identify the three components of a container image’s identifier.

In the previous episode, we ran a few different containers derived from different container images: hello-world, alpine, and maybe ubuntu. Where did these container images come from? The Docker Hub!

Introducing the Docker Hub

The Docker Hub is an online repository of container images, a vast number of which are publicly available. A large number of the container images are curated by the developers of the software that they package. Also, many commonly used pieces of software that have been containerized into images are officially endorsed, which means that you can trust the container images to have been checked for functionality, stability, and that they don’t contain malware.

Docker can be used without connecting to the Docker Hub

Note that while the Docker Hub is well integrated into Docker functionality, the Docker Hub is certainly not required for all types of use of Docker containers. For example, some organizations may run container infrastructure that is entirely disconnected from the Internet.

Exploring an Example Docker Hub Page

As an example of a Docker Hub page, let’s explore the page for the official Python language container images. The most basic form of containerized Python is in the python container image (which is endorsed by the Docker team). Open your web browser to https://hub.docker.com/_/python to see what is on a typical Docker Hub software page.

The top-left provides information about the name, short description, popularity (e.g., more than a billion downloads in the case of this container image), and endorsements.

The top-right provides the command to pull this container image to your computer.

The main body of the page contains many useful headings, such as:

- Which tags (i.e., container image versions) are supported;

- Summary information about where to get help, which computer architectures are supported, etc.;

- A longer description of the container image;

- Examples of how to use the container image; and

- The license that applies.

The “How to use the image” section of most container images’ pages will provide examples that are likely to cover your intended use of the container image.

Exploring Container Image Versions

A single Docker Hub page can have many different versions of container images, based on the version of the software inside. These versions are indicated by “tags”. When referring to a specific version of a container image by its tag, you use a colon, :, like this:

CONTAINER_IMAGE_NAME:TAG

So if I wanted to download the python container image with Python 3.8, I would use this name:

python:3.8

But if I wanted to download a Python 3.6 container image, I would use this name:

python:3.6

The default tag (which is used if you don’t specify one) is called latest.

So far, we’ve only seen container images that are maintained by the Docker team. However, it’s equally common to use container images that have been produced by individual owners or organizations. Container images that you create and upload to Docker Hub would fall into this category, as would the container images maintained by organizations like ContinuumIO (the folks who develop the Anaconda Python environment) or community groups like rocker, a group that builds community R container images.

The names for these group- or individually-managed container images have this format:

OWNER/CONTAINER_IMAGE_NAME:TAG

Repositories

The technical name for the contents of a Docker Hub page is a “repository.” The tag indicates the specific version of the container image that you’d like to use from a particular repository. So a slightly more accurate version of the above example is:

OWNER/REPOSITORY:TAG

What’s in a name?

How would I download the Docker container image produced by the rocker group that has version 3.6.1 of R and the tidyverse installed?

Note: the container image described in this exercise is large and won’t be used later in this lesson, so you don’t actually need to pull the container image; constructing the correct docker pull command is sufficient.
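To make the OWNER/REPOSITORY:TAG scheme concrete, the sketch below splits an image name into its three parts. The function parse_image_ref is hypothetical (it is not part of Docker) and assumes a plain Docker Hub name: no registry host such as quay.io and no @sha256 digest. It fills in latest when the tag is missing, and library as the owner for official images such as python, since that is the namespace Docker Hub uses for them.

```bash
# Hypothetical helper, not a Docker command: split [OWNER/]REPOSITORY[:TAG].
# Assumes no registry host (e.g. "quay.io/") and no "@sha256:" digest.
parse_image_ref() {
  local ref="$1" owner repo tag
  # Everything after the last ":" is the tag; default to "latest" if absent.
  case "$ref" in
    *:*) tag="${ref##*:}"; ref="${ref%:*}" ;;
    *)   tag="latest" ;;
  esac
  # Everything before the "/" is the owner; official images use "library".
  case "$ref" in
    */*) owner="${ref%%/*}"; repo="${ref#*/}" ;;
    *)   owner="library"; repo="$ref" ;;
  esac
  printf 'owner=%s repository=%s tag=%s\n' "$owner" "$repo" "$tag"
}

parse_image_ref python:3.8
parse_image_ref alice/alpine-python
```

The two calls print owner=library repository=python tag=3.8 and owner=alice repository=alpine-python tag=latest, which mirrors what Docker assumes when you leave the owner or tag out.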

Finding Container Images on Docker Hub

There are many different container images on Docker Hub. This is where the real advantage of using containers shows up – each container image represents a complete software installation that you can use and access without any extra work!

The easiest way to find container images is to search on Docker Hub, but sometimes software pages have a link to their container images from their home page.

Note that anyone can create an account on Docker Hub and share container images there, so it’s important to exercise caution when choosing a container image on Docker Hub. These are some indicators that a container image on Docker Hub is consistently maintained, functional and secure:

- The container image is updated regularly.

- The container image is associated with a well-established company, community, or other well-known group.

- There is a Dockerfile or other listing of what has been installed to the container image.

- The container image page has documentation on how to use the container image.

If a container image is never updated, created by a random person, and does not have a lot of metadata, it is probably worth skipping over. Even if such a container image is secure, it is not reproducible and not a dependable way to run research computations.

What container image is right for you?

Find a Docker container image that’s relevant to you. Take into account the suggestions above of what to look for as you evaluate options. If you’re unsuccessful in your search, or don’t know what to look for, you can use the R or Python container image we’ve already seen.

Once you find a container image, use the skills from the previous episode to download the container image and explore it.

- The Docker Hub is an online repository of container images.

- Many Docker Hub container images are public, and may be officially endorsed.

- Each Docker Hub page about a container image provides structured information and subheadings.

- Most Docker Hub pages about container images contain sections that provide examples of how to use those container images.

- Many Docker Hub container images have multiple versions, indicated by tags.

- The naming convention for Docker container images is:

OWNER/CONTAINER_IMAGE_NAME:TAG

Content from Creating Your Own Container Images

Last updated on 2024-10-22

Overview

Questions

- How can I make my own Docker container images?

- How do I document the ‘recipe’ for a Docker container image?

Objectives

- Explain the purpose of a Dockerfile and show some simple examples.
- Demonstrate how to build a Docker container image from a Dockerfile.
- Compare the steps of creating a container image interactively versus a Dockerfile.
- Create an installation strategy for a container image.

- Demonstrate how to upload (‘push’) your container images to the Docker Hub.

- Describe the significance of the Docker Hub naming scheme.

There are lots of reasons why you might want to create your own Docker container image.

- You can’t find a container image with all the tools you need on Docker Hub.

- You want to have a container image to “archive” all the specific software versions you ran for a project.

- You want to share your workflow with someone else.

Interactive installation

Before creating a reproducible installation, let’s experiment with installing software inside a container. Start a container from the alpine container image we used before, interactively:

BASH

$ docker container run -it alpine sh

Because this is a basic container, there are a lot of things not installed, for example, python3:

python3 --version

OUTPUT

sh: python3: not found

Inside the container, we can run commands to install Python 3. The Alpine version of Linux has an installation tool called apk that we can use to install Python 3.

apk add --update python3 py3-pip python3-dev

We can test our installation by running a Python command:

python3 --version

Exercise: Searching for Help

Can you find instructions for installing R on Alpine Linux? Do they work?

Once we exit, these changes are not saved to a new container image by default. There is a command that will “snapshot” our changes, but building container images this way is not easily reproducible. Instead, we’re going to take what we’ve learned from this interactive installation and create our container image from a reproducible recipe, known as a Dockerfile.

If you haven’t already, exit out of the interactively running container.

Put installation instructions in a Dockerfile

A Dockerfile is a plain text file with keywords and commands that can be used to create a new container image.

From your shell, go to the folder you downloaded at the start of the lesson and print out the Dockerfile inside:

OUTPUT

FROM <EXISTING IMAGE>
RUN <INSTALL CMDS FROM SHELL>
CMD <CMD TO RUN BY DEFAULT>

Let’s break this file down:

- The first line, FROM, indicates which container image we’re starting with. It is the “base” container image we are going to start from.
- The RUN line(s) indicate installation commands we want to run. These are the same commands that we used interactively above.
- The last line, CMD, indicates the default command we want a container based on this container image to run, if no other command is provided. It is recommended to provide CMD in exec-form (see the CMD section of the Dockerfile documentation for more details). It is written as a list which contains the executable to run as its first element, optionally followed by any arguments as subsequent elements. The list is enclosed in square brackets ([]) and its elements are double-quoted (") strings separated by commas. For example, CMD ["ls", "-lF", "--color", "/etc"] would translate to ls -lF --color /etc.

shell-form and exec-form for CMD

Another way to specify the parameter for the CMD instruction is the shell-form. Here you type the command as you would call it from the command line. Docker then silently runs this command in the image’s standard shell: CMD cat /etc/passwd is equivalent to CMD ["/bin/sh", "-c", "cat /etc/passwd"].

We recommend the more explicit exec-form, because it lets us create more flexible container image command options and makes sure complex commands are unambiguous.
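One practical difference between the two forms is variable expansion. The Dockerfile documentation notes that exec-form does not invoke a shell, so CMD ["echo", "$HOME"] prints the literal text $HOME, while the shell-form CMD echo $HOME goes through /bin/sh -c and expands the variable. The sketch below is only a local analogy that runs on your own computer, not inside Docker: /bin/sh -c stands in for shell-form, and env starting echo with a single-quoted argument stands in for exec-form.

```bash
# Shell-form analogy: the command string is handed to /bin/sh -c,
# so the shell replaces $HOME with its value before echo runs.
shell_form_output="$(/bin/sh -c 'echo $HOME')"

# Exec-form analogy: env starts echo directly with the argument as given,
# so no shell is involved and "$HOME" stays literal text.
exec_form_output="$(env echo '$HOME')"

printf 'shell-form: %s\n' "$shell_form_output"
printf 'exec-form:  %s\n' "$exec_form_output"
```

The first line shows your home directory; the second shows the unexpanded text $HOME.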

Exercise: Take a Guess

Do you have any ideas about what we should use to fill in the sample Dockerfile to replicate the installation we did above?

Based on our experience above, edit the Dockerfile (in your text editor of choice) to look like this:

FROM alpine
RUN apk add --update python3 py3-pip python3-dev
CMD ["python3", "--version"]

The recipe provided by the Dockerfile shown in the solution to the preceding exercise will use Alpine Linux as the base container image, add Python 3, the pip package management tool and some additional Python header files, and set a default command to request Python 3 to report its version information.

Create a new Docker image

So far, we only have a text file named Dockerfile; we do not yet have a container image. We want Docker to take this Dockerfile, run the installation commands contained within it, and then save the resulting container as a new container image. To do this we will use the docker image build command.

We have to provide docker image build with two pieces of information:

- the location of the Dockerfile
- the name of the new container image. Remember the naming scheme from before? You should name your new image with your Docker Hub username and a name for the container image, like this: USERNAME/CONTAINER_IMAGE_NAME.

All together, the build command that you should run on your computer will have a similar structure to this:

BASH

$ docker image build -t USERNAME/CONTAINER_IMAGE_NAME .

The -t option names the container image; the final dot indicates that the Dockerfile is in our current directory.

For example, if my user name was alice and I wanted to call my container image alpine-python, I would use this command:

BASH

$ docker image build -t alice/alpine-python .

Build Context

Notice that the final input to docker image build isn’t the Dockerfile; it’s a directory! In the command above, we’ve used the current working directory (.) of the shell as the final input to the docker image build command. This option provides what is called the build context to Docker: if there are files being copied into the built container image (more details in the next episode), they’re assumed to be in this location. Docker expects to see a Dockerfile in the build context also (unless you tell it to look elsewhere).

Even if it won’t need all of the files in the build context directory, Docker does “load” them before starting to build, which means that it’s a good idea to have only what you need for the container image in a build context directory, as we’ve done in this example.
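One way to keep the build context small is to give each container image its own directory holding only the files the build needs. The sketch below assumes a hypothetical directory name, alpine-python-context, and writes the Dockerfile from this episode into it with a shell here-document; you would then run the build command from inside that directory.

```bash
# Hypothetical directory that will serve as the build context.
mkdir -p alpine-python-context
cd alpine-python-context || exit 1

# Write the Dockerfile from this episode; quoting 'EOF' stops the shell
# from expanding anything inside the here-document.
cat > Dockerfile <<'EOF'
FROM alpine
RUN apk add --update python3 py3-pip python3-dev
CMD ["python3", "--version"]
EOF

# The build context now contains nothing but the Dockerfile.
ls -A

# With Docker installed, build from inside this directory:
#   docker image build -t alice/alpine-python .
```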

Exercise: Review!

Think back to earlier. What command can you run to check if your container image was created successfully? (Hint: what command shows the container images on your computer?)

We didn’t specify a tag for our container image name. What tag did Docker automatically use?

What command will run a container based on the container image you’ve created? What should happen by default if you run such a container? Can you make it do something different, like print “hello world”?

To see your new image, run docker image ls. You should see the name of your new container image under the “REPOSITORY” heading.

In the output of docker image ls, you can see that Docker has automatically used the latest tag for our new container image.

We want to use docker container run to run a container based on a container image.

The following command should run a container and print out our default message, the version of Python:

BASH

$ docker container run alice/alpine-python

To run a container based on our container image and print out “Hello world” instead:

BASH

$ docker container run alice/alpine-python echo "Hello world"

While it may not look like much, you have already combined a lightweight Linux operating system with your own specification of a command to run, and the result can operate reliably on macOS, Microsoft Windows, Linux and in the cloud!

Boring but important notes about installation

There are a lot of choices when it comes to installing software – sometimes too many! Here are some things to consider when creating your own container image:

- Start smart, or, don’t install everything from scratch! If you’re using Python as your main tool, start with a Python container image. Same with the R programming language. We’ve used Alpine Linux as an example in this lesson, but it’s generally not a good container image to start with for initial development and experimentation because it is a less common distribution of Linux; Ubuntu, Debian and CentOS are all good options for scientific software installations. The program you’re using might recommend a particular distribution of Linux, and if so, it may be useful to start with a container image for that distribution.

- How big? How much software do you really need to install? When you have a choice, lean towards using smaller starting container images and installing only what’s needed for your software, as a bigger container image means longer download times to use.

- Know (or Google) your Linux. Different distributions of Linux often have distinct sets of tools for installing software. The apk command we used above is the software package installer for Alpine Linux. The installers for various common Linux distributions are listed below:
  - Ubuntu: apt or apt-get
  - Debian: apt or apt-get
  - CentOS: yum

  Most common software installations are available to be installed via these tools. A web search for “install X on Y Linux” is usually a good start for common software installation tasks; if something isn’t available via the Linux distribution’s installation tools, try the options below.
- Use what you know. You’ve probably used commands like pip or install.packages() before on your own computer; these will also work to install things in container images (if the basic scripting language is installed).
- README. Many scientific software tools have a README or installation instructions that lay out how to install software. You want to look for instructions for Linux. If the install instructions include options like those suggested above, try those first.

In general, a good strategy for installing software is:

- Make a list of what you want to install.

- Look for pre-existing container images.

- Read through instructions for software you’ll need to install.

- Try installing everything interactively in your base container – take notes!

- From your interactive installation, create a Dockerfile and then try to build the container image from that.

Share your new container image on Docker Hub

Container images that you release publicly can be stored on the Docker Hub for free. If you name your container image as described above, with your Docker Hub username, all you need to do is run the opposite of docker image pull: docker image push.

BASH

$ docker image push alice/alpine-python

Make sure to substitute the full name of your container image!

In a web browser, open https://hub.docker.com, and on your user page you should now see your container image listed, for anyone to use or build on.

Logging In

Technically, you have to be logged into Docker on your computer for this to work. Usually this happens by default, but if docker image push doesn’t work for you, run docker login first, enter your Docker Hub username and password, and then try docker image push again.

What’s in a name? (again)

You don’t have to name your container images using the USERNAME/CONTAINER_IMAGE_NAME:TAG naming scheme. On your own computer, you can call container images whatever you want, and refer to them by the names you choose. It’s only when you want to share a container image that it needs the correct naming format.

You can rename container images using the docker image tag command. For example, imagine someone named Alice has been working on a workflow container image and called it workflow-test on her own computer. She now wants to share it in her alice Docker Hub account with the name workflow-complete and a tag of v1. Her docker image tag command would look like this:

BASH

$ docker image tag workflow-test alice/workflow-complete:v1

She could then push the renamed container image to Docker Hub, using docker image push alice/workflow-complete:v1

- Dockerfiles specify what is within Docker container images.
- The docker image build command is used to build a container image from a Dockerfile.
- You can share your Docker container images through the Docker Hub so that others can create Docker containers from your container images.

Content from Creating More Complex Container Images

Last updated on 2024-08-16

Overview

Questions

- How can I add local files (e.g. data files) into container images at build time?

- How can I access files stored on the host system from within a running Docker container?

Objectives

- Explain how you can include files within Docker container images when you build them.

- Explain how you can access files on the Docker host from your Docker containers.

In order to create and use your own container images, you may need more information than our previous example. You may want to use files from outside the container, that are not included within the container image, either by copying the files into the container image, or by making them visible within a running container from their existing location on your host system. You may also want to learn a little bit about how to install software within a running container or a container image. This episode will look at these advanced aspects of running a container or building a container image. Note that the examples will get gradually more and more complex – most day-to-day use of containers and container images can be accomplished using the first 1–2 sections on this page.

Using scripts and files from outside the container

In your shell, change to the sum folder in the docker-intro folder and look at the files inside.

This folder has both a Dockerfile and a Python script called sum.py. Let’s say we wanted to try running the script using a container based on our recently created alpine-python container image.

Running containers

Question: What command would we use to run Python from the alpine-python container?

We can run a container from the alpine-python container image using:

BASH

$ docker container run alice/alpine-python

What happens? Since the Dockerfile that we built this container image from had a CMD entry that specified ["python3", "--version"], running the above command simply starts a container from the image, runs the python3 --version command and exits. You should have seen the installed version of Python printed to the terminal.

Instead, if we want to run an interactive Python terminal, we can use docker container run to override the default run command embedded within the container image. So we could run:

BASH

$ docker container run -it alice/alpine-python python3

The -it tells Docker to set up an interactive terminal connection to the running container, and then we’re telling Docker to run the python3 command inside the container, which gives us an interactive Python interpreter prompt. (Type exit() to exit!)

If we try running the container and Python script, what happens?

BASH

$ docker container run alice/alpine-python python3 sum.py

OUTPUT

python3: can't open file '//sum.py': [Errno 2] No such file or directory

No such file or directory

Question: What does the error message mean? Why might the Python inside the container not be able to find or open our script?

This question is here for you to think about - we explore the answer to this question in the content below.

The problem here is that the container and its filesystem are separate from our host computer’s filesystem. When the container runs, it can’t see anything outside itself, including any of the files on our computer. In order to use Python (inside the container) and our script (outside the container, on our host computer), we need to create a link between the directory on our computer and the container.

This link is called a “mount” and is what happens automatically when a USB drive or other external hard drive gets connected to a computer – you can see the contents appear as if they were on your computer.

We can create a mount between our computer and the running container by using an additional option to docker container run. We’ll also use the variable ${PWD}, which will substitute in our current working directory. The option will look like this:

--mount type=bind,source=${PWD},target=/temp

What this means is: make my current working directory on the host computer (the source) visible within the container that is about to be started, and inside this container, name the directory /temp (the target).
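Because ${PWD} is expanded by your shell before Docker sees it, a working directory whose path contains a space would split the option into separate words. The sketch below uses a hypothetical path and only counts the arguments the shell would pass on (it does not run Docker); it shows why writing source="${PWD}" inside quotes keeps the value in one piece.

```bash
# Hypothetical host directory whose name contains a space.
demo_dir="/tmp/my data"

# Unquoted: word splitting breaks the mount value at the space.
set -- --mount type=bind,source=${demo_dir},target=/temp
echo "unquoted: $# arguments"   # --mount | type=bind,source=/tmp/my | data,target=/temp

# Quoted: the whole mount value stays a single argument.
set -- --mount "type=bind,source=${demo_dir},target=/temp"
echo "quoted:   $# arguments"   # --mount | type=bind,source=/tmp/my data,target=/temp
```

The unquoted version produces three arguments and the quoted version two, so only the quoted form hands Docker the full path.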

Types of mounts

You will notice that we set the mount type=bind. There are other types of mount that can be used in Docker (e.g. volume and tmpfs). We do not cover other types of mounts or the differences between these mount types in this course, as it is a more advanced topic. You can find more information on the different mount types in the Docker documentation.

Let’s try running the command now:

BASH

$ docker container run --mount type=bind,source=${PWD},target=/temp alice/alpine-python python3 sum.py

But we get the same error!

OUTPUT

python3: can't open file '//sum.py': [Errno 2] No such file or directory

This final piece is a bit tricky: we really have to remember to put ourselves inside the container. Where is the sum.py file? It’s in the directory that’s been mapped to /temp, so we need to include that in the path to the script. This command should give us what we need:

BASH

$ docker container run --mount type=bind,source=${PWD},target=/temp alice/alpine-python python3 /temp/sum.py

Note that if we create any files in the /temp directory while the container is running, these files will appear on our host filesystem in the original directory and will stay there even when the container stops.

Other Commonly Used Docker Run Flags

Docker run has many other useful flags to alter its function. A couple that are commonly used include -w and -u.

The --workdir/-w flag sets the working directory, i.e. the command is executed inside the specified directory. For example, the following code would run the pwd command in a container started from the latest ubuntu image in the /home/alice directory and print /home/alice. If the directory doesn’t exist in the image, Docker will create it.

BASH

$ docker container run -w /home/alice/ ubuntu pwd

The --user/-u flag lets you specify the user (name or ID) you would like to run the container as. This is helpful if you’d like to write files to a mounted folder and not write them as root but rather as your own user identity and group. A common example of the -u flag is --user $(id -u):$(id -g), which will fetch the current user’s ID and group and run the container as that user.
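The sketch below builds that UID:GID string and, only if the docker command is available, combines it with the bind mount from earlier so that a file created inside the container is owned by you on the host. The file name created-by-container.txt is a hypothetical example, and the alpine image is used simply because it provides touch.

```bash
# Build the "UID:GID" string for the current host user.
user_spec="$(id -u):$(id -g)"
echo "Containers will run as ${user_spec}"

# Only attempt the container run when Docker is installed.
if command -v docker >/dev/null 2>&1; then
  # Create a file in the bind-mounted /temp directory as the host user.
  if docker container run --rm --user "${user_spec}" \
      --mount type=bind,source="${PWD}",target=/temp \
      -w /temp alpine touch created-by-container.txt; then
    # Numeric owner and group should match the IDs printed above.
    ls -ln created-by-container.txt
  fi
fi
```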

Exercise: Explore the script

What happens if you use the docker container run command

above and put numbers after the script name?

This script comes from the Python Wiki and is set to add all numbers that are passed to it as arguments.

Exercise: Checking the options

Our Docker command has gotten much longer! Can you go through each piece of the Docker command above and explain what it does? How would you characterize the key components of a Docker command?

Here’s a breakdown of each piece of the command above:

- docker container run: use Docker to run a container
- --mount type=bind,source=${PWD},target=/temp: connect my current working directory (${PWD}) as a folder inside the container called /temp
- alice/alpine-python: name of the container image to use to run the container
- python3 /temp/sum.py: what commands to run in the container

More generally, every Docker command will have the form:

docker [action] [docker options] [docker container image] [command to run inside]

Exercise: Interactive jobs

Try using the directory mount option but run the container interactively. Can you find the folder that’s connected to your host computer? What’s inside?

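To run the container interactively, keep the --mount option from before, add the -it flags, and give sh as the command instead of python3 /temp/sum.py.

Once inside, you should be able to navigate to the /temp folder and see that its contents are the same as the files in your working directory on the host computer.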
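Here is one way to do this, sketched under the same assumptions as the exercise: the alice/alpine-python image, with your files in the current directory. Adding -it gives you an interactive terminal, and sh (which Alpine-based images provide) starts a shell in place of the Python script. The commands to type inside the container are shown as comments, because they run in the container's shell rather than on the host.

```shell
image=alice/alpine-python

# -it attaches an interactive terminal; sh replaces python3 /temp/sum.py
docker container run --mount type=bind,source="${PWD}",target=/temp \
  -it "${image}" sh

# Inside the container:
#   cd /temp
#   ls        # should list the same files as the host directory
#   exit      # leave the container when you are done
```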

Mounting a directory can be very useful when you want to run the software inside your container on many different input files. In other situations, you may want to save or archive an authoritative version of your data by adding it to the container image permanently. That’s what we will cover next.

Including your scripts and data within a container image

Our next project will be to add our own files to a container image – something you might want to do if you’re sharing a finished analysis or just want to have an archived copy of your entire analysis including the data. Let’s assume that we’ve finished with our sum.py script and want to add it to the container image itself.

In your shell, you should still be in the sum folder in the docker-intro folder.

Let’s add a new line to the Dockerfile we’ve been using so far to create a copy of sum.py. We can do so by using the COPY keyword.

COPY sum.py /home

This line will cause Docker to copy the file from your computer into the container’s filesystem, where it ends up as /home/sum.py. Let’s build the container image like before, but give it a different name.
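Here is a sketch of the build step. It assumes the Dockerfile now ends with the COPY line and that you are still in the sum folder. The tag alice/alpine-sum is an illustrative choice that keeps the earlier alice/ prefix. Listing the images afterwards confirms that the new image exists alongside the old one.

```shell
# Tag for the new image (illustrative; any distinct name works)
new_image=alice/alpine-sum

# Build from the Dockerfile in the current directory ("." is the build context)
docker image build -t "${new_image}" .

# Show only images in this repository to confirm the build succeeded
docker image ls "${new_image}"
```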

The Importance of Command Order in a Dockerfile

When you run docker image build, Docker executes the build in the order specified in the Dockerfile. This order matters when you rebuild, and you will typically want to put your RUN commands before your COPY commands.

Docker builds the layers of commands in order. This becomes important when you need to rebuild container images. If you change later layers in the Dockerfile and rebuild the container image, Docker doesn’t need to rebuild the earlier layers. Instead, it uses a stored (called “cached”) version of those layers.

For example, suppose you wanted to copy multiply.py into the container image instead of sum.py. If the COPY line came before the RUN line, Docker would need to rebuild the whole image. If the COPY line came second, Docker would use the cached RUN layer from the previous build and rebuild only the COPY layer.
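To make the ordering advice concrete, here is a sketch that puts the RUN line before the COPY line. It writes the Dockerfile to a separate file with the hypothetical name Dockerfile.order, so your own Dockerfile is not overwritten. The tag alpine-sum-order is also illustrative. If you rebuild after editing only sum.py, the apk layer should be reused. BuildKit, the default builder in recent Docker versions, reports reused steps as CACHED.

```shell
# Write an example Dockerfile with the slow-changing RUN step first
cat > Dockerfile.order <<'EOF'
FROM alpine
# Installing Python rarely changes, so this layer can be reused from cache
RUN apk add --update python3 py3-pip python3-dev
# Scripts change often, so copy them last; only this layer is rebuilt
COPY sum.py /home
EOF

# -f selects the alternative Dockerfile; "." is the build context holding sum.py
docker image build -f Dockerfile.order -t alpine-sum-order .
```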

Exercise: Did it work?

Can you remember how to run a container interactively? Try that with this one. Once inside, try running the Python script.
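Here is a possible solution, assuming the image was built with the illustrative tag alice/alpine-sum. Start a container interactively with a shell, then run the copied script from /home inside it. The in-container commands are shown as comments.

```shell
image=alice/alpine-sum

# Start an interactive shell in a container from the new image
docker container run -it "${image}" sh

# Inside the container:
#   ls /home                    # sum.py should be listed
#   python3 /home/sum.py 1 2 3
#   exit
```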

This COPY keyword can be used to place your own scripts or data into a container image that you want to publish or use as a record. Note that it’s not necessarily a good idea to put your scripts inside the container image if you’re constantly changing or editing them. In that case, it is better to reference the scripts from outside the container, as we did in the previous section. You also want to think carefully about size: if you run docker image ls, you’ll see the size of each container image in the column on the right of the output. The bigger your container image becomes, the harder it will be to download.

Security Warning

Login credentials, including passwords, tokens, secure access tokens or other secrets, must never be stored in a container image. If secrets are stored, they are at high risk of being found and exploited when the image is made public.

Copying alternatives

Another trick for getting your own files into a container image is to use the RUN keyword to download the files from the internet. For example, if your code is in a GitHub repository, you could include this statement in your Dockerfile to download the latest version when you build the container image:

RUN git clone https://github.com/alice/mycode

Be aware that Docker caches the result of this step like any other layer. A rebuild will therefore reuse the earlier clone unless you change the line or build with docker image build --no-cache.

Similarly, the wget command can be used to download any file publicly available on the internet:

RUN wget ftp://ftp.ncbi.nlm.nih.gov/blast/executables/blast+/2.10.0/ncbi-blast-2.10.0+-x64-linux.tar.gz

Note that the above RUN examples depend on commands (git and wget respectively) that must be available within your container. Linux distributions such as Alpine may require you to install such commands before using them within RUN statements.
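For example, on an Alpine base image you could install both tools with apk before using them. git is not part of the base alpine image, and the wget package provides GNU wget. The sketch below writes such a Dockerfile to a separate file with the hypothetical name Dockerfile.download, and the tag alpine-download is also illustrative. The repository URL is the placeholder from the text, so replace it with your own before building.

```shell
cat > Dockerfile.download <<'EOF'
FROM alpine
# Install the download tools before any RUN step that needs them
RUN apk add --update git wget
# Placeholder repository from the text; replace with your own
RUN git clone https://github.com/alice/mycode
EOF

docker image build -f Dockerfile.download -t alpine-download .
```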

More fancy Dockerfile options (optional, for presentation or as exercises)

We can expand on the example above to make our container image even more “automatic”. Here are some ideas:

Make the sum.py script run automatically

FROM alpine
RUN apk add --update python3 py3-pip python3-dev
COPY sum.py /home
# Run the sum.py script as the default command
CMD ["python3", "/home/sum.py"]

Build and test it.
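Here is a sketch of the build and test commands. The tag alpine-sum:v1 is an assumption, chosen to match the v2 tag used later in this section. The second run appends arguments after the image name, which is the case discussed next.

```shell
tag=alpine-sum:v1

# Build the image from the Dockerfile above
docker image build -t "${tag}" .

# No arguments: CMD runs python3 /home/sum.py with nothing to add up
docker container run "${tag}"

# With arguments: 10 11 12 replace the whole CMD, so Docker tries to execute "10"
docker container run "${tag}" 10 11 12
```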

You’ll notice that you can run the container without arguments just fine, resulting in sum = 0, but this is boring. Supplying arguments, however, doesn’t work: passing 10 11 12 after the image name results in

OUTPUT

docker: Error response from daemon: OCI runtime create failed: container_linux.go:349: starting container process caused "exec: \"10\": executable file not found in $PATH": unknown.

This is because the arguments 10 11 12 are interpreted as a command that replaces the default command given by CMD ["python3", "/home/sum.py"] in the image.

To have a command that always runs when a container is started from the container image, and that also receives the arguments given on the command line, use the ENTRYPOINT keyword in the Dockerfile.

FROM alpine
RUN apk add --update python3 py3-pip python3-dev
COPY sum.py /home
# Run the sum.py script as the default command and
# allow people to enter arguments for it
ENTRYPOINT ["python3", "/home/sum.py"]
# Give default arguments, in case none are supplied on
# the command-line
CMD ["10", "11"]

Build and test it:

BASH

$ docker image build -t alpine-sum:v2 .
# Most of the time you are interested in the sum of 10 and 11:
$ docker container run alpine-sum:v2
# Sometimes you have more challenging calculations to do:
$ docker container run alpine-sum:v2 12 13 14

Overriding the ENTRYPOINT

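Sometimes you don’t want to run the image’s ENTRYPOINT. For example, suppose you have a specialized container image that only computes sums, but you need an interactive shell to examine the container. Trying to start a shell by giving it as the command after the image name will yield

OUTPUT

Please supply integer arguments

This happens because the shell command is passed to sum.py as an argument instead of being run. You need to override the ENTRYPOINT statement in the container image instead, which you can do with the --entrypoint option of docker container run.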
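Here is a sketch of both commands, assuming the alpine-sum:v2 image built above. The first command passes /bin/sh to sum.py as an argument. The second uses --entrypoint to replace python3 /home/sum.py with a shell.

```shell
tag=alpine-sum:v2

# /bin/sh only replaces CMD, so it reaches sum.py as an argument
docker container run -it "${tag}" /bin/sh

# --entrypoint replaces the ENTRYPOINT itself, giving an interactive shell
docker container run -it --entrypoint /bin/sh "${tag}"
```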

Add the sum.py script to the PATH so you can run it directly:

FROM alpine
RUN apk add --update python3 py3-pip python3-dev
COPY sum.py /home
# set script permissions
RUN chmod +x /home/sum.py
# add /home folder to the PATH
ENV PATH=/home:$PATH

Build and test it.
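Here is a sketch of the build and test commands. It assumes the tag alpine-sum:v3 and that sum.py begins with a shebang line such as #!/usr/bin/env python3. Without a shebang, Docker cannot execute the file directly, even though it is executable and on the PATH. This Dockerfile defines no ENTRYPOINT, so the command given after the image name is run as-is.

```shell
tag=alpine-sum:v3

docker image build -t "${tag}" .

# sum.py is found via the PATH set by ENV, so no python3 prefix or full path is needed
docker container run "${tag}" sum.py 1 2 3 4
```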

Best practices for writing Dockerfiles

Take a look at Nüst et al.’s “Ten simple rules for writing Dockerfiles for reproducible data science” [1] for some great examples of best practices to use when writing Dockerfiles. The GitHub repository associated with the paper also has a set of example Dockerfiles demonstrating how the rules highlighted by the paper can be applied.

[1] Nüst D, Sochat V, Marwick B, Eglen SJ, Head T, et al. (2020) Ten simple rules for writing Dockerfiles for reproducible data science. PLOS Computational Biology 16(11): e1008316. https://doi.org/10.1371/journal.pcbi.1008316

- Docker allows containers to read and write files from the Docker host.

- You can include files from your Docker host into your Docker container images by using the COPY instruction in your Dockerfile.

Content from Examples of Using Container Images in Practice

Last updated on 2024-08-16

Overview

Questions

- How can I use Docker for my own work?

Objectives

- Use existing container images and Docker in a research project.

Now that we have learned the basics of working with Docker container images and containers, let’s apply what we learned to an example workflow.

You may choose one or more of the following examples to practice using containers.

GitHub Actions Example

In this GitHub Actions example, you can learn more about continuous integration in the cloud and how you can use container images with GitHub to automate repetitive tasks like testing code or deploying websites.

Using Containers on an HPC Cluster

It is possible to run containers on shared computing systems run by a university or national computing center. As a researcher, you can build container images and test containers on your own computer and then run your full-scale computing work on a shared computing system like a high performance cluster or high throughput grid.

The catch? Most university and national computing centers do not support running containers with Docker commands and instead use a similar tool such as Singularity or Shifter. However, both of these programs can run containers based on Docker container images. People therefore often create their container image as a Docker container image, which they can then run with either Docker or Singularity.

There isn’t yet a working example of how to use Docker container images on a shared computing system, partially because each system is slightly different, but the following resources show what it can look like:

- Introduction to Singularity: See the episode titled “Running MPI parallel jobs using Singularity containers”

- Container Workflows at Pawsey: See the episode titled “Run containers on HPC with Shifter (and Singularity)”

Seeking Examples

Do you have another example of using Docker in a workflow related to your field? Please open a lesson issue or submit a pull request to add it to this episode and the extras section of the lesson.

- There are many ways you might use Docker and existing container images in your research project.

Content from Containers in Research Workflows: Reproducibility and Granularity

Last updated on 2024-08-16

Overview

Questions

- How can I use container images to make my research more reproducible?

- How do I incorporate containers into my research workflow?

Objectives

- Understand how container images can help make research more reproducible.

- Understand what practical steps I can take to improve the reproducibility of my research using containers.

Although this workshop is titled “Reproducible computational environments using containers”, so far we have mostly covered the mechanics of using Docker with only passing reference to the reproducibility aspects. In this section, we discuss these aspects in more detail.

Work in progress…

Note that reproducibility aspects of software and containers are an active area of research, discussion and development, and so are subject to change. We will present some ideas and approaches here, but best practices will likely evolve in the near future.

Reproducibility